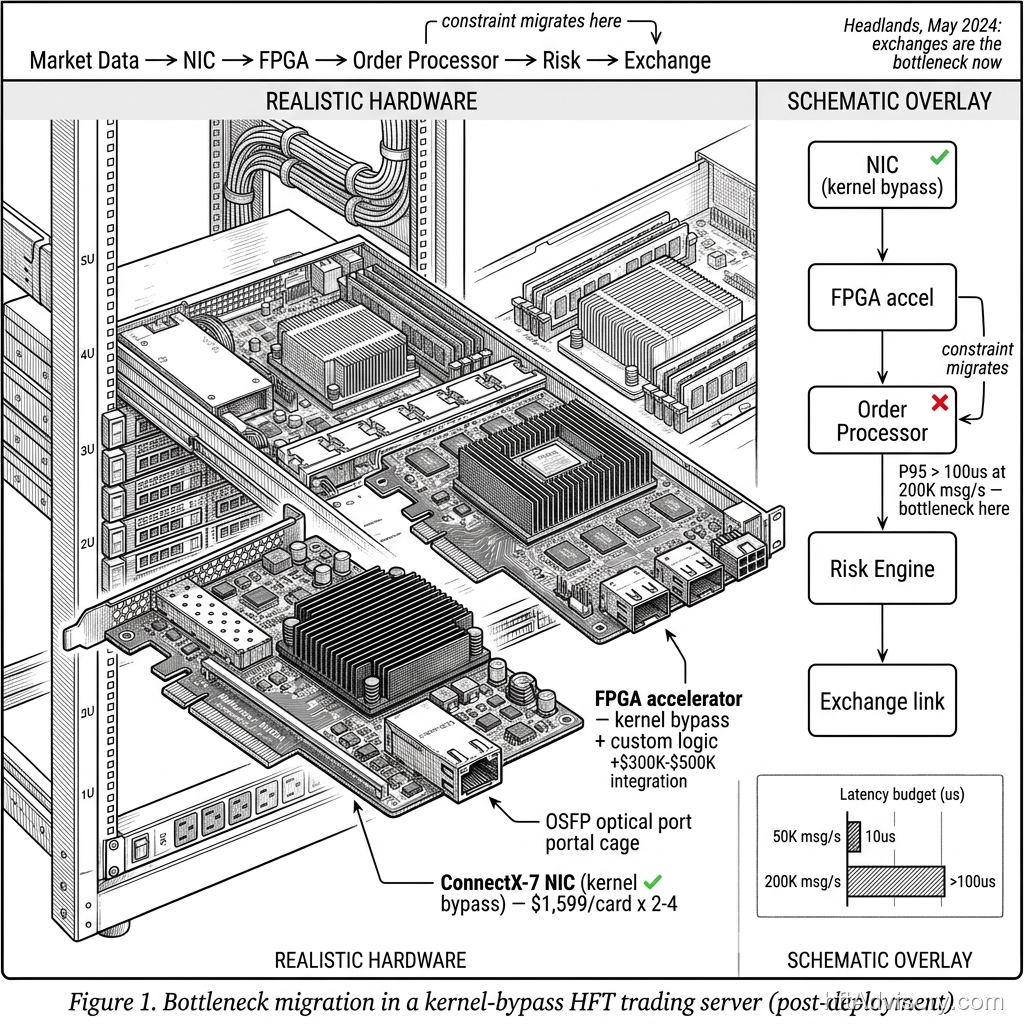

Based on engagements I have priced, a full kernel-bypass stack with FPGA integration has run $1M to $2M all-in: hardware, integration, and first-year engineering. ConnectX-7 NICs cost $1,599 per card, typically two to four per server. The engineering to build correctly around them is the larger number.

Most trading desks make this investment expecting a durable latency advantage. In production deployments my team has profiled, kernel bypass delivers a 20–30% reduction in tick-to-trade — the exact gain depends on baseline kernel overhead and message rate. That is a real improvement. The problem is what happens next.

The bottleneck migrates.


This is the pattern I have watched repeat: the network stack is addressed, tick-to-trade improves, the team declares victory — and then, eighteen months later, P95 is climbing again. Not because of anything they did wrong. Because the constraint moved downstream. The order processor is now the binding variable, not the NIC. The capex remains fully committed.

This article is the architectural diagnosis I give when a CTO is sitting across the table from me asking why their latency numbers are trending the wrong direction after a major infrastructure spend. It is not a build guide. It is a map.

Table of Contents

- What Kernel Bypass Actually Delivers

- Where the Bottleneck Migrates: The 200K msg/s Evidence

- Why Order Book Imbalance Monitoring Reveals Queue Pressure First

- The Architectural Diagnosis: What NIC-Only Metrics Miss

- The Bottleneck Migration Checklist

- Conclusion: The Test That Tells You Where You Are

What Kernel Bypass Actually Delivers

The kernel-bypass thesis is straightforward and well-supported. In a standard Linux networking path, every packet transits the OS kernel, adding 20–50 microseconds of processing overhead per transit. Technologies like DPDK and RDMA bypass that path entirely, reducing the kernel-contributed latency to 1–5 microseconds. That is roughly a 10x compression on the component the kernel controls.

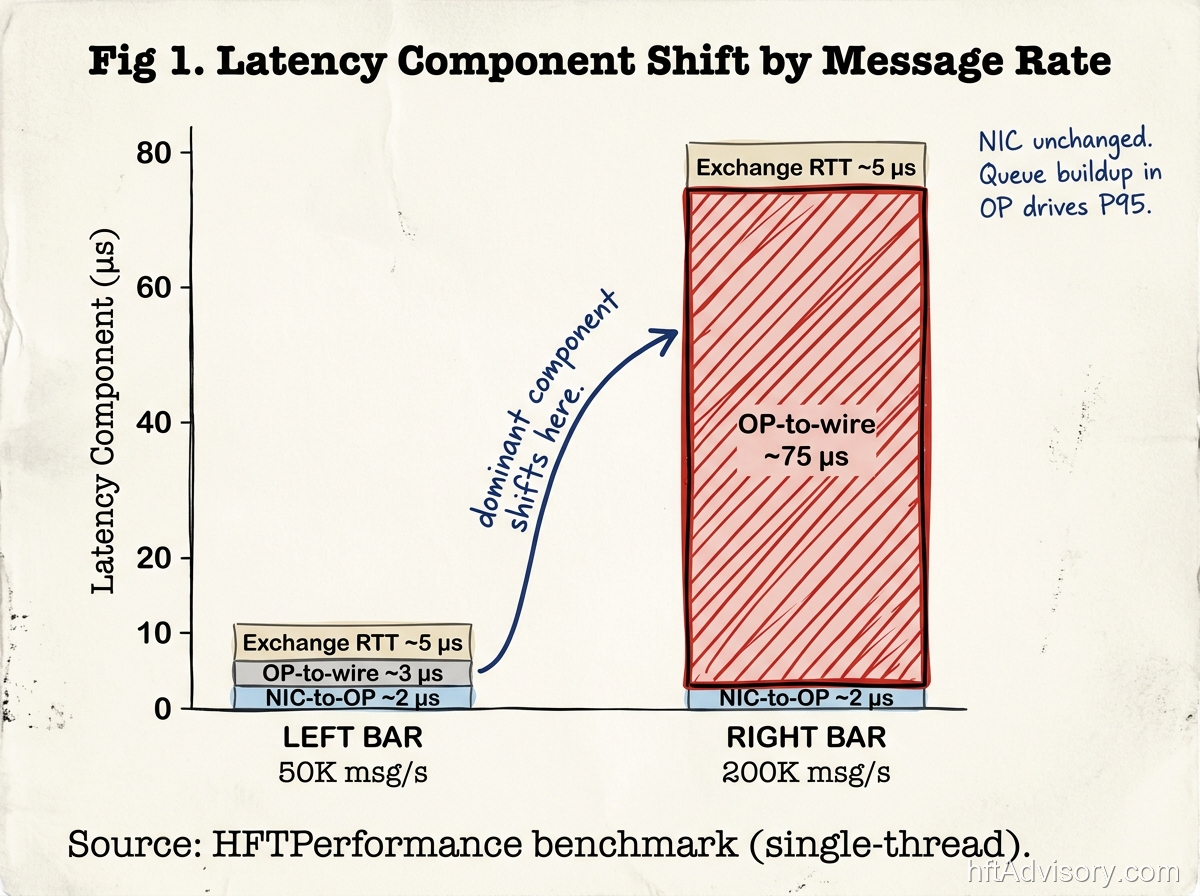

At 50,000 messages per second — a rate typical of mid-frequency strategies or venues with moderate quote activity — network is the binding constraint. Benchmarks from HFTPerformance show median latency around 10 microseconds at this throughput level. The NIC is the bottleneck, kernel bypass addresses it directly, and the investment produces visible improvement in tick-to-trade.

ConnectX-7 NICs at $1,599 per card, two to four per server, represent the hardware layer of that investment. The card itself is not what is expensive — it is the engineering discipline to instrument, profile, and sustain a kernel-bypass deployment in production that drives the total cost.

One number that appears frequently in conversations about kernel bypass deserves context: the 13.9 nanosecond STAC-T0 benchmark achieved by Exegy with AMD Alveo in June 2024. That figure represents actionable latency on the critical path — NIC to order sent — in a controlled benchmark configuration with no host-side strategy logic. It is a floor measurement for one segment of the stack. It does not include the order processor, and it is not the configuration you are running in production. Vendor benchmark numbers represent the theoretical floor for a specific segment; your production number is something different.

The 20–30% reduction in tick-to-trade that my team has profiled in production is real. So is what comes after it.

Where the Bottleneck Migrates: The 200K msg/s Evidence

The HFTPerformance benchmark data is instructive here because it shows the crossover point precisely. A single-thread order processor saturates at approximately 120,000 messages per second. Extend to dual-thread and you reach roughly 220,000 messages per second. Push a single-thread system to 200,000 messages per second and P95 latency exceeds 100 microseconds — driven by queue buildup in the order processor, not by the network stack.

At 50,000 messages per second, the network is the variable. At 200,000 messages per second, the queue behavior in the order processor is the variable. The NIC has been optimized. The capex is already spent. The bottleneck is now somewhere the NIC cannot reach.
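The crossover can be illustrated with a toy queueing model. This is a minimal sketch, not a reproduction of the HFTPerformance benchmark: it assumes Poisson arrivals, a single FIFO worker, and a fixed service time of 1/120,000 s per message. The function name `simulate_p95_us` and all of its parameters are hypothetical.

```python
import random

def simulate_p95_us(arrival_rate, service_rate, n_messages=50_000, seed=7):
    """P95 time-in-system (queue wait + service) in microseconds
    for a single-server FIFO queue with a fixed service time."""
    rng = random.Random(seed)
    service_time = 1.0 / service_rate   # assumed constant per message
    arrival = 0.0                        # arrival time of the current message
    server_free_at = 0.0                 # when the worker finishes its backlog
    time_in_system = []
    for _ in range(n_messages):
        arrival += rng.expovariate(arrival_rate)   # Poisson arrivals (assumption)
        start = max(arrival, server_free_at)       # wait if the worker is busy
        server_free_at = start + service_time
        time_in_system.append(server_free_at - arrival)
    time_in_system.sort()
    return time_in_system[int(0.95 * (len(time_in_system) - 1))] * 1e6

if __name__ == "__main__":
    for rate in (50_000, 100_000, 200_000):
        print(f"{rate:>7} msg/s -> P95 {simulate_p95_us(rate, 120_000):,.1f} us")
```

Below saturation the queue drains between arrivals and P95 stays near the service time. Above it the backlog grows for as long as the overload lasts, so the P95 this model reports at 200,000 messages per second depends on how long the run is. That is the point: past saturation, latency is a function of burst duration, not of the NIC.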

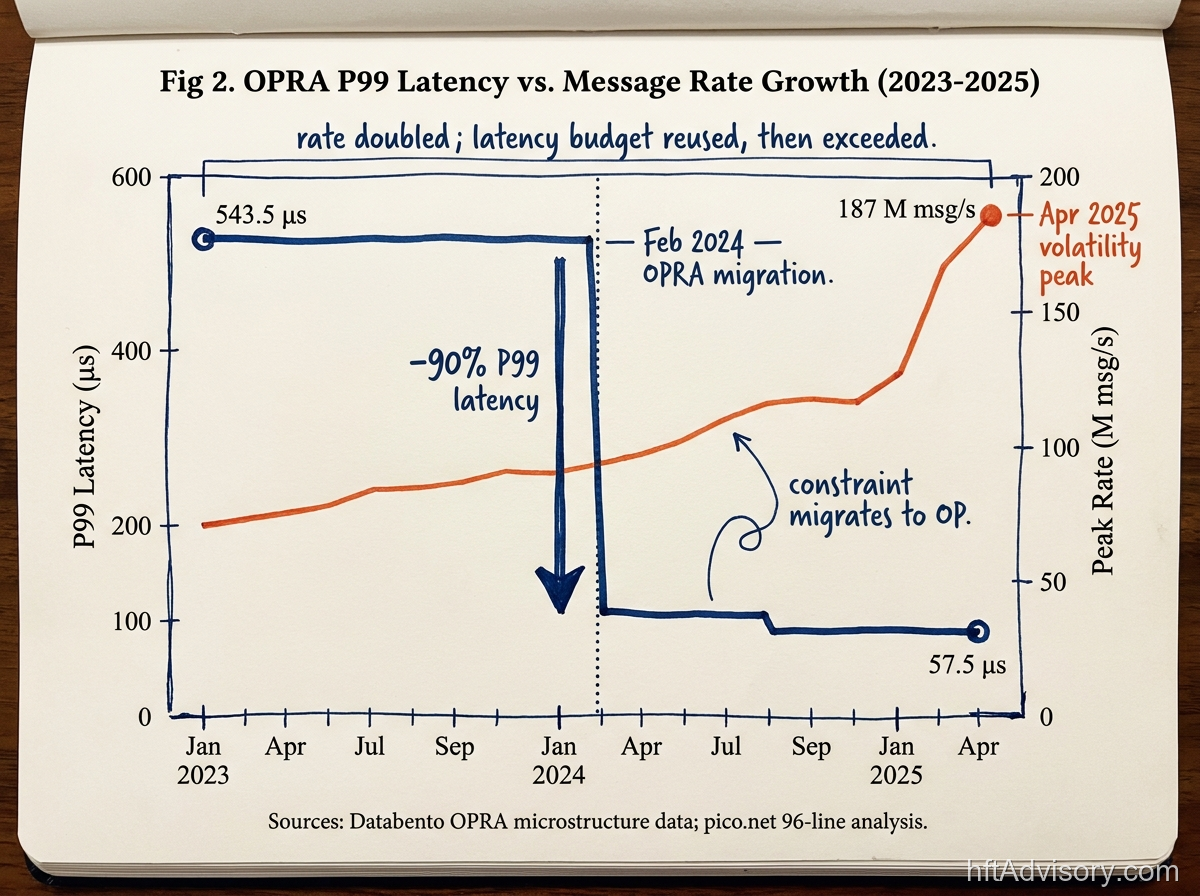

This is not a hypothetical concern. The message volume that trading infrastructure must absorb is not static. Consider what happened to OPRA in February 2024: the 96-line migration reduced P99 latency from 543.5 microseconds to 57.5 microseconds, a dramatic compression of the exchange-side bottleneck. But the same migration nearly doubled aggregate feed bandwidth, from 40 Gbps to over 70 Gbps in 1-millisecond microbursts. By April 2025, OPRA was delivering peak rates exceeding 187 million messages per second during volatility events.
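To put those OPRA figures in proportion, here is the arithmetic as a short sketch. The inputs are the numbers quoted above, and everything derived from them is plain division. The 70 Gbps figure is treated as a lower bound because the text says "over 70 Gbps".

```python
# Figures quoted in the text; derived values are simple arithmetic.
P99_BEFORE_US = 543.5
P99_AFTER_US = 57.5
BANDWIDTH_BEFORE_GBPS = 40
BANDWIDTH_AFTER_GBPS = 70      # "over 70 Gbps", so a lower bound
BURST_WINDOW_S = 1e-3          # 1-millisecond microburst window

p99_compression = P99_BEFORE_US / P99_AFTER_US
bandwidth_growth = BANDWIDTH_AFTER_GBPS / BANDWIDTH_BEFORE_GBPS
bytes_per_burst = BANDWIDTH_AFTER_GBPS * 1e9 / 8 * BURST_WINDOW_S

print(f"P99 compression:  {p99_compression:.1f}x")
print(f"Bandwidth growth: {bandwidth_growth:.2f}x")
print(f"Data per 1 ms burst at 70 Gbps: {bytes_per_burst / 1e6:.2f} MB")
```

The exchange-side latency fell by a factor of about nine while the burst bandwidth the firm must absorb grew by a factor of about 1.75. Those two changes land on different layers of the firm's stack.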

This is the paradox of exchange infrastructure improvement: when the exchange-side bottleneck compresses, the incoming flood increases. Better exchange latency means more messages arrive faster. The desk that invested in kernel bypass to beat the exchange bottleneck now receives a higher-volume feed into an order processor that was sized for the old regime.

Headlands Technologies addressed this publicly in May 2024: “Particularly since exchanges are the bottleneck now, taking 10s of microseconds, we may struggle to see how shaving off nanoseconds helps.” The observation is precise. When the exchange is the dominant latency contributor, aggressive firm-side optimization below that floor produces diminishing returns. But when the exchange bottleneck itself compresses further, as OPRA’s February 2024 migration demonstrated it can, the message volume that follows drives the order processor harder than the NIC.

In my experience, the firm-side edge from a kernel-bypass investment typically erodes within 12 to 24 months as competitors close the hardware gap and the binding constraint migrates. The timeline is not a criticism of the original investment — it is the architectural lifecycle of any point solution that addresses a single layer without a framework for tracking where the constraint moves next.

Why Order Book Imbalance Monitoring Reveals Queue Pressure First

Most desks measure tick-to-trade at the NIC and track mid-price as the primary signal. Neither measurement tells you where queue pressure is accumulating before it shows up in P95.

The research basis for Order Flow Imbalance as a leading indicator is well-established. Cont, Kukanov, and Stoikov (2014), in the Journal of Financial Econometrics (vol. 12, no. 1, pp. 47–88), demonstrated a linear relationship between order flow imbalance — the imbalance between supply and demand at the best bid and ask prices — and price changes, with a slope inversely proportional to market depth, across 50 U.S. stocks in NYSE TAQ data. Price changes are mainly driven by order flow imbalance. The signal precedes the price move.
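For readers who want the mechanics, the sketch below computes order flow imbalance from successive best-quote snapshots, following the per-update contribution defined in Cont, Kukanov, and Stoikov. The `BestQuote` type and function names are hypothetical, and the quotes in the usage example are made-up values for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BestQuote:
    bid_px: float
    bid_sz: float
    ask_px: float
    ask_sz: float

def ofi_increment(prev: BestQuote, cur: BestQuote) -> float:
    """Contribution of one best-quote update to order flow imbalance."""
    e = 0.0
    if cur.bid_px >= prev.bid_px:   # bid held or improved: demand added
        e += cur.bid_sz
    if cur.bid_px <= prev.bid_px:   # bid held or fell: prior demand removed
        e -= prev.bid_sz
    if cur.ask_px <= prev.ask_px:   # ask held or improved: supply added
        e -= cur.ask_sz
    if cur.ask_px >= prev.ask_px:   # ask held or rose: prior supply removed
        e += prev.ask_sz
    return e

def ofi(quotes) -> float:
    """Sum of per-update contributions over a window of snapshots."""
    return sum(ofi_increment(a, b) for a, b in zip(quotes, quotes[1:]))

if __name__ == "__main__":
    window = [
        BestQuote(10.00, 500, 10.01, 400),
        BestQuote(10.00, 700, 10.01, 400),  # 200 shares join the bid
        BestQuote(10.00, 700, 10.02, 300),  # the 10.01 ask level is cleared
    ]
    print(ofi(window))
```

When the price at a side is unchanged, the contribution reduces to the change in size at that price. When the price moves, the whole old or new level counts. A positive sum indicates net buying pressure over the window.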

Separately, Byrd et al. (2020) — an agent-based simulation study published on arXiv (arXiv:2006.08682) — showed that latency rank, rather than absolute magnitude, is the key factor in allocating returns among agents pursuing similar strategies. You do not need to be the fastest. You need to be faster than the next competitor at the moment the signal arrives. Order Book Imbalance monitoring tells you when that moment is approaching.

Here is the structural gap that most desks have not closed: if your OBI calculations run against SIP data, you are working with an incomplete picture. Databento has documented that odd-lot quotes, which go unreported to the CTA/UTP SIPs, constitute nearly 50% of U.S. equities market activity. The SIP, by design, does not carry odd-lot quotes. OBI calculated at SIP resolution is therefore structurally incomplete: it models approximately half of the actual order flow at the top of the book.

Queue pressure accumulates in the order book before the mid-price moves. If your monitoring is at SIP resolution, you see the consequence but not the cause. The monitoring gap and the signal gap are the same gap. The desk that catches OBI deterioration on a direct feed ten milliseconds before it shows up in SIP data has a materially different decision window — and that lead disappears if the order processor is already queued behind 200,000 messages per second.
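The resolution gap is easy to see on a single price level. The sketch below is a simplification under stated assumptions: the SIP-style view is modeled by dropping every order below an assumed 100-share round lot, and the order sizes are invented for illustration. The function names are hypothetical.

```python
ROUND_LOT = 100  # assumed round-lot size for this illustration

def imbalance(bid_size, ask_size):
    """Top-of-book imbalance in [-1, 1]; positive means bid-heavy."""
    total = bid_size + ask_size
    return 0.0 if total == 0 else (bid_size - ask_size) / total

def direct_feed_view(bid_orders, ask_orders):
    """Every resting order at the best price counts."""
    return imbalance(sum(bid_orders), sum(ask_orders))

def sip_style_view(bid_orders, ask_orders):
    """Simplified SIP view: odd-lot orders are invisible."""
    bids = sum(s for s in bid_orders if s >= ROUND_LOT)
    asks = sum(s for s in ask_orders if s >= ROUND_LOT)
    return imbalance(bids, asks)

if __name__ == "__main__":
    best_bid_orders = [300, 40, 60, 25]   # three odd lots stacking on the bid
    best_ask_orders = [200, 100]
    print("direct:", round(direct_feed_view(best_bid_orders, best_ask_orders), 3))
    print("sip:   ", round(sip_style_view(best_bid_orders, best_ask_orders), 3))
```

In this example the SIP-style view reads a balanced book while the direct view reads bid-side pressure building. The two monitors disagree about direction, not just magnitude.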

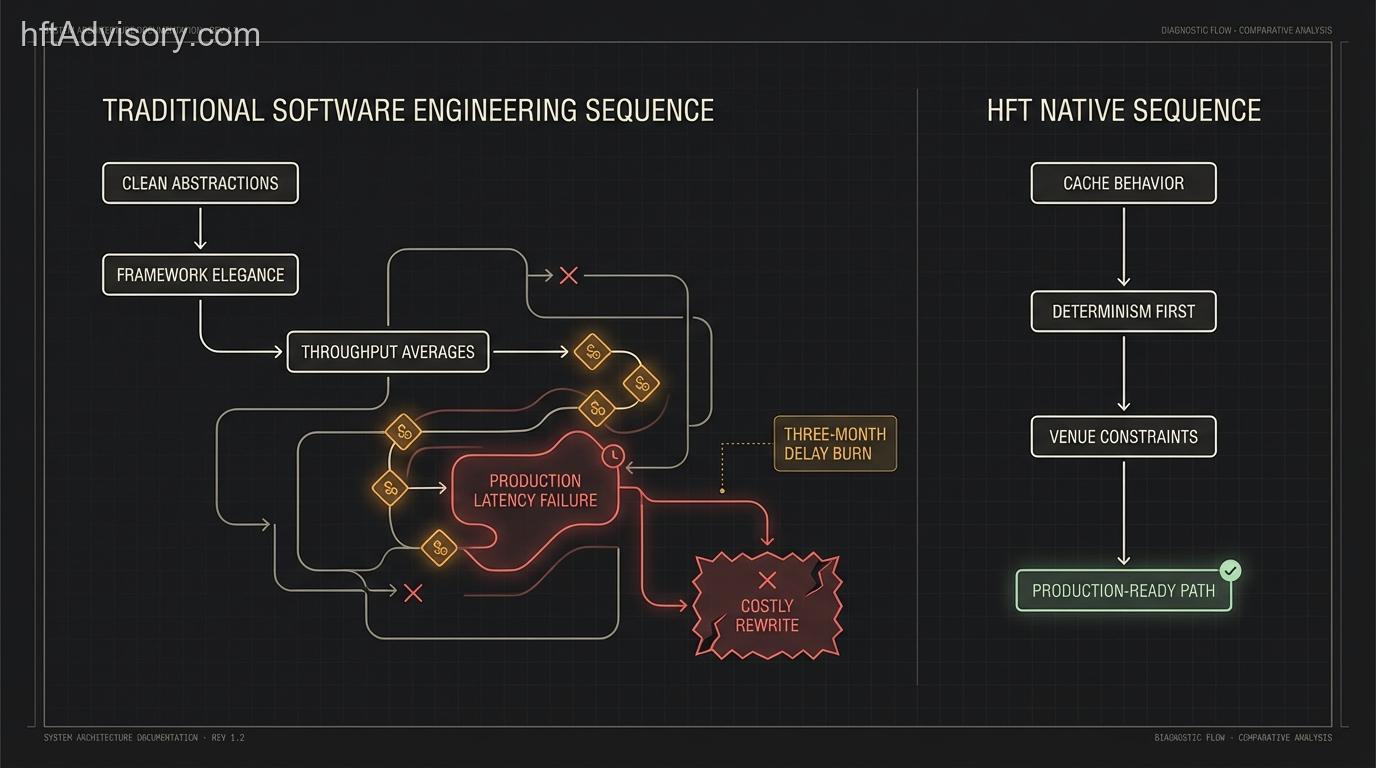

This is where the two failure modes compound. A kernel-bypassed NIC delivers low-latency ingest. An order processor running at saturation queues that ingest anyway. And an OBI monitor running on SIP data misses the leading indicator that would tell the risk engine the market structure is deteriorating. Three architectural decisions, each reasonable in isolation, producing a combined outcome that no single component explains.

The Architectural Diagnosis: What NIC-Only Metrics Miss

There is a measurement problem embedded in how most desks report tick-to-trade. The number is real, but the measurement boundary matters. Tick-to-trade measured at the NIC, from packet receipt to order sent at the wire, captures the network segment. Tick-to-trade measured end-to-end, from market event to exchange acknowledgment, captures the full architectural stack.

Those two numbers can diverge by an order of magnitude as message rate increases.

At 50,000 messages per second, the order processor clears the queue before the next tick arrives. The NIC number and the end-to-end number are close. At 200,000 messages per second, queue buildup in the order processor inserts latency that the NIC metric does not see. The NIC reports 2 microseconds. The end-to-end number is 80 microseconds and rising. The capex review concludes that the NIC investment is performing. The strategy P&L tells a different story.

The Headlands observation, that exchanges are the bottleneck now, is directionally correct at low message rates and for strategies where exchange round-trip dominates. The OPRA data complicates it: when exchange-side P99 drops from 543.5 microseconds to 57.5 microseconds, the exchange contribution to the overall latency budget shrinks, and the firm-side contribution grows as a proportion. The constraint migrates not because the firm’s stack got worse, but because the exchange got better and the firm’s stack did not proportionally scale.

As noted earlier, the firm-side edge from a kernel-bypass investment typically erodes within 12 to 24 months. The desks that sustained their edge beyond that window shared one characteristic: they had instrumentation that tracked the constraint migration in real time, not in retrospect.

The Bottleneck Migration Checklist

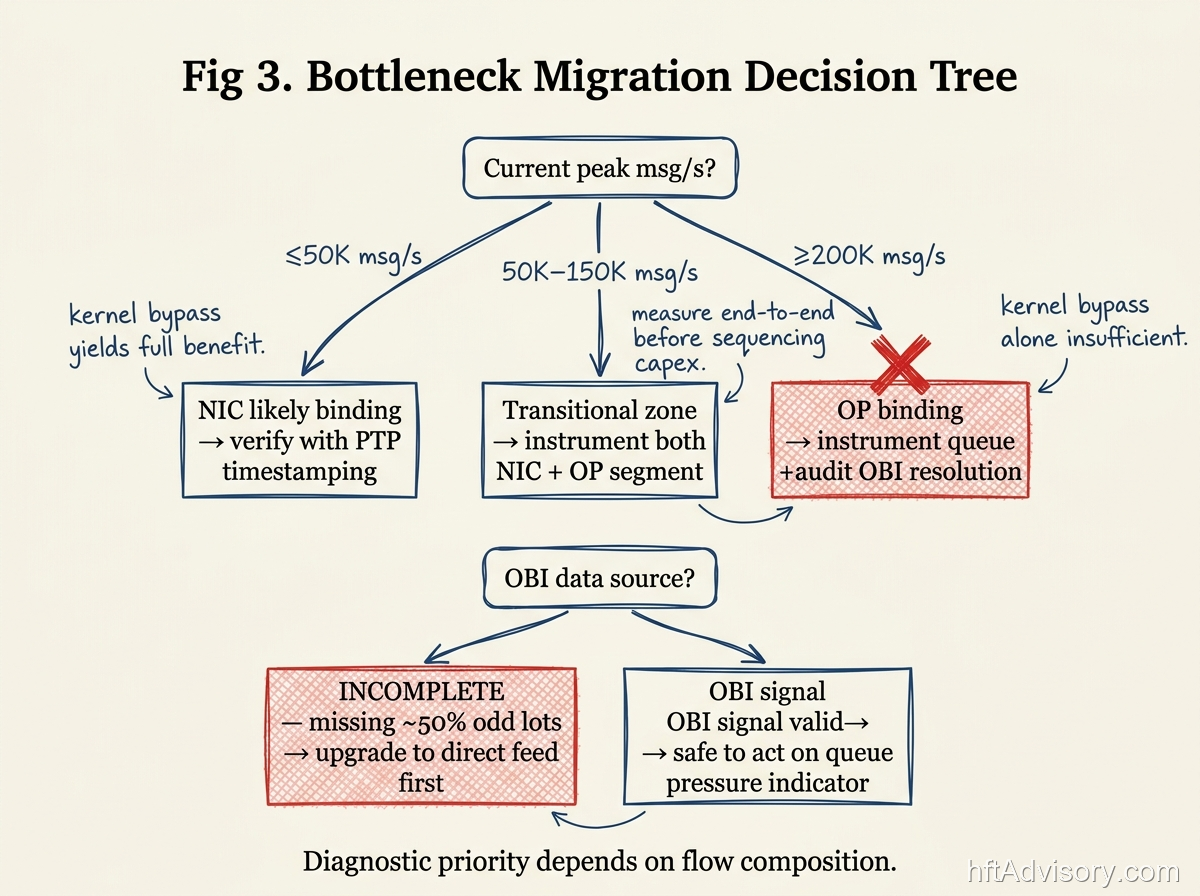

This is the framework I use to orient an architecture review when a desk is past the initial kernel-bypass investment and asking why latency is trending the wrong direction. It is a diagnostic sequence, not a build specification. The sequencing decisions — order book restructure versus risk-check placement versus exchange co-location — depend on flow composition and where your P95 spikes originate.

Step 1: Decompose message flow by type and rate.

Not all messages have the same latency budget or the same processing cost. Quote updates, trade reports, order acknowledgments, and cancel-replace messages move through the order processor differently. Before any hardware conversation, map your bind points: at 50,000 messages per second, which message types are queuing? At 100,000? At 200,000? The answer determines whether the constraint is architectural (order processor design) or operational (message rate regime you have entered).
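As a starting point for that mapping, the sketch below buckets a captured event stream into fixed windows and reports the peak per-type rate. The input shape of `(timestamp_seconds, msg_type)` tuples and the function name `peak_rate_by_type` are assumptions. A real capture would come from your own feed handler or packet log.

```python
from collections import Counter, defaultdict

def peak_rate_by_type(events, window_s=1.0):
    """events: iterable of (timestamp_seconds, msg_type).
    Returns {msg_type: peak messages per second over any single window}."""
    per_window = defaultdict(Counter)
    for ts, msg_type in events:
        per_window[int(ts // window_s)][msg_type] += 1

    peak = Counter()
    for counts in per_window.values():
        for msg_type, n in counts.items():
            peak[msg_type] = max(peak[msg_type], n / window_s)
    return dict(peak)

if __name__ == "__main__":
    sample = [
        (0.10, "quote"), (0.20, "quote"), (0.30, "cancel_replace"),
        (1.50, "quote"),
    ]
    print(peak_rate_by_type(sample))
```

Shrinking `window_s` to one millisecond exposes microbursts that a one-second average hides. For this step the peak per type is usually more informative than the mean.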

Step 2: Measure tick-to-trade end-to-end — not just NIC-to-wire.

Break the measurement into three segments: NIC-to-order-processor, order-processor-to-wire, and round-trip at the exchange. Each segment has a different owner and a different remediation path. If you are only measuring NIC-to-wire, you are measuring the segment you already optimized and leaving the growing segment unobserved.
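A minimal sketch of that three-segment breakdown follows, assuming four timestamps per order taken from synchronized clocks. The field names are hypothetical. It also assumes the processor timestamp is taken at dequeue, so queue wait lands in the first segment. If you stamp at enqueue instead, it lands in the second.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class OrderTimestamps:
    nic_rx_ns: int         # market data packet received at the NIC
    proc_dequeue_ns: int   # order processor picks the message off its queue
    wire_tx_ns: int        # order leaves on the wire
    exchange_ack_ns: int   # exchange acknowledgment received

def segments_ns(t: OrderTimestamps) -> dict:
    """Split end-to-end latency into the three owned segments."""
    return {
        "nic_to_order_processor": t.proc_dequeue_ns - t.nic_rx_ns,
        "order_processor_to_wire": t.wire_tx_ns - t.proc_dequeue_ns,
        "exchange_round_trip": t.exchange_ack_ns - t.wire_tx_ns,
    }

def dominant_segment(t: OrderTimestamps) -> str:
    """Name of the segment contributing the most latency."""
    seg = segments_ns(t)
    return max(seg, key=seg.get)

if __name__ == "__main__":
    t = OrderTimestamps(0, 60_000, 78_000, 128_000)  # illustrative values
    print(segments_ns(t), dominant_segment(t))
```

Tracking which segment dominates at each message-rate regime, rather than one blended number, is what shows the constraint moving.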

Step 3: Audit your OBI monitoring resolution.

Are your order flow imbalance calculations running against SIP data or a direct proprietary feed? If SIP, you are missing the odd-lot quotes, which account for roughly half of the activity at the top of the book. That is not a precision issue. It is a structural incompleteness issue. The leading indicator for queue pressure deterioration is only available at direct-feed resolution. This audit step costs almost nothing and changes the interpretation of every OBI signal you are currently acting on.

Step 4: Identify where queue buildup is occurring in your order processor.

Single-thread saturation begins around 120,000 messages per second. If your message rate is approaching or exceeding that threshold, queue buildup in the order processor is likely contributing more to your P95 than your NIC metrics suggest. Instrument the order processor, not just the NIC. The profiling target shifts from nanoseconds to microseconds, and the root cause shifts from network engineering to order processor architecture.
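One way to instrument the order processor is to timestamp every message at enqueue and dequeue and record the dwell time. The sketch below shows the idea in Python for readability only. The class name `InstrumentedQueue` is hypothetical, and a production order processor would apply the same pattern in its own language with a lock-free queue and a hardware clock.

```python
import time
from collections import deque

class InstrumentedQueue:
    """FIFO queue that records per-message dwell time and peak depth."""

    def __init__(self, clock=time.perf_counter_ns):
        self._items = deque()
        self._clock = clock        # injectable so tests can use a fake clock
        self.dwell_ns = []         # time each message spent waiting
        self.max_depth = 0         # deepest backlog observed

    def put(self, msg):
        self._items.append((self._clock(), msg))
        self.max_depth = max(self.max_depth, len(self._items))

    def get(self):
        enqueued_at, msg = self._items.popleft()
        self.dwell_ns.append(self._clock() - enqueued_at)
        return msg

if __name__ == "__main__":
    q = InstrumentedQueue()
    for i in range(3):
        q.put(f"msg-{i}")
    while q._items:
        q.get()
    print("max depth:", q.max_depth, "dwell samples:", len(q.dwell_ns))
```

Dwell time and peak depth are the two numbers NIC-level metrics never report. If dwell time grows with message rate while NIC latency stays flat, the queue is where the P95 is coming from.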

Step 5: Use flow composition to sequence the next dollar.

Once you have the measurement data from Steps 1 through 4, the sequencing question becomes tractable. If P95 spikes are originating in the order processor queue, the first question is whether a software path — dual-thread order processor architecture, batching logic, queue redesign — closes the gap before any hardware refresh is warranted. In several deployments I have reviewed, that software path recovered a substantial share of the addressable P95 improvement at a fraction of a hardware refresh cost. If the software ceiling is already hit and the constraint is architectural, then order book restructuring becomes the priority. If P95 spikes are originating at the exchange round-trip, co-location or feed-proximity decisions take precedence. If OBI monitoring is incomplete, that is the first fix — it costs nothing compared to a hardware refresh and immediately improves the quality of every risk decision downstream.
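The sequencing logic in this step can be written down as a small decision function. This is a sketch of the reasoning above, not a substitute for the review itself. The function name, parameter names, and returned labels are hypothetical, and the segment names assume you have already attributed your P95 spikes to a layer.

```python
def next_priority(obi_on_direct_feed: bool,
                  dominant_segment: str,
                  software_ceiling_hit: bool) -> str:
    """Map measurement results from Steps 1-4 to the next investment."""
    # Incomplete OBI monitoring is the cheapest fix, so it goes first.
    if not obi_on_direct_feed:
        return "fix OBI monitoring resolution"

    if dominant_segment == "order_processor":
        # Try the software path before any hardware refresh.
        if software_ceiling_hit:
            return "order book restructuring"
        return "software path: threading, batching, queue redesign"

    if dominant_segment == "exchange_round_trip":
        return "co-location or feed proximity"

    return "no dominant segment identified: revisit Steps 1 to 4"

if __name__ == "__main__":
    print(next_priority(True, "order_processor", False))
```

The ordering of the branches is the design choice: the cheapest fix with the widest downstream effect comes first, and hardware comes last.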

The shops that sequenced this well — the ones that sustained their edge past the 12-to-24-month erosion window — started with message-flow decomposition before touching hardware. Most go the other direction: hardware first, decomposition never. The result is a perfectly optimized NIC delivering messages to a saturated order processor.

One note on venue scope: the bottleneck-migration pattern described here is documented in equity HFT infrastructure, but the diagnostic framework applies wherever throughput drives constraint migration — multi-venue execution, cross-exchange market making, any architecture where a single-layer optimization shifts the binding variable to the next layer. The specific thresholds (50K vs. 200K msg/s, single-thread saturation at 120K) are equity-market benchmarks. In multi-venue environments, the analogous question is: which venue, connection, or processing stage is your binding constraint at peak correlated volatility? The five-step methodology answers that question regardless of the asset class.

Conclusion: The Test That Tells You Where You Are

The checklist above is the map. The test is simple.

Pull your P95 latency at peak message rate. Pull your NIC-level tick-to-trade for the same period. If the NIC number is 2 microseconds and the P95 is 80 microseconds, the 78-microsecond gap lives in your order processor, your risk check, or your exchange round-trip — not in the network stack you spent $1M to $2M optimizing.
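The test itself is a few lines. The sketch below assumes you have end-to-end latency samples in microseconds for the peak window and one NIC-level tick-to-trade figure for the same window. The function names are hypothetical, and the percentile uses the nearest-rank method.

```python
import math

def p95(samples):
    """Nearest-rank 95th percentile."""
    ordered = sorted(samples)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]

def unexplained_gap_us(end_to_end_us, nic_tick_to_trade_us):
    """Latency at P95 that NIC-level metrics do not account for."""
    return p95(end_to_end_us) - nic_tick_to_trade_us

if __name__ == "__main__":
    # Illustrative samples: mostly fast, with a slow tail at peak rate.
    end_to_end = [10.0] * 94 + [80.0] * 6
    print(unexplained_gap_us(end_to_end, nic_tick_to_trade_us=2.0))
```

A gap that widens as message rate rises, while the NIC figure stays flat, is the signature of a bottleneck that has already migrated.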

If your P95 at 200,000 messages per second cannot be explained by your NIC-level metrics, the bottleneck has already migrated. The hardware investment was necessary. It was not sufficient. And the constraint is now sitting in a layer that NIC-only instrumentation cannot see.

The architecture review starts with that number — not with the hardware refresh conversation.

This article was originally shared as a LinkedIn post.


HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.
