Table of Contents

- Why Queue Position Is an Architecture Decision, Not a Data Decision

- Why L2 Data Is Structurally Blind to Queue Position

- NASDAQ TotalView-ITCH 5.0: The Per-Order Event Tape

- The Three Structures That Compose a Production-Grade Book

- The Cancel Path Is the Hot Path and Most Books Get This Backwards

- The Fourth Structure: A Hot-Cache Layer for Recent Order IDs

- Practical Framework: Diagnostic Checklist for Architecture Reviews

- Conclusion

Why Queue Position Is an Architecture Decision, Not a Data Decision

Passive market making on FIFO venues lives and dies on queue position. That sentence is not new. What is underappreciated is where the failure lives: not in the strategy, not in the connectivity layer, but in the book design itself.

In code reviews of trading-desk codebases, the pattern I encounter most often is a book that was built to run. Feeds are ingested, price levels are maintained, top-of-book is accurate. What the book cannot tell you is where your resting order sits within its price level’s queue at any given moment, or whether the cancel that just arrived targets an order so recently placed that it almost certainly still sits close to the front. That distinction matters. On a price-tied FIFO venue, queue position determines whether your passive fill happens before or after the market moves away from you. A book that cannot reason about per-order position at cancel time is not a neutral tool. It is a systematic source of adverse selection.

The architectural mistake I see consistently across desks is not in the data subscription decision, though that matters. It is in the structural choice made at book construction time: optimize the ADD path, then bolt on cancel handling as an afterthought. That sequence made sense in a world where order books were sparse and cancellations were the exception. Modern electronic markets are the opposite. Cancel traffic dominates. Maureen O’Hara’s 2015 Journal of Financial Economics analysis found that more than 98% of orders are canceled. The dominant order-book event is not execution. It is cancellation. Designing for ADD-path throughput and tolerating cancel latency is architecting for the wrong workload.

Thank you for reading this post, don't forget to subscribe!

This article walks through four things: why L2 data cannot give you queue position by construction; how NASDAQ TotalView-ITCH 5.0’s per-order event tape enables full queue reconstruction; how a production-grade book composes three interlocking structures to handle this correctly; and one fourth structure, an L1D-resident hot cache for recent order IDs, that addresses the non-uniform access pattern cancel-dominated workloads create.

Why L2 Data Is Structurally Blind to Queue Position

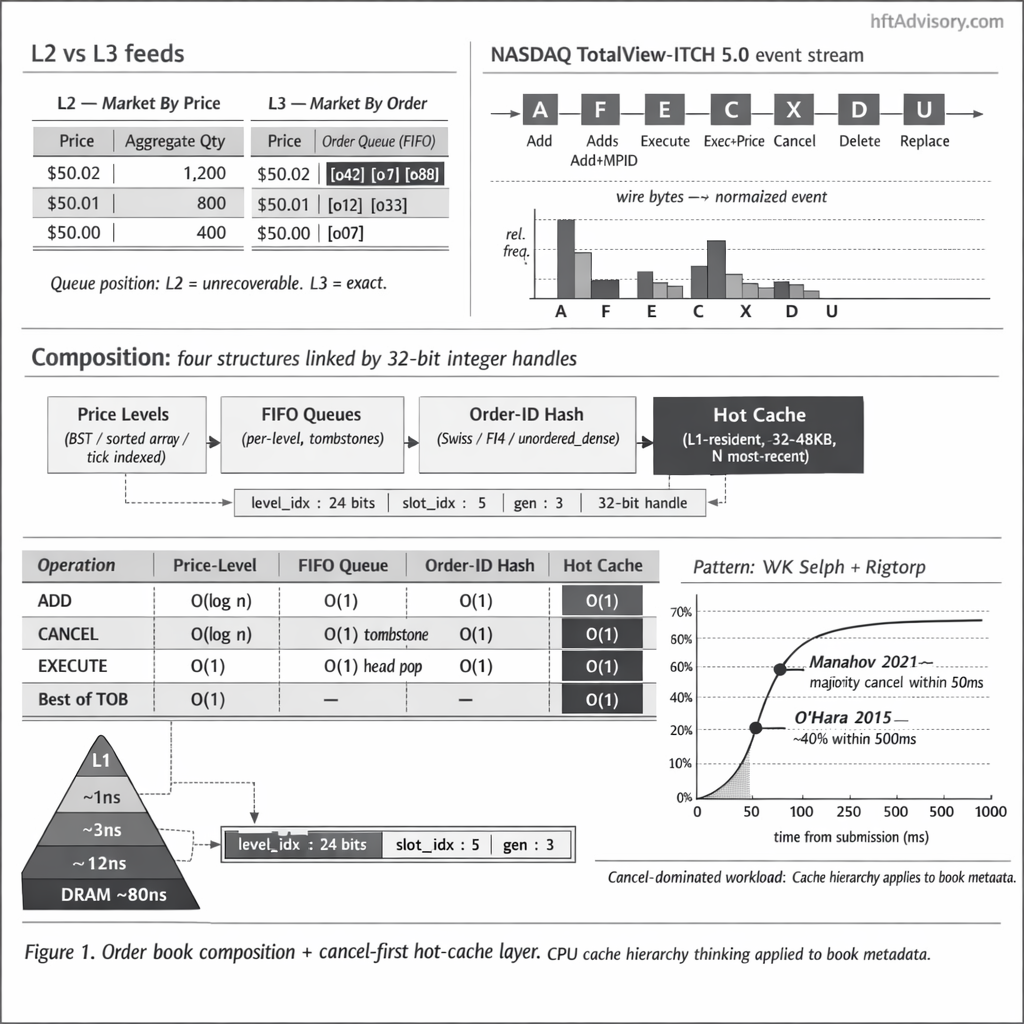

The distinction between L2 and L3 market data is not a marketing segmentation. It is a fundamental difference in what each feed exposes about the state of the book.

L2 data, also called Market By Price (MBP), aggregates the order book by price level. For any given price, you receive the total quantity available and, on some feeds, the number of orders at that level. What you do not receive is anything about individual orders: their timestamps, their IDs, their relative position within the queue at that level. That information is structurally absent. The aggregation is deliberate and irreversible. No amount of inference recovers per-order position from an MBP feed, because the per-order data was discarded before the message was formed.

L3 data, also called Market By Order (MBO), exposes every individual order and preserves its identity across its lifetime. As Databento documents, MBO data shows not just the orders at each price but also their position in the queue. When a new order arrives, you can place it at the back of the queue for its price level. When a partial cancellation arrives, you know exactly which order it targets and by how much its quantity changes. When a full deletion arrives, you remove that specific order from its position and apply the correct queue compaction logic.

For passive market making on a strict FIFO matching engine, this matters at a level that directly determines fill economics. If your book is running on L2 data, you can tell whether your order is at a given price level. You cannot tell whether you are first in queue, fifteenth, or whether five large orders that arrived before yours have all been partially reduced in size over the last three seconds in a way that puts your fill probability higher than the raw depth number implies. Queue-position uncertainty at cancel time is not a minor gap. On venues where queue sniping and layering are active, the difference between knowing your queue slot and not knowing it is the difference between a strategy that prices adverse selection correctly and one that does not.

This is the structural argument for L3 ingest. The economic argument follows from it: every passive fill you lose because your book was running a stale position estimate for a resting order is a direct revenue cost. The question becomes whether your book layer was designed to work with what L3 gives you, or whether you subscribed to L3 and fed it into a structure that was only ever designed for L2 semantics.

L3 ingest is not free. Venue direct feeds and aggregator routes carry hard costs in connectivity, line-rate ingest, parsing, and downstream storage. The cost calculus is venue-and-strategy-specific: passive market making on price-time priority venues, where queue position drives fill probability, typically justifies the cost with room to spare. Strategies that do not rely on per-order position, such as sweep execution or agency routing, may find the economics do not clear. The architectural argument is not that subscribing to L3 is always correct. It is that if you have subscribed, your book layer should not throw away what you paid for by using data structures that were designed for L2 semantics. Subscribing to MBO and feeding it into an MBP-shaped book is paying for precision and discarding it at the ingestion boundary.

NASDAQ TotalView-ITCH 5.0: The Per-Order Event Tape

NASDAQ TotalView-ITCH 5.0 is the canonical reference for per-order feed design. The protocol exposes the full lifecycle of every order on the exchange as a sequence of typed messages. Understanding the message taxonomy is a prerequisite for designing a book that uses the feed correctly.

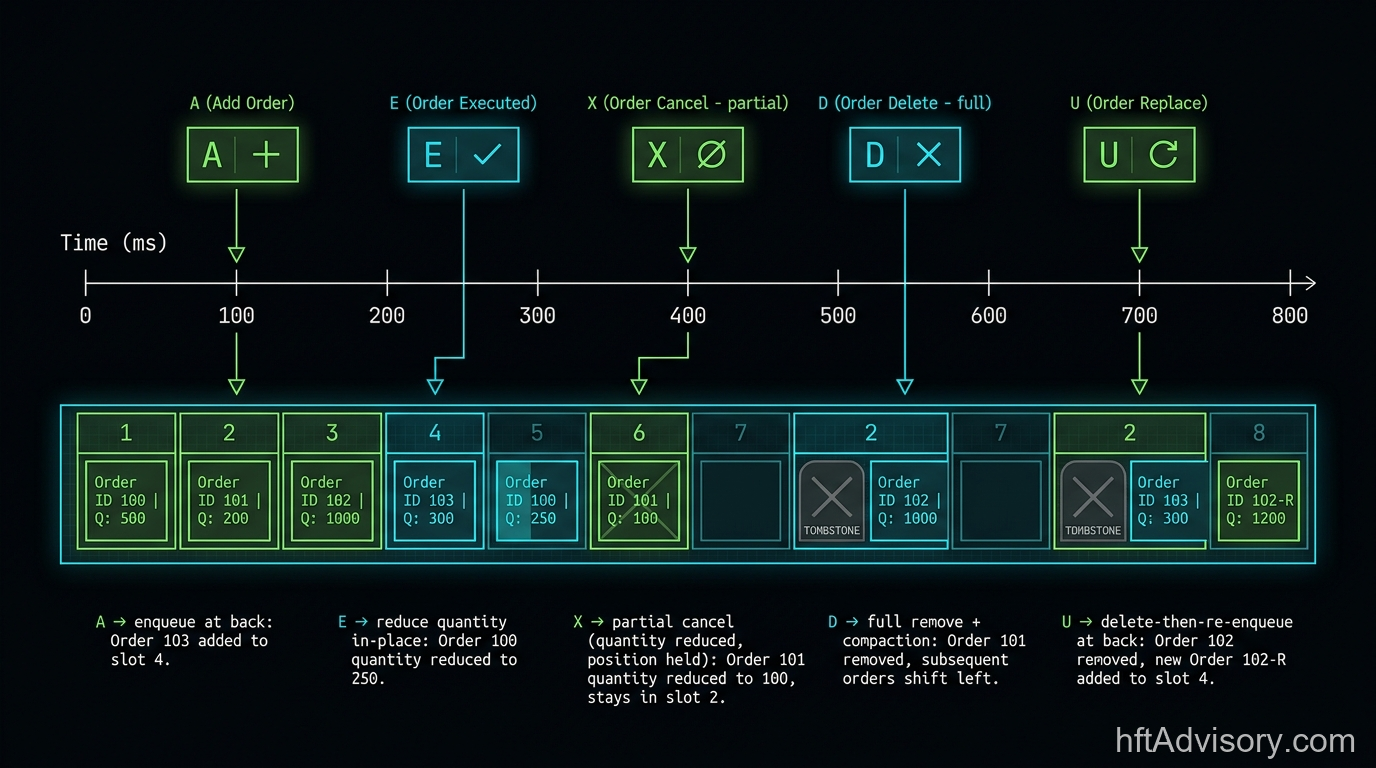

The message types that matter for book reconstruction are:

A (Add Order, no MPID attribution) and F (Add Order, with MPID attribution): A new order enters the book. You receive an order reference number, side, price, and quantity. This is the event that creates an entry in your per-level FIFO queue and registers the order in your ID lookup structure.

E (Order Executed): A portion of a resting order has been matched. You receive the order reference number and the executed quantity. The order may remain live with reduced quantity. You update the quantity in place.

C (Order Executed With Price): Same as E, but the execution occurred at a price different from the order’s displayed price, which happens in certain non-displayed order types. Same book-state logic applies.

X (Order Cancel): This is a partial cancellation. The order remains live, but its displayed quantity is reduced. The critical precision: X does not remove the order from the queue. It reduces shares on an order that is still live and still holds its queue position. The distinction matters for any code that computes remaining depth or estimates fill probability from queue slot.

D (Order Delete): Full removal. The order is gone. Your per-level queue must compact around the now-empty slot, and your ID lookup must release the handle.

U (Order Replace): The order loses its original queue position and is replaced with a new order reference number at a new price or quantity. In queue-position terms, U is equivalent to D followed by A at the back of the queue.

Every message type carries the order reference number. That is the design decision that enables full queue reconstruction. You do not need to infer which slot was affected. The feed tells you. The book’s job is to maintain structures that can action that information at the lowest latency per message, across a mix that is dominated by X and D messages.

The ITCH 5.0 specification has been stable since 2014 and reflects NASDAQ’s current feed design as of 2026. ITCH 5.0 is the reference implementation worth understanding in full, but the architectural argument generalizes. The same per-order event model is exposed by CME MDP 3.0 (TradFi futures), Eurex EOBI, Coinbase MBO, and OKX and Binance order-update streams. The implementation details differ across venue classes (sequence-gap recovery semantics, websocket reconnect behavior, and oracle-informed matching on DEX perpetual markets each require venue-specific handling), but the structural decision applies to every venue that exposes a per-order feed: design your book layer to use what that feed gives you. The cancel-first design argument does not depend on NASDAQ’s specific protocol; it depends on the underlying workload characteristic, which is consistent across venues.

The Three Structures That Compose a Production-Grade Book

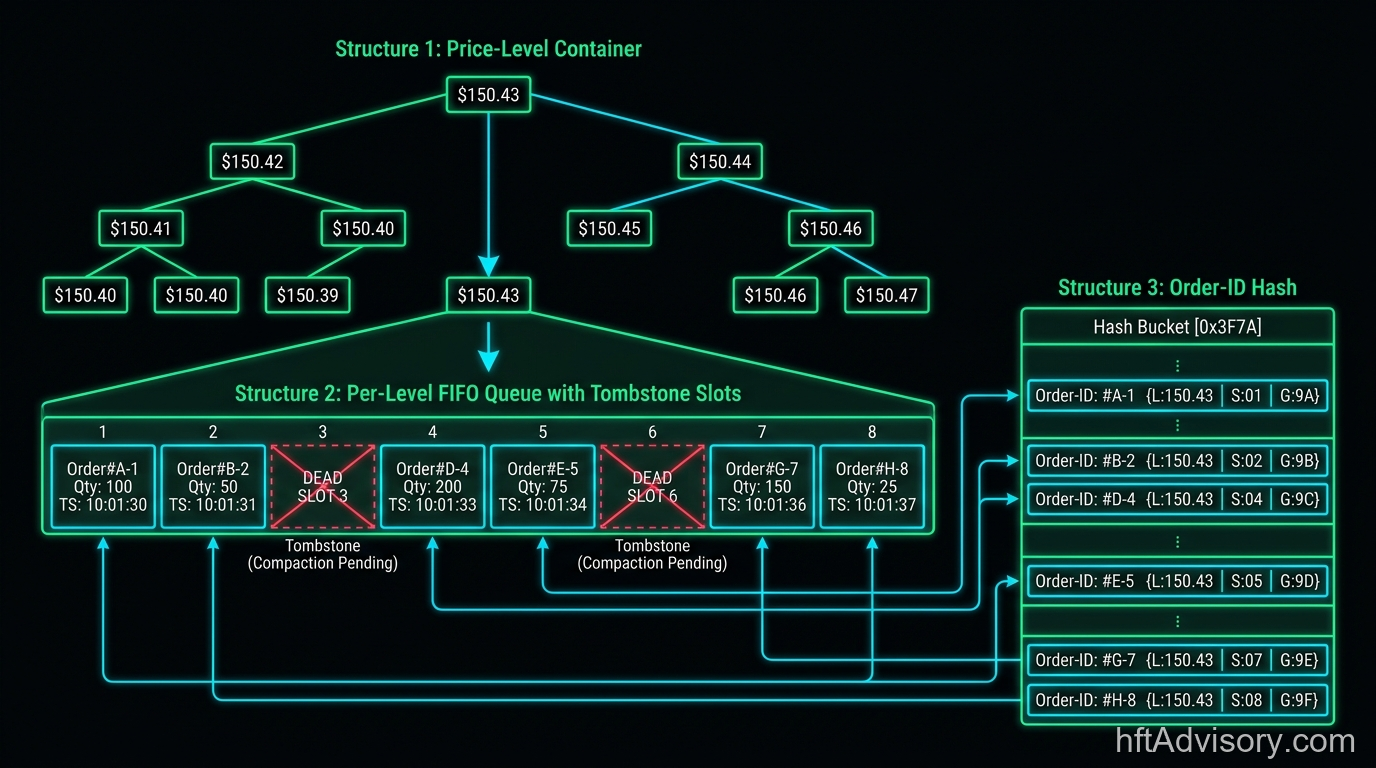

A book designed for L3 ingest at production grade composes three interlocking data structures. Understanding their responsibilities separately is necessary before understanding how they interact.

Structure 1: The price-level container. This is the top-level index from price to the state at that price level. The two canonical choices are a balanced BST (ordered by price, O(log M) lookup where M is the number of active price levels) and a tick-indexed array (O(1) lookup by pre-computed tick slot, higher memory cost but cache-predictable). WK Selph’s 2011 blog post “How to Build a Fast Limit Order Book” (the original post has been preserved as an archival GitHub gist and on the QuantCup site) documents the BST choice explicitly: “a binary tree of Limit objects sorted by limitPrice.” The complexity guarantees Selph documents: Add at a new price level is O(log M); all subsequent adds at an existing level are O(1); cancel and execute are O(1). For venues with predictable tick grids and bounded price ranges, a tick-indexed array gives better cache behavior at the cost of memory footprint. The right choice is venue- and workload-dependent.

Structure 2: The per-level FIFO queue with tombstone slots. For each price level, a queue of order slots that preserves FIFO ordering for matching semantics. The cancel path requires O(1) removal from an arbitrary position in the queue, which a naive linked list handles but with poor cache behavior. The production pattern is a slot-based queue where canceled orders write a tombstone (a dead-slot marker) in place rather than compacting the array immediately. Tombstones are collected lazily. This gives O(1) cancel-from-middle at the cost of periodic compaction work, which is amortized and can be scheduled outside the hot path.

Structure 3: The order-ID hash. A map from order reference number to a handle that locates the order within the two structures above. Selph’s documentation describes this as “a map keyed off idNumber.” In production implementations, these handles are packed integers rather than raw pointers.

The handle format worth noting is {level_idx, slot_idx, generation}. This three-field packed integer encodes which price level’s slot array to look in, which slot within that array holds the order, and a generation counter that invalidates stale handles without requiring explicit nulling. This is the generational arena pattern, which originated in game-engine ECS (Entity-Component-System) design. Catherine West’s RustConf 2018 keynote on ECS design, and the subsequent fitzgen/generational-arena library, document the ABA-safety argument: “a safe arena allocator that allows deletion without suffering from the ABA problem by using generational indices.” The same argument applies directly to order book slots. A cancel arrives with an order ID, you look up the handle, you check the generation field. If the generation does not match, the slot was reused and the cancel targets a stale reference. Without the generation field, ABA would require locking or epoch tracking. With it, the check is a single integer comparison. Cache density is the secondary benefit: because slots are fixed-size and allocated in contiguous arena blocks, pointer chasing drops significantly relative to a node-allocated structure.

These three structures reference each other through the packed integer handles, not through raw pointers. The same design discipline that game engines apply to entity management applies here, for the same reasons: predictable memory layout, no heap fragmentation under high-churn workloads, and ABA safety without locks.

The Cancel Path Is the Hot Path and Most Books Get This Backwards

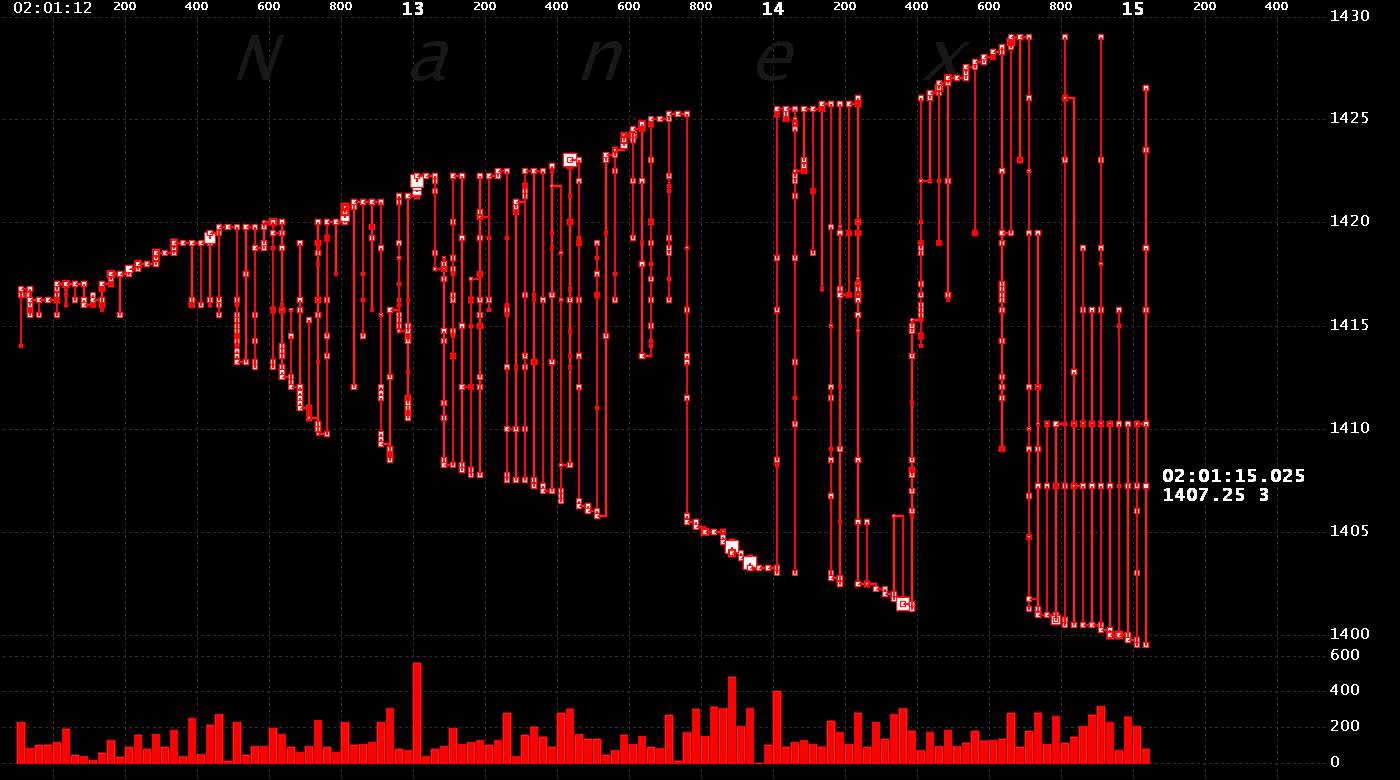

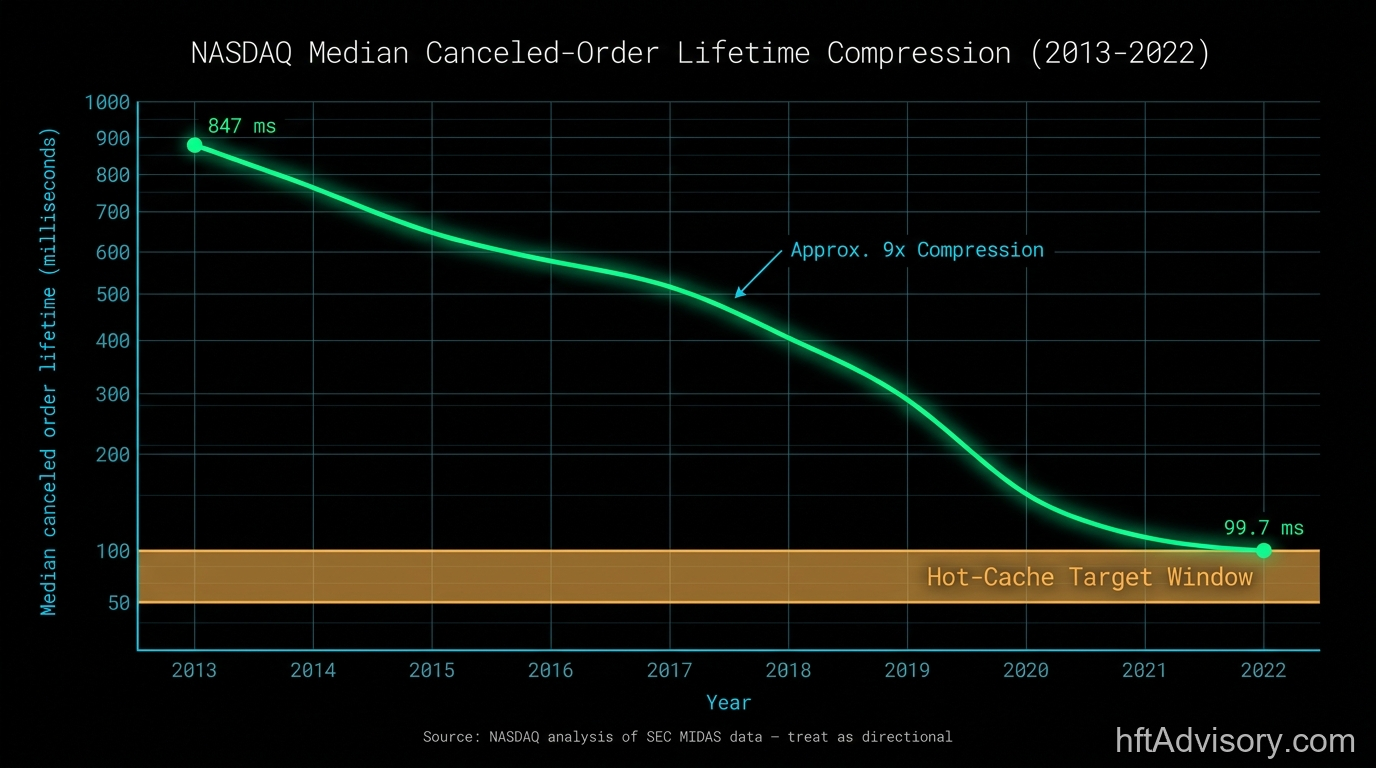

The empirical picture of modern order book activity is not ambiguous. Cancellation is the dominant event type by a large margin, and the time window over which cancellations target recent orders has been shrinking.

Maureen O’Hara’s 2015 Journal of Financial Economics analysis found that more than 98% of orders are canceled. Execution is the minority event. Manahov (2021), studying Australian Stock Exchange data, documents that HFTs cancel a large number of limit orders within 50ms to create arbitrage opportunities. NASDAQ’s analysis of SEC MIDAS data shows a trend that reinforces this: the median lifetime of a canceled order fell from approximately 847ms in 2013 to approximately 99.7ms in 2022, roughly a nine-fold compression over nine years. (This figure is from NASDAQ’s published analysis of SEC MIDAS data; treat it as directionally reliable rather than a primary regulatory citation.)

This cancellation-dominant workload pattern is not specific to US equities. Four independent regulatory and academic sources document the same structural signature across venue classes.

SEC MIDAS data has been cited as showing 96.8% of all orders were cancelled before they traded in 2013. By 2020 that ratio had grown further, reaching approximately 14 cancellations per trade at the national best bid and offer prices. Per the CFTC’s Haynes and Roberts 2015 study on automated trading in futures markets, order-to-execution ratios on the CME E-mini S&P 500 ran as high as 10:1 on liquid contracts, implying roughly a 90% cancellation rate on one of the world’s most liquid futures products. ESMA’s 2014 HFT report documented that HFT traders showed 34% duplicated orders compared to 12% for non-HFT traders across 100 stocks in nine EU countries, with approximately a quarter of all trades followed by cross-venue cancellation of duplicate resting orders. The Manahov (2021) ASX result noted above, where HFTs canceled large numbers of limit orders within 50ms, completes the geographic and asset-class spread: US equities, US futures, EU equities, and Australian equities all show the same pattern. Cancellation dominates. The cancel window is short. The order-to-execution ratio is heavily skewed toward cancellation in every venue class examined by primary regulatory and academic sources.

The architectural argument in this article does not depend on NASDAQ-specific cancel rates or US equity market structure. The workload characteristic is cross-venue.

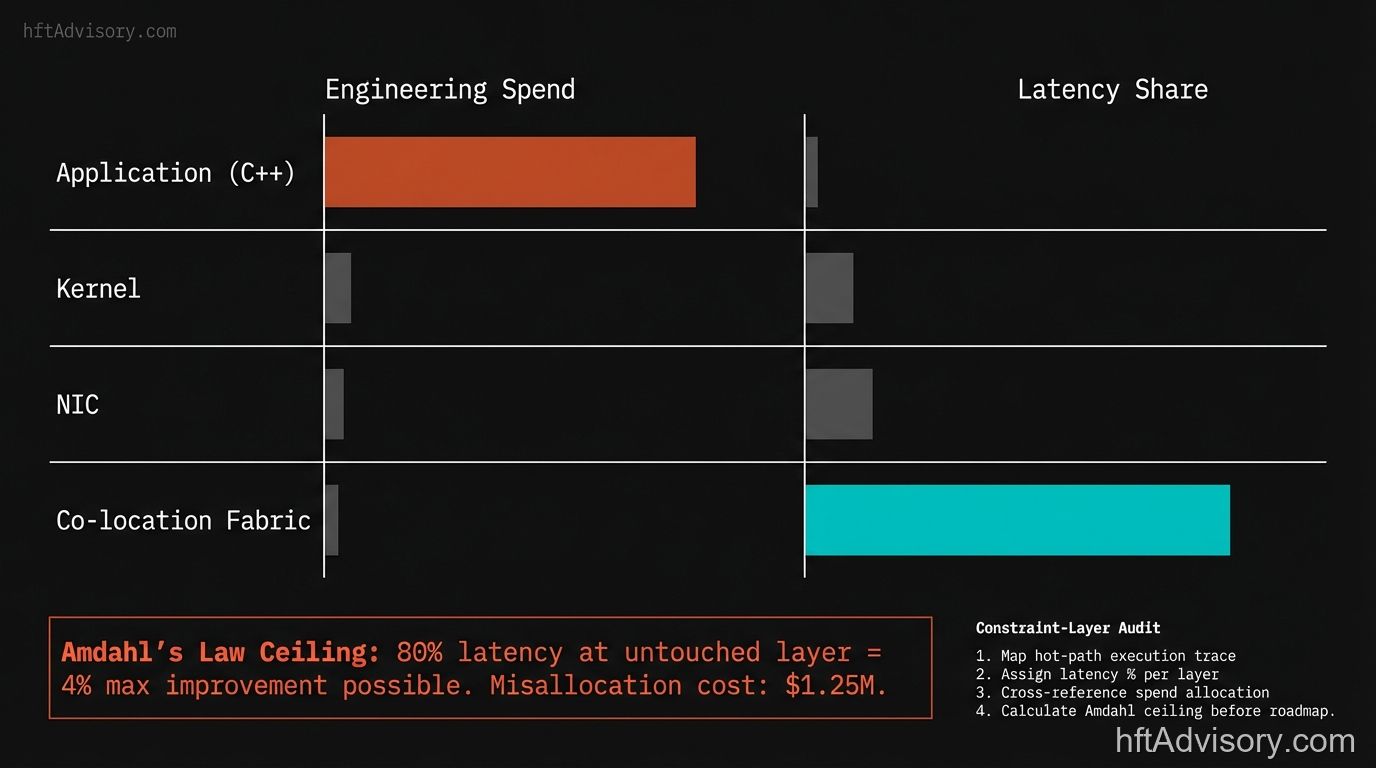

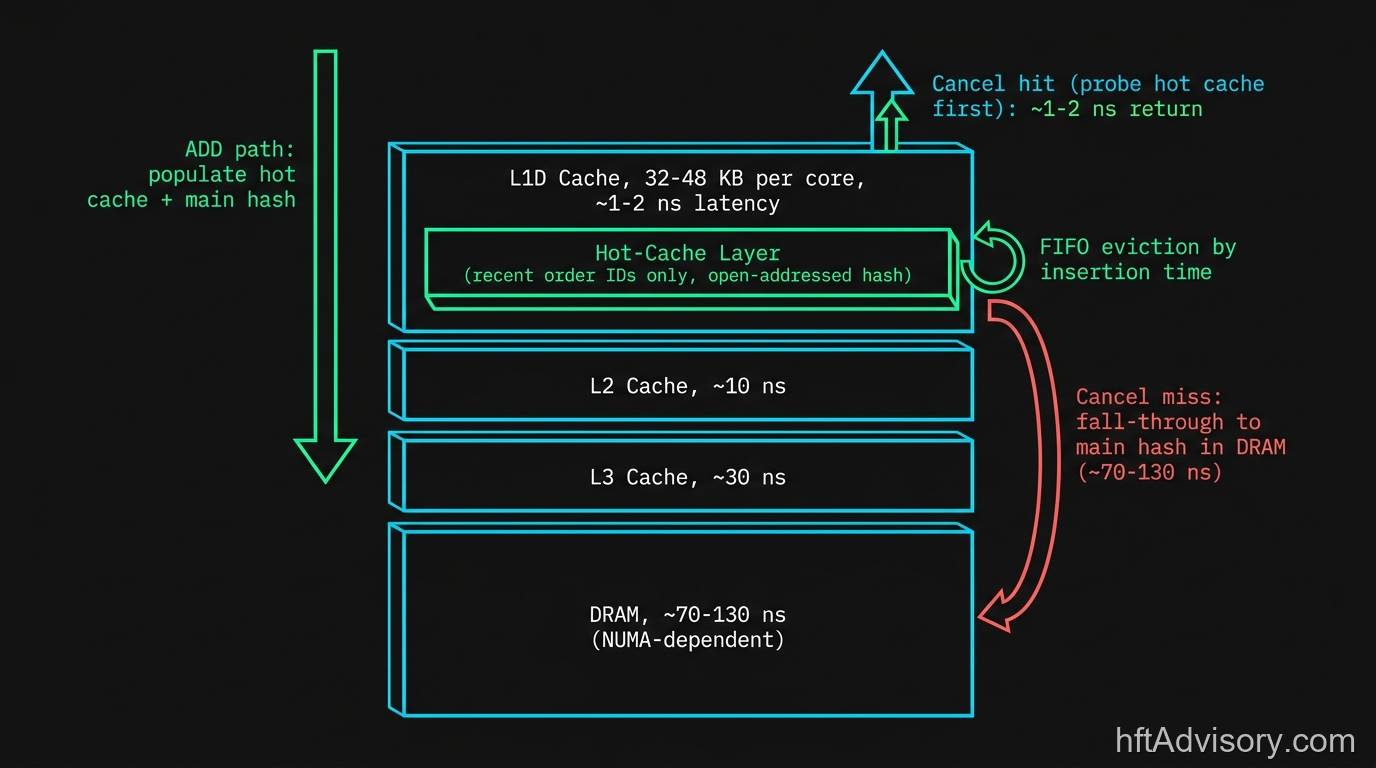

What these numbers say structurally: cancel messages arrive at high volume, they target recent orders, and the time window in which they arrive is measured in tens of milliseconds. A book designed to optimize the ADD path first, then handle cancels by falling through to the full order-ID hash, is optimizing for the minority event. Every cancel that misses a hot cache and falls through to main-memory lookup has to traverse DRAM at roughly 70-130 nanoseconds depending on NUMA topology, versus 4-5 cycles (approximately 1-2 nanoseconds at modern server clock speeds) for an L1D hit.

The reason most books are designed backwards is historical: ADD path latency was the first bottleneck practitioners hit. Early order books were sparse. Cancel handling was infrequent. Optimizing ADD made the system feel fast. The benchmark test at design time was “how quickly can I process a burst of new orders?” The benchmark that matters for a cancel-dominated production workload is different: “how quickly can I resolve a cancel against an order placed in the last 50 milliseconds?” Designing for the wrong benchmark produces a book that feels fast under synthetic ADD-burst testing and shows unexpected latency spikes under live cancel-heavy market conditions.

The architectural correction is not to abandon ADD-path optimization. It is to recognize that cancel is equally the hot path and allocate equivalent design attention to it.

The Fourth Structure: A Hot-Cache Layer for Recent Order IDs

The three-structure composition handles correctness. The fourth structure addresses the non-uniform access pattern that cancel-dominated workloads create in practice.

The hypothesis is straightforward: if the median lifetime of a canceled order has compressed to under 100ms, then the order IDs that cancel messages target are disproportionately drawn from the pool of orders added in the last 100ms or fewer. Your full order-ID hash holds the complete set of active orders across all price levels, which at any active venue is a large population. The subset of that population that cancel messages actually hit is much smaller. A cache that holds only that recent subset, sized to fit entirely within L1D, will resolve the majority of cancel lookups without touching main memory.

The implementation is a small open-addressed hash, sized for L1D residency: 32KB per core on AMD EPYC Genoa, 48KB per core on Intel Xeon 6 (Granite Rapids) and AMD EPYC Turin. The sizing target is the per-core L1D cache, not the L2 or L3. The structure holds order IDs and their handles. On ADD, the new order is populated into this hot cache alongside the main hash. On cancel, the hot cache is probed first. A hit resolves the lookup in approximately 1-2 nanoseconds. A miss falls through to the main order-ID hash, which may require a DRAM access at roughly 70-130 nanoseconds depending on NUMA topology. Eviction policy is FIFO by insertion time, which is a natural fit for the access pattern: the oldest entries are the least likely to be targeted by incoming cancels.

An important framing note: the documented benefits of generational arenas (ABA safety, allocation discipline) are the software-engineering rationale for packed-integer handles in the main hash. “Cache density” as a benefit from that specific pattern is practitioner framing rather than a named algorithmic guarantee; the hot-cache layer’s L1D residency benefit comes from the sizing decision, not from the arena pattern per se. These are separate properties from separate design decisions.

The non-uniform access pattern this exploits is not unique to order books. Game engines face exactly the same challenge: a large set of entities exists, but at any frame, only a small subset of recently-active entities receives updates. The ECS literature’s answer was the generational arena (for correctness) combined with archetype-sorted component layouts (for cache coherence in the hot loop). The order book parallel is direct: the full order-ID hash is the entity registry; the hot-cache layer is the archetype group for the recently-active entity class (new orders likely to be canceled within the next 100ms).

Jane Street’s public discussion of their JX exchange architecture describes processing approximately 3 million messages per second at peak with single-digit microsecond latencies. At that message rate, a DRAM access on every cancel lookup is not a periodic cost; it is a systematic structural tax that compounds across the distribution.

Practical Framework: Diagnostic Checklist for Architecture Reviews

When reviewing a trading desk’s order book implementation for a FIFO venue strategy, these are the questions I use to locate the structural gaps before looking at any code.

Queue position accounting:

- Can your book tell you, at any point in time, the absolute queue position of each resting order at its price level? Not an estimate from depth data, but the derived position from order-level events?

- If your L3 ingest stalls or reconnects, does your book enter a degraded state where per-order position is unknown, and does your strategy layer know this and adjust fill-probability estimates accordingly?

Cancel path performance:

- Of your cancel messages in a live session, what fraction target orders that were added in the last 100ms? Last 50ms? If you cannot answer this, your book is not instrumented for the workload it is running.

- What is your median cancel processing latency from message receive to book state update? What is your 99th percentile? A large spread between median and P99 often points to cache-miss variance on the cancel lookup path.

- Does your cancel path hit an L1D-resident structure first, or does it go directly to the main hash?

Handle design:

- Does your order-ID lookup use packed integer handles with a generation field, or raw pointers? Raw pointers require either locking or careful epoch management to be ABA-safe. Packed handles give you ABA safety for a single integer comparison.

- When a cancel arrives for an already-deleted order (a racing condition that occurs in multi-threaded book designs), does your book detect and discard it cleanly, or does it silently produce an incorrect book state?

Concurrency model:

- Is your book updated by a single writer thread, or by multiple writer threads coordinating via locks or lock-free primitives? Single-writer designs are easier to reason about for correctness but cap throughput at one core. Multi-writer designs add coordination overhead that can dominate the very latency savings the hot-cache layer provides.

- If your book is read concurrently by strategy and risk threads, how do you guarantee they read a consistent snapshot? Epoch-based reclamation, read-write locks, and sequence-number gating each carry different consistency and latency trade-offs. The right answer is venue-and-workload-specific, but there must be an answer.

- When the hot cache evicts an entry while a concurrent reader holds a handle to it, what is the safety guarantee? The generation field in the packed handle is the canonical answer for single-writer designs. If your design does not use it, confirm what replaces it.

- Are your hot-cache and main hash allocated on the same NUMA node as the book thread? A clean single-threaded design that crosses NUMA boundaries under load can erase the latency advantage the hot-cache structure was built to deliver.

Data feed alignment:

- Are you subscribed to an L3 (MBO) feed for this venue? If you are running an L2 (MBP) feed and relying on depth-change inference to estimate queue position, that inference is structurally unreliable. L2 aggregation discards the data before you see it.

- Are you correctly distinguishing ITCH message type X (partial cancellation, order remains live with queue position intact) from message type D (full delete, order removed, queue compacts)? A book that treats X as D will systematically undercount remaining queue depth.

If you cannot answer the cancel-path latency questions, your book is making architectural assumptions about its own workload that have not been tested against production data.

Build or rent. Not every firm should build the four-structure book in-house. The cost picture for L3 data access alone spans a wide range. On the vended side: Databento Standard tier is priced at $199 per month (as of the time of writing) and covers historical L3/MBO data only; live data requires moving up to the Plus tier, priced at $1,399 per month on an annual contract, plus applicable exchange licensing fees. (Live US equity L3/MBO via Databento starts at the Plus tier at $1,399 per month at the time of writing, plus applicable exchange licensing. Do not treat the Plus license fee as the all-in cost.) At the top of the published-pricing range, Databento Unlimited is priced at $3,500 per month on an annual contract. Historical US equity data is separately priced from $0.40 per GB (binary uncompressed) on the Standard tier.

If you need NASDAQ TotalView-ITCH direct access rather than a vended feed, NASDAQ’s 2025 official price list (current at the time of writing) publishes a distinct cost structure. Internal Distributor fees run $26,570 per distributor per month. External Distributor fees run $2,660 per distributor per month. Professional and Corporate per-subscriber fees are $80.50 per subscriber. NASDAQ’s Non-Display Enterprise License (Direct Access) for TotalView is listed at $75,000. These are the direct-feed access costs before any infrastructure build.

For enterprise managed book-engine infrastructure (OneTick, Pico Quantitative Trading, Exegy), no published pricing is available. Contact-sales only. That absence of published pricing is itself signal: enterprise managed book-engine stacks start where the published-pricing market ends.

The build decision is not purely a data cost question. Building the four-structure book in-house is a six-to-twelve month senior-engineer effort, with ongoing operational cost in concurrency reviews, latency profiling, and NUMA tuning that compounds across the system’s lifetime. The build case is when your strategy’s economics depend on a specific architectural property that no off-the-shelf book exposes, typically per-order queue-position accounting under cancel pressure beyond what standard book engines guarantee. The decision criterion is precise: if your strategy’s economics survive the Databento Plus tier cost ($1,399 per month plus exchange licensing, at the time of writing) AND your cancel-targeting-window fraction sits below the build threshold described in the Conclusion, renting is the default answer. Build when you can name the specific property your strategy requires that no vendor delivers. If you cannot name it, the build cost is likely not recoverable.

Conclusion

The falsifiable test for whether the hot-cache layer is worth building is not architectural; it is empirical. Take a live session of ITCH messages. For each cancel (X or D type), compute the time elapsed since the targeted order’s A or F message. Plot the distribution. If a large fraction of cancels target orders added within the last 50-100ms, the hot-cache layer pays for itself: that fraction represents lookups that will hit the L1D-resident structure instead of DRAM. If that fraction is small (orders being canceled after many seconds or minutes), the L1D sizing budget is better allocated elsewhere and the full hash is sufficient.

The measurement is the decision criterion. What threshold justifies building it? The design sizing for a 32-48 KB hot cache sets a natural N: the structure holds however many order records fit in L1D at the handle-plus-metadata size you choose. If your cancel-targeting-window fraction is above 60-70% for orders within the last 100ms, the L1D hit rate justifies the implementation complexity. Below that, the marginal gain over a well-tuned main hash diminishes.

Before committing to the build, instrument your production or simulation feed to establish a baseline. Measure your current P99 cancel-lookup latency and record it. Then define what success looks like post-deployment: the hot-cache hit rate should trend in line with your measured cancel-targeting-window fraction; P99 cancel-lookup latency should tighten relative to median (less variance between the two is the signature of consistent L1D hits displacing DRAM misses); and book correctness should show zero net change, because the hot cache is a lookup optimization that is invisible to the rest of the system. If your cancel-targeting-window fraction sits below 30-40% across multiple sessions or multiple venues, the structure does not pay back its complexity cost and the full hash is the better answer. That is not a failure of the design argument; it is the measurement-first protocol working as intended. Bring the distribution to your next architecture review, not the intuition.

Originally shared as a LinkedIn post on 2026-05-17.

Never Miss an Update

Get notified when we publish new analysis on HFT, market microstructure, and electronic trading infrastructure. No spam.

Subscribe by EmailHFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.

I help financial institutions architect high-frequency trading systems that are fast, stable, and profitable.

>> Learn more about what I do:

https://hftAdvisory.com

>> Your execution logs contain $200K+ in recoverable edge.

>> Microstructure Diagnostics — one-time audit, 3-5 day turnaround

https://hftadvisory.com/microstructure-diagnostics

... more info about me 👇