Table of Contents

- The Economics of Latency Slippage

- Layer 1: Ingress — The Clock You Trust

- Layer 2: Book State — The Memory Topology Penalty

- Layer 3: Signal Path — The Compute You Do Not Control

- Layer 4: Pre-Trade Risk — The Serialization That Kills You

- Layer 5: Egress — The Protocol Debt You Are Still Paying

- The 2024-2025 Venue Reset: Why Your 2022 Baseline Is Stale

- The 5-Layer Latency Audit: A Diagnostic Checklist

- Every Microsecond Has a P&L Line

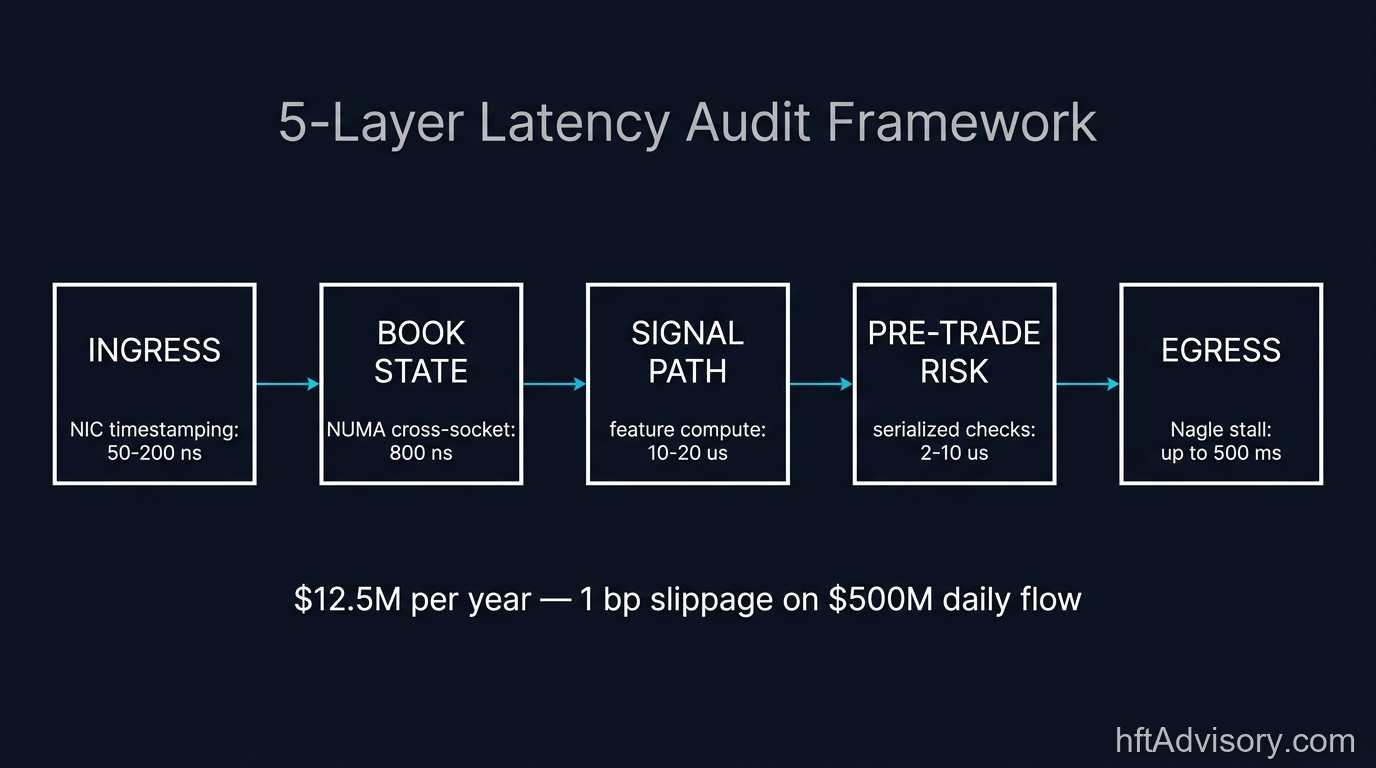

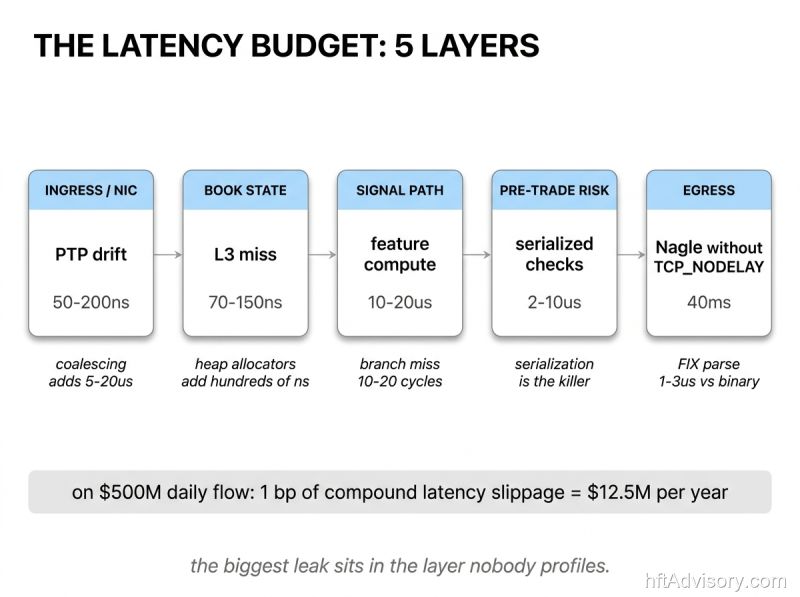

The Economics of Latency Slippage

$12.5 million a year. That figure is what 1 basis point of latency slippage costs on $500M of daily flow at 250 trading days. It is conservative. Published transaction cost analysis studies document 1.3 to 5.2 basis points of slippage on sophisticated algorithmic executions in turbulent sessions. For institutional desks running at that scale, 1 bp/day is a floor, not a ceiling.
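The headline figure is simple arithmetic. Here is a minimal sketch that uses only the inputs stated above: 1 bp of slippage, $500M of daily flow, and 250 trading days. The function name is hypothetical.

```python
def annual_slippage_cost(daily_flow_usd: float, slippage_bp: float, trading_days: int = 250) -> float:
    """Annual cost of a constant slippage, quoted in basis points of daily notional."""
    # 1 basis point = 1/10,000 of notional
    daily_cost = daily_flow_usd * slippage_bp / 10_000
    return daily_cost * trading_days


cost = annual_slippage_cost(daily_flow_usd=500e6, slippage_bp=1.0)
print(f"${cost:,.0f}")  # $12,500,000
```

The relationship is linear. At the 5.2 bp upper end of the turbulent-session range, the same flow costs $65 million a year.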

Aquilina, Budish, and O’Neill (2022, Quarterly Journal of Economics 137(1)) quantified what they call the HFT arms race. They put the total sums at stake at roughly $5 billion a year in global equity markets alone. Latency-arbitrage races occur roughly once per minute per FTSE 100 symbol, and the modal race lasts 5 to 10 microseconds. The top six firms account for more than 80 percent of race wins. The paper estimates that market designs eliminating these races would reduce the cost of liquidity by 17 percent. That is not a theoretical outcome. It is the magnitude of the structural tax that latency imposes on every firm that has not optimized its stack.


When CTOs at $500M-plus AUM firms ask me where execution budget is leaking, the answer is rarely in one place. Over two decades in high-frequency trading architecture, the pattern I see across engagements is consistent: the losses are distributed across five layers, each contributing its own drag, and the biggest single source is almost always the layer nobody profiles.

This article maps those five layers. For each one, I identify where time leaks, what the architectural decision point looks like at the CTO level, and what pass/fail looks like in a practical audit. At the end, there is a diagnostic checklist you can run against your own stack today.

Layer 1: Ingress — The Clock You Trust

The ingress layer receives market data from the venue and delivers it to the rest of the stack with a timestamp. Everything downstream depends on that timestamp being accurate. If the clock drifts, the rest of your latency accounting is wrong.

Where time leaks. Hardware timestamping at the NIC's MAC layer, disciplined by IEEE 1588 Precision Time Protocol (PTP), holds drift to 50 to 200 nanoseconds in typical production environments. The most commonly observed range is 100 to 200 ns. Software timestamping, where the OS records the arrival time rather than the NIC, pushes that number above 500 ns immediately. Under burst conditions, interrupt coalescing can add 5 to 20 microseconds to the apparent latency of individual packets. That is not a firmware quirk. It is a configuration decision that was either made deliberately or defaulted silently.

The architectural decision point. Hardware timestamping versus OS-level timestamping is a procurement and configuration choice. The CTO-level question is: does your NIC support hardware timestamping, is it enabled, and is your PTP synchronization discipline documented and monitored? Vendors have offered hardware timestamping solutions for years. White Rabbit, developed at CERN and deployed in financial networks, extends synchronization to sub-nanosecond precision over fiber. Whether your infrastructure uses it or a standard PTP implementation, the choice should be deliberate and auditable.

CME Group, Nasdaq, and Eurex all timestamp at nanosecond resolution. If your ingress layer cannot produce timestamps at comparable discipline, you are attributing venue latency to internal latency and misreading your own profile.

What nobody profiles here. Most desks measure tick-to-trade end-to-end. Almost none measure ingress timestamp drift as an independent variable. If you cannot isolate ingress clock error from order book update latency, you do not know what your L1 latency actually is.
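Here is a minimal sketch of why clock error has to be measured as its own variable. It assumes the ingress timestamp and the downstream book-update timestamp come from two different clocks, for example a NIC hardware clock and the host system clock. Their relative offset is assumed to be known only within a bound, such as a monitored PTP offset. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LatencyBounds:
    measured_ns: int
    low_ns: int
    high_ns: int


def attribute_latency(t_ingress_ns: int, t_book_ns: int, max_clock_offset_ns: int) -> LatencyBounds:
    """Bound the true ingress-to-book latency when the two timestamps come from
    clocks whose relative offset is known only to within +/- max_clock_offset_ns."""
    measured = t_book_ns - t_ingress_ns
    return LatencyBounds(measured, measured - max_clock_offset_ns, measured + max_clock_offset_ns)


# Hypothetical reading: 1,200 ns measured, clocks agree to within 200 ns
print(attribute_latency(1_000_000, 1_001_200, 200))
# LatencyBounds(measured_ns=1200, low_ns=1000, high_ns=1400)
```

Now take the same 1,200 ns reading with software timestamping, where error exceeds 500 ns. The true value could be anywhere from 700 to 1,700 ns. That uncertainty is wider than many of the internal optimizations the measurement is meant to validate.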

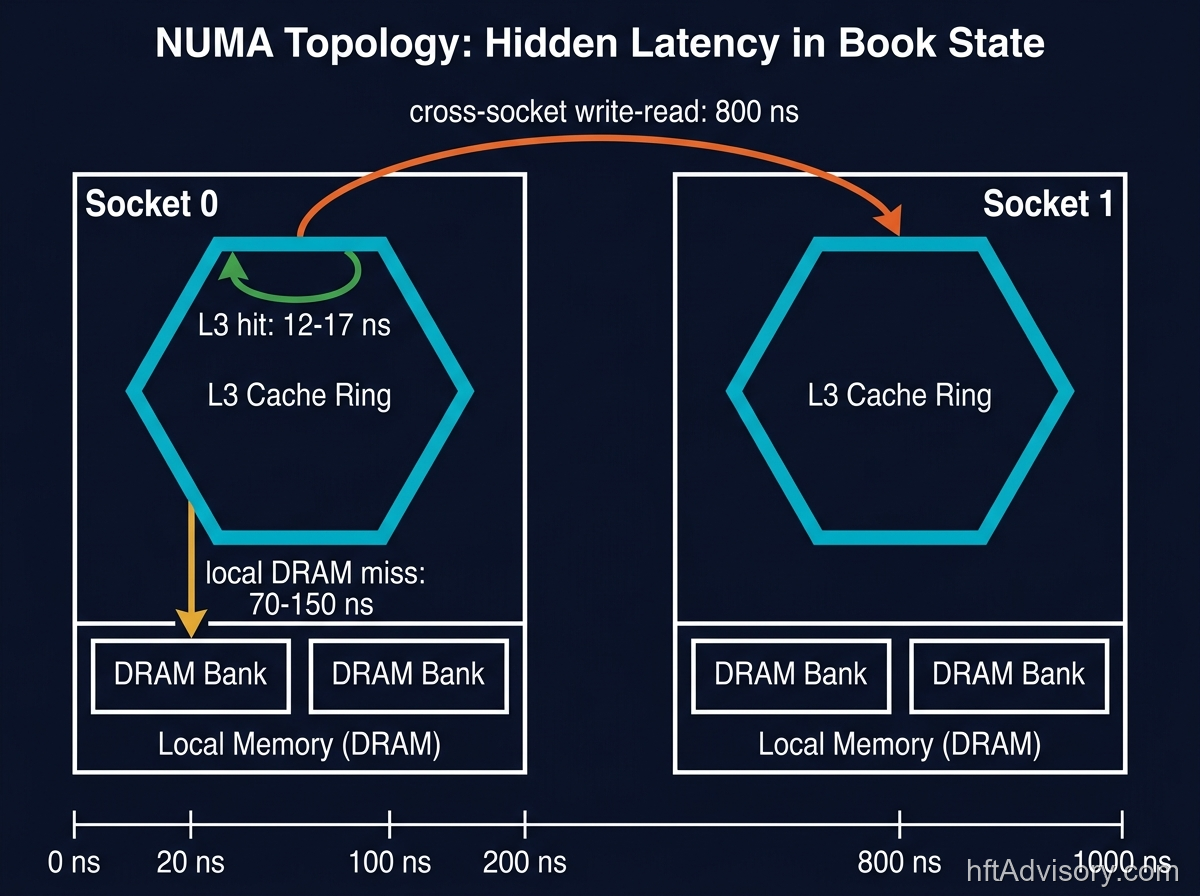

Layer 2: Book State — The Memory Topology Penalty

The book state layer maintains the order book in memory and serves updates to the signal path. Its latency profile is determined almost entirely by memory topology decisions made at procurement and deployment time.

Where time leaks. When a hot-path read misses L3 cache and falls to DRAM, you pay 70 to 150 nanoseconds on the local NUMA node. On Intel Skylake-class multi-socket systems, a write-then-read handoff across NUMA nodes costs approximately 800 nanoseconds. An L3 cache hit, by contrast, costs 12 to 17 nanoseconds. The gap between a local L3 hit and a remote-socket access is not a rounding error. It is an order-of-magnitude penalty that compounds across every book update on the hot path.
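A back-of-envelope model shows why the decomposition matters. The average cost of a hot-path read is a weighted mix of L3 hits, local DRAM accesses, and remote-socket accesses. This sketch uses 15 ns for an L3 hit and 110 ns for local DRAM, the midpoint of the 70 to 150 ns range. It uses 800 ns for a cross-socket access. The access mixes are hypothetical.

```python
def expected_access_ns(p_l3_hit: float, p_local_dram: float, p_remote: float,
                       t_l3_ns: float, t_local_ns: float, t_remote_ns: float) -> float:
    """Expected latency of one hot-path read given the fraction served from each tier."""
    if abs(p_l3_hit + p_local_dram + p_remote - 1.0) > 1e-9:
        raise ValueError("access-mix fractions must sum to 1")
    return p_l3_hit * t_l3_ns + p_local_dram * t_local_ns + p_remote * t_remote_ns


# 95% L3 hits, 5% misses served by local DRAM
print(f"{expected_access_ns(0.95, 0.05, 0.0, 15, 110, 800):.2f} ns")  # 19.75 ns
# Same hit rate, but the 5% of misses go to the remote socket
print(f"{expected_access_ns(0.95, 0.0, 0.05, 15, 110, 800):.2f} ns")  # 54.25 ns
```

The L3 hit rate is identical in both cases. Moving the misses from local to remote memory nearly triples the average read cost. A single "order book update latency" number cannot reveal that.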

Heap allocators on the hot path produce a different class of problem. In patterns I see across engagements, heap allocator contention can stall threads from hundreds of nanoseconds to hundreds of microseconds under concurrent load. The jemalloc allocator shows approximately 35 percent improvement in P99 latency versus the default glibc malloc under high-concurrency conditions. The most latency-sensitive desks avoid heap allocation on the hot path entirely.
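Avoiding heap allocation on the hot path usually means sizing a pool at startup and recycling from it. Python cannot demonstrate allocation-free execution, because the interpreter allocates internally. This sketch only shows the shape of a preallocated free-list pool. In C++ or Rust, the same shape keeps the general-purpose allocator out of the hot path. The class and its record layout are hypothetical.

```python
class OrderPool:
    """Fixed-capacity pool of preallocated order records (hypothetical record layout).

    Records are created once, at startup; the hot path only hands out and takes
    back indices, reusing existing records instead of constructing new ones.
    """

    def __init__(self, capacity: int):
        # Each record is [price_ticks, quantity, side]; sized for peak load up front
        self._records = [[0, 0, 0] for _ in range(capacity)]
        self._free = list(range(capacity - 1, -1, -1))  # stack of free indices

    def acquire(self) -> int:
        if not self._free:
            raise RuntimeError("order pool exhausted: capacity must cover peak load")
        return self._free.pop()

    def release(self, idx: int) -> None:
        self._free.append(idx)

    def record(self, idx: int) -> list:
        return self._records[idx]


pool = OrderPool(capacity=1024)
i = pool.acquire()
rec = pool.record(i)
rec[0], rec[1], rec[2] = 501225, 100, 1  # fill the existing record in place
pool.release(i)
```

Pool exhaustion is treated as an error rather than a reason to grow. Growing the pool on the hot path would reintroduce the allocator stall the pool exists to avoid.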

The architectural decision point. Memory topology is a procurement and OS configuration decision. The questions at the CTO level are: is the order book process pinned to cores on the socket that owns the memory it reads? Has the OS been configured to prevent cross-NUMA allocation silently? Are hot-path data structures sized to fit within L3? These are not implementation details. They are deployment decisions that a CTO should be able to confirm without reading source code.
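On Linux, the "pinned to the socket that owns the memory" question can be answered from sysfs. This is a minimal sketch assuming a Linux host. `/sys/devices/system/node/nodeN/cpulist` and `os.sched_setaffinity` are real interfaces, but the function names are hypothetical. Pinning alone does not bind memory. Under Linux's default first-touch policy, a page lands on the node of the CPU that first writes it. The book therefore has to be allocated and initialized after the process is pinned, or bound explicitly with a tool such as `numactl`.

```python
import os


def parse_cpulist(text: str) -> set[int]:
    """Parse Linux cpulist format such as '0-3,8-11' into a set of CPU ids."""
    cpus: set[int] = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            lo, hi = part.split("-")
            cpus.update(range(int(lo), int(hi) + 1))
        else:
            cpus.add(int(part))
    return cpus


def pin_to_numa_node(node: int) -> set[int]:
    """Pin the calling process to the CPUs of one NUMA node (Linux only).

    Returns the affinity actually in effect, which is the value an audit should record.
    """
    with open(f"/sys/devices/system/node/node{node}/cpulist") as f:
        cpus = parse_cpulist(f.read())
    os.sched_setaffinity(0, cpus)
    return os.sched_getaffinity(0)
```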

What nobody profiles here. Most desks track order book update latency as a single number. Almost none decompose it into L3-hit versus L3-miss versus cross-NUMA profiles. Until you have those three numbers separately, you cannot tell whether the optimization budget belongs in memory layout, CPU affinity configuration, or somewhere else entirely.

Layer 3: Signal Path — The Compute You Do Not Control

The signal path takes order book state as input and produces a trading signal as output. It is the layer where the strategy logic lives. It is also the layer where compute cost is most often underestimated.

Where time leaks. In patterns I see across engagements, feature computation consumes 10 to 20 microseconds before the model sees a vector. Branch mispredictions on modern x86-64 processors cost 10 to 20 cycles per miss. Intel Core documentation shows 15 cycles as the canonical figure. At 3 GHz, 15 cycles is 5 nanoseconds. That sounds small until you account for the frequency of mispredicts in poorly branch-predicted code on market data paths, where access patterns are data-dependent and difficult to predict statically.
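The conversion from cycles to time is worth making explicit. The 15-cycle penalty and 3 GHz clock below are the figures quoted above. The mispredict count per book update is a hypothetical input that a desk would take from its own hardware counters.

```python
def mispredict_cost_ns(mispredicts: int, penalty_cycles: int = 15, clock_ghz: float = 3.0) -> float:
    """Time lost to branch mispredictions; a 3 GHz core retires 3 cycles per nanosecond."""
    return mispredicts * penalty_cycles / clock_ghz


print(mispredict_cost_ns(1))   # 5.0
print(mispredict_cost_ns(40))  # 200.0 (hypothetical 40 mispredicts per book update)
```

At that rate, 40 mispredicts cost as much as an entire 200 ns risk check.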

The architectural decision point. Model-serving compute is either pinned to latency-optimized cores with known interrupt isolation, or it is not. The CTO-level question is: are your signal computation resources allocated as a dedicated latency budget, or are they shared with other workloads and subject to OS scheduling variance? Core pinning, process isolation, and interrupt affinity configuration are OS-level decisions, not engineering heroics. If they are not documented and auditable, they are not governed.
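Interrupt affinity is one of those auditable OS-level settings. This is a minimal sketch assuming Linux, where `/proc/irq/<N>/smp_affinity_list` accepts a cpulist string. It steers an IRQ onto housekeeping cores and away from the cores reserved for signal computation. The function names are hypothetical. The write needs root, and some kernel-managed IRQs reject it. If `irqbalance` is running, it may later rewrite the setting.

```python
def to_cpulist(cpus) -> str:
    """Format CPU ids as a Linux cpulist string, e.g. {0, 1, 2, 5} -> '0-2,5'."""
    ids = sorted(cpus)
    if not ids:
        raise ValueError("empty CPU set")
    ranges = []
    start = prev = ids[0]
    for cpu in ids[1:]:
        if cpu == prev + 1:
            prev = cpu
            continue
        ranges.append(f"{start}-{prev}" if start != prev else f"{start}")
        start = prev = cpu
    ranges.append(f"{start}-{prev}" if start != prev else f"{start}")
    return ",".join(ranges)


def steer_irq_away(irq: int, all_cpus, isolated_cpus) -> str:
    """Restrict an IRQ to housekeeping CPUs so it never fires on isolated hot-path cores."""
    housekeeping = set(all_cpus) - set(isolated_cpus)
    cpulist = to_cpulist(housekeeping)
    with open(f"/proc/irq/{irq}/smp_affinity_list", "w") as f:
        f.write(cpulist)
    return cpulist
```

The returned string is what belongs in the deployment documentation. It records the intended affinity as an explicit value, not an assumption about defaults.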

What nobody profiles here. Feature computation latency is almost never measured independently from the book update that triggers it. In engagements I have run, teams frequently discover that a significant fraction of their apparent order book latency is actually signal computation time that was never separated in profiling. The attribution error leads to the wrong optimization target.
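Separating the two numbers requires a timestamp at the boundary between them. This sketch shows the shape of that instrumentation. `apply_book_update` and `compute_features` are hypothetical stand-ins for a desk's own code. Python's timer overhead is large relative to a native hot path, and the list appends allocate. A production harness would typically use a cheaper clock source and a preallocated ring buffer.

```python
import time


def run_instrumented(update, apply_book_update, compute_features, samples):
    """Run one book update and feature computation, recording each stage separately.

    samples maps stage name -> list of durations in nanoseconds.
    """
    t_update_received = time.perf_counter_ns()
    book_state = apply_book_update(update)
    t_book_ready = time.perf_counter_ns()        # boundary between the two stages
    feature_vector = compute_features(book_state)
    t_vector_ready = time.perf_counter_ns()

    samples["book_update_ns"].append(t_book_ready - t_update_received)
    samples["feature_ns"].append(t_vector_ready - t_book_ready)
    return feature_vector


samples = {"book_update_ns": [], "feature_ns": []}
vec = run_instrumented(3, lambda u: u * 2, lambda b: [b, b + 1], samples)
```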

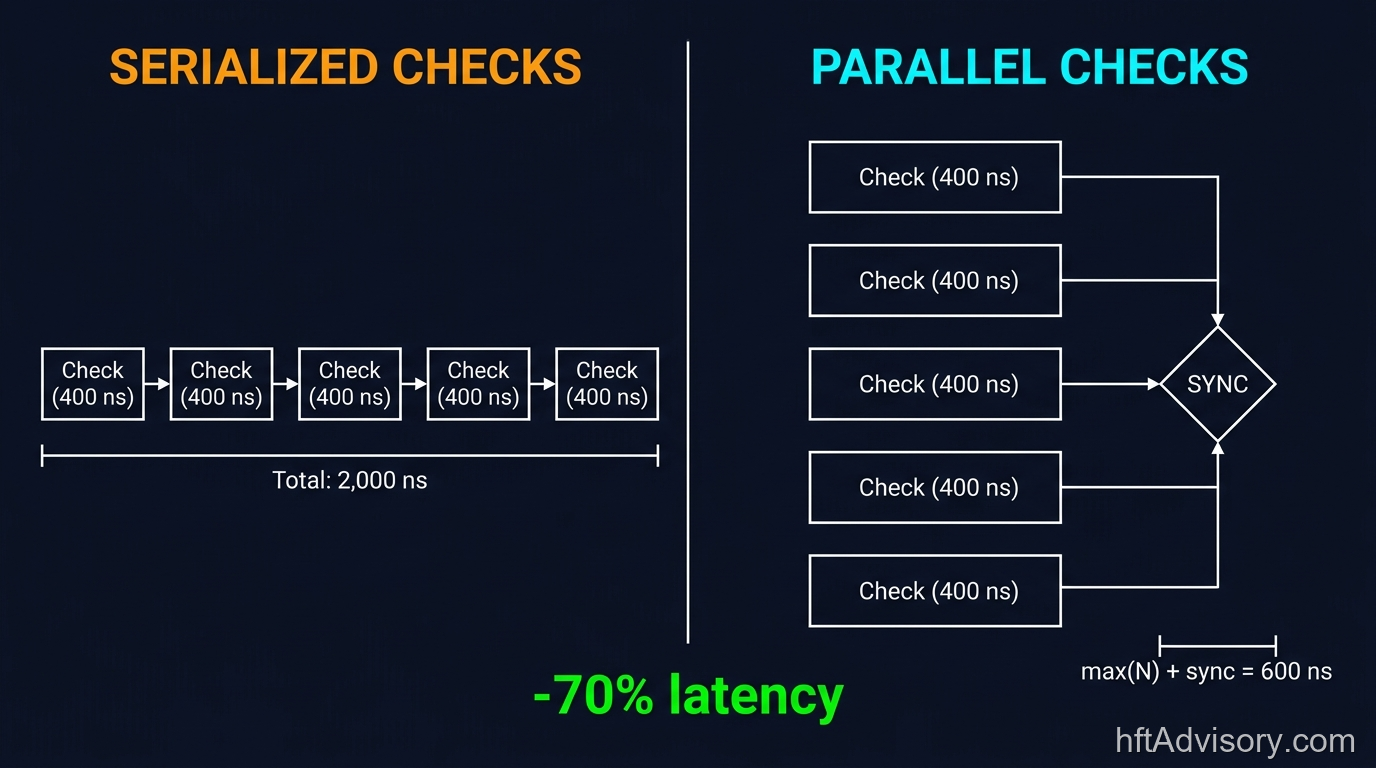

Layer 4: Pre-Trade Risk — The Serialization That Kills You

Pre-trade risk checks sit between the strategy signal and the order submission. They are required. Regulators, exchanges, and internal risk teams all mandate them. The question is not whether to run them. The question is how they are architected.

Where time leaks. In patterns I see across engagements, serialized risk checks add 2 to 10 microseconds to the hot path. The specific check logic is not the problem. The serialization is the problem. When risk checks execute sequentially and block order submission, the aggregate latency of the risk layer is the sum of all individual check latencies plus synchronization overhead. In parallel architectures, it is bounded by the slowest single check plus dispatch cost.

The distinction matters at scale. Take a desk running 10 independent checks at 200 nanoseconds each. A serialized design incurs 2 microseconds. A parallel design incurs approximately 200 nanoseconds plus dispatch overhead. Before overhead, that is a 10x difference in the risk layer's contribution to total latency, and it belongs in the hot-path budget.
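Here is the same comparison as a simple latency model, using the ten 200 ns checks from the example. The 300 ns dispatch-and-join overhead is hypothetical. The model also omits the synchronization overhead of the serialized design, so it slightly flatters the serial case.

```python
def serialized_risk_ns(check_ns):
    """Sequential checks: the order waits for every check in turn."""
    return sum(check_ns)


def parallel_risk_ns(check_ns, dispatch_overhead_ns):
    """Independent checks run concurrently: bounded by the slowest check plus fan-out/join cost."""
    return max(check_ns) + dispatch_overhead_ns


checks = [200] * 10  # ten independent checks at 200 ns each
print(serialized_risk_ns(checks))     # 2000
print(parallel_risk_ns(checks, 0))    # 200
print(parallel_risk_ns(checks, 300))  # 500
```

Parallelism pays only while dispatch-and-join overhead stays below the time it removes from the critical path. Here that is 2,000 minus 200, or 1,800 ns.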

The architectural decision point. Serialization versus parallelism in the risk layer is a design choice, and it is a choice that should be made explicitly at the CTO level. The compliance requirement specifies what checks must run. It does not specify that they must run sequentially. An architect reviewing a serialized risk design should be able to ask: is this sequential because it has to be, or because it defaulted that way? The answer shapes the remediation path.

In August 2012, Knight Capital Group reused an order-routing flag that reactivated dormant code on one of eight servers that had not received a new deployment. The firm lost more than $460 million in about 45 minutes, as documented in SEC enforcement records. After that failure, industry consensus shifted toward more extensive pre-trade controls. The latency consequence of that architectural shift is real and must be accounted for in the hot-path budget. The accounting does not require serialization.

What nobody profiles here. In most desks, risk checks are profiled in isolation on test infrastructure. Almost none measure risk-layer latency under production load distributions with concurrent order flow. Under burst conditions, lock contention in serialized check implementations produces latency distributions that look nothing like the isolated benchmark. The tail you see in production is not the check latency. It is the contention latency.

Layer 5: Egress — The Protocol Debt You Are Still Paying

Egress is the path from the order management system to the exchange. It encodes the order, transmits it, and handles the acknowledgment. In 2022, there were desks still running FIX ASCII here as a defensible choice. In 2025, that position is difficult to defend.

Where time leaks. FIX ASCII parsing costs 1 to 3 microseconds more per message than binary encodings. One example is Simple Binary Encoding (SBE), the FIX Trading Community standard that CME uses for iLink 3 on top of the FIXP session protocol. As of December 31, 2024, CME decommissioned iLink 2, its text-based FIX interface. iLink 3, which runs on binary SBE and FIXP, is now the only supported path. If a desk was holding onto FIX ASCII on the CME path for any reason, that option no longer exists. The forced migration removes one source of protocol debt.
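The cost difference comes from the work each format forces on the parser. This sketch contrasts the two. The FIX message is stripped down, with the header, body length, and checksum omitted. The binary layout is hypothetical and much simpler than real SBE, which adds a message header and schema-defined field offsets. It is not the iLink 3 schema.

```python
import struct

SOH = "\x01"  # FIX field delimiter


def parse_fix(msg: str) -> dict[int, str]:
    """Tag=value parsing: scan for delimiters, split each field, convert tags from text.

    Values are still text afterwards and need a further conversion to numbers.
    """
    fields = (f.split("=", 1) for f in msg.split(SOH) if f)
    return {int(tag): value for tag, value in fields}


# Hypothetical fixed-offset layout, NOT the real iLink 3 SBE schema:
# little-endian uint64 order id, int64 price in ticks, uint32 quantity, uint8 side
ORDER = struct.Struct("<QqIB")


def encode_order(order_id: int, price_ticks: int, qty: int, side: int) -> bytes:
    return ORDER.pack(order_id, price_ticks, qty, side)


def decode_order(buf: bytes) -> tuple:
    # Every field sits at a known offset: no delimiter scanning, no text-to-number conversion
    return ORDER.unpack_from(buf, 0)


fix_msg = "35=D" + SOH + "11=42" + SOH + "54=1" + SOH + "38=100" + SOH + "44=5012.25" + SOH
print(parse_fix(fix_msg))  # {35: 'D', 11: '42', 54: '1', 38: '100', 44: '5012.25'}
print(decode_order(encode_order(42, 501225, 100, 1)))  # (42, 501225, 100, 1)
```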

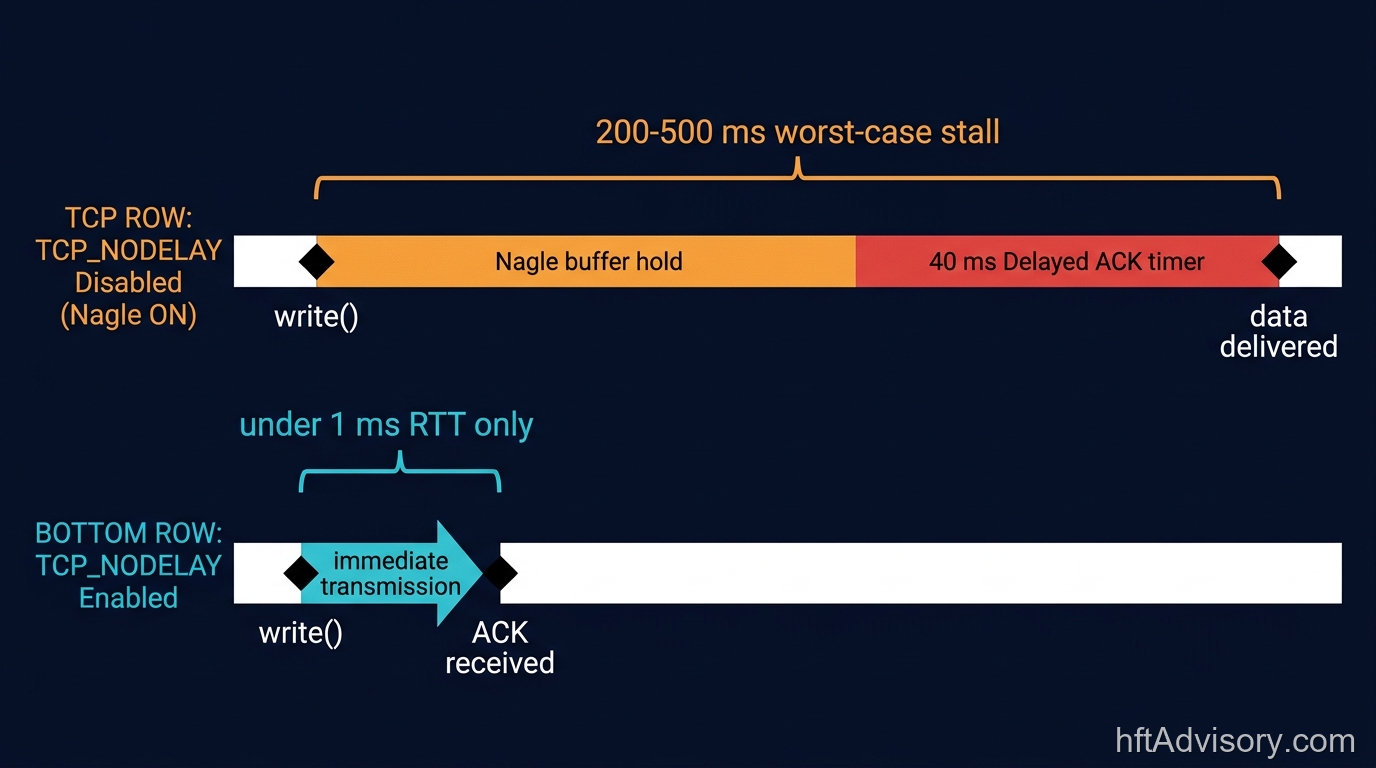

The TCP configuration penalty is a different class of problem. It is almost always a configuration oversight rather than a design decision. Without TCP_NODELAY on the socket, Nagle’s algorithm holds back a small write while previously sent data is still unacknowledged. If the receiver is delaying its ACK, that write waits for the delayed-ACK timer to expire. On Linux the timer is at least 40 milliseconds and can reach 200 milliseconds. RFC 1122 requires only that the delay stay below 500 milliseconds. A write-write-read sequence is the classic trigger, producing stalls of tens to hundreds of milliseconds depending on the peer. Any number in that range is catastrophic on a trading path. This is not a kernel programming problem. It is a socket configuration line that either exists in the deployment spec or does not.
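The fix is a single socket option, and the audit is a single read-back. This sketch uses Python's standard `socket` module; the helper names are hypothetical. It addresses only Nagle buffering on the sending side. The peer's delayed-ACK behavior is separate. Linux also offers TCP_QUICKACK, but that flag is not permanent and is not covered here.

```python
import socket


def make_order_socket() -> socket.socket:
    """Create a TCP socket for order entry with Nagle's algorithm disabled."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    # TCP_NODELAY: send small writes immediately instead of waiting for outstanding ACKs
    sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
    return sock


def nodelay_enabled(sock: socket.socket) -> bool:
    """Audit helper: confirm the option is actually set on a live socket."""
    return sock.getsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY) != 0


s = make_order_socket()
print(nodelay_enabled(s))  # True
s.close()
```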

The architectural decision point. Binary protocol adoption is no longer optional on CME paths. CME made it mandatory. On other venue paths, the question is whether the latency delta justifies migration. At 1 to 3 microseconds per message, the answer for any latency-sensitive strategy is almost always yes. The TCP_NODELAY configuration belongs in every deployment checklist as a mandatory line item, not as developer tribal knowledge.

What nobody profiles here. TCP send buffer behavior under burst conditions. Most egress profiling measures steady-state latency. Under burst order flow, TCP send buffer backpressure produces queuing latency that has nothing to do with protocol encoding. Until you have profiled egress latency at P99 under peak-flow conditions, your egress budget is not a budget. It is a steady-state average that ignores the moments that matter most.
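A steady-state average hides exactly the samples that matter. This sketch uses synthetic data for illustration only. It models 980 sends at 5 µs and 20 burst sends queued behind send-buffer backpressure at 400 µs. It then compares the mean with nearest-rank percentiles.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile for p in (0, 100]."""
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


egress_us = [5.0] * 980 + [400.0] * 20  # synthetic, not measured data
mean = sum(egress_us) / len(egress_us)
print(f"mean={mean:.2f} P50={percentile(egress_us, 50)} P99={percentile(egress_us, 99)}")
# mean=12.90 P50=5.0 P99=400.0
```

The mean suggests a modest 13 µs budget. The P99 shows that one order in fifty waits 400 µs, and those are the orders sent during the bursts.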

The 2024-2025 Venue Reset: Why Your 2022 Baseline Is Stale

Between 2024 and early 2025, three of the largest futures and equities venues made significant infrastructure changes.

CME Group migrated from iLink 2 (FIX ASCII) to iLink 3 (binary SBE/FIXP) with the iLink 2 decommission on December 31, 2024. CME also established a private co-location arrangement with Google Cloud in Aurora, Illinois, announced June 2024, with a disaster recovery target in Dallas planned for 2029.

Nasdaq moved its core trading infrastructure to AWS Outposts in production (2025), reporting low double-digit microsecond round-trip latency and approximately 10 percent improvement in round-trip times relative to prior infrastructure.

Eurex introduced FIFO with passive liquidity protection on options markets in 2024, changing execution priority algorithms per product class. Different priority models have different latency profiles and different strategic implications depending on where in the queue your orders typically sit.

These are not incremental upgrades. Each one changes the assumptions under which a latency audit was valid. If your stack was tuned to the 2022 infrastructure at any of these venues, and if your team has not re-run a systematic audit since these changes took effect, you are running a tuned car on a reconfigured track. The finish line has moved.

Moallemi and Saglam (2010) established the foundational academic case that latency enlarges adverse price moves during execution windows. BIS Working Paper No. 1115 documented 1.33 to 2.36 basis points of adverse selection costs on affected dark pool trades attributable to latency arbitrage. Those structural dynamics are not new. What is new is that the venue infrastructure generating them changed significantly in 2024, and most audit baselines have not kept pace.

The 5-Layer Latency Audit: A Diagnostic Checklist

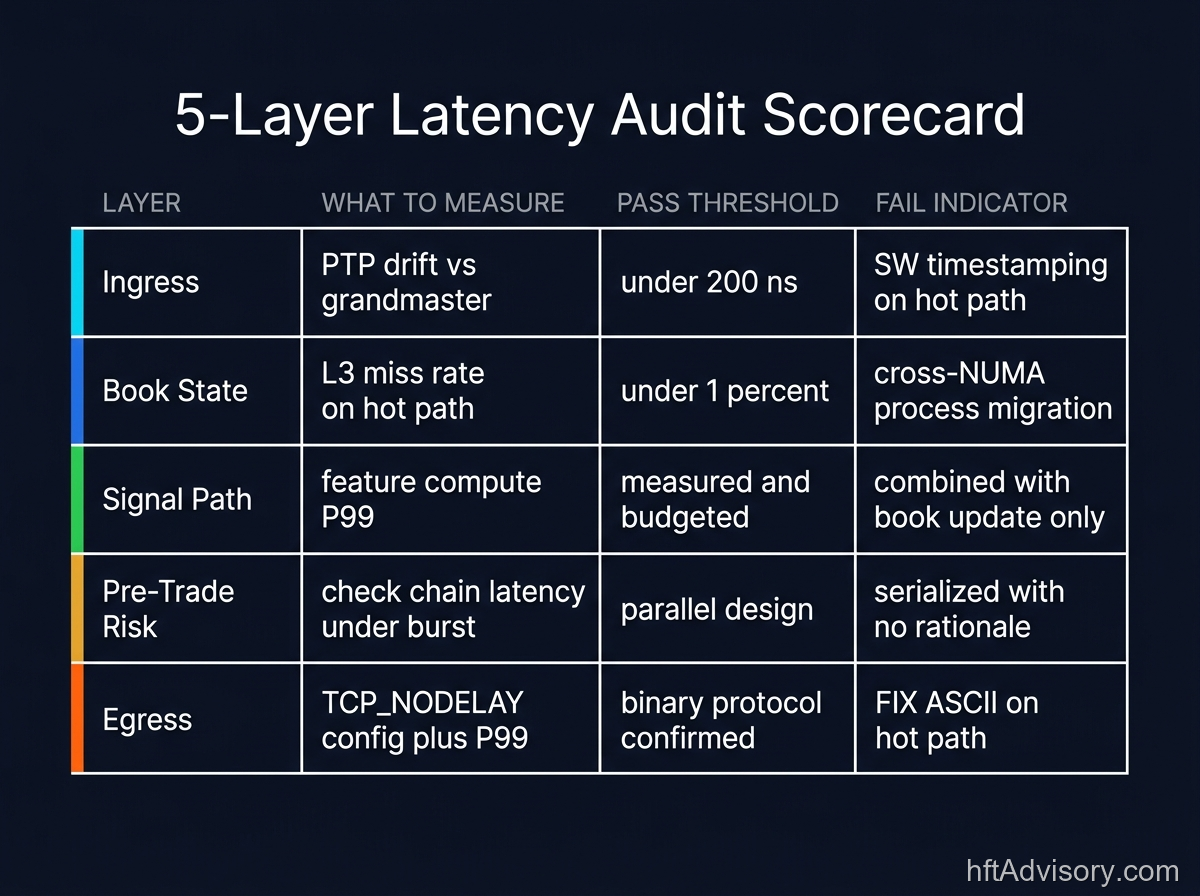

The following checklist reflects the diagnostic framework I apply at the start of every engagement. It is the “Map,” not the “Car”: it tells you where to look, not how to fix what you find. Each item identifies what to measure, what the measurement approach looks like conceptually, and what pass/fail means at the CTO level.

Layer 1 — Ingress

- What to measure: Timestamp discipline and PTP drift.

- How to measure: Compare NIC-level hardware timestamps against a PTP grandmaster reference. Measure across normal load and burst conditions separately.

- Pass: Hardware timestamping enabled and confirmed; PTP drift documented at under 200 ns under normal conditions; interrupt coalescing settings reviewed and deliberately configured.

- Fail: Software timestamping in use on the hot path; PTP configuration undocumented; ingress timestamp drift never measured as an independent variable.
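The Layer 1 pass/fail rule can be applied mechanically once offset samples exist. This sketch assumes the offsets in nanoseconds have already been extracted from the PTP daemon's monitoring output, with one list per measurement window. The sample values are synthetic. The checklist specifies the 200 ns limit only for normal conditions, so applying the same limit to the burst window is an assumption.

```python
def ptp_drift_verdict(offsets_ns, limit_ns=200):
    """Return ('PASS' | 'FAIL', worst absolute offset) for one measurement window."""
    if not offsets_ns:
        raise ValueError("no offset samples: drift has not been measured")
    worst = max(abs(o) for o in offsets_ns)
    return ("PASS" if worst < limit_ns else "FAIL"), worst


# Synthetic samples, for illustration only
normal_window = [-40, 85, 120, -150]
burst_window = [90, -260, 310]
print(ptp_drift_verdict(normal_window))  # ('PASS', 150)
print(ptp_drift_verdict(burst_window))   # ('FAIL', 310)
```

An empty window raises an error rather than passing. That matches the checklist's failure condition of drift never having been measured.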

Layer 2 — Book State

- What to measure: L3 cache hit rate and NUMA locality on order book reads.

- How to measure: Hardware performance counters (CPU PMU) measuring L3 miss rate on the order book process; NUMA statistics showing local versus remote memory allocation ratios for hot-path data.

- Pass: L3 miss rate on hot path below 1 percent; order book process confirmed pinned to cores local to its memory allocation; heap allocation absent from the hot path.

- Fail: L3 miss rate above 5 percent on the hot path; process migrating across NUMA nodes; heap allocations measurable on the hot path under production load.
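The Layer 2 thresholds can be turned into a verdict from two counter values. This sketch assumes the counts come from the CPU PMU, for example the `LLC-loads` and `LLC-load-misses` generic events in Linux `perf stat` on CPUs that expose them. The checklist leaves the band between 1 and 5 percent unspecified, so the "REVIEW" label is an assumption.

```python
def l3_verdict(llc_loads: int, llc_load_misses: int) -> tuple[str, float]:
    """Classify the hot-path L3 miss rate against the checklist thresholds."""
    if llc_loads <= 0:
        raise ValueError("no LLC load samples collected")
    rate_pct = 100.0 * llc_load_misses / llc_loads
    if rate_pct < 1.0:
        return "PASS", rate_pct
    if rate_pct > 5.0:
        return "FAIL", rate_pct
    return "REVIEW", rate_pct  # 1-5%: not specified by the checklist


verdict, rate = l3_verdict(2_000_000, 14_000)
print(f"{verdict} {rate:.2f}%")  # PASS 0.70%
```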

Layer 3 — Signal Path

- What to measure: Feature computation latency as an independent variable, separate from book update latency.

- How to measure: Instrumentation that timestamps separately the receipt of book state and the delivery of a trading vector to the order router. The delta is feature computation time.

- Pass: Feature computation latency measured and budgeted; cores pinned and interrupt-isolated; compute time documented at P50, P95, and P99 under production load.

- Fail: Feature computation and book update latency measured only as a combined number; no core pinning documentation; P99 tail not measured under production-equivalent load.

Layer 4 — Pre-Trade Risk

- What to measure: Risk-layer latency under production-equivalent concurrent load, not just isolated check latency.

- How to measure: Instrument the risk layer entry and exit timestamps under simulated burst conditions. Separate check execution latency from synchronization and dispatch overhead.

- Pass: Risk checks run in parallel where checks are logically independent; contention latency under burst measured and documented; risk-layer contribution to total hot-path latency budgeted explicitly.

- Fail: Risk checks serialized with no documented rationale; risk layer profiled only in isolation; no measurement of contention latency under burst conditions.

Layer 5 — Egress

- What to measure: Protocol encoding cost, TCP configuration, and send-buffer behavior under peak flow.

- How to measure: Measure message encoding latency separately from network transmission latency. Confirm TCP_NODELAY is set. Profile P99 egress latency under burst order flow, not steady-state averages.

- Pass: Binary protocol in use on all venue paths; TCP_NODELAY confirmed set in deployment spec; egress latency profiled at P99 under peak-flow conditions; no Nagle/delayed ACK exposure.

- Fail: FIX ASCII still in use on any latency-sensitive path; TCP_NODELAY not in deployment checklist; egress profiled only at steady-state averages; protocol configuration maintained as tribal knowledge rather than documented deployment standard.

Every Microsecond Has a P&L Line

The standard is that every microsecond on your hot path has a P&L line. If you cannot produce that line for each of these five layers, your stack is leaking budget somewhere. The biggest leak is almost always the layer nobody profiles. That is not because it is the most technically complex, but because it is the one everyone assumed was working.

The 2024-2025 venue infrastructure changes at CME, Nasdaq, and Eurex made baselines from 2022 unreliable. If your last systematic audit predates those changes, the tuning you did to the old configuration is not fully transferable to the current one.

A five-layer audit is not a one-time event. Run it as a diagnostic baseline every 12 to 18 months, and again after any significant venue infrastructure change.

If you want an independent review of where your stack sits against this framework, the Discovery Assessment is designed for exactly that conversation.

This article was originally shared as a LinkedIn post.

HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.

I help financial institutions architect high-frequency trading systems that are fast, stable, and profitable.

>> Learn more about what I do:

https://hftAdvisory.com

>> Your execution logs contain $200K+ in recoverable edge.

>> Microstructure Diagnostics — one-time audit, 3-5 day turnaround

https://hftadvisory.com/microstructure-diagnostics
