By Ariel Silahian | April 17, 2026

Table of Contents

- What the Benchmark Is Actually Measuring

- Failure Mode 1: Buying the Benchmark Stack, Not the Production Stack

- Failure Mode 2: Architecture B Sold as Architecture A

- Failure Mode 3: IP-Core Overhead Miscalculated

- Failure Mode 4: Recompile Time and the No-Rollback Reality

- Failure Mode 5: PCIe Treated as Free Bandwidth

- Failure Mode 6: Kernel-Bypass Software Paths Left Out of the Comparison

- Failure Mode 7: The Cost-Per-Fill Math Never Reaches the CTO Before the PO

- When FPGA IS the Answer: Threshold Conditions

- The Pre-PO Topology Audit: Three Questions Every CTO Should Answer Before Signing

Introduction

$5.35M to build the first venue. $4.59M per year to maintain 18 markets. The tick-to-trade profile barely moved.

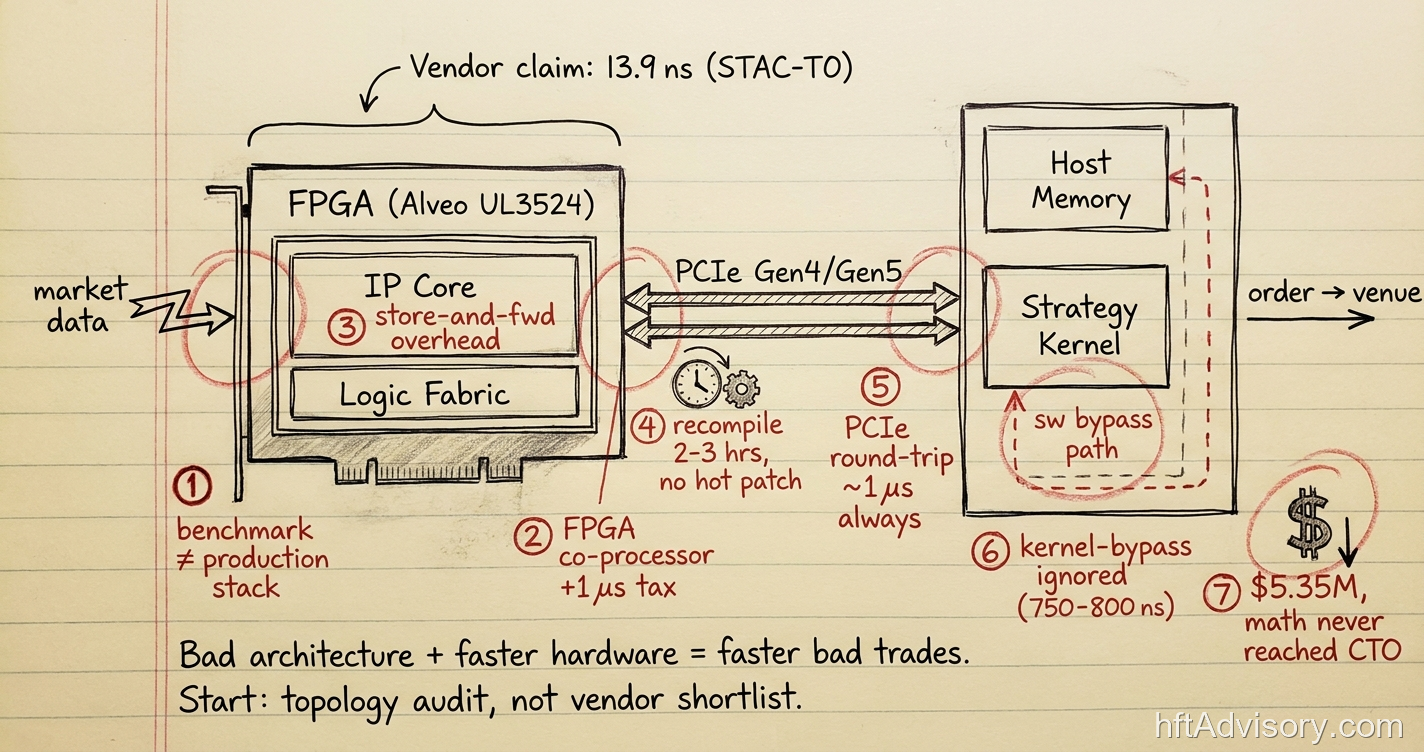

I have sat through seven variants of this conversation. A CTO walks me through a new FPGA stack (AMD Alveo UL3524, Exegy Nexus, vendor benchmarks showing 13.9 nanoseconds on STAC-T0). The hardware landed. The P&L did not follow.


This is not a hardware problem. The silicon is excellent. The problem is that the gap between a vendor benchmark and a production deployment is measured in microseconds and millions of dollars, and that gap rarely appears in any pre-purchase analysis I have been shown.

After 20 years building and auditing HFT infrastructure across Tier 1 institutional trading desks, I have documented the same seven failure modes recurring across desks regardless of the vendor, the asset class, or the capital committed. This article is not about whether FPGA is the right technology for your desk. It is about the specific architectural and procurement errors that cause FPGA programs to pay the capital cost of benchmark-class latency while delivering production-class latency, a combination that destroys the original P&L thesis.

The CTOs who avoid these failures share one common behavior: they conduct a topology audit before the purchase order is signed. Not a vendor shortlist. An audit.

What the Benchmark Is Actually Measuring

Before the failure modes, the benchmark context matters because three of the seven failures trace directly back to misreading what STAC-T0 actually measures.

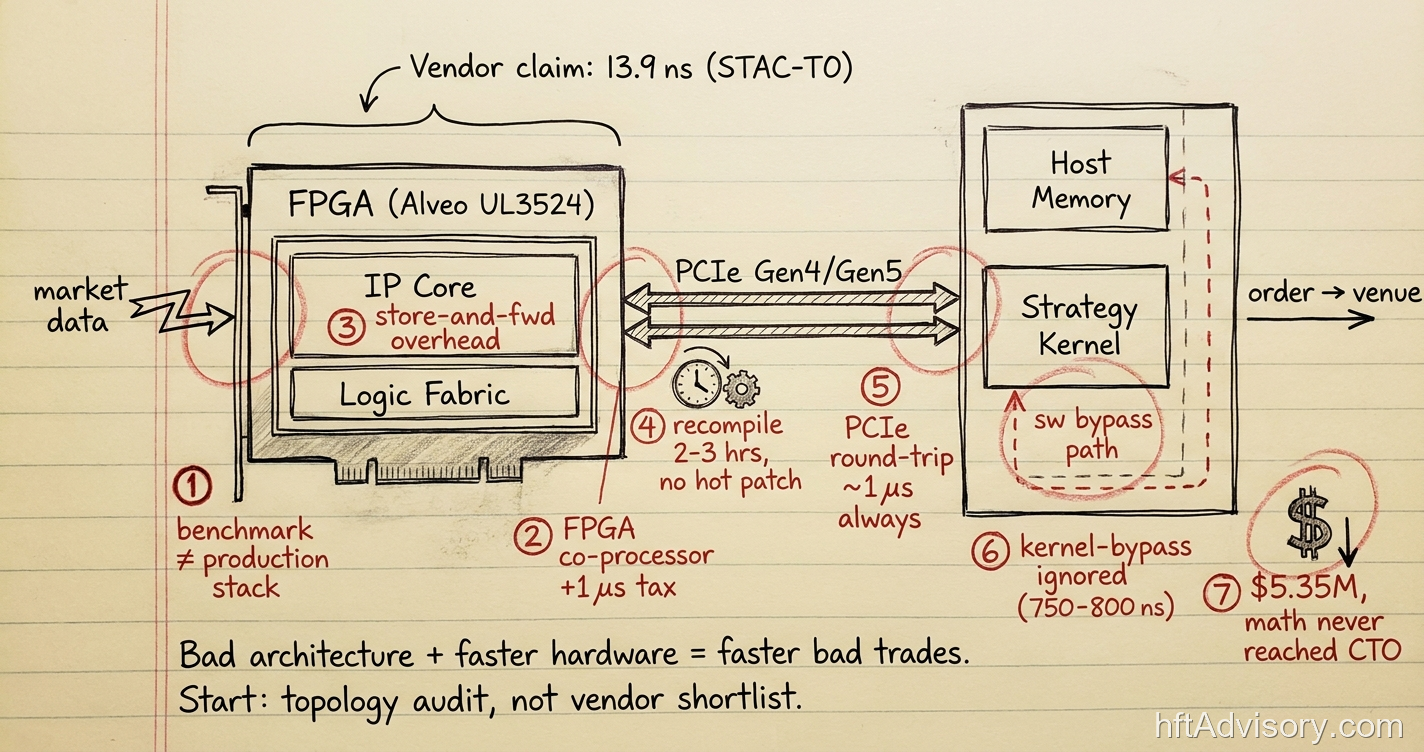

STAC-T0 measures “actionable latency”: the elapsed time from the last bit of inbound market data to the first bit of an outbound order. That definition is precise and intentional. It explicitly excludes host-side strategy logic, and the test environment is built to strip out the sources of latency that production deployments add around that path.

The 13.9 nanosecond STAC-T0 result posted in April 2024 on an AMD Alveo UL3524 with Exegy’s nxFramework (run on a Dell PowerEdge R7525 with AMD EPYC 7313) was reported as a 49% improvement over the previous 24.2 nanosecond record; on those two headline figures alone, the reduction works out to roughly 43%. Either way, it is a genuine engineering achievement. And it is measured in a configuration that no live production deployment replicates.

The AMD Alveo UL3422, released in October 2024, delivers equivalent STAC-T0 performance in a half-size form factor with GTF transceivers that reduce FPGA transceiver latency from approximately 16 nanoseconds to 2.34 nanoseconds. Again, a real advancement.

None of this is the vendor’s problem. The STAC-T0 specification is public and explicit about what it excludes. The problem is that many purchase decisions are made as if the benchmark number were the production number. It is not. The distance between those two numbers is where the seven failure modes live.

Failure Mode 1: Buying the Benchmark Stack, Not the Production Stack

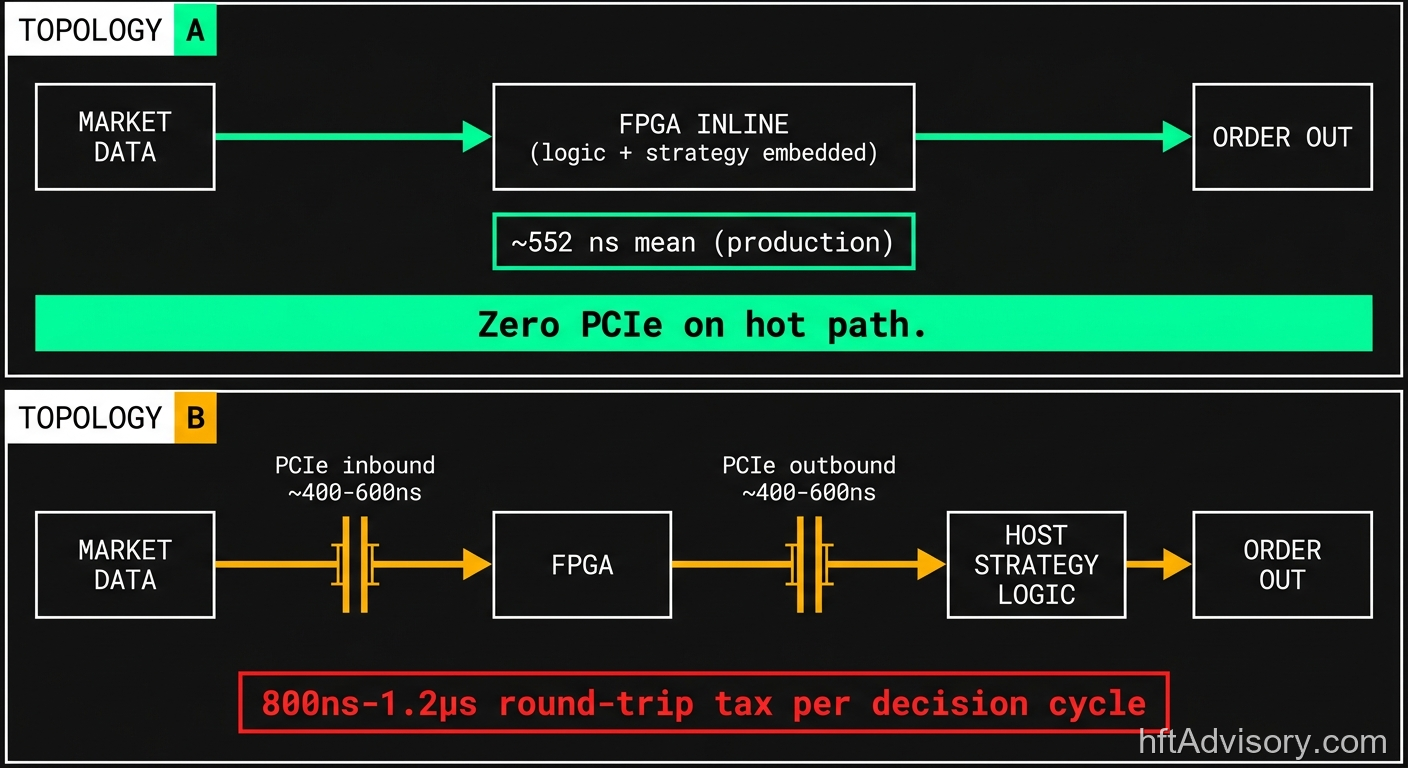

The 13.9 nanosecond STAC-T0 record requires a purpose-built implementation with zero host-side strategy logic. Every firm I have seen try to replicate that number in production has strategy logic that lives on the host. That logic requires a PCIe round-trip, and a PCIe round-trip adds approximately one microsecond to the decision cycle.

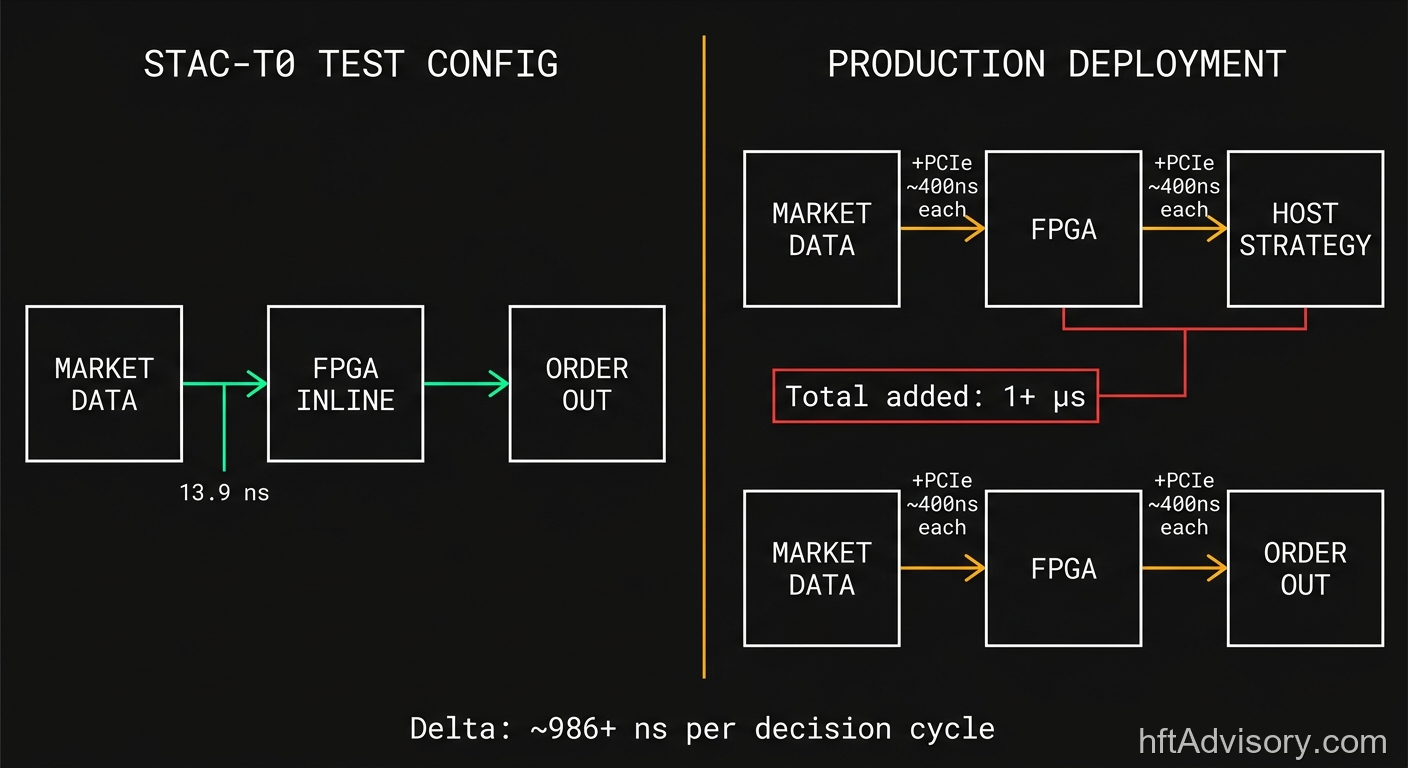

The production number for a well-implemented inline FPGA system with strategy logic embedded in the FPGA fabric is measurable. Exegy’s Xero system at CME posts 0.552 microseconds mean tick-to-trade latency with a P99 of 0.609 microseconds. That is the production benchmark for a correctly architected inline system: sub-microsecond, a significant competitive threshold even if far from the STAC record.

The desk that buys the benchmark stack and deploys it with host-side strategy logic is not buying a sub-microsecond system. They are buying a 1+ microsecond system at the capital cost of a nanosecond-class system. The economics of that trade are straightforward once you model them. They rarely get modeled before the PO is signed.
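The arithmetic behind that claim can be sketched in a few lines. This is a rough model rather than a measurement: the 13.9 nanosecond and roughly one microsecond figures come from the text above, while `host_strategy_ns` is a hypothetical parameter for host compute time, set to zero here to be generous to the host-side design.

```python
# Rough latency-budget sketch; figures are taken from the article, not measured.
FPGA_BENCHMARK_NS = 13.9      # STAC-T0 record cited above
PCIE_ROUND_TRIP_NS = 1000.0   # approximate host round-trip cited above


def production_tick_to_trade_ns(host_strategy_ns: float = 0.0) -> float:
    """Estimate tick-to-trade when strategy logic runs on the host CPU.

    host_strategy_ns is a hypothetical placeholder for host compute time.
    """
    return FPGA_BENCHMARK_NS + PCIE_ROUND_TRIP_NS + host_strategy_ns


total = production_tick_to_trade_ns()
fpga_share = FPGA_BENCHMARK_NS / total
print(f"estimated tick-to-trade: {total:.1f} ns")
print(f"FPGA share of the budget: {fpga_share:.1%}")
```

Even with zero host compute time, the benchmark-class silicon accounts for under 2% of the modeled decision cycle. The bus dominates the budget.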

If your strategy logic lives on the host CPU, the STAC-T0 number is not your number. The topology of your decision path is the only number that matters.

Failure Mode 2: Architecture B Sold as Architecture A

In my experience advising trading desks, I categorize these systems as two distinct topologies. This is my own practitioner taxonomy, not an industry-standard designation. The distinction is operationally critical, but vendor presentations rarely make it explicit.

Topology A is FPGA-as-NIC with embedded logic, inline, no PCIe on the hot path. The FPGA receives market data, executes the strategy, and sends the order without the decision cycle ever touching the host bus. Maven Securities has documented this architecture accurately: even setting aside the gains from FPGA processing itself, removing PCIe transfer delays alone reduces system latency considerably. The hot path is pure silicon.

Topology B is FPGA as host co-processor via PCIe, where the hot path crosses the bus twice: once inbound with market data, once outbound with the order. This architecture is legitimate and appropriate for certain workloads. When it is used for latency-sensitive order generation, however, it imposes a 1+ microsecond round-trip tax on every decision cycle.

The issue is that vendors often present Topology B in materials that emphasize the FPGA’s nanosecond-class internal processing speed without clearly stating that the hot path includes two PCIe crossings. The CTO approves the FPGA line item. The architecture review never surfaces which topology was actually purchased.

I have seen this exact situation at multiple desks. The engineering team knows what they bought. The CTO learns the difference when the tick-to-trade profile comes back from the first production audit.

Optiver’s CME iLink FPGA deployment is instructive here. Their first production deployment solved for TCP passthrough only: “initially only solved for a subset of the trading signals, order types, and strategies.” That staged rollout was a deliberate engineering decision to manage deployment risk. It also illustrates how far the initial production scope can be from the benchmark configuration. The gap is architectural, not incidental.

Failure Mode 3: IP-Core Overhead Miscalculated

Not all FPGA implementations are equal inside the chip, and the difference is encoded in a procurement clause that most SOWs do not contain.

FPGA Ethernet MAC implementations operate in two fundamentally different modes. Cut-through mode forwards frames as they arrive, with latency in the single-digit to low double-digit nanosecond range. Store-and-forward mode buffers the entire frame before processing, adding hundreds of nanoseconds to microseconds of overhead per hop.
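To see where the store-and-forward overhead comes from, consider only the time needed to receive a complete frame before processing can begin. The sketch below assumes a 10 Gb/s link and common Ethernet frame sizes (both are illustrative assumptions, not figures from any vendor), and it ignores preamble, inter-frame gap, and the MAC's own pipeline delay.

```python
def store_and_forward_delay_ns(frame_bytes: int, line_rate_gbps: float) -> float:
    """Time to receive a full frame before processing can start.

    Bits divided by Gbit/s yields nanoseconds directly.
    """
    return frame_bytes * 8 / line_rate_gbps


# Assumed link speed and frame sizes, for illustration only.
for size in (64, 512, 1518):
    delay = store_and_forward_delay_ns(size, line_rate_gbps=10.0)
    print(f"{size:>5} B frame at 10 Gb/s: {delay:7.1f} ns buffered before processing")
```

Under these assumptions a minimum-size frame costs about 51 nanoseconds of buffering and a full-size frame costs over 1.2 microseconds, per hop. A cut-through MAC does not wait for the end of the frame, so it avoids this term.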

Off-the-shelf vendor IP cores do not universally default to cut-through mode. The mode must be specified. If the procurement SOW does not specify cut-through mode for the Ethernet MAC, the vendor’s default selection governs, and that default may be store-and-forward for stability or compliance reasons.

I have seen desks receive FPGA deployments where the internal MAC configuration was store-and-forward and the engineering team did not discover this until they ran their own latency characterization. The hardware was exactly what the PO described. The performance was not what the benchmark implied.

The fix is contractual: specify cut-through operation for the Ethernet MAC in the SOW before signing. This is a single clause. It is the difference between nanosecond-class internal processing and hundreds of nanoseconds of overhead per packet.

Failure Mode 4: Recompile Time and the No-Rollback Reality

IMC Trading has published that FPGA compilation takes two to three hours per adjustment. That number understates the operational risk it represents.

In software, a bad deployment has a rollback path. You revert the binary, restart the process, and the system returns to its prior state in seconds. In FPGA deployments without Partial Reconfiguration (PR) built in from day one, a bad bitstream is the system state. There is no hot patch. There is no rollback. There is a two-to-three hour recompile cycle before you can attempt a correction.

AMD Vivado and Intel Quartus do support Partial Reconfiguration, which allows a portion of the FPGA to reconfigure while the remainder continues running. Research from the University of Pennsylvania has demonstrated that PR-based compilation can reduce iteration time to approximately seven minutes for modular designs. That is a meaningful improvement. The critical constraint: PR must be architected in from the initial design. It cannot be retrofitted. The freeze bridges, the controller logic, and the modular region boundaries have to be defined before the first bitstream is written.

The firms that skip PR design on the initial build because it adds scope to the first deployment pay for that decision every time they need to push a strategy adjustment in a volatile session.

To understand the failure signature, consider August 1, 2012. Knight Capital’s $460M loss in 45 minutes originated from a deployment where a technician failed to copy new code to one of eight servers. The old deprecated code path reactivated. The SEC enforcement action is public record. What is operationally significant about that event for FPGA teams is the failure signature, not the dollar figure: a deterministic system, a single deployment error, no hot-patch path, and catastrophic outcome at production speed. That failure signature is architecturally identical to an FPGA bitstream deployment failure. The system executes exactly as programmed. If what was programmed is wrong, the execution is wrong at nanosecond velocity.

The desks that have internalized this do not treat recompile time as a developer inconvenience. They treat it as a risk parameter that requires a formal deployment procedure: staged rollout, bitstream verification against a shadow environment, and a documented rollback protocol even if that rollback requires a two-to-three hour recompile window.
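One small piece of such a procedure is a pre-enable gate that refuses to start trading unless every host carries the approved bitstream, which is the class of error behind the one-of-eight-servers failure described above. The sketch below is a minimal illustration: the host names and byte strings are hypothetical, and in a real deployment the digests would come from reading back the loaded image through vendor tooling, which is not shown here.

```python
import hashlib


def bitstream_digest(data: bytes) -> str:
    """SHA-256 hex digest of a bitstream image."""
    return hashlib.sha256(data).hexdigest()


def mismatched_hosts(approved_digest: str, deployed: dict[str, str]) -> list[str]:
    """Return the hosts whose deployed digest differs from the approved one."""
    return sorted(h for h, d in deployed.items() if d != approved_digest)


# Hypothetical data: eight hosts, one still carrying the previous image.
approved = bitstream_digest(b"strategy-v2 bitstream")
stale = bitstream_digest(b"strategy-v1 bitstream")
deployed = {f"host{i}": approved for i in range(1, 9)}
deployed["host8"] = stale

bad = mismatched_hosts(approved, deployed)
if bad:
    print("HOLD: do not enable trading, stale image on", ", ".join(bad))
else:
    print("all hosts match the approved bitstream")
```

The check is cheap compared with a recompile window, and it converts a silent deployment mismatch into an explicit hold.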

Failure Mode 5: PCIe Treated as Free Bandwidth

The transition from PCIe Gen4 to Gen5 does not solve the latency problem it is sometimes sold as addressing.

Full software-visible PCIe round-trip latency (including DMA, TLB, and protocol layers) runs approximately 800 nanoseconds to over one microsecond. Gen5 reduces serialization delay at the physical layer. It does not materially reduce the protocol and DMA overhead that dominates the round-trip budget. Research published in 2024 confirms this: the effective round-trip tax on a decision cycle is not materially different between PCIe Gen4 and Gen5.

This matters for capital planning because PCIe generation upgrades are marketed with bandwidth and peak throughput improvements that are real and valuable for many workloads. For latency-critical decision cycles, the relevant number is round-trip latency under strategy load at P99, and that number does not move meaningfully between generations.

A CTO who authorizes a PCIe Gen5 upgrade expecting to reclaim a microsecond on the hot path will not find it. The topology has to change for the latency to change. Generation is not topology.
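A back-of-the-envelope model shows why. The per-lane signaling rates (16 GT/s for Gen4, 32 GT/s for Gen5) and 128b/130b encoding are standard PCIe parameters; the 64-byte payload, the x16 link width, and the treatment of the 800 nanosecond figure as a fixed, generation-independent overhead are simplifying assumptions for illustration. TLP headers and flow control are ignored.

```python
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line coding used by Gen3 and later
FIXED_OVERHEAD_NS = 800.0        # low end of the round-trip range cited above (assumed fixed)


def serialization_ns(payload_bytes: int, gt_per_s: float, lanes: int = 16) -> float:
    """Wire time for a payload; ignores TLP headers and flow control."""
    usable_gbps = gt_per_s * lanes * ENCODING_EFFICIENCY
    return payload_bytes * 8 / usable_gbps


for name, rate in (("Gen4", 16.0), ("Gen5", 32.0)):
    wire = serialization_ns(64, rate)
    # Two crossings per round-trip: inbound data, outbound order.
    print(f"{name}: wire {wire:.2f} ns per crossing, "
          f"round-trip model {FIXED_OVERHEAD_NS + 2 * wire:.1f} ns")
```

In this model, doubling the signaling rate saves about two nanoseconds across both crossings, against a fixed overhead of hundreds of nanoseconds. The generation upgrade buys bandwidth, not a shorter decision cycle.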

Failure Mode 6: Kernel-Bypass Software Paths Left Out of the Comparison

At the other end of the latency spectrum, there is a comparison that belongs in every FPGA procurement analysis and rarely appears in one.

Solarflare with TCPDirect on the X2522 achieves 828 nanoseconds (STAC-audited, 2018/2019). Standard kernel-bypass Onload sits around 1,000 nanoseconds half-round-trip per Solarflare’s own documentation. DPDK-based paths run 3 to 5 microseconds. The software kernel stack runs 50 to 100 microseconds.

If your strategy latency requirement falls in the 1 to 5 microsecond range, a kernel-bypass NIC at a fraction of the FPGA capital cost may already address the problem. The FPGA cost structure starts at over $100,000 in hardware per venue. The engineering team required to build and maintain a custom FPGA implementation (12 people, multidisciplinary, with base compensation at senior/principal level running $250,000 to $350,000 at top-tier HFT firms and total compensation well above that) is a recurring cost that the kernel-bypass alternative does not carry.

The failure mode is not buying FPGA for a 500-nanosecond requirement. The failure mode is buying FPGA for a 3-microsecond requirement without modeling whether kernel-bypass at one-tenth the capital achieves the same outcome.

The comparison belongs in the architecture decision document before the vendor shortlist is assembled. The fact that it rarely appears there is the failure.
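A first pass at that comparison can be as simple as a lookup table. The sketch below uses the latency figures cited above (taking the upper end of each range) and a purely illustrative relative capital cost, with kernel-bypass paths set to one-tenth of the FPGA figure as in the text. The cited numbers are not like-for-like measurements, so treat the output as a prompt for modeling rather than a result. `DataPath` and `cheapest_path` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DataPath:
    name: str
    latency_ns: float      # upper end of the range cited above
    relative_capex: float  # illustrative only; inline FPGA normalized to 1.0


PATHS = [
    DataPath("inline FPGA (Topology A)", 609, 1.0),  # Xero P99 at CME
    DataPath("TCPDirect", 828, 0.1),
    DataPath("Onload", 1_000, 0.1),
    DataPath("DPDK", 5_000, 0.1),
]


def cheapest_path(requirement_ns: float) -> Optional[DataPath]:
    """Lowest-capex path whose cited latency meets the requirement."""
    ok = [p for p in PATHS if p.latency_ns <= requirement_ns]
    return min(ok, key=lambda p: (p.relative_capex, p.latency_ns), default=None)


for req in (500, 700, 3_000):
    choice = cheapest_path(req)
    print(req, "ns ->", choice.name if choice else "nothing in this table qualifies")
```

With these inputs, a 3-microsecond requirement resolves to a kernel-bypass path and only the sub-microsecond requirement selects the FPGA, which is the point of running the comparison before the shortlist exists.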

Failure Mode 7: The Cost-Per-Fill Math Never Reaches the CTO Before the PO

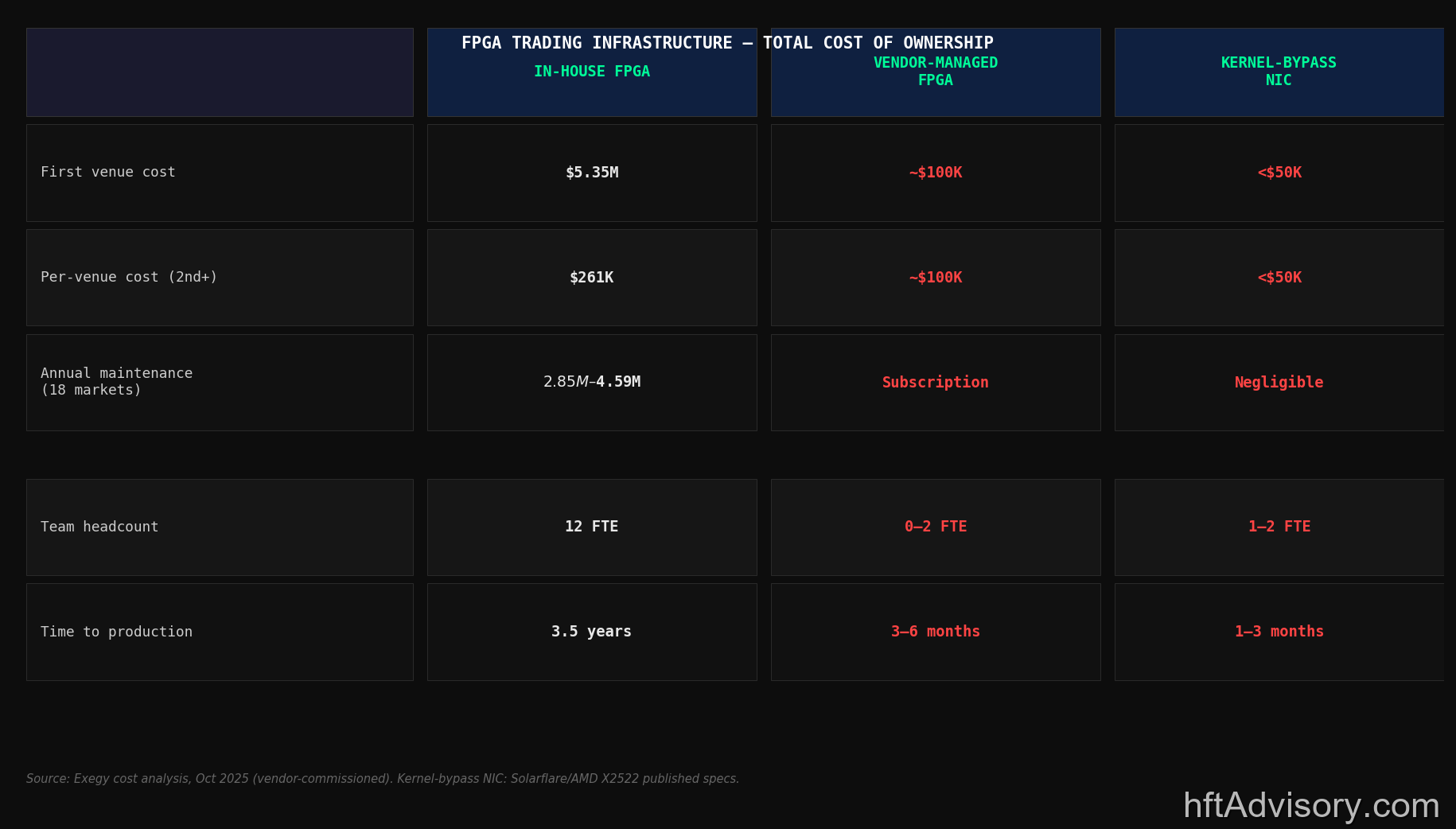

The TCO math for in-house FPGA feed handler development, drawn from Exegy’s October 2025 vendor-commissioned cost analysis, is the number that most CTOs never see before signing.

- First venue build: $5.35 million, 3.5 years.

- Full North American equities coverage (18 markets): $9.79 million, 6.5 years.

- Annual maintenance: $2.85 million to $4.59 million depending on market count and team structure.

- Per-venue cost after the first build: $261,000 in-house versus approximately $100,000 vendor per venue.

- Team required: 12 people, multidisciplinary. Senior and principal FPGA engineers at top-tier HFT firms command $250,000 to $350,000 in base compensation, with total compensation substantially above those figures.

That math exists. It is buildable before the PO is signed. In my experience, it is assembled after, usually when the program is 18 months in and the maintenance cost model has started compounding against the original business case.
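As a sanity check, the per-venue figure can be reproduced from the two build totals, and the same inputs extend to a simple multi-year view. The sketch below uses only the figures quoted above; the five-year horizon and the flat, undiscounted maintenance assumption are mine, chosen for illustration.

```python
FIRST_VENUE_BUILD_M = 5.35   # $M, first venue
FULL_COVERAGE_M = 9.79       # $M, 18 markets
MARKETS = 18
VENDOR_PER_VENUE_M = 0.100   # $M, approximate

# Incremental in-house cost of each venue after the first.
incremental_m = (FULL_COVERAGE_M - FIRST_VENUE_BUILD_M) / (MARKETS - 1)
print(f"in-house cost per additional venue: ${incremental_m * 1000:.0f}K")
print(f"ratio to vendor per-venue price: {incremental_m / VENDOR_PER_VENUE_M:.1f}x")


def cumulative_cost_m(years: int, annual_maintenance_m: float) -> float:
    """Build cost plus flat annual maintenance; ignores discounting and ramp-up."""
    return FULL_COVERAGE_M + years * annual_maintenance_m


# Assumed five-year horizon at the low and high ends of the maintenance range.
for maint in (2.85, 4.59):
    print(f"5-year cost at ${maint}M/yr maintenance: ${cumulative_cost_m(5, maint):.2f}M")
```

The incremental figure comes out at about $261K per additional venue, matching the number above. Over a multi-year horizon the maintenance line, not the build cost, is the dominant term.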

The Selby Jennings hiring market data adds relevant context: most FPGA engineers in trading are on their first or second role. Retention is driven by high compensation, greenfield work, and non-competes. Engineers enter the open market and get placed within six hours. The CHIPS Act has simultaneously created demand competition between HFT, defense, semiconductor, and hyperscalers for the same talent pool. The talent cost and availability assumptions embedded in the original program business case need to be stress-tested against current market conditions, not the conditions that prevailed when the program was conceived.

Throwing more hardware at a bad architecture makes bad trades faster. It does not change the P&L outcome. The architecture review and the TCO model belong before the vendor shortlist, not after.

When FPGA IS the Answer: Threshold Conditions

The seven failure modes above do not constitute an argument against FPGA. They constitute an argument for deploying it correctly against the right problem.

FPGA is clearly the correct architecture when the strategy latency requirement is sub-microsecond and the strategy logic can be embedded directly in the FPGA fabric (Topology A, no PCIe on the hot path). Exegy’s Xero system at CME demonstrates 0.552 microseconds mean and 0.609 microseconds P99 in that configuration. That is a real production benchmark from a correctly architected system.

FPGA also provides deterministic execution guarantees that no software path replicates. The same determinism that creates the deployment risk (no hot patch) also creates the latency predictability (no GC pauses, no OS scheduler interference, no hypervisor tax). For strategies where P99 stability matters as much as mean latency, that guarantee has value independent of the mean latency number.

The talent pipeline is real and growing. Jane Street’s Advent of FPGA competition in 2025 drew 213 submissions from 46 countries, 59% from students. The global FPGA market was $9.93 billion in 2025 with a 9.35% projected CAGR to 2031. The ecosystem is developing.

FPGA is the answer when the TCO model justifies it, the topology matches the latency requirement, and the deployment and maintenance disciplines are treated as first-class requirements from day one of the program. When those conditions are met, it is an excellent answer. The seven failure modes above describe what happens when any one of those conditions is absent.

The Pre-PO Topology Audit: Three Questions Every CTO Should Answer Before Signing

After seven variants of the post-mortem conversation, the pattern is consistent: the decisions that determine the program’s success or failure are made before procurement, and they are rarely documented as explicit decisions. They default into the vendor’s recommended configuration.

Three questions form the core of the pre-PO topology audit I run with every desk considering an FPGA program.

Question 1: Is the architecture inline or co-processor?

Map the decision cycle on a whiteboard before opening a vendor pitch. Where does the strategy logic live today? Where will it live after deployment? If the answer after deployment is “on the host CPU,” trace the PCIe round-trip into the latency model. At 800 nanoseconds to 1+ microsecond per round-trip at P99 under load (one inbound crossing plus one outbound), the bus alone consumes 0.8 to 1+ microseconds before the FPGA’s nanosecond-class processing contributes anything measurable to the outcome. That is your production latency floor, not the STAC-T0 number.

Question 2: What is the modeled PCIe round-trip at P99 under strategy load?

If the architecture requires PCIe crossings on the hot path, the round-trip latency at P99 under realistic strategy load needs to be measured in a pre-production environment, not assumed from Gen4 or Gen5 marketing materials. The protocol and DMA overhead that dominates round-trip latency does not improve materially between PCIe generations. The only path to reducing this number is reducing the number of crossings, which is a topology decision, not a component selection decision.
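Measuring at P99 rather than at the mean matters because a slow tail barely moves the average. The sketch below computes a nearest-rank percentile over synthetic round-trip samples; the sample values are invented for illustration, and `percentile_nearest_rank` is a hypothetical helper, not part of any measurement tool.

```python
import math


def percentile_nearest_rank(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of data at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Synthetic round-trip samples in nanoseconds: mostly 850 ns with a slow tail.
samples = [850.0] * 97 + [1200.0, 1500.0, 2100.0]
mean = sum(samples) / len(samples)
print(f"mean {mean:.1f} ns, P50 {percentile_nearest_rank(samples, 50):.0f} ns, "
      f"P99 {percentile_nearest_rank(samples, 99):.0f} ns")
```

In this synthetic set the mean is 872.5 nanoseconds while P99 is 1,500 nanoseconds. A latency model built on the mean would understate the tail by a wide margin.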

Question 3: What is the bitstream deployment and rollback procedure, and has Partial Reconfiguration been architected in from day one?

If the answer to the rollback question is “recompile” and the estimated recompile cycle is two to three hours, the desk needs a staged rollout protocol and a shadow environment for bitstream validation before that cycle is acceptable in production. If the program is already past the initial design phase, the PR retrofit question has one answer: it cannot be retrofitted. The operational risk model has to account for the two-to-three hour window as a permanent constraint. If the program is in design, PR support should be in the SOW as a hard requirement.

These three questions do not require a consultant to answer. They require the relevant parties in the room (trading strategy, engineering, and the CTO) before the vendor shortlist is assembled, not after the hardware arrives.

The standard for a correctly architected inline FPGA system is sub-microsecond tick-to-trade at P99. If your architecture cannot produce that number in production, the $5.35 million first venue cost and the $2.85 to $4.59 million annual maintenance structure are funding a topology that may not justify them.

Conclusion

The FPGA market is maturing, the silicon is excellent, and the benchmark records are real. The gap between benchmark performance and production performance is an architecture and procurement problem, and it is solvable.

The desks that close that gap do not start with a better vendor shortlist. They start with a topology audit that answers the three questions above before any vendor conversation begins. The hardware selection is the last decision in that sequence, not the first.

If your tick-to-trade profile did not move after your last FPGA deployment, the architecture review should come before the next hardware cycle.

Originally shared as a LinkedIn post.

About the Author: Ariel Silahian has 20+ years of experience building and auditing HFT infrastructure across Tier 1 institutional trading desks. He is the creator of VisualHFT, an open-source real-time market microstructure analysis platform.

HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.
