Table of Contents

- The Misdiagnosis Pattern

- What End-to-End Metrics Hide

- The Five Layers of LOB Latency

- What a Proper Independent LOB Profiling Stack Looks Like

- The P&L Translation

- The Exchange Upgrade Context

- Five-Layer LOB Diagnostic Checklist

- Conclusion

Introduction

267 million nanoseconds, or roughly 267 ms. That is what a cold-cache limit order book costs you on the hot path in a benchmark run. This is not a steady-state figure: it is a benchmark diagnostic condition that reveals where a cold LOB sits before any cache optimization has been applied. Most matching engine rebuilds never surface it, because most teams are not measuring the right layer.

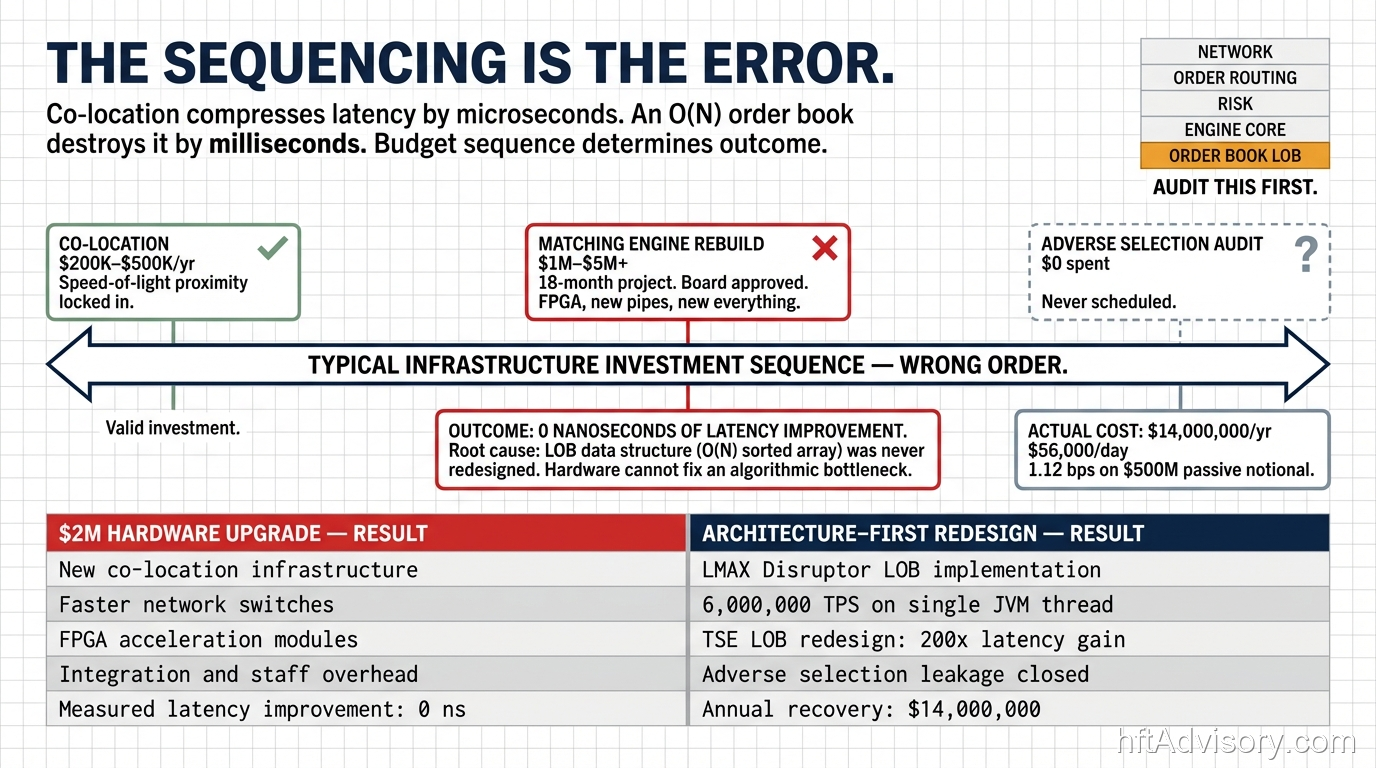

A $700M AUM firm brought my team in after two complete rebuilds. Eight months of engineering. Board approval on both rounds. The latency profile barely moved. When my team profiled the full stack, the matching engine was not the bottleneck. The LOB layer was — and it had never been independently measured.

In 20+ years building production HFT infrastructure, I have watched this exact misdiagnosis repeat at the $500M–$1B AUM range. The matching engine is the most visible component, the most politically defensible rebuild target, and the least likely actual source of the problem once a system reaches production scale. The limit order book layer sits beneath it, unmonitored, accumulating latency that blended metrics can never attribute.

This article breaks down why that misdiagnosis happens, what it costs, and what a proper five-layer LOB diagnostic framework looks like — the one my team applies before any infrastructure recommendation is made.

The Misdiagnosis Pattern

The matching engine is the natural target when fill quality degrades and adverse selection worsens. It is well-documented, architecturally clean to rebuild, and easy to present to a board. The LOB layer is none of those things. It is deeply coupled to memory architecture, thread scheduling, and NUMA topology — and its latency contribution only becomes visible under the exact conditions where it matters most: elevated load, concentrated order flow, and the milliseconds preceding an adverse fill.

The pattern I see at this AUM range follows a predictable sequence. Fill quality degrades. Post-mortem identifies latency. Engineering proposes a matching engine rebuild. Board approves. Rebuild executes. Latency profile does not materially improve. Second rebuild is proposed. Second board approval. Same result.

The $700M AUM firm is not an outlier. It is a case study in what happens when monitoring is designed around the component that is easiest to instrument rather than the component that is actually the constraint.

The matching engine gets rebuilt. The LOB layer stays un-profiled. The adverse fills continue.

What End-to-End Metrics Hide

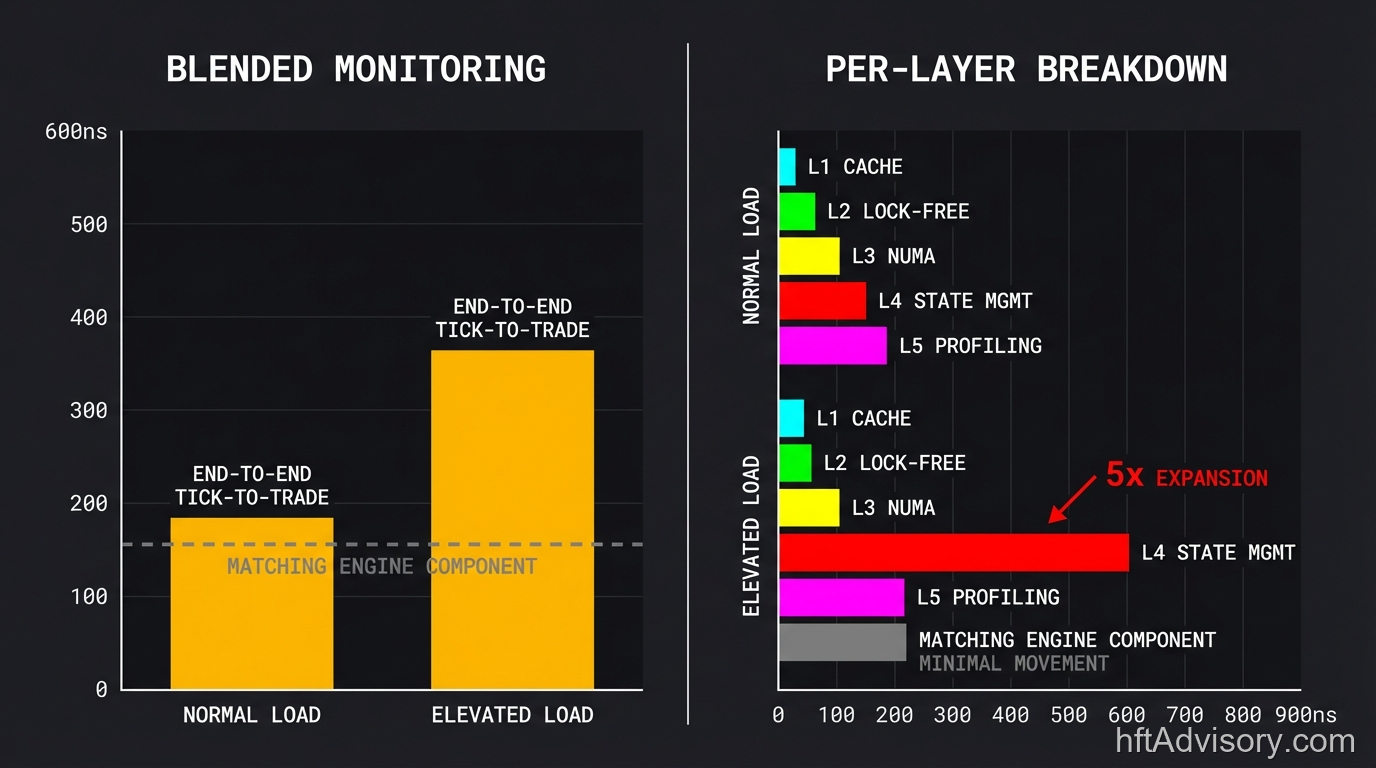

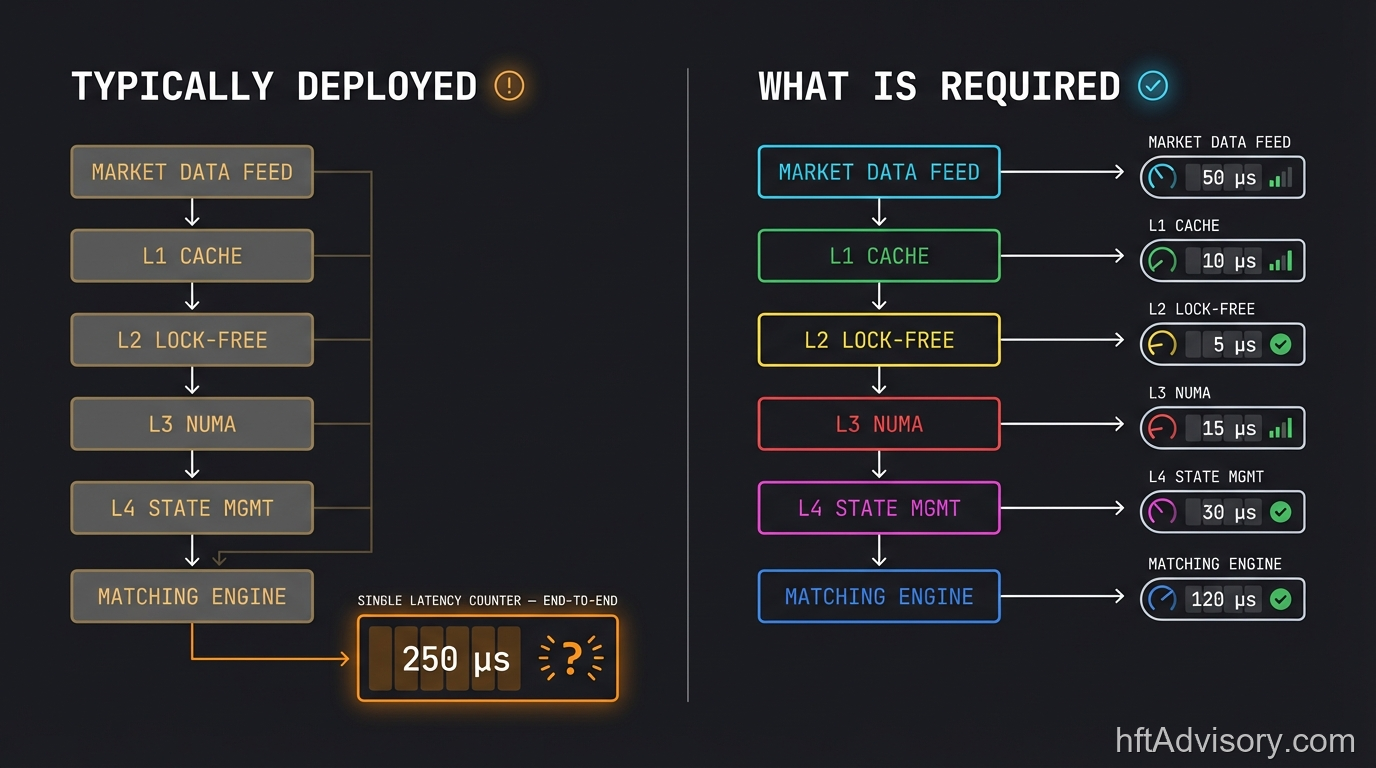

End-to-end tick-to-trade latency is the right metric for regulatory reporting and executive dashboards. It is the wrong metric for diagnosing which infrastructure layer is the constraint.

The problem is averaging. A blended latency number combines matching engine processing time, LOB state management time, network path time, and OS scheduling jitter into a single figure. When LOB contention spikes under load, that spike is absorbed into the aggregate. The matching engine variance line stays clean. The LOB variance is invisible.

CME Globex measures this correctly: median inbound latency sits at 52 microseconds, with a 95th percentile spread of 39 microseconds. CME uses per-operation nanosecond timestamps for LOB operations as independent metrics — not blended with matching engine processing. That separation exists because the LOB layer moves independently of the matching engine, and blending the two destroys diagnostic signal.

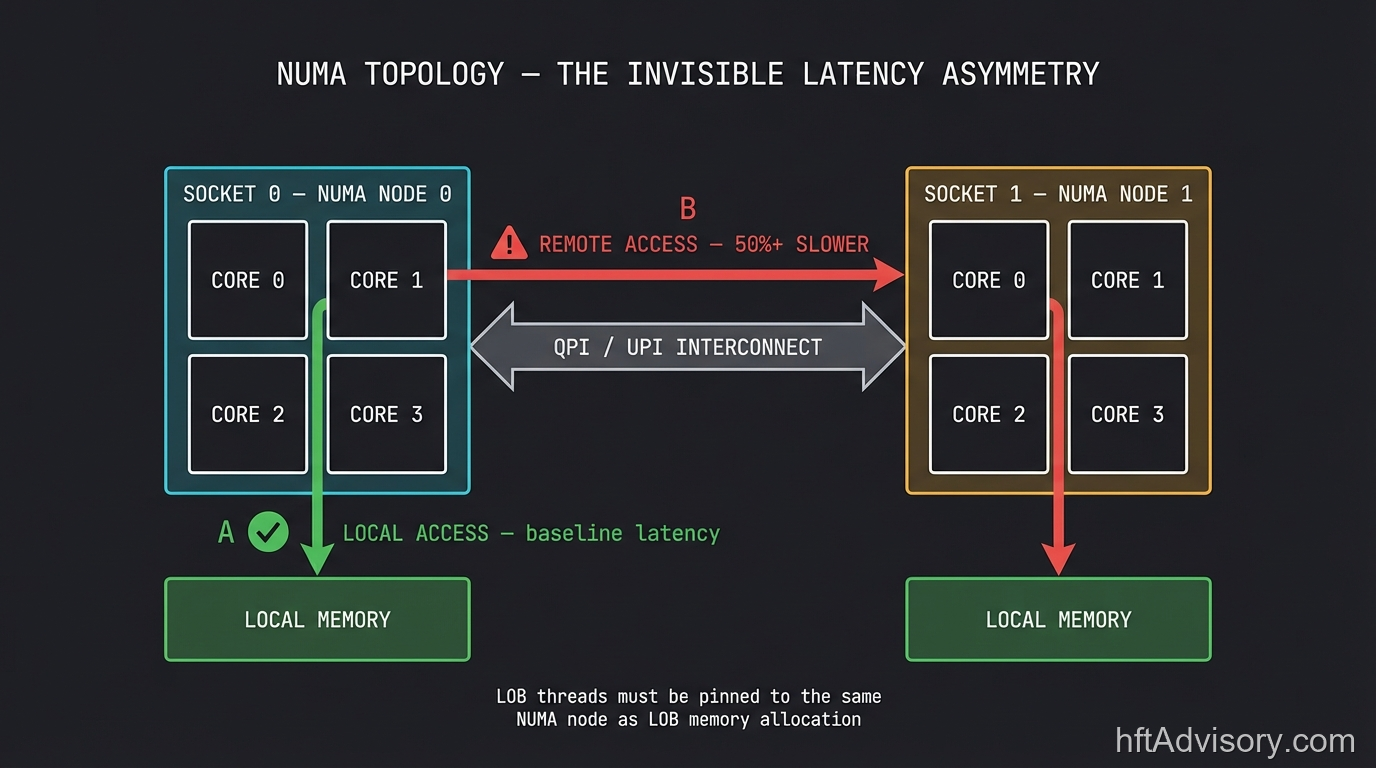

NUMA effects make this worse. NUMA topology creates a bimodal latency distribution — fast local access, slow remote access — that completely disappears inside blended metrics. Remote NUMA access consistently runs 50%+ slower than local. In the majority of systems my team reviews at this AUM range, the NUMA penalty is invisible in production monitoring: it appears as unexplained variance in matching engine performance, misattributed to the matching engine itself, when the actual source is memory access topology the matching engine cannot control.

If your monitoring shows end-to-end tick-to-trade latency but not LOB update latency as an independent variable, you are measuring the symptom, not the source.
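To make the averaging problem concrete, here is a minimal Python sketch with made-up numbers. The per-layer latencies, the 2% spike rate, and the `p99` helper are illustrative assumptions, not measurements from any system described here. Python is used only for offline analysis of captured samples, not for anything on the hot path.

```python
from statistics import mean, quantiles


def p99(samples):
    """99th percentile using the 'inclusive' method of statistics.quantiles."""
    return quantiles(samples, n=100, method="inclusive")[98]


# Hypothetical per-event latencies in nanoseconds (illustrative values only).
# 1,000 events; the LOB layer spikes by 500 ns on 2% of them.
matching_ns = [900] * 1000
network_ns = [4000] * 1000
lob_ns = [300] * 980 + [800] * 20

# The blended figure is what an end-to-end dashboard would report.
tick_to_trade_ns = [m + n + l for m, n, l in zip(matching_ns, network_ns, lob_ns)]

blended_mean = mean(tick_to_trade_ns)
lob_median = quantiles(lob_ns, n=2, method="inclusive")[0]
lob_p99 = p99(lob_ns)

print(f"blended tick-to-trade mean: {blended_mean} ns")
print(f"LOB median: {lob_median} ns, LOB p99: {lob_p99} ns")
```

In this toy data set the spike moves the blended mean from 5,200 ns to 5,210 ns, a change that is easy to dismiss as noise, while the LOB layer's own p99 sits at 800 ns against a 300 ns median.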

The Five Layers of LOB Latency

These five layers each contribute independently to LOB latency. Each one is independently measurable. Each one is invisible in blended matching engine metrics.

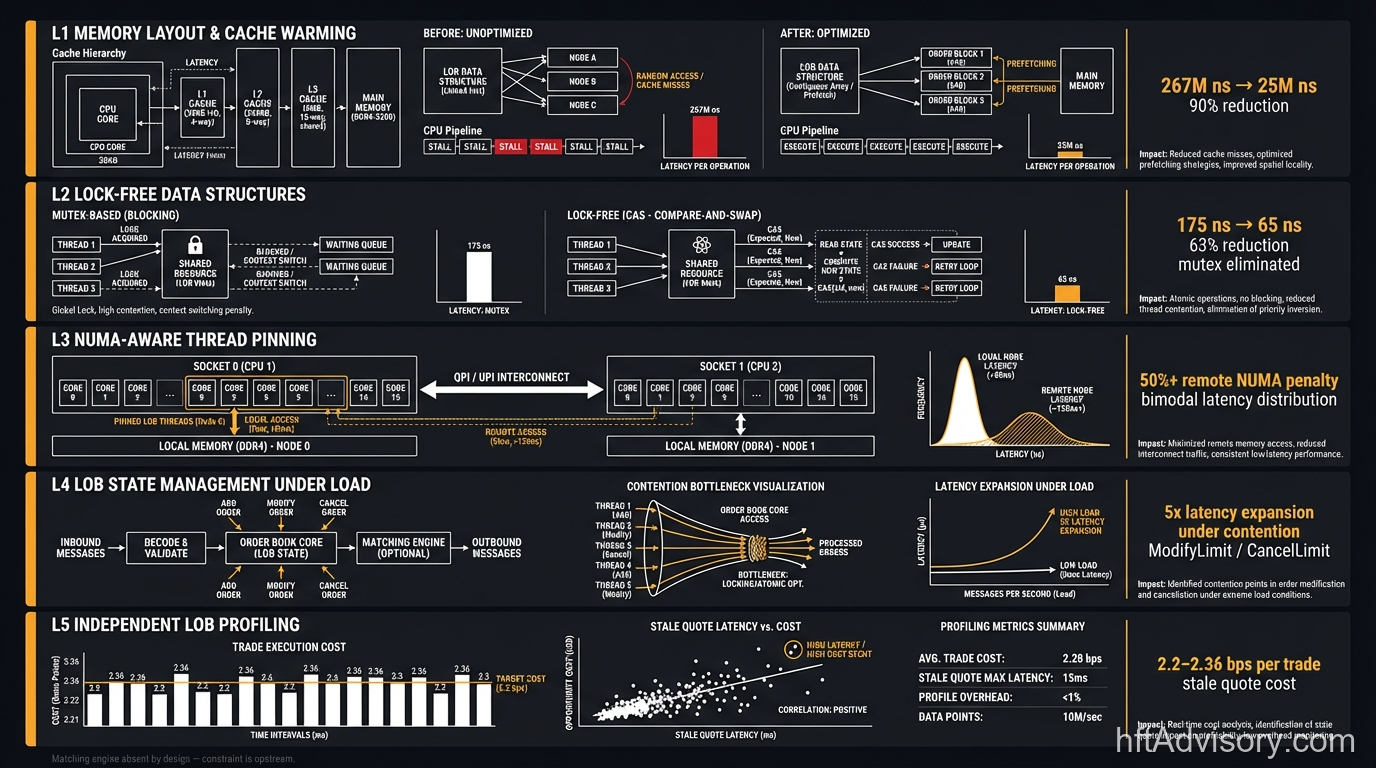

Layer 1: Memory Layout and Cache Warming

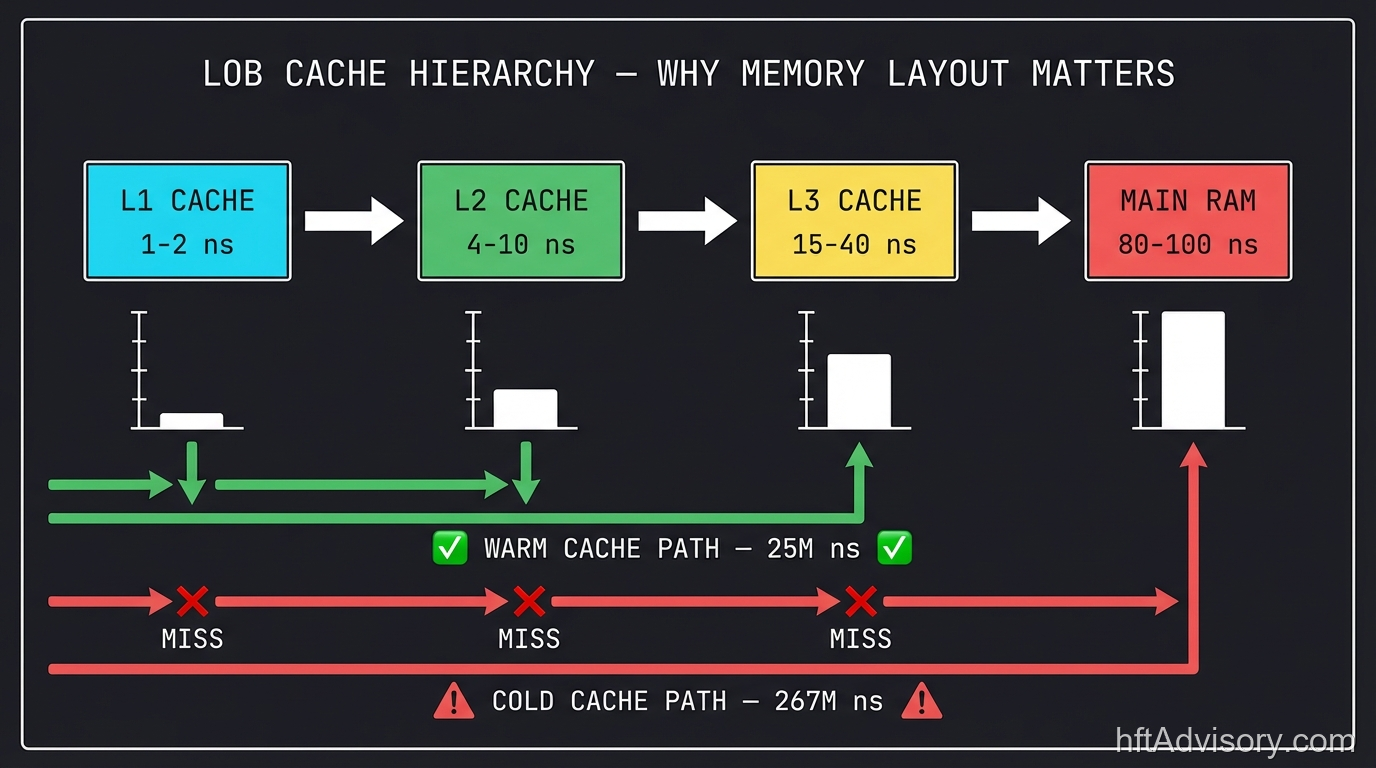

The 267M ns cold-cache figure is a diagnostic benchmark, not a production steady-state number. It represents a fully unwarmed LOB generating cascading RAM fetches. With L1 cache at roughly 1–2 ns per access, L2 at 4–10 ns, L3 at 15–40 ns, and RAM at 80–100 ns, a cold LOB traversal is not a single miss; it is a cascade of misses across the entire cache hierarchy.

A properly warmed cache brings that 267M ns figure down to approximately 25M ns — a 90% reduction (Bilokon and Gunduz, arXiv:2309.04259, 2023). That improvement requires no matching engine changes. It requires no architectural rebuild. It requires disciplined cache warming on the hot path and memory layout that keeps the active LOB resident in L3. When the LOB is not resident in cache, the matching engine is a bystander to a memory access problem it cannot see and cannot solve.
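The cache arithmetic can be sketched as an expected cost per memory access. This is a simplified model, assuming each access costs only the latency of the level that serves it. The latencies are the midpoints of the ranges quoted above, and the `cold` and `warm` hit profiles are hypothetical values chosen for illustration.

```python
# Midpoints of the per-level latency ranges quoted above, in nanoseconds.
LEVEL_LATENCY_NS = {"L1": 1.5, "L2": 7.0, "L3": 27.5, "RAM": 90.0}


def expected_access_ns(served_fraction):
    """Expected latency per access, given the fraction served by each level."""
    if abs(sum(served_fraction.values()) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    return sum(LEVEL_LATENCY_NS[level] * frac
               for level, frac in served_fraction.items())


def reduction(before, after):
    """Fractional reduction going from `before` to `after`."""
    return 1.0 - after / before


# Hypothetical profiles: every access falls through to RAM vs. a warmed book.
cold = {"L1": 0.0, "L2": 0.0, "L3": 0.0, "RAM": 1.0}
warm = {"L1": 0.80, "L2": 0.10, "L3": 0.08, "RAM": 0.02}

cold_ns = expected_access_ns(cold)
warm_ns = expected_access_ns(warm)

print(f"cold: {cold_ns:.1f} ns/access, warm: {warm_ns:.1f} ns/access")
print(f"modelled reduction: {reduction(cold_ns, warm_ns):.1%}")
print(f"benchmark reduction: {reduction(267e6, 25e6):.1%}")
```

Under these assumed fractions the expected cost per access falls from 90 ns to about 5.9 ns. The benchmark figures quoted above (267M ns cold, 25M ns warm) work out to a reduction of roughly 90.6%.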

Layer 2: Lock-Free Data Structures

Mutex-protected queues in the LOB layer carry approximately 175 ns overhead for a 10,000-increment sequence. Atomic lock-free operations bring that to approximately 65 ns — a 63% reduction (Bilokon and Gunduz, arXiv:2309.04259, 2023). The matching engine can be as fast as the hardware allows. The mutex still wins. Lock contention in the LOB layer originates in data structure design — it surfaces as matching engine variance, but the matching engine cannot fix it.

The distinction matters because mutex contention is not visible in matching engine benchmarks run under synthetic load. Contention emerges under real market conditions — concentrated order flow, rapid quote refresh, elevated cancel-replace activity. The benchmark looks clean. Production degrades.

Layer 3: NUMA-Aware Thread Pinning

NUMA topology creates a hardware-level latency asymmetry that no software optimization above the OS layer can fully compensate for. Remote NUMA access consistently runs 50%+ slower than local access. In a multi-socket server architecture — which describes most production HFT deployments at this AUM range — thread placement relative to memory allocation is a performance determinant, not an implementation detail.

The diagnostic signature is a bimodal latency distribution: two peaks, one for local NUMA accesses, one for remote. Blended tick-to-trade metrics flatten this into a single distribution with unexplained variance. My team’s standard diagnostic includes a NUMA topology map against thread affinity assignments on day one, because the fix — correct thread pinning and memory policy configuration — takes hours, not months, once the problem is located.
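A first-pass version of that topology-versus-affinity check can be sketched in a few lines. The node layout, thread names, and CPU assignments below are hypothetical. On Linux, the cpulist strings would typically be read from `/sys/devices/system/node/node<N>/cpulist`, and a process's allowed CPUs from `os.sched_getaffinity`; here they are hard-coded so the sketch runs anywhere.

```python
def parse_cpulist(text):
    """Parse a Linux cpulist string such as '0-3,8-11' into a set of CPU ids."""
    cpus = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            lo, hi = part.split("-")
            cpus.update(range(int(lo), int(hi) + 1))
        else:
            cpus.add(int(part))
    return cpus


def misplaced_threads(node_cpulists, thread_affinity, memory_node):
    """Names of threads whose allowed CPUs are not all on the memory's node."""
    local_cpus = parse_cpulist(node_cpulists[memory_node])
    return sorted(name for name, cpus in thread_affinity.items()
                  if not set(cpus) <= local_cpus)


# Hypothetical two-socket layout and thread pinning (illustrative only).
node_cpulists = {0: "0-7,16-23", 1: "8-15,24-31"}
thread_affinity = {
    "lob_update": {2},
    "lob_snapshot": {9},      # pinned to a core on node 1
    "md_decode": {3, 19},
}

# Assume the LOB data structures were allocated on node 0.
print(misplaced_threads(node_cpulists, thread_affinity, memory_node=0))
```

Here `lob_snapshot` is flagged because CPU 9 belongs to node 1 while the LOB memory is assumed to live on node 0. Every access that thread makes to the book is a remote access.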

Layer 4: LOB State Management Under Load

Under elevated load — precisely when alpha capture matters most — ModifyLimit and CancelLimit operation latencies expand by 5x or more. That variance does not originate in the matching decision. It originates in LOB state management under contention: the cost of traversing and updating price-level data structures when order density is high and concurrent modification is occurring.

This is the layer that directly explains the adverse fill patterns that drive matching engine rebuild proposals. The fills happen under load. The LOB state management degrades under load. The matching engine, which executes correctly and quickly, is handed stale or delayed state to work with. The post-mortem attributes the fills to the matching engine. The LOB layer is never examined.

Layer 5: Independent LOB Profiling

The only way to verify the above four layers is to instrument the LOB independently of the matching engine — separate performance counters, separate latency distributions, separate alerting thresholds. This is not how most firms at this AUM range build their monitoring stacks.

The firm at the top of this article had never profiled the LOB layer independently. When my team isolated it, contention-driven variance was adding 400–500 ns under the conditions that preceded their adverse fills. That number was present in every production run. It was invisible in every post-mortem.
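The shape of independent instrumentation can be sketched as a recorder that keeps one latency distribution per LOB operation, separate from any tick-to-trade number. The `LobLatencyRecorder` class and the dictionary standing in for an order book are hypothetical. A production system would capture timestamps in native code at the LOB boundary, since Python's own overhead is far larger than the latencies discussed here.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class LobLatencyRecorder:
    """Collects latency samples per LOB operation, in nanoseconds."""

    def __init__(self, clock=time.perf_counter_ns):
        self._clock = clock                   # injectable for testing
        self.samples_ns = defaultdict(list)   # operation name -> samples

    @contextmanager
    def measure(self, operation):
        start = self._clock()
        try:
            yield
        finally:
            # Record even if the wrapped operation raises.
            self.samples_ns[operation].append(self._clock() - start)


recorder = LobLatencyRecorder()
book = {}  # toy stand-in for a real order book: order_id -> (price, qty)

with recorder.measure("AddOrder"):
    book[1] = (100.25, 10)
with recorder.measure("ModifyLimit"):
    book[1] = (100.25, 5)
with recorder.measure("CancelLimit"):
    del book[1]

for operation, samples in recorder.samples_ns.items():
    print(operation, samples)
```

The point of the design is the keying: each operation type gets its own sample list, so ModifyLimit and CancelLimit variance can be alerted on without being averaged into AddOrder or into the matching engine's numbers.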

What a Proper Independent LOB Profiling Stack Looks Like

The matching engine and the LOB layer must be instrumented as separate subsystems with separate latency budgets, separate alerting thresholds, and separate variance baselines. This is the prerequisite for any meaningful infrastructure diagnosis.

A proper independent LOB profiling stack includes:

Per-operation timestamps. LOB operations — AddOrder, ModifyLimit, CancelLimit, LOB snapshot — each need nanosecond-resolution timestamps captured at the LOB layer boundary, not at the tick-to-trade boundary. This is the same approach CME uses for Globex LOB instrumentation.

Cache residency tracking. Hardware performance counters (available via perf_events on Linux, VTune on Intel) expose L3 miss rates per thread and per code region. LOB traversal miss rates are a direct indicator of cache warming effectiveness. This data must be captured during production runs, not only during synthetic benchmarks.

NUMA access telemetry. Linux numastat and Intel VTune both provide per-NUMA-node memory access breakdowns. A properly instrumented system tracks the ratio of local to remote NUMA accesses for LOB operations continuously — not as a one-time diagnostic.

Load-stratified latency distributions. LOB operation latency should be measured separately across three load regimes: baseline (below 30th percentile order flow), elevated (60th–90th percentile), and peak (above 90th percentile). The 5x latency expansion under load is not visible if distributions are averaged across all conditions.

Independent variance baselines. The LOB layer needs its own latency baseline established under controlled conditions, independent of matching engine benchmarks. When production variance exceeds the baseline by a defined threshold, the alert fires against the LOB layer — not against tick-to-trade latency.

Without these five instrumentation elements, every post-mortem starts from blended data that cannot isolate the source. With them, the $700M firm’s 400–500 ns contention-driven variance would have been visible in the first production run.
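The load-stratified element lends itself to a short sketch. The regime thresholds follow the percentiles given above; the synthetic events, the `synthetic_latency_ns` function, and the "unclassified" label for order flow between the 30th and 60th percentiles (a band the three regimes do not cover) are assumptions made for illustration.

```python
from statistics import median, quantiles


def percentile(samples, pct):
    """pct-th percentile (1..99) using the inclusive method."""
    return quantiles(samples, n=100, method="inclusive")[pct - 1]


def classify_regime(flow, p30, p60, p90):
    if flow < p30:
        return "baseline"
    if flow > p90:
        return "peak"
    if flow >= p60:
        return "elevated"
    return "unclassified"  # 30th-60th band: not assigned to a regime above


def stratify(events):
    """Group latencies by load regime. events: list of (order_flow, latency_ns)."""
    flows = [flow for flow, _ in events]
    p30, p60, p90 = (percentile(flows, p) for p in (30, 60, 90))
    buckets = {}
    for flow, latency_ns in events:
        regime = classify_regime(flow, p30, p60, p90)
        buckets.setdefault(regime, []).append(latency_ns)
    return buckets


def synthetic_latency_ns(flow):
    """Made-up latency profile: 200 ns normally, 1,000 ns at the highest flow."""
    if flow > 90:
        return 1000
    if flow > 60:
        return 400
    return 200


events = [(flow, synthetic_latency_ns(flow)) for flow in range(1, 101)]
buckets = stratify(events)

overall_median = median(latency for _, latency in events)
expansion = median(buckets["peak"]) / median(buckets["baseline"])

print(f"overall median: {overall_median} ns")
print(f"peak/baseline expansion: {expansion}x")
```

With this synthetic profile the overall median stays at 200 ns, while the peak regime runs at five times the baseline. The expansion only appears once the samples are split by load.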

The P&L Translation

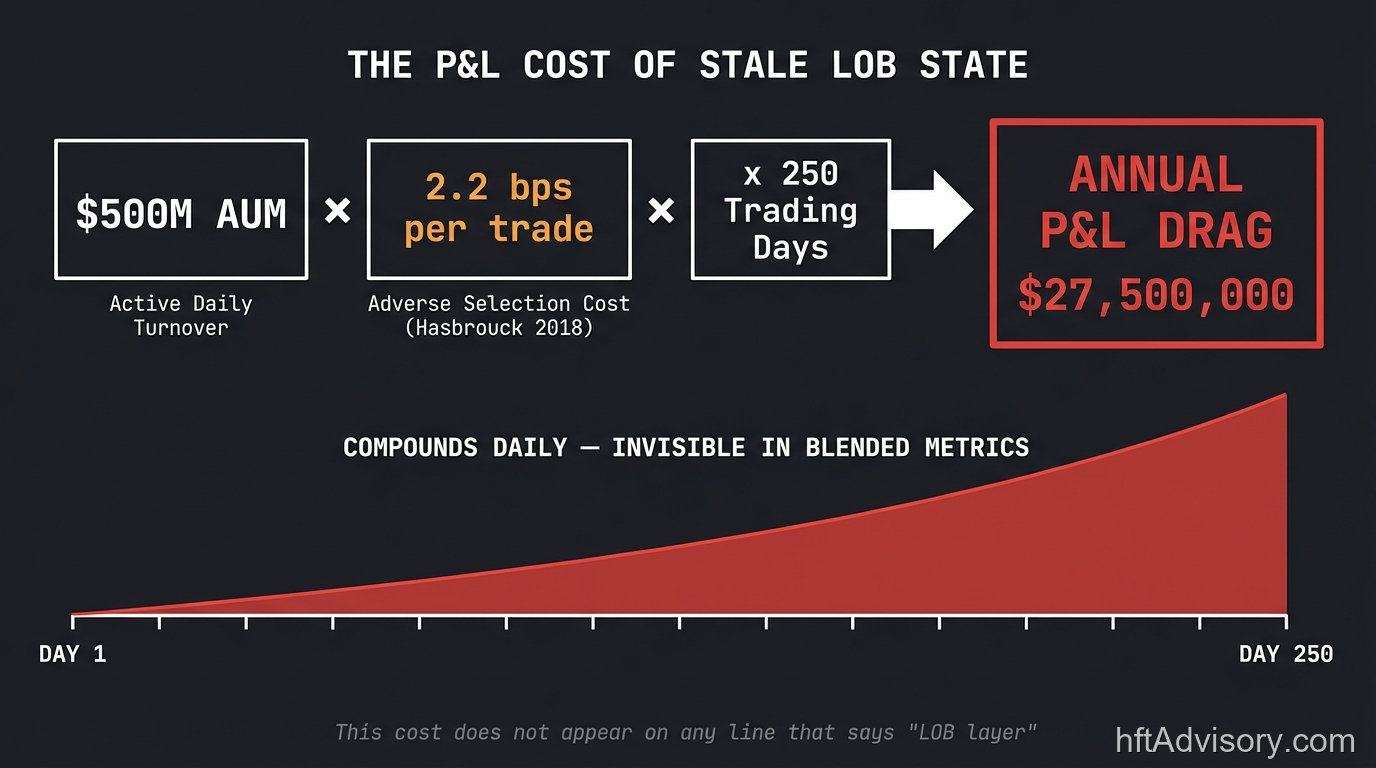

The diagnostic argument above is architectural. The P&L argument is simpler.

Hasbrouck (2018) documented approximately 2.2 basis points per trade in adverse selection costs attributable to stale quotes in lit markets (Hasbrouck, Journal of Financial and Quantitative Analysis, 2018). Aquilina, Foley, O’Neill, and Ruf (2023) found up to 2.36 basis points in dark pool executions (BIS Working Paper 1115, 2023). These are different market contexts with separately documented figures — two independent measurements pointing in the same direction.

At $500M AUM with active turnover, 2.2 basis points per trade is not a rounding error. It is a number with a name on a P&L line. It compounds daily. And it is not the cost of the matching engine being slow — it is the cost of the LOB layer handing the matching engine stale state under the exact conditions where speed matters most.
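A back-of-the-envelope version of that P&L line, as a minimal sketch. The 2.2 bps figure is the one cited above; the daily turnover multiple and the 252-day trading year are hypothetical inputs that each firm would replace with its own.

```python
def adverse_selection_cost_usd(notional_traded_usd, cost_bps):
    """Dollar cost of paying `cost_bps` basis points on the notional traded."""
    return notional_traded_usd * cost_bps / 10_000


aum_usd = 500_000_000
daily_turnover_multiple = 1.0   # hypothetical: notional traded per day / AUM
trading_days_per_year = 252     # assumption

daily_cost = adverse_selection_cost_usd(aum_usd * daily_turnover_multiple, 2.2)
annual_cost = daily_cost * trading_days_per_year

print(f"daily:  ${daily_cost:,.0f}")
print(f"annual: ${annual_cost:,.0f}")
```

If notional traded equals AUM each day, 2.2 bps comes to roughly $110,000 per day. The turnover assumption dominates the result, so the function is more useful than any single output.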

The matching engine rebuild at the $700M firm cost eight months and two board approvals. The LOB diagnostic — isolating the actual constraint — took my team a fraction of that time. The delta is not engineering capacity. It is measurement architecture.

If the post-mortem does not include LOB update latency as an independent variable, the 2.2 bps cost is structural. It is baked in. The matching engine rebuild will not touch it.

The Exchange Upgrade Context

The Tokyo Stock Exchange arrowhead 4.0 system went live on November 5, 2024. The upgrade addressed processing stability under concentrated order flow — the exact condition that amplifies LOB state management latency at Layer 4 above. The implementation used a three-node synchronized server architecture specifically designed to maintain LOB consistency under peak load conditions.

The result was clean because the diagnostic was clean. TSE knew which layer was the constraint before committing to the architectural solution. The upgrade targeted LOB state management stability, not matching engine throughput in isolation.

That precision comes from independent LOB profiling. When you know which layer is the constraint, the solution is scoped correctly. When blended metrics obscure the source, the solution addresses the wrong component — correctly, efficiently, and to no effect on fill quality.

The HFT technology market is projected at $7.147 billion in 2025 with approximately 15% compound annual growth. Firms at the $500M–$1B AUM range are competing for microsecond advantages against infrastructure budgets an order of magnitude larger than their own. At that scale, rebuilding the wrong layer is not a recoverable error. It is a strategic misallocation measured in months and in basis points.

Five-Layer LOB Diagnostic Checklist

This is the framework my team applies before any infrastructure recommendation is made. Each item is independently verifiable. Each one maps to a measurable latency contribution.

Layer 1 — Memory Layout and Cache Warming

- Measure LOB cache hit rates at L1, L2, and L3, and the share of accesses that fall through to RAM, independently during production load

- Confirm active LOB data structures fit within L3 cache capacity

- Validate cache warming routine executes before hot-path activation

- Establish cold-cache vs. warm-cache baseline (target: warm cache reduces cold-cache latency by 80%+)

Layer 2 — Lock-Free Data Structures

- Audit LOB data structures for mutex-protected paths on the hot path

- Benchmark mutex vs. atomic lock-free operations under production-representative load

- Identify shared data structures accessed by both LOB and matching engine threads

- Confirm lock-free queue implementation under concurrent AddOrder/Cancel/Modify workloads

Layer 3 — NUMA-Aware Thread Pinning

- Map server NUMA topology (socket count, NUMA nodes, core-to-node assignments)

- Confirm LOB processing threads are pinned to cores on the same NUMA node as LOB memory allocations

- Measure local vs. remote NUMA access ratio for LOB operations (target: >95% local)

- Validate numactl memory policy for LOB data structure allocation

Layer 4 — LOB State Management Under Load

- Measure ModifyLimit and CancelLimit latency separately from AddOrder

- Capture latency distributions stratified by load regime (baseline / elevated / peak)

- Confirm 5x latency expansion threshold is defined and monitored as an independent alert

- Profile price-level order density under peak conditions — high density at a price level compounds CancelLimit cost

Layer 5 — Independent LOB Profiling

- Confirm LOB operations have nanosecond-resolution timestamps independent of tick-to-trade measurement

- Verify LOB latency baseline is established under controlled conditions separate from matching engine benchmarks

- Confirm production alerting fires on LOB layer variance, not only on tick-to-trade aggregate

- Validate that adverse fill post-mortems include LOB update latency as a primary variable

Each unchecked box is a measurement gap. Each measurement gap is a potential misdiagnosis.

Conclusion

The matching engine is not the bottleneck. In most cases my team has reviewed at the $500M–$1B AUM range, it never was. The limit order book layer — memory layout, data structure contention, NUMA topology, and state management under load — is the constraint, and it is the layer that blended end-to-end metrics cannot isolate.

Two rebuilds, eight months, and two board approvals did not move the $700M firm’s latency profile. A proper five-layer LOB diagnostic did — because it located the actual constraint before any architectural recommendation was made.

The standard is independent per-layer LOB instrumentation with nanosecond-resolution timestamps, load-stratified latency distributions, and variance baselines that alert on the LOB layer directly. If your architecture cannot guarantee that, your next post-mortem will reach the same conclusion as the last one — and it will recommend rebuilding the wrong thing.

Originally shared as a LinkedIn post by Ariel Silahian.

HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.
