Table of Contents

- The Three Budget Lines Nobody Sequences Correctly

- The Infrastructure Trap: Proximity Without Data Structure Efficiency

- The Leakage Math: What Passive Fill Adverse Selection Actually Costs

- The Rebuild That Misses the Point: Matching Engine Scope Without LOB Audit

- Diagnostic Framework: The LOB Architecture Sequencing Checklist

- Conclusion

The Three Budget Lines Nobody Sequences Correctly

Three numbers appear across desk budgets at firms with $500M-plus AUM, often approved in the same fiscal year and sometimes in the same quarter:


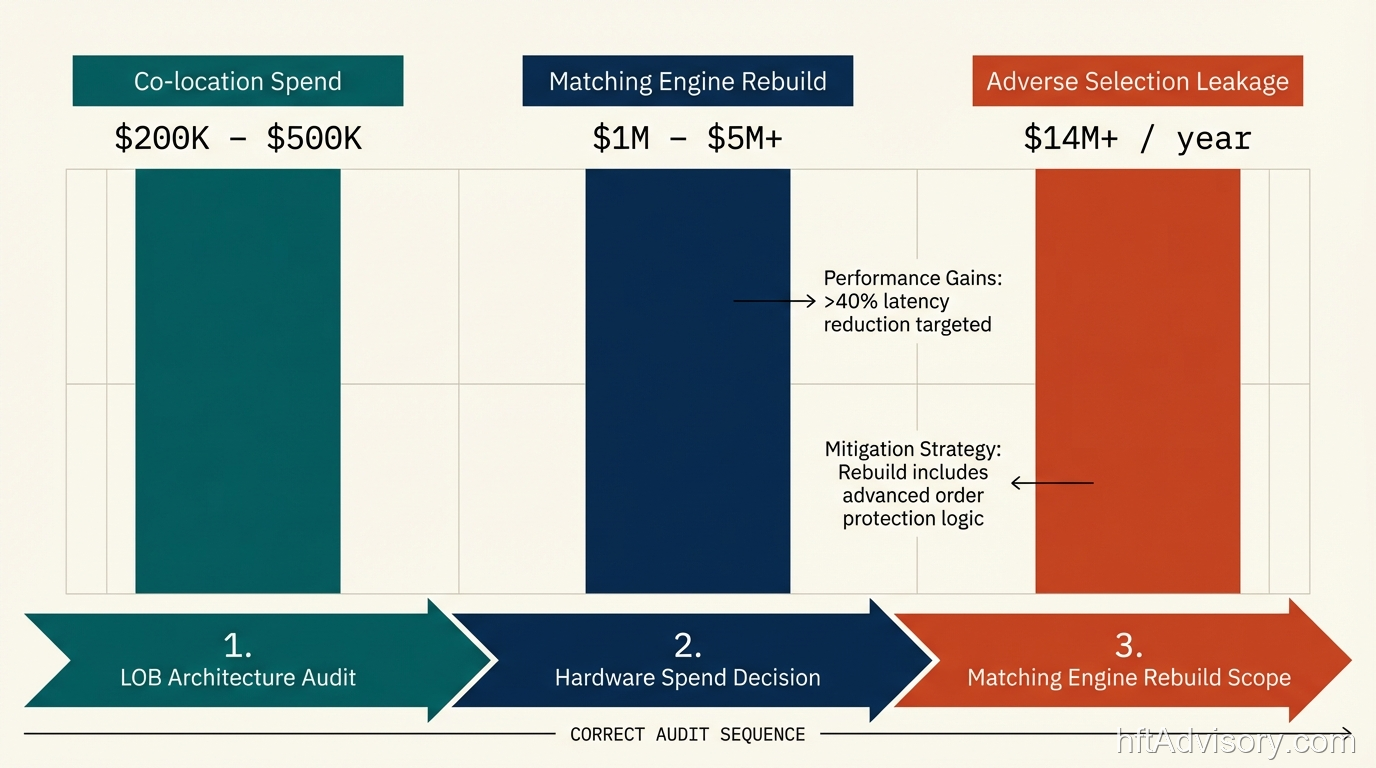

- Co-location and venue connectivity spend: $200K to $500K per year in fully-loaded annual venue costs (rack space, connectivity, market data feeds, cross-connects).

- Matching engine rebuild: $1M to $5M or more in engineering time and infrastructure deployment.

- Passive fill adverse selection leakage: practitioner estimates for desks at $500M daily notional put this in the range of tens of millions annually, depending on queue position management quality and LOB update latency.

The first two are visible, approved expenditures. The third is invisible on most P&L reporting because it shows up as underperformance against VWAP benchmarks, not as a named line item.

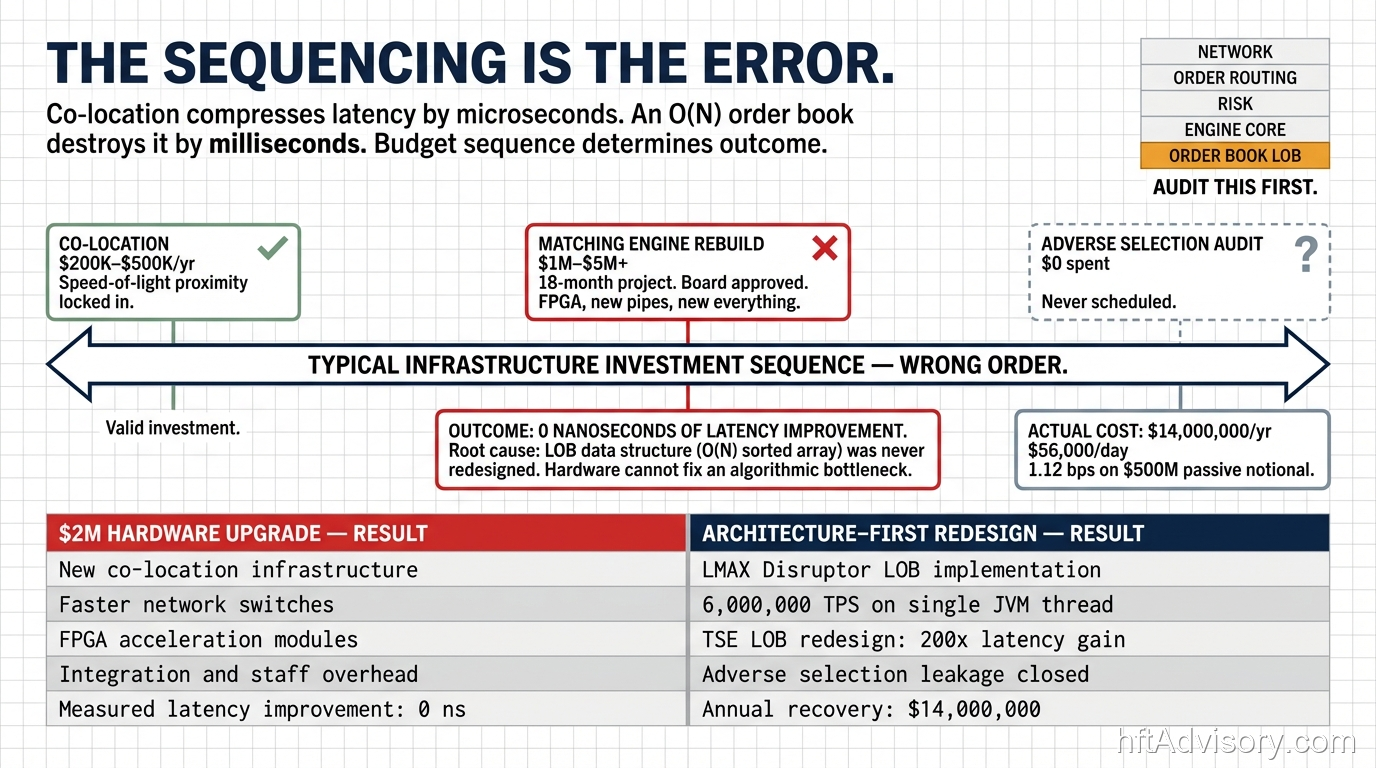

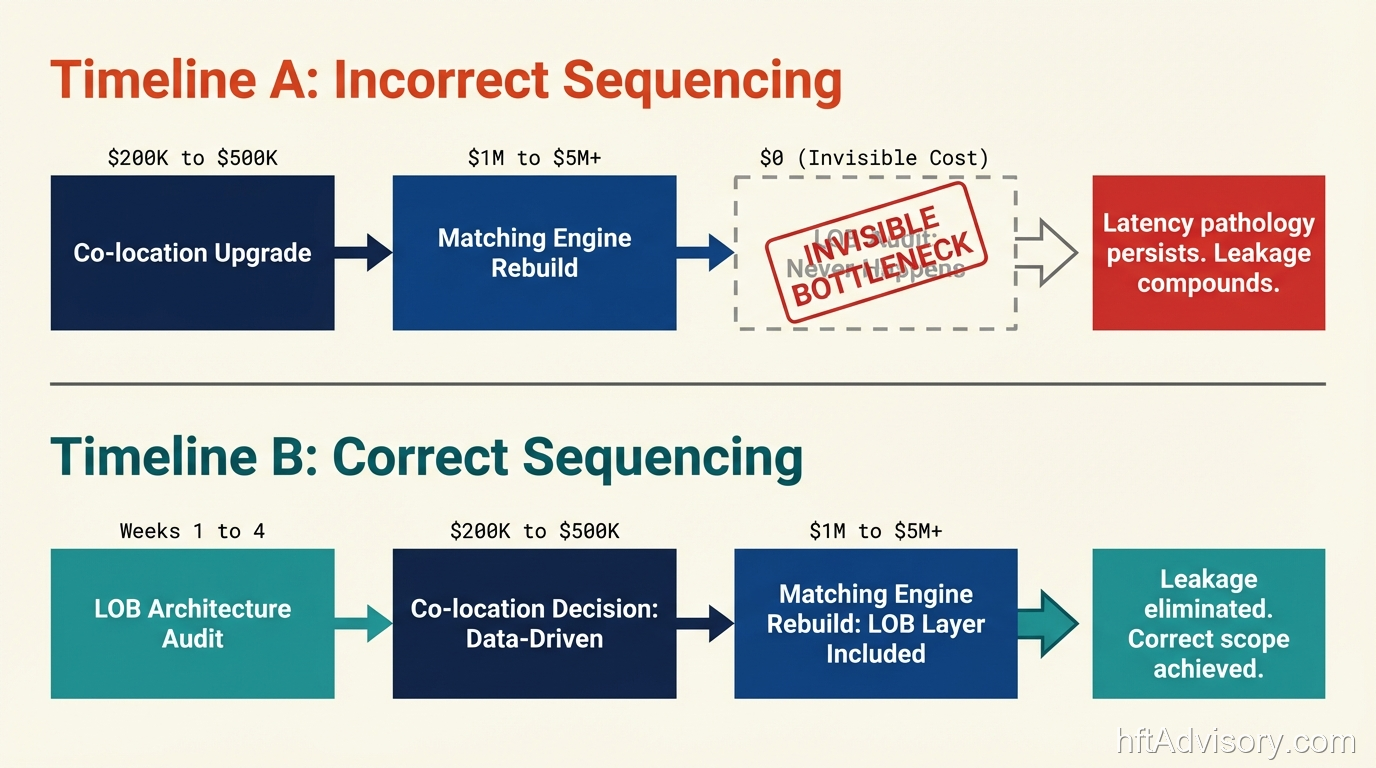

The sequencing error runs like this: a desk approves co-location spend to reduce tick-to-trade latency. Latency metrics improve modestly. Adverse selection on passive fills persists. Engineering diagnoses the matching engine. A rebuild is scoped and approved. The rebuild completes. The leakage persists. Post-mortem analysis eventually surfaces what my team finds consistently when we profile these stacks: the limit order book data structure feeding the matching engine was never touched.

Over 20 years in production HFT infrastructure, I have seen this specific sequencing error at multiple firms. The hardware gets upgraded. The matching engine gets rebuilt. The LOB layer runs the same O(N) sorted array it always ran. The capital gets deployed into incomplete work.

This article works through each budget line, the technical mechanics behind why the sequencing matters, and a diagnostic framework a CTO can use to determine whether their current LOB architecture is the silent variable being excluded from the latency equation.

The Infrastructure Trap: Proximity Without Data Structure Efficiency

Co-location solves a physics problem. The speed of light is fixed. At CME’s Aurora data center, rack rates run approximately $15,000 per month in direct costs alone. Fully loaded (connectivity, market data feed subscriptions, and cross-connects to the exchange included) a single venue slot reaches $200K to $500K per year. That spend buys physical proximity to the matching engine.

What it does not buy is efficiency inside the data structures processing market data within that rack.

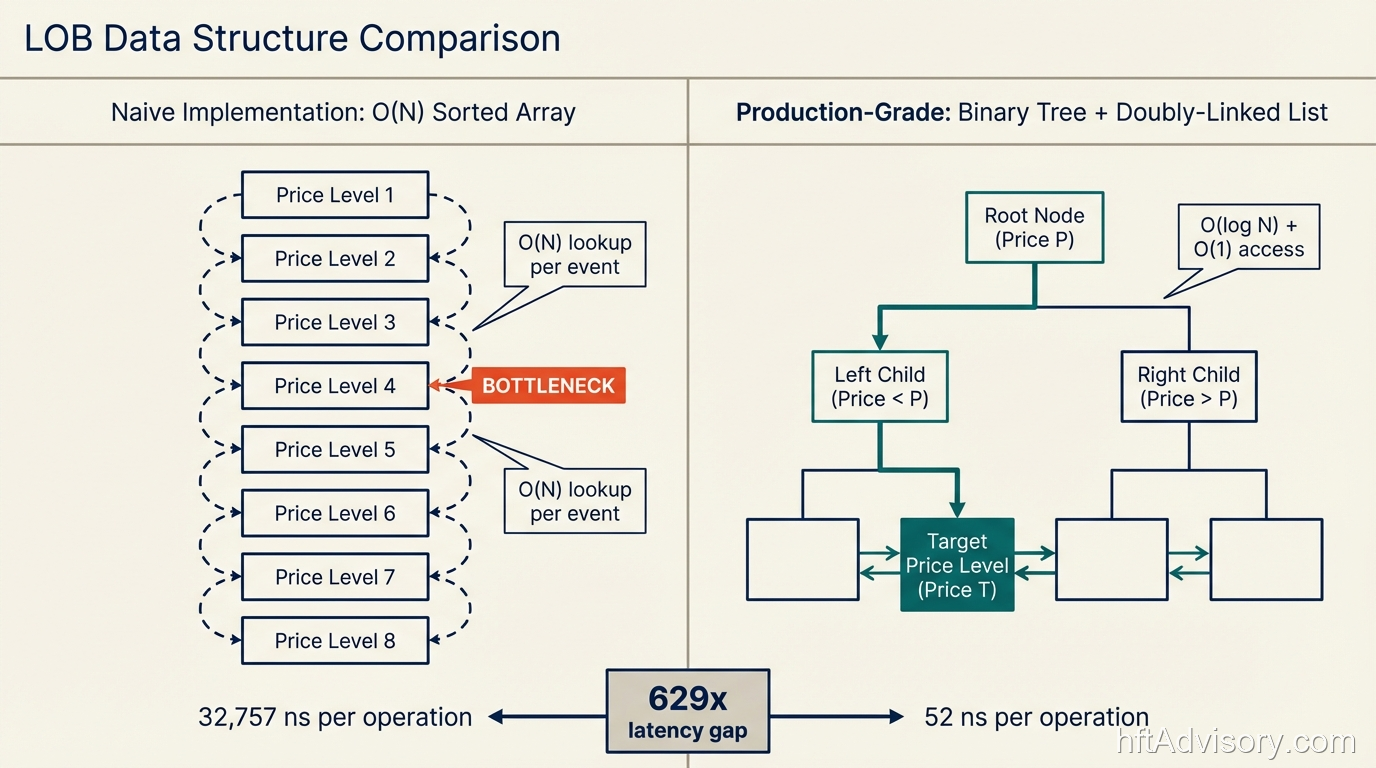

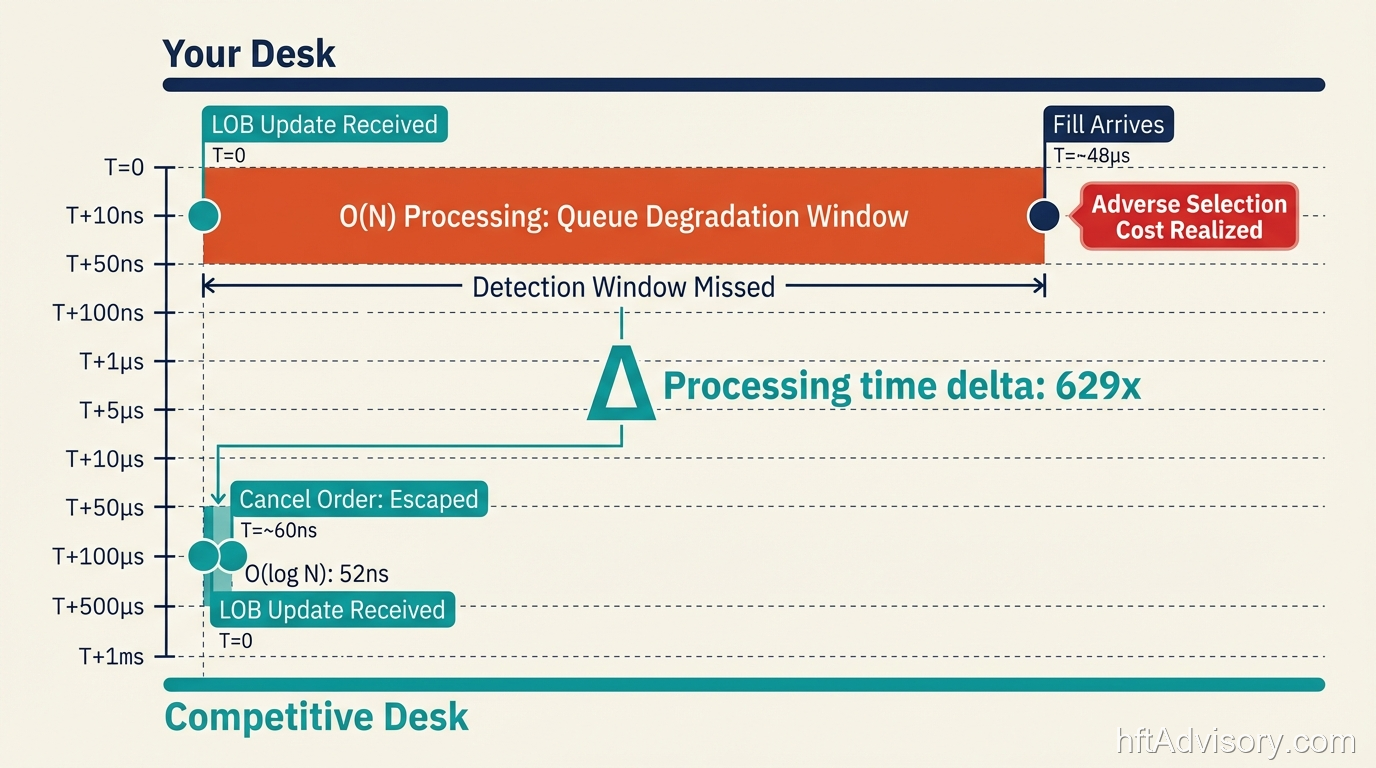

The LMAX Exchange architecture is the clearest public demonstration of this principle. Martin Fowler’s architecture overview (available on martinfowler.com) documents the LMAX Disruptor pattern’s performance: 6 million orders per second on a single JVM thread. The mean latency advantage over queue-based approaches is close to three orders of magnitude. Benchmarks on a 3GHz Nehalem Dell server showed ArrayBlockingQueue at 32,757 nanoseconds mean latency versus the Disruptor at 52 nanoseconds, a roughly 630x difference. The LOB runs entirely in-memory. The hardware was a standard server that predates the current generation of FPGA deployments most desks now consider baseline.

The architectural choice produced that number. The hardware was not the variable.
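To make the queue-versus-ring contrast concrete, here is a minimal sketch of a single-producer, single-consumer ring buffer in C++. It is not the LMAX Disruptor (which is a Java library with a richer feature set) and it is not benchmarked here. The class name `SpscRing`, the power-of-two capacity rule, and the 64-byte cursor padding are assumptions made for illustration. The point it shows is structural: no locks, no allocation per message, and each cursor is written by exactly one thread.

```cpp
#include <array>
#include <atomic>
#include <cassert>
#include <cstddef>
#include <optional>

// Illustrative single-producer/single-consumer ring buffer (hypothetical name).
// Capacity must be a power of two so index wrapping is a bit mask, not a modulo.
template <typename T, std::size_t Capacity>
class SpscRing {
    static_assert(Capacity > 0 && (Capacity & (Capacity - 1)) == 0,
                  "Capacity must be a power of two");

public:
    // Producer thread only. Returns false when the ring is full.
    bool try_push(const T& value) {
        const std::size_t head = head_.load(std::memory_order_relaxed);
        const std::size_t tail = tail_.load(std::memory_order_acquire);
        assert(head - tail <= Capacity);  // invariant: never more than Capacity in flight
        if (head - tail == Capacity) return false;
        slots_[head & (Capacity - 1)] = value;
        head_.store(head + 1, std::memory_order_release);  // publish the slot
        return true;
    }

    // Consumer thread only. Returns std::nullopt when the ring is empty.
    std::optional<T> try_pop() {
        const std::size_t tail = tail_.load(std::memory_order_relaxed);
        const std::size_t head = head_.load(std::memory_order_acquire);
        if (head == tail) return std::nullopt;
        T value = slots_[tail & (Capacity - 1)];
        tail_.store(tail + 1, std::memory_order_release);  // free the slot
        return value;
    }

private:
    std::array<T, Capacity> slots_{};
    // Each cursor gets its own cache line (64 bytes assumed) so the producer
    // and consumer do not invalidate each other's line on every update.
    alignas(64) std::atomic<std::size_t> head_{0};
    alignas(64) std::atomic<std::size_t> tail_{0};
};
```

A mutex-guarded queue would serialize both threads on one lock; here the only shared state is two monotonically increasing counters, which is the design idea the Disruptor numbers above reflect.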

This matters because most co-location decisions are implicitly hardware decisions. The assumption is: if the server is closer to the exchange and the NIC is faster, the latency floor drops. That assumption is correct at the network layer. At the data structure layer, it is irrelevant. A cache-unfriendly limit order book running an O(N) sorted array will produce the same algorithmic overhead at microsecond-class latency as it did at millisecond-class latency. The operations per order event do not change because the server moved into the co-location rack.
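The O(N) cost is easy to see in a small sketch. The function below (the name `insert_sorted` and the integer tick representation are assumptions for illustration) inserts a price level into an ascending sorted array and reports how many existing elements had to be moved to make room. The binary search is O(log N), but the shift is O(N), and that shift count is the same in a co-located rack as it is anywhere else.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Inserts `price` into an ascending sorted vector of price levels and returns
// the number of existing elements that were shifted to make room.
std::size_t insert_sorted(std::vector<std::int64_t>& levels, std::int64_t price) {
    // Debug-only precondition check; compiled out when NDEBUG is defined.
    assert(std::is_sorted(levels.begin(), levels.end()));
    auto pos = std::lower_bound(levels.begin(), levels.end(), price);  // O(log N)
    const std::size_t shifted = static_cast<std::size_t>(levels.end() - pos);
    levels.insert(pos, price);  // O(N): moves every element after `pos`
    return shifted;
}
```

Inserting a new best price at the front of a 1,000-level array moves all 1,000 existing entries; inserting at the back moves none. The worst case lands on exactly the events that matter most, those near the top of the book.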

The TSE arrowhead4.0 launch (November 5, 2024) is instructive here. It runs on Fujitsu’s Primesoft Server infrastructure: 462 servers with ultra-high-speed in-memory data management, reportedly delivering 5-nanosecond market data. The architecture-first framing is explicit in the TSE design documentation. The hardware is substantial. But the design philosophy centers on in-memory data structure performance, not rack proximity alone.

For context on what the correct data structure looks like: a binary tree of price levels, with a doubly-linked list of orders at each level and a hash map from order ID to list node, provides O(log M) insert complexity (where M is the number of active price levels) and O(1) cancel and execute operations. C++20 lock-free implementations benchmarked against this architecture have been measured at 160 to 171 million orders per second. A lock-free ring buffer against a mutex baseline shows roughly 847% throughput improvement with 92% latency reduction. These are not theoretical gains. They are the consequence of choosing the right data structure before deploying capital on hardware.
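A minimal sketch of that layout, using only standard containers, might look like the following. The names (`BookSide`, `Locator`, `best_front`) are hypothetical, prices are integer ticks, order IDs are assumed unique, and the side is treated as asks so the lowest price is best. A production book would typically replace `std::map` and `std::list` with pooled or intrusive nodes to avoid per-order allocation; this version only demonstrates the complexity bounds.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <iterator>
#include <list>
#include <map>
#include <unordered_map>

using OrderId = std::uint64_t;
using Price = std::int64_t;  // price in ticks
using Qty = std::uint64_t;

struct Order { OrderId id; Qty qty; };

// One side of a limit order book (illustrative; ask-side ordering assumed).
class BookSide {
public:
    // O(log M) in the number of price levels: tree lookup, then O(1) list append.
    void add(OrderId id, Price price, Qty qty) {
        assert(index_.count(id) == 0);  // assumption: order IDs are unique
        auto level = levels_.try_emplace(price).first;
        level->second.push_back(Order{id, qty});
        index_.insert_or_assign(id, Locator{level, std::prev(level->second.end())});
    }

    // O(1) on average: hash lookup, then unlink the node from its list.
    bool cancel(OrderId id) {
        auto it = index_.find(id);
        if (it == index_.end()) return false;
        auto level = it->second.level;
        level->second.erase(it->second.order);
        if (level->second.empty()) levels_.erase(level);  // drop empty price level
        index_.erase(it);
        return true;
    }

    // Oldest order at the lowest price level, or nullptr if the side is empty.
    const Order* best_front() const {
        return levels_.empty() ? nullptr : &levels_.begin()->second.front();
    }

    std::size_t level_count() const { return levels_.size(); }

private:
    using Level = std::list<Order>;           // FIFO queue at one price
    using LevelMap = std::map<Price, Level>;  // balanced tree of price levels
    struct Locator { LevelMap::iterator level; Level::iterator order; };

    LevelMap levels_;
    std::unordered_map<OrderId, Locator> index_;  // order ID -> position in book
};
```

The hash index is what makes cancel O(1): without it, a cancel has to search the level for the order, which reintroduces a linear scan.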

The desk that spends $300K on co-location while running an O(N) sorted array in the LOB is paying $300K per year for a fast address and filling the room with slow furniture.

The Leakage Math: What Passive Fill Adverse Selection Actually Costs

Passive fills carry a structural cost that tick-to-trade latency monitoring does not capture.

Hasbrouck and Saar (2013), “Low-latency trading,” published in the Journal of Financial Markets (Volume 16, Issue 4, pages 646-679), documents the market quality dimension of this directly. Their finding: “Our analysis suggests that increased low-latency activity improves traditional market quality measures… decreasing spreads, increasing displayed depth in the limit order book, and lowering short-term volatility.”

Read that in the context of queue position access. The desks accessing tighter spreads and better-displayed depth are operating at LOB-layer speed. A desk that cannot process LOB updates fast enough to detect queue position degradation before a fill arrives is on the wrong side of that microstructure advantage.

The queue position economics have been studied directly. Moallemi and Yuan (2016) measured the value difference between front-of-queue and average queue position at approximately 0.26 ticks. That gap is not theoretical. On a liquid instrument running thousands of passive fills per day, 0.26 ticks per fill compounds into material P&L erosion over a trading session.

For a desk running $500M in daily notional, practitioner-level adverse selection estimates on passive fills (based on patterns I have observed across institutional desks) place the annual leakage in the range of tens of millions of dollars when queue position management is suboptimal. I am framing this as practitioner observation rather than citing a specific academic figure, because the 1.12 basis points number that circulates in industry discussions has not been traced to a primary source I can verify independently. The order of magnitude is directionally consistent with the Moallemi and Yuan queue position data and with Hasbrouck and Saar’s market quality findings. The specific dollar figure on your desk will depend on fill rate, instrument mix, and passive-to-aggressive ratio.
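The order of magnitude can be checked with simple arithmetic. The sketch below is a back-of-envelope calculator, not a model: the function names are hypothetical, and every input other than the $500M daily notional and the 0.26-tick figure is an assumption chosen for illustration (1 basis point of loss, 252 trading days, a $12.50 tick value, 5,000 passive fills per day, and all notional treated as passive).

```cpp
#include <cassert>

// Annual leakage when passive flow gives up `bps_lost` basis points of notional.
// One basis point is 1e-4 of notional.
double annual_leakage_usd(double passive_daily_notional_usd, double bps_lost,
                          int trading_days) {
    assert(passive_daily_notional_usd >= 0.0 && trading_days > 0);
    return passive_daily_notional_usd * bps_lost * 1e-4 * trading_days;
}

// Annual cost when each passive fill gives up `ticks_lost` ticks relative to
// a front-of-queue fill.
double annual_queue_cost_usd(double ticks_lost, double tick_value_usd,
                             double fills_per_day, int trading_days) {
    assert(fills_per_day >= 0.0 && trading_days > 0);
    return ticks_lost * tick_value_usd * fills_per_day * trading_days;
}
```

Under those assumed inputs, `annual_leakage_usd(500e6, 1.0, 252)` comes to about $12.6M per year, and `annual_queue_cost_usd(0.26, 12.50, 5000, 252)` comes to about $4.1M per year for a single instrument. Both scale linearly with every input, so the figure for a specific desk depends entirely on its own fill counts and instrument mix.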

The mechanism is specific: a desk that cannot process LOB updates within the latency window required to detect queue position degradation will receive fills at worse average queue positions than competitors running faster LOB update cycles. Adverse selection increases with depth, per the Moallemi and Yuan findings. The desk sees this as leakage on passive fills. The latency monitoring shows end-to-end tick-to-trade numbers. The LOB update latency, as an independent variable, is not isolated.

The leakage is invisible on the monitoring dashboard. The money is not invisible on the P&L.

CPU cache behavior compounds this at scale. LOB data structures are cache-sensitive. On most current x86 server CPUs, cache lines are 64 bytes. A LOB entry that straddles a cache line boundary, or shares a line with data another core is writing, incurs extra cache misses on the order events that touch it. At 5 million order events per day, the overhead compounds into a steady bleed that does not produce obvious latency spikes. It shows up as a degraded baseline that tick-to-trade monitors attribute to market conditions rather than data structure overhead. A correctly aligned LOB (entries packed to 64-byte boundaries, with alignas(128) on structures written by different threads to reduce false sharing) reduces this overhead by approximately 5%.
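As a sketch of what the alignment point means in code, the two structs below show the two separate uses of `alignas`. The field layout and the names `PriceLevel` and `PaddedCounter` are hypothetical, and the 64-byte line size is an assumption that holds on most x86 servers but should be checked for the target CPU. The 128-byte padding reflects a common practice on processors that prefetch cache lines in adjacent pairs.

```cpp
#include <atomic>
#include <cstddef>
#include <cstdint>

// Hot per-level fields padded to exactly one 64-byte cache line, so that
// touching one price level never pulls in part of its neighbor.
struct alignas(64) PriceLevel {
    std::int64_t  price_ticks;
    std::uint64_t total_qty;
    std::uint32_t order_count;
    std::uint32_t flags;
    void*         head;  // first resting order at this price
    void*         tail;  // last resting order at this price
};
static_assert(sizeof(PriceLevel) == 64, "one level per cache line");
static_assert(alignof(PriceLevel) == 64, "levels start on a line boundary");

// A counter written by one thread and read by others. Padding to 128 bytes
// keeps two such counters out of the same line pair, which avoids false
// sharing between the threads that write them.
struct alignas(128) PaddedCounter {
    std::atomic<std::uint64_t> value{0};
};
static_assert(sizeof(PaddedCounter) == 128, "one counter per 128-byte slot");
```

The `static_assert` lines are the useful part in an audit: if someone adds a field that pushes `PriceLevel` past 64 bytes, the build fails instead of the baseline quietly degrading.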

The Rebuild That Misses the Point: Matching Engine Scope Without LOB Audit

Matching engine rebuilds run $1M to $5M or more in engineering time and deployed infrastructure. The scope problem is consistent: firms spend that capital rebuilding the matching engine and discover afterward that the LOB layer feeding it was never part of the project.

My team profiled a stack after an engagement where a firm had executed a full co-location upgrade (board-approved, FPGA cards, microsecond-class NICs… the complete infrastructure package). The bottleneck was an O(N) sorted array inside the limit order book data structure. At 5 million order events per day, the overhead had been compounding into a steady performance bleed that was invisible on tick-to-trade monitors. The infrastructure spend was approximately $2M. The measurable latency improvement after the upgrade was negligible.

The firm had solved the network layer problem. The data structure problem had not been scoped.

NSE India’s 2026 architecture initiative targets 100 million transactions per second, up from 5 to 6 million currently, with nanosecond-class latency. NSE is expanding rack capacity from 2,000 to approximately 4,500 as part of this program, with a go-live date of April 11, 2026. The NSE initiative is both architecture redesign and hardware investment (the public reporting documents both dimensions explicitly). The architectural redesign component is what enables the throughput target, which is roughly 17x to 20x the current rate. The hardware expansion supports it. You cannot reach 100M TPS on current architecture by adding more racks. The LOB design changes first.

Island ECN ran millisecond-class execution on a DOS-based FoxPro matching engine using B-tree indexing in the late 1990s. Nearly three decades later, firms are still sequencing the hardware upgrade before the architecture audit.

The pattern is not malice. It is organizational: matching engine rebuilds are visible engineering projects with defined scope, deliverables, and sign-off criteria. LOB architecture audits are diagnostic work with less obvious scope boundaries. The hardware spend has a procurement order. The data structure optimization does not have a line item until someone profiles the stack and finds the O(N) loop.

This is why the post-mortem on the $2M infrastructure spend described above showed no measurable improvement. The monitoring tracked tick-to-trade. The LOB update latency was not isolated as an independent variable. The O(N) array was processing the same number of operations per order event it always had. The data just arrived at the array slightly faster.

A matching engine rebuild without a preceding LOB architecture audit is roughly equivalent to replacing the engine in a car without checking whether the transmission is the actual failure point. The capital deploys. The symptoms persist.

Diagnostic Framework: The LOB Architecture Sequencing Checklist

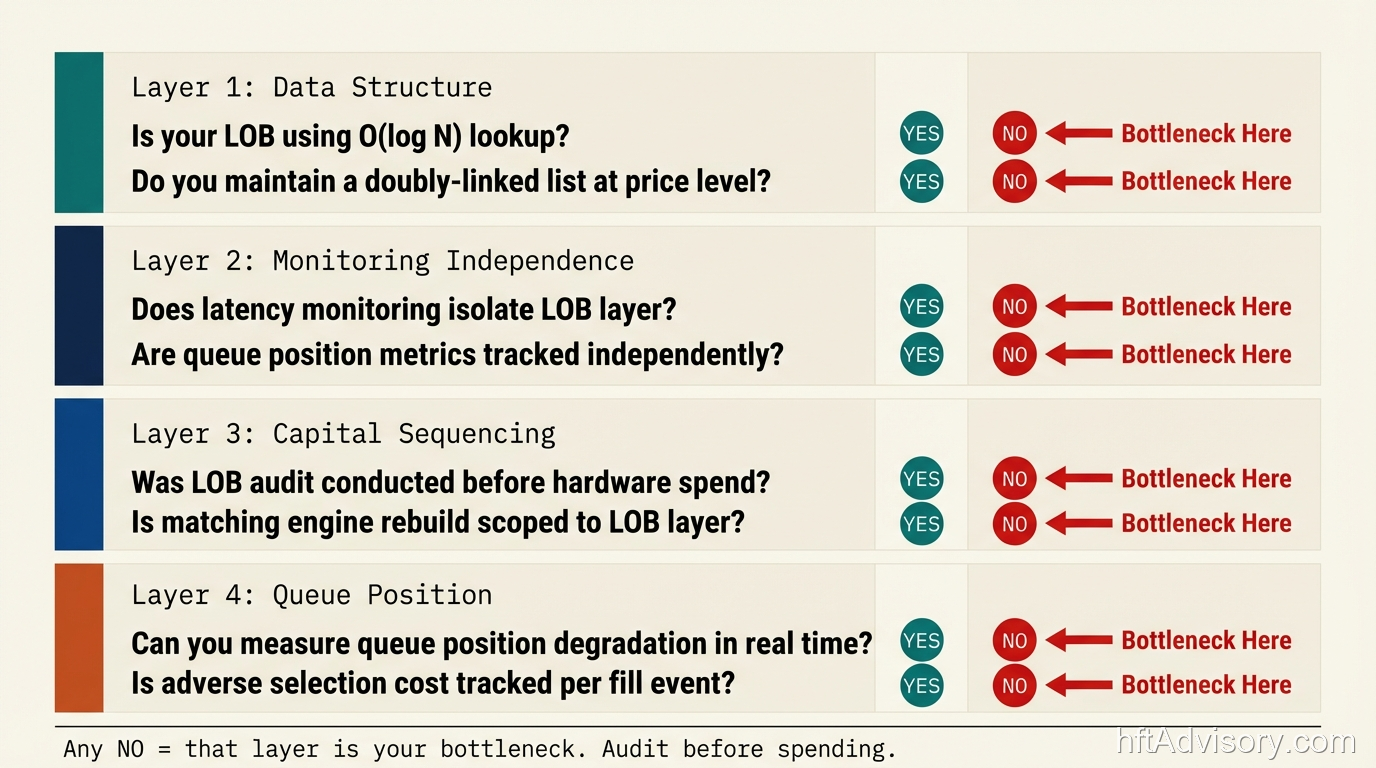

Before the next infrastructure spend decision reaches the board, a CTO can run this diagnostic to determine whether the LOB layer is the unexamined variable.

Layer 1: LOB Data Structure Audit

Identify the current LOB data structure implementation. Specifically:

- Is the price level lookup using a sorted array (O(N) on insert/cancel) or a binary tree / hash map with doubly-linked list (O(log M) insert, O(1) cancel and execute)?

- Is the data structure cache-aligned? Are order book entries packed to 64-byte cache line boundaries? Is alignas(128) used at the price level struct to reduce false sharing?

- Has the LOB data structure been profiled independently from the matching engine? Does your profiling output isolate LOB update latency as a separate measurement from tick-to-trade?

If your team needs to investigate to answer these questions, that is the answer. An optimized LOB data structure is a known quantity. The team that built it can describe it in two sentences.

Layer 2: Latency Monitoring Independence

Does your current latency monitoring:

- Isolate LOB update latency as an independent variable, separate from the full tick-to-trade measurement?

- Flag queue position degradation events before fills arrive, or only after?

- Track per-price-level update latency under peak order event rates (the degradation from O(N) operations compounds at high event rates)?

If the monitoring shows end-to-end tick-to-trade but does not isolate LOB update latency independently, the monitoring architecture is structurally unable to detect the class of bottleneck described in this article. The $2M post-mortem above is a direct consequence of this monitoring gap.
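One way to isolate the LOB measurement is to time the book update on its own and keep its samples in a separate series from tick-to-trade. The sketch below assumes hypothetical names (`LatencyRecorder`, `timed`) and uses `std::chrono::steady_clock` with a growable vector for simplicity. A production hot path would more likely use a preallocated histogram and a cheaper timestamp source, so treat this as a shape, not an implementation.

```cpp
#include <algorithm>
#include <cassert>
#include <chrono>
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Collects latency samples in nanoseconds for one named stage of the pipeline.
class LatencyRecorder {
public:
    void record(std::chrono::nanoseconds d) { samples_.push_back(d.count()); }
    std::size_t count() const { return samples_.size(); }

    // Nearest-rank percentile for pct in [1, 100]; returns 0 if there are no samples.
    std::int64_t percentile(unsigned pct) const {
        assert(pct >= 1 && pct <= 100);
        if (samples_.empty()) return 0;
        std::vector<std::int64_t> sorted = samples_;
        std::sort(sorted.begin(), sorted.end());
        std::size_t rank = (pct * sorted.size() + 99) / 100;  // ceil(pct * n / 100)
        return sorted[std::max<std::size_t>(rank, 1) - 1];
    }

private:
    std::vector<std::int64_t> samples_;
};

// Runs `fn` and records its wall-clock duration into `rec`.
template <typename F>
void timed(LatencyRecorder& rec, F&& fn) {
    const auto start = std::chrono::steady_clock::now();
    std::forward<F>(fn)();
    const auto stop = std::chrono::steady_clock::now();
    rec.record(std::chrono::duration_cast<std::chrono::nanoseconds>(stop - start));
}
```

In use, the book update would be wrapped as `timed(lob_update, [&] { /* apply the update to the book */ });` with a second recorder covering the full tick-to-trade path. Comparing the high percentiles of the two series under peak event rates shows whether the LOB layer is the floor.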

Layer 3: Sequencing the Next Capital Decision

Before approving co-location expansion or a matching engine rebuild:

- Has the LOB architecture been profiled under production order event rates?

- Has the profiling output been used to determine whether the LOB layer is the latency floor, or whether hardware proximity is the binding constraint?

- Does the rebuild scope explicitly include the LOB data structure layer, or is the rebuild scoped to the matching engine above it?

The hardware decision comes after the architecture audit. In the correct sequencing, the LOB profiling output determines whether co-location spend addresses the actual bottleneck. In the incorrect sequencing (the one I see most often), the co-location spend is approved first and the architecture audit happens after the fact, if at all.

Layer 4: Queue Position Monitoring

For passive fills specifically:

- Is queue position tracked per fill, or only in aggregate?

- Is adverse selection on passive fills isolated from overall slippage metrics?

- Is the LOB update processing fast enough that the desk can detect queue position degradation and cancel before receiving an adverse fill?

These questions are answerable with existing data. If they are not currently being tracked, the queue position leakage described above (consistent with the Moallemi and Yuan findings and the Hasbrouck and Saar (2013) market quality results) is occurring without attribution.
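Per-fill queue position tracking can be sketched as a small state machine per resting order. The class below is a hypothetical illustration (`QueuePosition` and its method names are not from any library) and it assumes an order-by-order feed, so that the desk can tell whether a cancelled order was ahead of or behind its own. On an aggregated price-level feed that flag has to be estimated instead.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

using Qty = std::uint64_t;

// Tracks the quantity resting ahead of one of our passive orders at a single
// price level, and how much of our own order remains unfilled.
class QueuePosition {
public:
    QueuePosition(Qty ahead_at_entry, Qty own_qty)
        : ahead_(ahead_at_entry), own_(own_qty) {
        assert(own_qty > 0);
    }

    // A cancel improves our position only if the cancelled order was ahead of us.
    void on_cancel(Qty qty, bool was_ahead_of_us) {
        if (was_ahead_of_us) ahead_ -= std::min(qty, ahead_);
    }

    // A trade at our price consumes the queue from the front.
    // Returns the quantity filled on our own order by this trade.
    Qty on_trade(Qty traded) {
        const Qty consumed_ahead = std::min(traded, ahead_);
        ahead_ -= consumed_ahead;
        const Qty filled = std::min(traded - consumed_ahead, own_);
        own_ -= filled;
        return filled;
    }

    Qty ahead() const { return ahead_; }
    Qty own_remaining() const { return own_; }

private:
    Qty ahead_;
    Qty own_;
};
```

Logging `ahead()` at the moment of each fill gives the per-fill queue position the checklist asks for, and watching it between fills is what allows a cancel decision before an adverse fill arrives.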

Conclusion

Three budget lines. One sequencing problem. The co-location spend is legitimate for the network layer problem it solves. The matching engine rebuild may be warranted. Neither of those investments addresses the LOB data structure layer, and neither will surface the bottleneck until the LOB architecture is profiled independently.

The LMAX Disruptor result (52 nanoseconds versus 32,757 nanoseconds for queue-based approaches) was produced on a standard 3GHz Nehalem server. NSE’s 100M TPS target requires architecture redesign alongside hardware expansion. The pattern is consistent across three decades of electronic trading infrastructure: the architectural choice is the primary variable. The hardware multiplies what the architecture allows.

The standard for a production LOB architecture is O(1) cancel and execute operations, cache-aligned data structures, and independent latency monitoring that isolates LOB update latency from tick-to-trade. If your current monitoring tracks tick-to-trade but does not isolate LOB update latency as an independent variable, the $2M post-mortem above is measuring your future, not your past.

To assess whether your current LOB architecture is the unexamined variable in your latency profile, see the Discovery Assessment on electronictradinghub.com.

Originally shared as a LinkedIn post on March 20, 2026. Source post: https://www.linkedin.com/feed/update/urn:li:share:7440860588148178945/


HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.
