Why Textbook C++ Optimizations Wreck p99 Latency in HFT: A Three-Root-Cause Post-Mortem

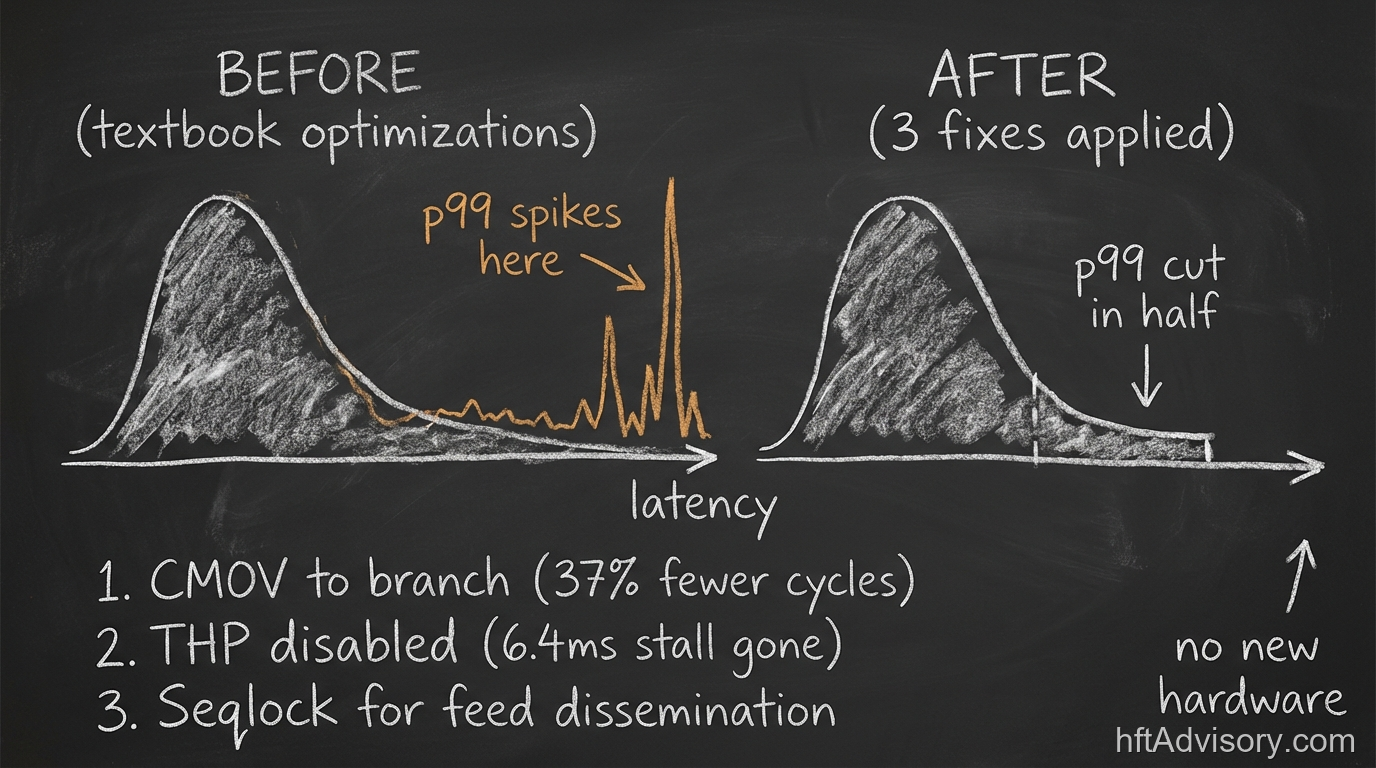

A $500M daily notional desk applied every textbook C++ optimization their engineering team knew. Branchless code. System-wide huge pages. A battle-tested lock-free queue. Their p99 latency got 23% worse.

Their p50 looked pristine. The team had done the work. The code reviewed cleanly. And yet.

My team traced it to three compounding root causes, all simultaneously present in the same codebase. No new hardware. No infrastructure change. Three architectural assumptions that each made sense in isolation and compounded each other into a tail latency disaster.


This post-mortem covers what we found, why each failure mode is invisible under standard monitoring, and what a pre-deployment audit should catch before it ships.

Table of Contents

- The p50 Was Clean. The p99 Was 23% Worse.

- CMOV: When the Textbook Is Correct and the Context Is Not

- Transparent Huge Pages Are Not Huge Pages

- Synchronization Topology: Match the Lock to the Pattern

- How to Diagnose This Before It Ships

- The Pre-Deployment Audit Checklist

- Conclusion

The p50 Was Clean. The p99 Was 23% Worse.

The first question any engineer asks when p99 degrades while p50 holds is: what changed? In this case, the answer was everything the team had deliberately tuned.

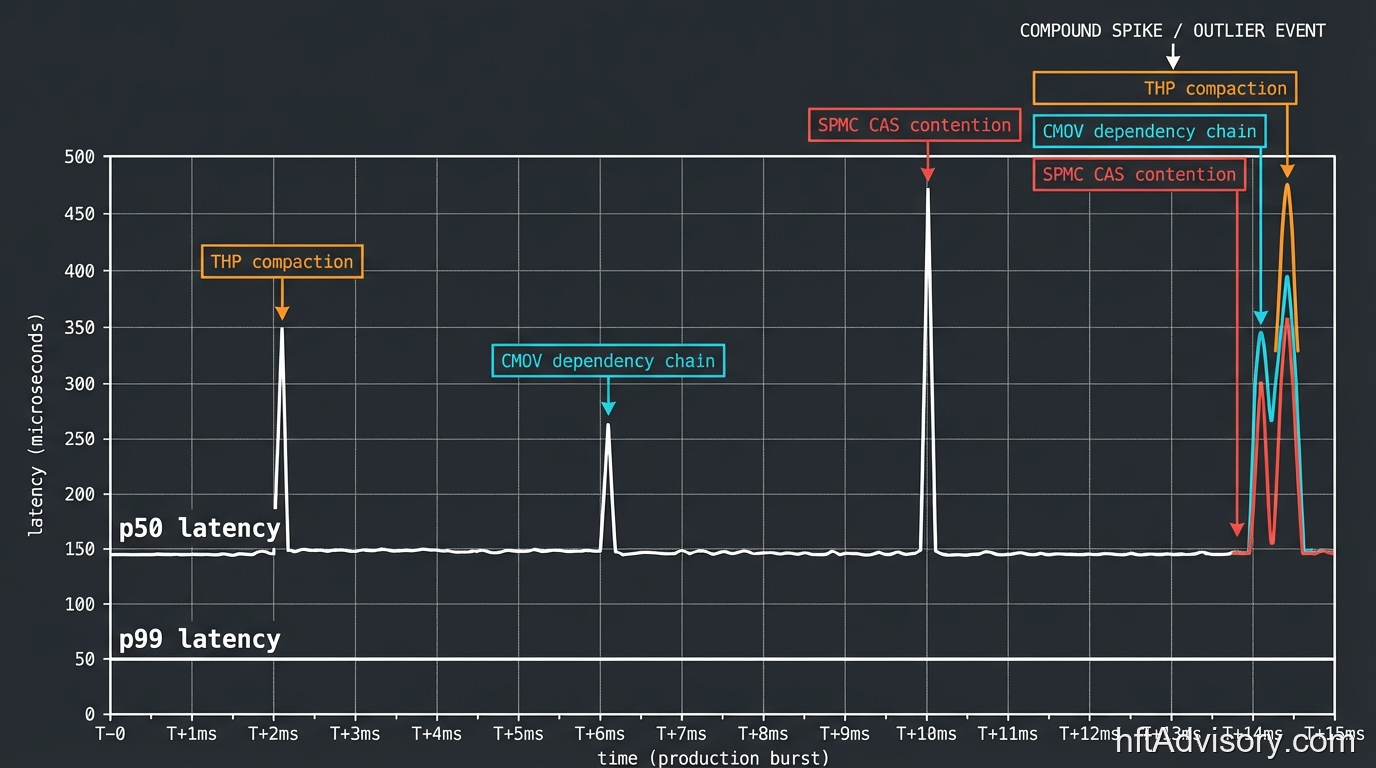

The p50/p99 split is a diagnostic signature, not a coincidence. When p50 is stable and p99 is deteriorating, you are not looking at a throughput problem. You are looking at a compound low-frequency event: one or more mechanisms that fire rarely but absorb latency when they do, and whose combined probability of hitting any given request grows with every additional mechanism present.

Jeff Dean and Luiz André Barroso made the case in “The Tail at Scale” (Communications of the ACM, 2013) that tail events cannot be treated as a rounding error. The arithmetic is simple: at 1 million requests per day, p99 events happen 10,000 times daily. A p99/p50 ratio above 3x is not a statistical curiosity for later. It is the signature that multiple low-frequency failure modes are stacking.

This desk was running all three simultaneously. Each one would have been tolerable in isolation. Together, they compounded.
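To see why rare mechanisms stack into the same percentile, consider a minimal sketch. It assumes the mechanisms fire independently of each other, and the 0.4% per-request rates used in the note below are hypothetical values chosen for illustration, not measurements from this desk.

```cpp
#include <cassert>
#include <initializer_list>

// Probability that at least one rare mechanism fires on a given request.
// Assumption: the mechanisms fire independently of each other.
inline double p_any_fires(std::initializer_list<double> rates) {
    double p_none = 1.0;
    for (double r : rates) {
        assert(r >= 0.0 && r <= 1.0);  // each rate is a probability
        p_none *= (1.0 - r);           // chance that this mechanism stays quiet
    }
    return 1.0 - p_none;
}
```

With a single mechanism at 0.4%, the slow events sit beyond p99 and the percentile barely moves. With three mechanisms at 0.4% each, `p_any_fires({0.004, 0.004, 0.004})` comes to roughly 1.2%, which crosses the 1% line and puts the slow events inside p99.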

CMOV: When the Textbook Is Correct and the Context Is Not

The desk had aggressively replaced conditional branches with CMOV instructions. This is textbook advice. Most C++ performance guides frame branchless code as unconditionally better because it eliminates branch misprediction penalties.

The problem is that the textbook assumes you have unpredictable branches. HFT order routing does not.

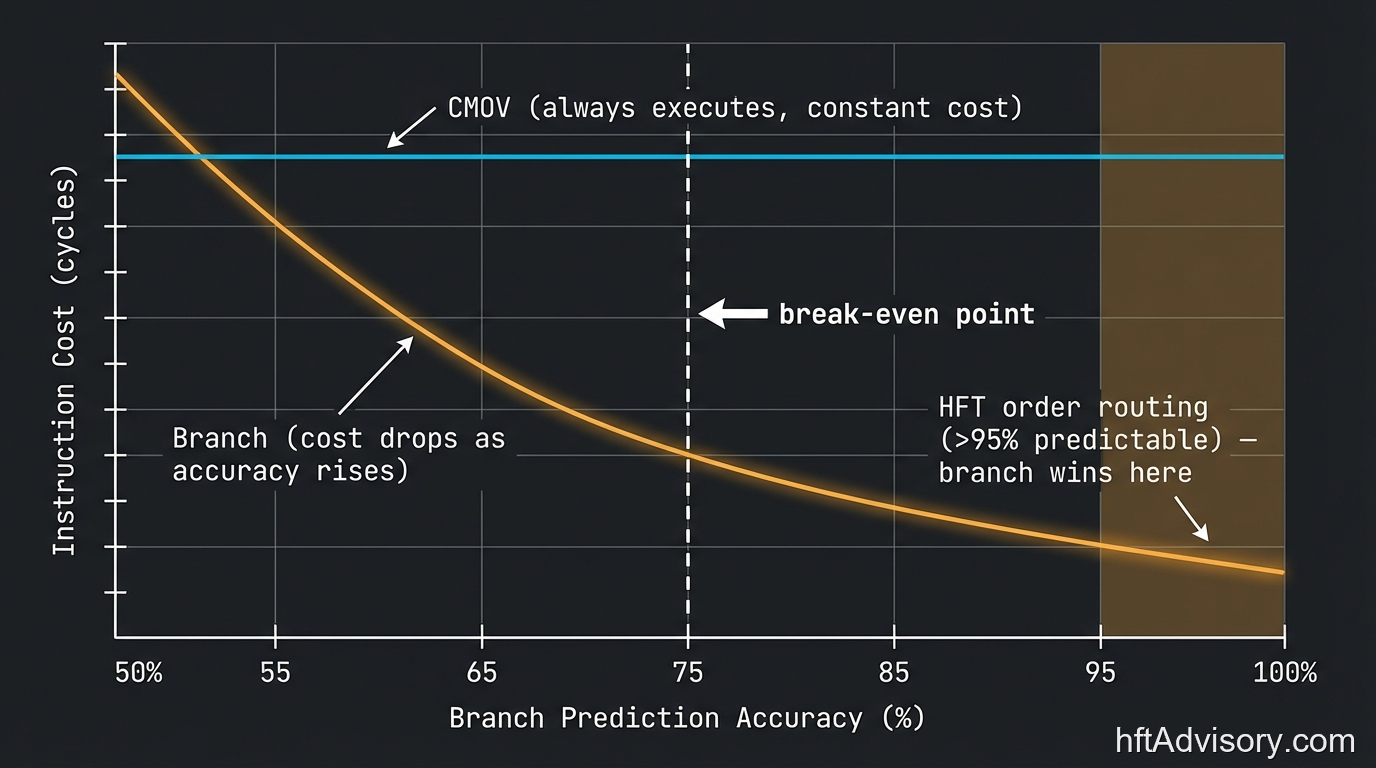

CMOV does not eliminate execution cost. It eliminates the cost of misprediction by turning a control dependency into a data dependency: both candidate values must be computed, and nothing downstream can use the result until the condition resolves. On an unpredictable branch where misprediction probability is high, that trade is favorable: you pay a fixed cost instead of an occasional large one. On a branch the processor already predicts correctly more than 95% of the time, CMOV is pure cost with no upside. You have replaced an occasional penalty with a universal one.

Modern Intel CPUs with TAGE branch predictors achieve above 97% accuracy on structured hot paths. HFT order routing, with its highly regular conditional structure, sustains above 95% prediction accuracy in practice. At that accuracy level, the break-even math is straightforward: branch misprediction costs 10 to 30 cycles when it happens, but at 95%+ accuracy it happens rarely. CMOV’s dependency chain serialization happens on every single execution.

A 2022 LLVM RFC quantified exactly this on AArch64: converting selects back to branches reduced critical path from 21 cycles to 13.25 cycles, a 37% reduction. The underlying principle applies identically to x86 hot paths with equivalent prediction accuracy. Bilokon, Lucuta, and Shermer documented this in “Semi-static Conditions in Low-latency C++ for High Frequency Trading” (arXiv:2308.14185, 2023): branches outperform CMOV on predictable paths in low-latency HFT C++. The semi-static conditions construct they propose gives engineers a language-level mechanism for communicating branch predictability to the compiler rather than forcing branchless rewriting.

The break-even point is approximately 75% branch prediction accuracy. Below 75%, CMOV wins. Above 75%, branches win. HFT order routing, at 95% or better, sits well above that threshold.
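The break-even claim above can be written as a two-line cost model. This is a rough sketch, not a microarchitectural simulation: the 20-cycle misprediction penalty is taken from the 10 to 30 cycle range quoted earlier, and the 5 cycles of CMOV overhead is an assumption chosen so that the break-even lands at 75%.

```cpp
#include <cassert>

// Expected cycles lost per execution to mispredictions on a real branch.
constexpr double branch_cost(double accuracy, double mispredict_penalty) {
    return (1.0 - accuracy) * mispredict_penalty;
}

// Prediction accuracy at which the branch and the CMOV version cost the same:
// (1 - accuracy) * mispredict_penalty == cmov_overhead.
constexpr double break_even_accuracy(double cmov_overhead, double mispredict_penalty) {
    return 1.0 - cmov_overhead / mispredict_penalty;
}

// Illustrative numbers only: 20-cycle penalty, 5 cycles of CMOV overhead.
static_assert(break_even_accuracy(5.0, 20.0) == 0.75, "break-even at 75%");
```

Under these assumed numbers, a branch predicted correctly 95% of the time loses about 1 cycle per execution on average, against 5 cycles paid on every CMOV execution.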

The practical consequence: before touching any CMOV in a hot path, measure branch prediction accuracy on that specific path. Do not assume. The textbook advice is correct for the textbook’s assumed conditions. HFT hot paths frequently do not match those conditions.
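When measurement shows a branch is well predicted, one option is to write it as a branch and state the expected direction. The sketch below uses the C++20 `[[likely]]` attribute; the function names and the venue parameters are hypothetical. The attribute is only a hint, and whether the compiler emits a conditional jump or a CMOV for either form depends on the compiler and flags, so inspect the generated assembly rather than assuming.

```cpp
#include <cassert>
#include <cstdint>

// Branch form: tells the compiler the in-limit case is the common one.
inline std::int64_t pick_venue_branch(std::int64_t price, std::int64_t limit,
                                      std::int64_t primary, std::int64_t fallback) {
    if (price <= limit) [[likely]] {
        return primary;
    }
    return fallback;
}

// Select form: a ternary like this is a common candidate for CMOV lowering.
inline std::int64_t pick_venue_select(std::int64_t price, std::int64_t limit,
                                      std::int64_t primary, std::int64_t fallback) {
    return price <= limit ? primary : fallback;
}
```

Both functions return the same result for every input. The difference, if any, is in the instructions generated and therefore in how the hot path behaves under a predictor that is already right most of the time.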

Transparent Huge Pages Are Not Huge Pages

This is the root cause that surprises most engineers because it requires understanding the difference between what THP advertises and what it actually does.

The TLB miss cost problem is real. A TLB hit costs 1 to 2 cycles. A miss triggers a page walk: 10 to 20 cycles minimum when the page-table entries are cached, and a full cold walk for a 4KB page needs up to four dependent memory loads, one per level of x86-64 four-level paging. At approximately 70 nanoseconds of DRAM latency each, that is roughly 280 nanoseconds before your data arrives. At the scale of HFT order routing, this matters. Meta measured approximately 20% of CPU cycles consumed by TLB misses on 64GB production servers, which is why huge pages are a necessity rather than a preference in high-performance systems.
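The coverage argument for larger pages is plain arithmetic. The sketch below counts how many page translations a working set needs at each page size; the 1 GiB working set is a hypothetical figure for illustration.

```cpp
#include <cassert>
#include <cstddef>

constexpr std::size_t kPage4K = std::size_t{4} << 10;  // 4 KiB
constexpr std::size_t kPage2M = std::size_t{2} << 20;  // 2 MiB

// Number of pages, and therefore distinct translations, needed to map `bytes`.
constexpr std::size_t pages_needed(std::size_t bytes, std::size_t page_size) {
    return (bytes + page_size - 1) / page_size;  // round up
}

constexpr std::size_t kWorkingSet = std::size_t{1} << 30;  // hypothetical 1 GiB

static_assert(pages_needed(kWorkingSet, kPage4K) == 262144, "4 KiB pages");
static_assert(pages_needed(kWorkingSet, kPage2M) == 512, "2 MiB pages");
```

Every translation that does not fit in the TLB is a potential page walk, so cutting the number of translations by a factor of 512 is where the benefit comes from.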

The issue is not whether to use huge pages. It is which mechanism delivers them.

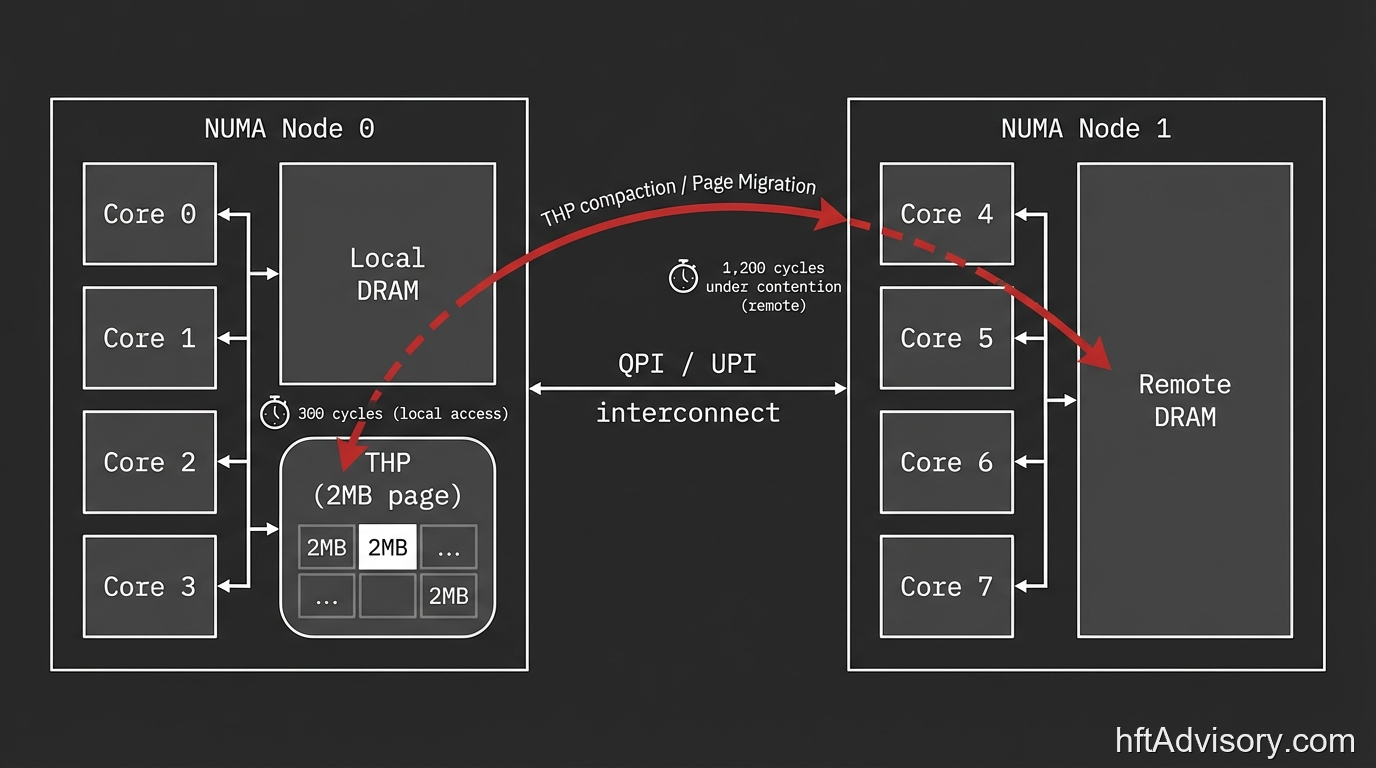

THP (Transparent Huge Pages) enabled system-wide does not pre-allocate large pages. It runs a background kernel thread called khugepaged that continuously scans memory and attempts to coalesce 4KB pages into 2MB pages through compaction. That compaction process can stall for 6.4 milliseconds under no memory pressure at all. Under production load on a NUMA system, compaction events can trigger soft lockups exceeding 22 seconds, as documented in Red Hat kernel engineering records.

The NUMA topology makes this worse. On a NUMA system, THP compaction can move pages across NUMA boundaries. A local memory access that costs roughly 300 cycles becomes a remote access at 1,200 cycles or more under contention. A compaction event does not just pause execution; it can convert your local memory topology to remote for the duration.

Why did p50 look clean? Because khugepaged compaction events are infrequent. p50 never touches them. p99 absorbs every one.

Hudson River Trading documented THP trade-offs in production low-latency environments across a two-part blog series, recommending madvise mode rather than system-wide THP as the safer operating mode. MongoDB versions 7.0 and earlier, Redis, and TiDB all recommend or require disabling THP before deployment for related reasons.

The correct approach for HFT is to disable THP system-wide and pre-allocate explicit huge pages at process startup. You get the TLB coverage benefit of 2MB pages without handing the kernel a background compaction thread to fire unpredictably during production hours. On NUMA systems, verify that your huge page allocation is NUMA-local to the cores running latency-sensitive threads — a remote allocation is invisible at p50 and will not show up until the tail.
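A minimal sketch of explicit pre-allocation on Linux follows. It assumes 2MB huge pages have already been reserved by the administrator (for example through the `vm.nr_hugepages` sysctl), and the function names are hypothetical. `MAP_HUGETLB` asks for pages from the reserved pool and `MAP_POPULATE` faults them in during the call, so the cost is paid at startup rather than on first touch in the hot path. NUMA placement is not handled here.

```cpp
#include <cassert>
#include <cstddef>
#include <sys/mman.h>

constexpr std::size_t kHugePageSize = std::size_t{2} << 20;  // assumes 2 MiB huge pages

// Round a request up to a whole number of huge pages.
constexpr std::size_t round_up_to_huge(std::size_t bytes) {
    return (bytes + kHugePageSize - 1) / kHugePageSize * kHugePageSize;
}

// Returns a huge-page-backed region, or nullptr if the kernel cannot supply one
// (for example when no huge pages are reserved).
inline void* alloc_explicit_huge(std::size_t bytes) {
    assert(bytes > 0);
#ifdef MAP_HUGETLB
    void* p = mmap(nullptr, round_up_to_huge(bytes), PROT_READ | PROT_WRITE,
                   MAP_PRIVATE | MAP_ANONYMOUS | MAP_HUGETLB | MAP_POPULATE, -1, 0);
    return p == MAP_FAILED ? nullptr : p;
#else
    return nullptr;  // platform without MAP_HUGETLB
#endif
}

// Release a region obtained from alloc_explicit_huge.
inline void free_explicit_huge(void* p, std::size_t bytes) {
    if (p != nullptr) munmap(p, round_up_to_huge(bytes));
}
```

A `nullptr` return means the reserved pool could not satisfy the request. Checking for it at startup makes the failure visible before trading begins, instead of the process quietly running on 4KB pages.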

Synchronization Topology: Match the Lock to the Pattern

The third root cause required identifying the actual access pattern before evaluating the synchronization primitive.

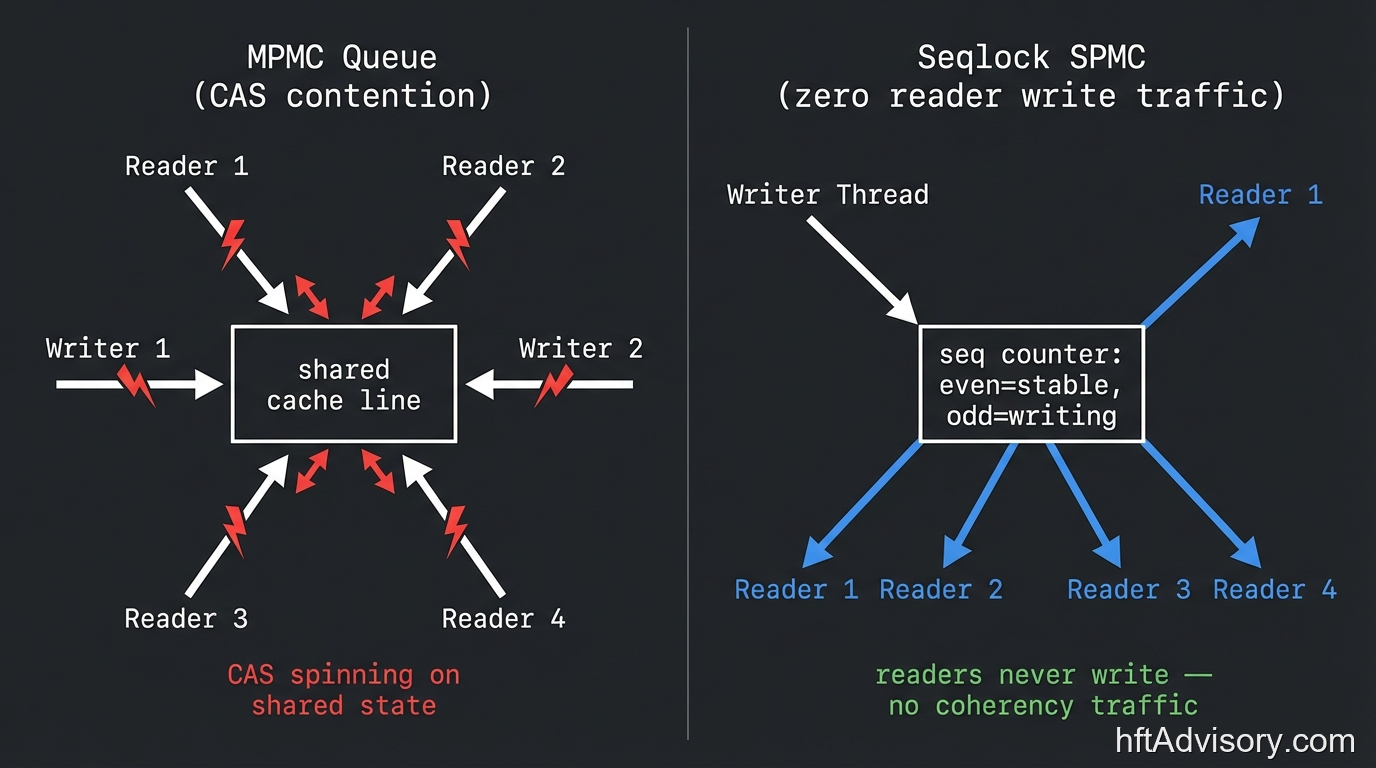

The desk was routing market data from feed to strategy threads through a lock-free queue. Lock-free queues are standard infrastructure. The problem was the topology: one writer, many readers. That is an SPMC pattern (single-producer, multi-consumer). The queue they were using was designed for multi-producer scenarios.

This matters because of how cache coherence works at the hardware level. The MESI protocol governs how cache lines propagate across cores. Every write, and every CAS (compare-and-swap) atomic used inside most multi-producer queues, needs the cache line in the Modified state on the writing core, which invalidates every other core's copy. Each reader must then refetch the line, pulling it back to Shared. In a typical multi-producer, multi-consumer queue the readers also claim slots with CAS on a shared index, so from the hardware's point of view they are writers too, and contention scales with reader count: each additional reader adds coherence traffic on the shared cache line.

Seqlocks eliminate the reader-side share of this traffic for the SPMC topology. The mechanism is simple: the writer increments a sequence counter, writes data, increments again. Readers check the sequence before and after reading; if both reads see the same even value, the read was clean. Critically, readers issue zero writes. No CAS. No reader-induced cache line invalidation. No write contention that grows with reader count.
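The writer and reader protocol described above can be sketched in C++ as follows. This is a minimal illustration for exactly one writer thread, not the desk's implementation; the `Quote` payload and the class name are hypothetical. The payload fields are stored as relaxed atomics so that a reader overlapping with the writer is not a data race under the C++ memory model, and fences order the payload accesses against the sequence counter.

```cpp
#include <atomic>
#include <cassert>
#include <cstdint>

struct Quote {  // hypothetical payload
    std::int64_t bid;
    std::int64_t ask;
};

class SeqLockQuote {
public:
    // Call from the single writer thread only.
    void store(const Quote& q) {
        const std::uint64_t s = seq_.load(std::memory_order_relaxed);
        seq_.store(s + 1, std::memory_order_relaxed);         // odd: write in progress
        std::atomic_thread_fence(std::memory_order_release);  // odd store ordered before payload stores
        bid_.store(q.bid, std::memory_order_relaxed);
        ask_.store(q.ask, std::memory_order_relaxed);
        seq_.store(s + 2, std::memory_order_release);         // even: payload published
    }

    // Safe from any number of reader threads; performs no writes to shared state.
    Quote load() const {
        for (;;) {
            const std::uint64_t before = seq_.load(std::memory_order_acquire);
            if (before & 1) continue;                          // writer mid-update, retry
            Quote q{bid_.load(std::memory_order_relaxed),
                    ask_.load(std::memory_order_relaxed)};
            std::atomic_thread_fence(std::memory_order_acquire);  // payload loads ordered before re-check
            const std::uint64_t after = seq_.load(std::memory_order_relaxed);
            if (before == after) return q;                     // no write overlapped the read
        }
    }

private:
    std::atomic<std::uint64_t> seq_{0};
    std::atomic<std::int64_t> bid_{0};
    std::atomic<std::int64_t> ask_{0};
};
```

Note that a seqlock hands readers the latest value rather than every value: a reader that falls behind skips intermediate updates instead of queueing them.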

In a read-heavy SPMC configuration, seqlocks have been documented at approximately two orders of magnitude faster than standard RwLock equivalents. The LMAX Disruptor architecture demonstrated what topology-matched synchronization looks like at scale: 52 nanoseconds mean latency on a Disruptor-pattern pipeline versus 32,757 nanoseconds on an ArrayBlockingQueue in an equivalent three-stage benchmark.

The qualifier matters and should not be lost: seqlocks are the correct choice for SPMC dissemination patterns specifically. They are not a universal synchronization upgrade. A multi-producer pattern still requires a synchronization primitive that handles write-side contention. Misapplying seqlocks to an MPMC topology introduces data races. Classify the topology first, then select the primitive.

How to Diagnose This Before It Ships

Standard profiling fails here, and understanding why is important before selecting your tooling.

Sampling profilers work by interrupting execution at intervals and recording the call stack. On HFT systems that use spin loops for latency-sensitive waiting, the profiler captures predominantly spin loop activity. The actual execution hot spots, which occupy microseconds per event, receive so few samples that they are statistically invisible. You end up with an accurate picture of where the CPU spends most of its wall-clock time (spinning) rather than where latency is generated.

Morgan Stanley’s Xpedite is a non-sampling profiler purpose-built for production HFT. It uses PMU (Performance Monitoring Unit) probes placed at specific code sites to capture exact cycle counts, cache and TLB miss rates, NUMA remote access events, and context switches per probe site. Because it is not sampling-based, it is not dominated by spin loop activity. It surfaces the actual execution cost at each instrumented point.
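To make the contrast with sampling concrete, here is a minimal sketch of probe-style measurement. It is not Xpedite's API and reads no PMU counters; `ScopedProbe` is a hypothetical class that only records wall-clock nanoseconds for one instrumented scope using `std::chrono::steady_clock`. The point it illustrates is that every execution of the instrumented site is measured, however much time the thread spends spinning elsewhere.

```cpp
#include <cassert>
#include <chrono>
#include <cstdint>
#include <vector>

// Records the elapsed time of the enclosing scope into a caller-owned sink.
class ScopedProbe {
public:
    explicit ScopedProbe(std::vector<std::uint64_t>& sink)
        : sink_(sink), start_(std::chrono::steady_clock::now()) {}

    ~ScopedProbe() {
        const auto elapsed = std::chrono::steady_clock::now() - start_;
        const auto ns =
            std::chrono::duration_cast<std::chrono::nanoseconds>(elapsed).count();
        sink_.push_back(static_cast<std::uint64_t>(ns));  // one sample per execution
    }

    ScopedProbe(const ScopedProbe&) = delete;
    ScopedProbe& operator=(const ScopedProbe&) = delete;

private:
    std::vector<std::uint64_t>& sink_;
    std::chrono::steady_clock::time_point start_;
};
```

In use, the sink would be reserved up front (`samples.reserve(n)`) so the probe does not allocate on the hot path, and a `ScopedProbe probe(samples);` line would open the scope being measured.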

Intel VTune’s Top-down Microarchitecture Analysis (TMA) provides a complementary classification: pipeline slots are categorized into Frontend Bound, Backend Bound, Bad Speculation, and Retiring. This directly surfaces whether a regression is coming from branch misprediction (Bad Speculation), memory stalls including TLB misses (Backend Bound, Memory Bound), or dependency chain serialization (Backend Bound, Core Bound). For the three failure modes above, VTune TMA would have fingerprinted all three.

HFTPerformance (open-source, 2024) provides a benchmarking framework designed to reproduce market-event burst patterns rather than steady-state load. This matters because THP compaction events and SPMC contention under reader load are burst-driven phenomena. A steady-state benchmark that never fires khugepaged compaction during the run will show clean p50 and miss the p99 problem entirely.

The diagnostic decision tree is straightforward: p50 stable, p99 degraded. That pattern points to a compound low-frequency event. The correct response is to profile under production-representative burst load using a non-sampling profiler, not to re-examine steady-state benchmarks.

The Pre-Deployment Audit Checklist

This is the framework my team applies before any low-latency C++ system goes into production on a live desk.

Branch prediction and CMOV:

- Measure branch prediction accuracy on every hot path before any CMOV or branchless rewrite. The threshold is approximately 75%: above it, keep the branch.

- Do not apply CMOV to a hot path without measured evidence that the branch prediction rate justifies it. The textbook recommendation is correct for unpredictable branches — verify that yours qualify.

- Document the measured accuracy alongside the optimization decision. The next engineer to review the code needs to know what context produced that choice.

Huge pages:

- The operating mode for a latency-sensitive process is explicit pre-allocation at startup, not the kernel’s background compaction default.

- On NUMA systems, verify that huge page allocation is NUMA-local to the cores running latency-sensitive threads. A remote allocation is invisible at p50 and will not surface until the tail.

- Treat THP as disabled and explicit allocation as confirmed before declaring the memory subsystem production-ready.
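The THP item can be checked mechanically at startup. On Linux the active mode is exposed in `/sys/kernel/mm/transparent_hugepage/enabled` as a line such as `always [madvise] never`, with the active mode in brackets. The sketch below parses that line; the function names are hypothetical, and what a process does with the answer (log, refuse to start) is left to the desk.

```cpp
#include <cassert>
#include <fstream>
#include <string>

// Extracts the bracketed (active) token from e.g. "always [madvise] never".
// Returns an empty string if the line has no bracketed token.
inline std::string active_thp_mode(const std::string& line) {
    const std::string::size_type open = line.find('[');
    if (open == std::string::npos) return "";
    const std::string::size_type close = line.find(']', open);
    if (close == std::string::npos) return "";
    return line.substr(open + 1, close - open - 1);
}

// Reads the active mode from sysfs; empty string if the file is unavailable.
inline std::string read_thp_mode(
    const char* path = "/sys/kernel/mm/transparent_hugepage/enabled") {
    std::ifstream file(path);
    std::string line;
    if (!file || !std::getline(file, line)) return "";
    return active_thp_mode(line);
}
```

A startup audit could then flag the process when `read_thp_mode()` returns `"always"`, since that is the mode in which background coalescing applies to every eligible mapping.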

Synchronization topology:

- Classify every queue or shared-memory structure as SPMC, MPMC, SPSC, or MPSC before selecting a primitive.

- SPMC dissemination patterns (market data feed to strategy threads) warrant a seqlock evaluation. A generic multi-producer queue carries multi-producer overhead even when used in SPMC patterns.

- Document the topology classification in the code alongside the data structure selection.

p99 monitoring:

- Instrument p99 as a first-class metric in production. p50 alone will not surface compound low-frequency events.

- A p99/p50 ratio above 3x warrants investigation before it becomes a production incident. Do not wait for visible customer impact.
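The ratio check above is cheap to automate. This is a minimal sketch using the nearest-rank percentile definition on a batch of recorded latencies; the function names are hypothetical, and a production system would more likely use a streaming histogram than sort-style selection on a vector.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Nearest-rank percentile for pct in 1..100. Takes the samples by value
// because nth_element reorders them.
inline std::uint64_t percentile(std::vector<std::uint64_t> samples, unsigned pct) {
    assert(pct >= 1 && pct <= 100);
    if (samples.empty()) return 0;
    const std::size_t rank = (samples.size() * pct + 99) / 100;  // ceil(n * pct / 100)
    std::nth_element(samples.begin(), samples.begin() + (rank - 1), samples.end());
    return samples[rank - 1];
}

// True when p99/p50 is above the limit (3x by default, per the checklist).
inline bool tail_ratio_exceeds(const std::vector<std::uint64_t>& samples,
                               double limit = 3.0) {
    const std::uint64_t p50 = percentile(samples, 50);
    const std::uint64_t p99 = percentile(samples, 99);
    return p50 > 0 && static_cast<double>(p99) / static_cast<double>(p50) > limit;
}
```

For example, 98 samples at 100 ns plus 2 samples at 1,000 ns give a p50 of 100 ns and a p99 of 1,000 ns, a 10x ratio that the check flags even though the median is untouched.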

Profiling discipline:

- Use a non-sampling profiler for production latency investigation on HFT systems. Sampling profilers are dominated by spin loop activity and miss the execution hot spots.

- Run benchmarks under production-representative burst load, not steady-state. The failure modes described above are burst-driven — they are invisible to steady-state benchmarking.

- Use Intel VTune TMA classification to fingerprint the category of stall before attempting a fix.

Conclusion

A 23% p99 regression on a $500M daily notional desk is not an engineering metric in isolation. At that flow size, tail latency governs adverse selection exposure in volatile regimes — the moments when p99 events cluster are the moments when market conditions are moving fastest. In my experience advising desks at this scale, the drag from unresolved tail latency is consistently in the range of hundreds of thousands of dollars annually before compounding effects. That is the number that belongs in a pre-deployment audit alongside the cycle count.

The failure on this desk was not an engineering mistake. The team applied recognized, peer-reviewed optimization techniques. The failure was context-mismatch: each technique was correct for the conditions it was designed for, and wrong for the conditions they actually had.

CMOV is correct for unpredictable branches. THP system-wide is not explicit huge pages. Generic multi-producer queues carry multi-producer overhead even when used in SPMC patterns. None of these are implementation bugs. They are architectural assumptions that compound in the tail.

The compound nature of the failure is what made it invisible until p99 degraded 23%. Any one of these in isolation might have produced a measurable but explainable result. All three together created a tail where every rare event from every mechanism stacked into the same latency percentile.

The standard for production low-latency C++ is: p99/p50 ratio below 3x, measured under production-representative burst load, with a non-sampling profiler attached. If your pre-deployment process cannot produce that measurement, it may be time to audit the assumptions underneath your optimization decisions before they compound in production.

Originally published as a LinkedIn post on March 24, 2026.

Ariel Silahian has 20+ years of experience architecting high-frequency trading systems for institutional desks. Founder of VisualHFT.


HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.
