Table of Contents

- Where Backend Experience Stops Working

- The Four Constraints Most Teams Learn Too Late

- The Sequencing Problem Is the Real Budget Leak

- What Timeline Compression Actually Looks Like

- The Counterarguments Worth Taking Seriously

- A Practical Diagnostic Framework for Engineering Leaders

- Why This Gets Harder Every Year

- Capital Efficiency, Not Just Engineering Quality

Put fifteen strong backend engineers with no prior HFT experience on a sub-millisecond trading system build, and you will watch twelve to eighteen months disappear before anyone realizes the architecture is wrong. The failure is invisible to code review. It becomes obvious only when production latency exposes it six months later, with the market already open.

This is the expensive invisible problem: strong engineers forced to discover high-frequency trading constraints in the wrong order, with production as the only teacher.

The math is simple. Fifteen senior engineers at a conservative $250,000 fully loaded cost, including salary, benefits, infrastructure, and recruiting overhead, burn $3.75 million annually. If your roadmap shows twelve months minimum before the first production-grade low-latency system ships, the budget problem is already in the room. Every quarter of delay costs $937,500 in loaded engineering time before you count the real damage: missed market windows, architecture rewrites, or shipping the wrong benchmark into production and discovering it only after capital starts leaking.

Where Backend Experience Stops Working {#where-backend-experience-stops-working}

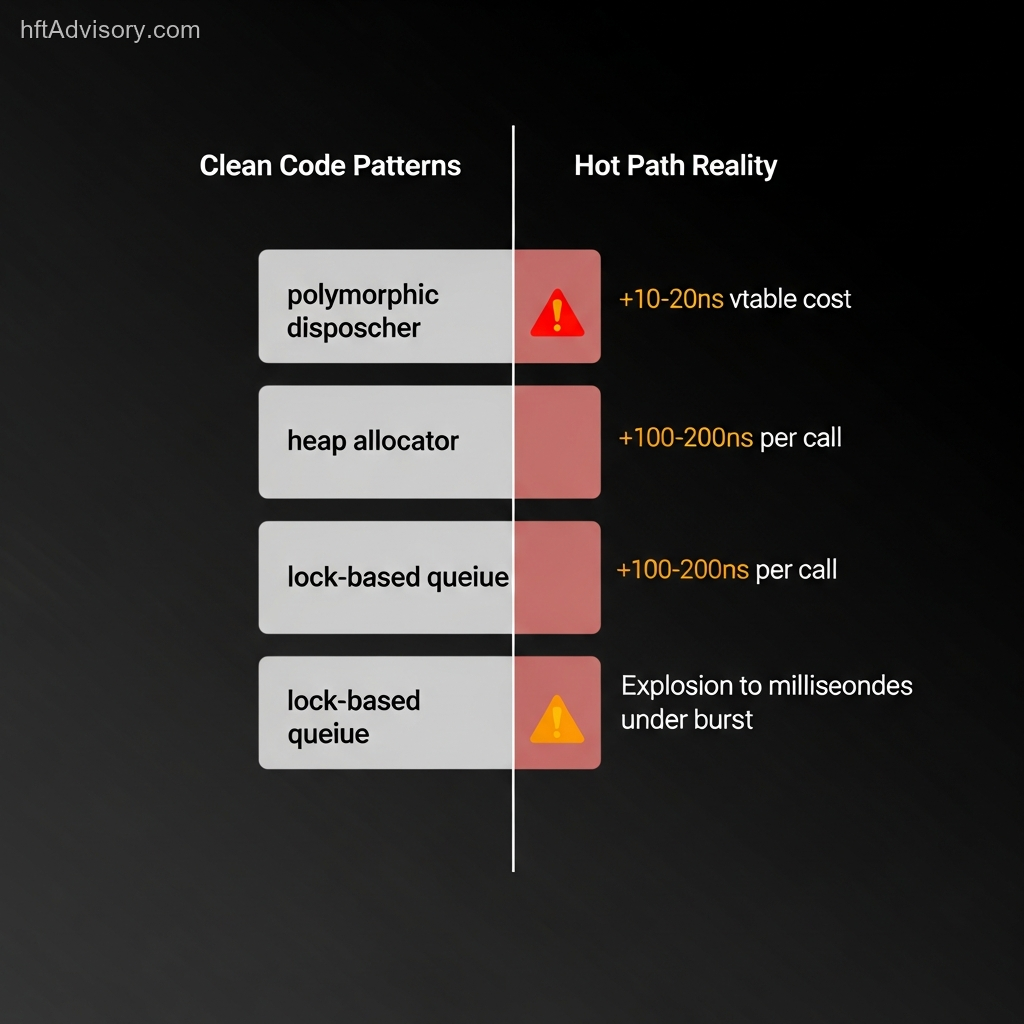

Strong software engineers carry hard-won instincts about what good code looks like. Clean abstractions. Separation of concerns. Testable components. Maintainable architecture. These instincts are correct in the systems they came from. They become expensive traps in HFT.

Low-latency trading punishes the habits general software engineering rewards.

A clean polymorphic message dispatcher with virtual dispatch looks architecturally sound in code review. It passes unit tests. It handles the happy path well. Then it ships to production, and each vtable indirection can add ten to twenty nanoseconds per call, typically when the indirect branch mispredicts or the vtable misses cache. On a messaging engine processing 100,000 messages per second, that accumulates to one to two milliseconds of pure dispatch overhead in every second of hot-path time. Per message, a single virtual call is small, but a layered design rarely makes only one, and every added indirection also blocks inlining. At target latencies below 500 microseconds, you are spending latency budget on abstraction elegance.
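One common alternative to the virtual dispatcher described above is to resolve the handler type at compile time. The following is a minimal sketch under stated assumptions, not a benchmarked implementation: `MsgType`, `Message`, `dispatch`, and `CountingHandler` are hypothetical names introduced for illustration, and whether this actually beats virtual dispatch on a given system has to be measured.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical message kinds; a real feed handler defines its own.
enum class MsgType : std::uint8_t { AddOrder, CancelOrder, Trade };

struct Message {
    MsgType type;
    std::uint64_t order_id;
    std::int64_t price_ticks;
    std::uint32_t quantity;
};

// The handler type is a template parameter, so every call below is resolved
// at compile time and is eligible for inlining. No vtable lookup is involved.
template <typename Handler>
inline void dispatch(const Message& m, Handler& h) {
    switch (m.type) {
        case MsgType::AddOrder:    h.on_add(m);    return;
        case MsgType::CancelOrder: h.on_cancel(m); return;
        case MsgType::Trade:       h.on_trade(m);  return;
    }
    assert(false && "unknown message type");
}

// Illustrative handler that only counts what it sees.
struct CountingHandler {
    std::uint64_t adds = 0;
    std::uint64_t cancels = 0;
    std::uint64_t trades = 0;
    void on_add(const Message&)    { ++adds; }
    void on_cancel(const Message&) { ++cancels; }
    void on_trade(const Message&)  { ++trades; }
};
```

The trade-off is that the set of message types is closed at compile time, which is usually acceptable for a fixed exchange protocol and less so for plugin-style designs.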

One extra heap allocation in the hot path matters. At modern CPU clock speeds, a dynamic allocation can consume 100 to 200 nanoseconds depending on the allocator. This looks trivial until you realize you are operating in an environment where 200 nanoseconds is a measurable competitive disadvantage. Exchanges reward speed with better queue position and fill quality. Every microsecond of adverse selection costs basis points on flow. Your competitors are not allocating in the hot path. They are using arena allocators and memory pools provisioned at startup.
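To make "memory pools provisioned at startup" concrete, here is a minimal single-threaded sketch of a fixed-capacity object pool. `FixedPool` and `Order` are hypothetical names, and the design is an assumption for illustration: all memory is reserved in the constructor, so `acquire` and `release` never call the general-purpose allocator.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <memory>
#include <new>
#include <utility>
#include <vector>

// Hypothetical hot-path object.
struct Order {
    std::uint64_t id;
    std::uint32_t quantity;
};

// Fixed-capacity pool. Not thread-safe; objects still outstanding when the
// pool is destroyed do not have their destructors run.
template <typename T>
class FixedPool {
    struct Slot { alignas(T) unsigned char bytes[sizeof(T)]; };

public:
    explicit FixedPool(std::size_t capacity) : slots_(new Slot[capacity]) {
        free_.reserve(capacity);  // the free list never grows past this
        for (std::size_t i = 0; i < capacity; ++i) free_.push_back(slots_[i].bytes);
    }
    FixedPool(const FixedPool&) = delete;
    FixedPool& operator=(const FixedPool&) = delete;

    // Returns nullptr when the pool is exhausted instead of falling back to the heap.
    template <typename... Args>
    T* acquire(Args&&... args) {
        if (free_.empty()) return nullptr;
        void* mem = free_.back();
        free_.pop_back();
        return ::new (mem) T(std::forward<Args>(args)...);
    }

    // Destroys the object and returns its slot to the free list.
    void release(T* obj) {
        assert(obj != nullptr);
        obj->~T();
        free_.push_back(obj);
    }

    std::size_t available() const { return free_.size(); }

private:
    std::unique_ptr<Slot[]> slots_;
    std::vector<void*> free_;
};
```

Returning `nullptr` on exhaustion is a deliberate choice in this sketch: it forces the caller to size the pool for the worst case rather than silently allocating during a burst.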

Queue design is another trap. A lock-based FIFO queue handling 1,000 messages per second in your test environment feels fine. It is clean, it is correct, and it passes load tests at steady-state volume. Then the market opens. Burst traffic hits 50,000 messages per second in the first seconds. Lock contention explodes. Latency degrades from 10 microseconds to 10 milliseconds. The queue folds. The failure mode was invisible until production load arrived because test environments rarely simulate the open correctly.
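One widely used replacement for a lock-based FIFO between exactly one producer thread and one consumer thread is a bounded ring buffer with atomic indices. The sketch below assumes a single producer, a single consumer, a power-of-two capacity, and a 64-byte cache line; `SpscRing` is a hypothetical name, and what to do when the ring is full (drop, conflate, or stall) is left to the caller.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <vector>

// Bounded single-producer / single-consumer ring buffer.
// T must be default-constructible and copy-assignable.
template <typename T>
class SpscRing {
public:
    explicit SpscRing(std::size_t capacity_pow2)
        : buf_(capacity_pow2), mask_(capacity_pow2 - 1) {
        assert(capacity_pow2 >= 2 && (capacity_pow2 & (capacity_pow2 - 1)) == 0);
    }

    // Producer thread only. Returns false when the ring is full.
    bool try_push(const T& v) {
        const std::size_t head = head_.load(std::memory_order_relaxed);
        const std::size_t tail = tail_.load(std::memory_order_acquire);
        if (head - tail == buf_.size()) return false;
        buf_[head & mask_] = v;
        head_.store(head + 1, std::memory_order_release);  // publish the slot
        return true;
    }

    // Consumer thread only. Returns false when the ring is empty.
    bool try_pop(T& out) {
        const std::size_t tail = tail_.load(std::memory_order_relaxed);
        const std::size_t head = head_.load(std::memory_order_acquire);
        if (tail == head) return false;
        out = buf_[tail & mask_];
        tail_.store(tail + 1, std::memory_order_release);  // free the slot
        return true;
    }

private:
    std::vector<T> buf_;
    std::size_t mask_;
    // Indices sit on separate (assumed 64-byte) cache lines so the producer
    // and the consumer do not invalidate each other's line on every update.
    alignas(64) std::atomic<std::size_t> head_{0};
    alignas(64) std::atomic<std::size_t> tail_{0};
};
```

Because the ring is bounded, burst traffic shows up as explicit `false` returns from `try_push` rather than as unbounded lock contention, which makes the overload policy a visible design decision.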

The Four Constraints Most Teams Learn Too Late {#the-four-constraints-most-teams-learn-too-late}

General software engineering does not prepare teams for the constraint hierarchy that governs trading system architecture. These are not advanced ideas. They are foundational constraints that dictate every architectural decision, but they sit outside conventional backend training.

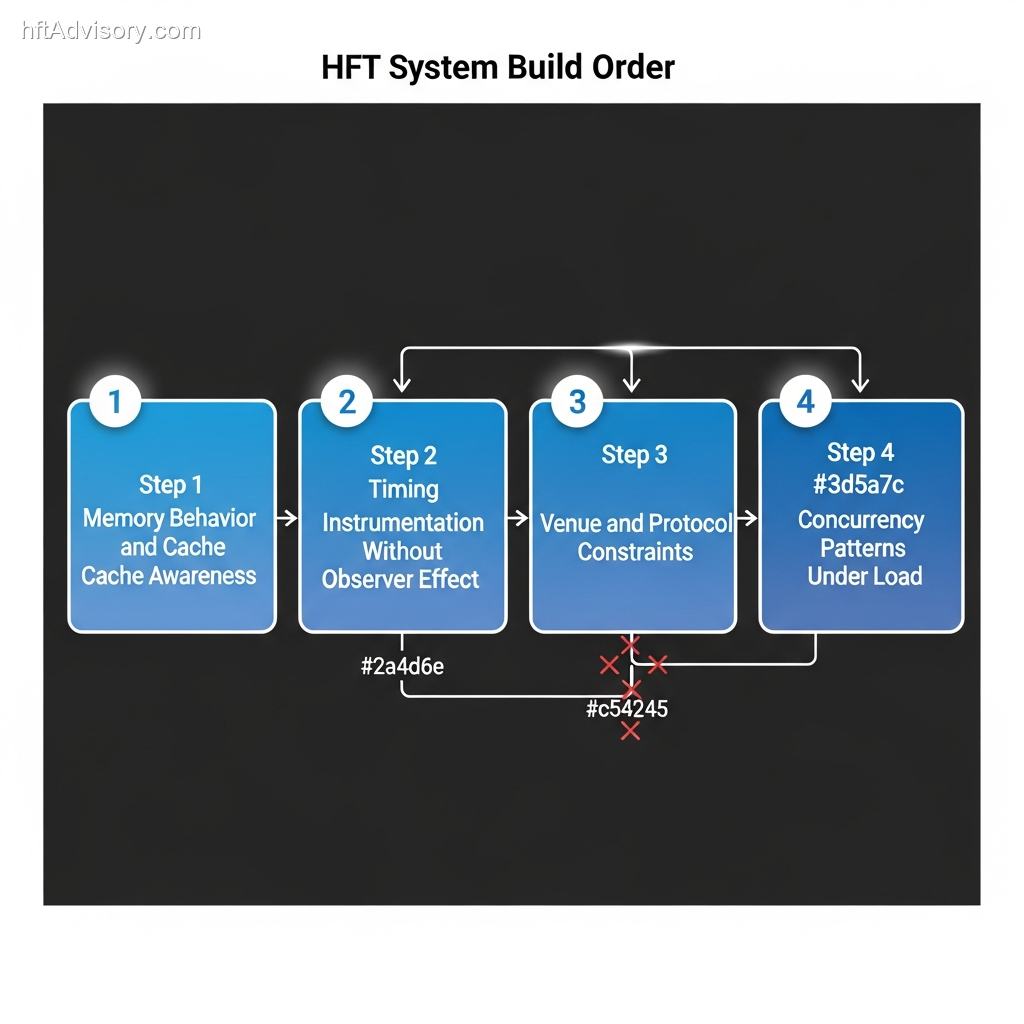

1. Memory Behavior Dominates Architectural Elegance

Modern CPUs are fast. Memory is slow. An L1 cache hit takes roughly four cycles. A miss to main memory can cost more than 200 cycles. That difference is not trivia. It is the performance envelope.

Design that ignores the cache, which is perfectly reasonable in most general software, becomes a liability in low-latency systems. You need cache-aware optimization. Data structures laid out for cache line alignment. False sharing eliminated. Memory access patterns predictable enough that hardware prefetchers work in your favor.

Teams without this background build object-oriented class hierarchies that scatter related data across memory. The code looks clean. The cache behavior is terrible. The gap is invisible in code review and obvious only in production when competitors consistently beat you to the queue by a margin you cannot explain.
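As an illustration of the layout problem, the sketch below splits hot fields (price, quantity) from cold fields (tags, timestamps) into separate contiguous arrays, a structure-of-arrays layout. All names (`ColdFields`, `OrdersSoA`, `quantity_at_or_above`) are hypothetical, and the example is a simplified assumption of what a hot-path scan might look like, not a real order book.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Rarely-read data, kept out of the arrays the hot loop walks.
struct ColdFields {
    char client_tag[40];
    std::uint64_t created_ns;
};

// Structure-of-arrays: index i in each vector refers to the same order.
struct OrdersSoA {
    std::vector<std::int64_t> price_ticks;   // hot
    std::vector<std::uint32_t> quantity;     // hot
    std::vector<ColdFields> cold;            // cold

    // Reserve up front so add() does not reallocate during trading hours.
    void reserve(std::size_t n) {
        price_ticks.reserve(n);
        quantity.reserve(n);
        cold.reserve(n);
    }

    void add(std::int64_t price, std::uint32_t qty, const ColdFields& c) {
        price_ticks.push_back(price);
        quantity.push_back(qty);
        cold.push_back(c);
    }

    // The scan touches only the two dense hot arrays, so each cache line
    // fetched holds several useful prices or quantities instead of one
    // order plus its cold payload.
    std::uint64_t quantity_at_or_above(std::int64_t limit) const {
        assert(price_ticks.size() == quantity.size());
        std::uint64_t sum = 0;
        for (std::size_t i = 0; i < price_ticks.size(); ++i) {
            if (price_ticks[i] >= limit) sum += quantity[i];
        }
        return sum;
    }
};
```

With an array-of-structures layout holding the same fields in one struct, each order would occupy most of a typical 64-byte cache line, and the same scan would pull the cold bytes through the cache along with the hot ones.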

2. Determinism Beats Throughput Averages

Backend systems optimize for throughput and average-case performance. High-frequency trading optimizes for tail latency.

The 99th percentile matters. The 99.9th percentile matters more. A garbage collector tuned for throughput might pause for five to fifty milliseconds once every few minutes. That is acceptable for a web service. It is catastrophic for a trading system when the pause lands during a price dislocation event.
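A small worked example shows why averages hide the problem. The sketch below computes nearest-rank percentiles offline from recorded samples; `percentile_ns` and `mean_ns` are hypothetical helper names, and the sample data in the note that follows is invented for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Nearest-rank percentile. Takes the samples by value because
// std::nth_element reorders its input.
inline std::uint64_t percentile_ns(std::vector<std::uint64_t> samples, double pct) {
    assert(!samples.empty() && pct > 0.0 && pct <= 100.0);
    const std::size_t n = samples.size();
    std::size_t rank = static_cast<std::size_t>(
        std::ceil(pct / 100.0 * static_cast<double>(n)));
    if (rank < 1) rank = 1;
    if (rank > n) rank = n;
    std::nth_element(samples.begin(), samples.begin() + (rank - 1), samples.end());
    return samples[rank - 1];
}

// Arithmetic mean of the samples, in nanoseconds.
inline double mean_ns(const std::vector<std::uint64_t>& samples) {
    assert(!samples.empty());
    double sum = 0.0;
    for (std::uint64_t s : samples) sum += static_cast<double>(s);
    return sum / static_cast<double>(samples.size());
}
```

Take 1,000 samples where 998 complete in 10 microseconds and 2 stall for 5 milliseconds. The mean is about 20 microseconds, and both the median and the 99th percentile still read 10 microseconds. Only the 99.9th percentile reports the 5 millisecond stall.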

Deterministic performance engineering means eliminating jitter and non-determinism from the execution path. CPU pinning. Memory pre-allocation. Lock-free data structures where appropriate. Kernel-bypass networking. These are not optional refinements. They are baseline requirements if low latency is part of the strategy.
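As one example of the items above, CPU pinning on Linux can be sketched with the glibc `sched_setaffinity` call. This is a Linux-only illustration with hypothetical helper names (`first_allowed_cpu`, `pin_current_thread`). It assumes a glibc toolchain where the `CPU_*` macros are available, and it does not cover core isolation at the kernel level, which pinning alone does not provide.

```cpp
#include <sched.h>

#include <cassert>

// Returns the lowest CPU id the calling thread is currently allowed to run
// on, or -1 if the affinity mask cannot be read.
inline int first_allowed_cpu() {
    cpu_set_t set;
    CPU_ZERO(&set);
    if (sched_getaffinity(0, sizeof(set), &set) != 0) return -1;
    for (int cpu = 0; cpu < CPU_SETSIZE; ++cpu) {
        if (CPU_ISSET(cpu, &set)) return cpu;
    }
    return -1;
}

// Restricts the calling thread to a single CPU. Returns false if the kernel
// rejects the request (for example, the CPU is outside the allowed cpuset).
inline bool pin_current_thread(int cpu) {
    assert(cpu >= 0 && cpu < CPU_SETSIZE);
    cpu_set_t set;
    CPU_ZERO(&set);
    CPU_SET(cpu, &set);
    return sched_setaffinity(0, sizeof(set), &set) == 0;
}
```

Pinning keeps the thread on one core, but other threads can still be scheduled onto that core unless it is also isolated from the general scheduler, so the two measures are normally applied together.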

3. Venue Reality Defeats Generic Patterns

Exchanges and liquidity venues are not generic order routers. They run different protocols, enforce different rate limits, expose different order types, and have different session rules. Some venues respond to aggressive IOC orders differently than passive limit orders. Some disconnect on throttling. Some force you into data and execution patterns that generic designs never anticipated.

Teams new to electronic trading often build a generic order router abstraction that handles all venues uniformly. It is clean and maintainable in principle. Then production reveals venue-specific behavior that breaks the abstraction. The special cases pile up and the elegance that justified the design disappears.

Venue-first architecture designs around exchange constraints first, not around imported software patterns from unrelated domains.
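A venue-first design can start from an explicit description of each venue's constraints rather than from a uniform router interface. The sketch below is purely illustrative: `VenueProfile`, `VenueGate`, the order-type flags, and the fixed one-second throttle window are assumptions, and real venues define their own limits and windowing rules.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical order-type flags; each value is one bit.
enum class OrderType : std::uint8_t { Limit = 1, IOC = 2, FOK = 4 };

constexpr std::uint8_t bit(OrderType t) { return static_cast<std::uint8_t>(t); }

// Per-venue constraints captured as data, mapped before any router is written.
struct VenueProfile {
    const char* name;
    std::uint32_t max_orders_per_second;
    std::uint8_t supported_order_types;  // bitmask of OrderType flags
    bool disconnects_on_throttle;
};

// Pre-send check that enforces one venue's profile.
class VenueGate {
public:
    explicit VenueGate(const VenueProfile& profile) : profile_(profile) {}

    // now_ns is supplied by the caller so the gate itself reads no clock.
    // Uses a simple fixed one-second window (an assumption of this sketch).
    bool allow(OrderType type, std::uint64_t now_ns) {
        if ((profile_.supported_order_types & bit(type)) == 0) return false;
        const std::uint64_t window = now_ns / 1000000000ULL;
        if (window != window_) {
            window_ = window;
            sent_in_window_ = 0;
        }
        if (sent_in_window_ >= profile_.max_orders_per_second) return false;
        ++sent_in_window_;
        return true;
    }

private:
    VenueProfile profile_;
    std::uint64_t window_ = 0;
    std::uint32_t sent_in_window_ = 0;
};
```

Keeping the constraints in a per-venue profile means a venue that disconnects on throttling can be given a more conservative limit without adding special cases to shared routing code.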

4. Concurrency Failure Modes Only Show Up Under Real Load

Concurrency in backend systems usually means handling many simultaneous users or requests. Concurrency in HFT means handling market events arriving faster than the system can process them while preserving strict ordering guarantees and latency bounds.

The failure modes are different. Queue saturation under burst load. Priority inversion when low-priority work blocks high-priority order flow. Cache line bouncing when multiple cores update shared state. Scheduler delays when the kernel preempts a latency-critical thread.

These failure modes often do not appear in lab conditions. They appear at the market open when message rates spike and suddenly your queue is thrashing, your thread priorities are ineffective, and latency is spiking into the millisecond range because you did not isolate the right cores.
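Cache line bouncing, one of the failure modes listed above, is often addressed by giving each core its own cache line for frequently updated state. The following sketch assumes a 64-byte cache line (typical for x86-64, but an assumption here) and uses hypothetical names `PaddedCounter` and `PerCoreStats`.

```cpp
#include <array>
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>

constexpr std::size_t kCacheLine = 64;  // assumed cache line size in bytes

// Each counter is aligned and padded to a full cache line, so two cores
// updating neighbouring counters never write to the same line.
struct alignas(kCacheLine) PaddedCounter {
    std::atomic<std::uint64_t> value{0};
};
static_assert(sizeof(PaddedCounter) == kCacheLine, "one counter per cache line");

template <std::size_t Cores>
struct PerCoreStats {
    std::array<PaddedCounter, Cores> counters;

    // Called by the thread pinned to `core`; touches only that core's line.
    void increment(std::size_t core) noexcept {
        assert(core < Cores);
        counters[core].value.fetch_add(1, std::memory_order_relaxed);
    }

    // Called off the hot path to aggregate the per-core values.
    std::uint64_t total() const noexcept {
        std::uint64_t sum = 0;
        for (const auto& c : counters) sum += c.value.load(std::memory_order_relaxed);
        return sum;
    }
};
```

The cost is memory: four counters occupy 256 bytes instead of 32. For state updated on every message, that is usually a cheap trade against cross-core invalidation traffic.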

The Sequencing Problem Is the Real Budget Leak {#the-sequencing-problem-is-the-real-budget-leak}

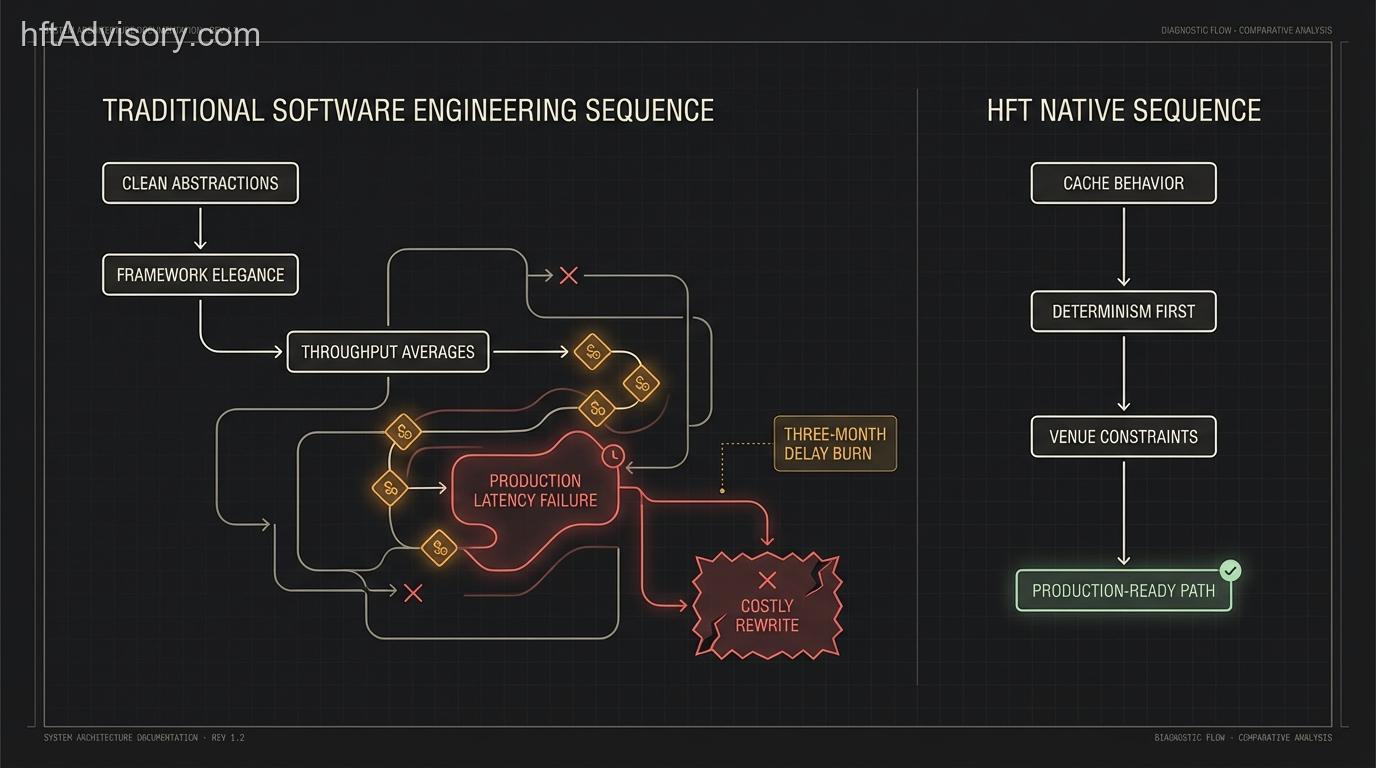

The constraint hierarchy is not just a list. It is a dependency graph. Learning these constraints in the wrong order forces rework.

Teams that start with concurrency optimization before understanding cache behavior build lock-free data structures that still thrash cache lines. Teams that start with framework selection before understanding venue constraints build abstractions that cannot survive real market behavior. Teams that optimize average latency before understanding determinism ship systems that look fast in benchmarks and fail at the tail.

Each rebuild cycle costs months of engineering time. More importantly, it costs market position.

The learning sequence that works is straightforward:

- Memory behavior and cache awareness first.

- Timing instrumentation without observer effect second.

- Venue and protocol constraints third.

- Concurrency patterns under realistic production load last.

This order matters because each layer depends on the one before it. Reversing the sequence guarantees expensive rediscovery.
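For the second step in the sequence, one way to limit observer effect is to record latencies into a fixed, preallocated histogram so the hot path performs no allocation, locking, or I/O. The sketch below is an assumption-laden illustration: `LatencyHistogram` is a hypothetical name, buckets are powers of two (so resolution is coarse), the timestamp source is not shown, and the class is intended for a single recording thread.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

class LatencyHistogram {
public:
    static constexpr std::size_t kBuckets = 64;

    // Hot-path call: a short bit loop plus two increments, no allocation.
    // A production version might replace the loop with a count-leading-zeros
    // intrinsic.
    void record(std::uint64_t ns) noexcept {
        ++counts_[bucket_of(ns)];
        ++total_;
    }

    // Off-path call: exclusive upper bound, in nanoseconds, of the bucket
    // that contains the requested percentile.
    std::uint64_t percentile_upper_bound_ns(double pct) const noexcept {
        assert(total_ > 0 && pct > 0.0 && pct <= 100.0);
        const double want = pct / 100.0 * static_cast<double>(total_);
        std::uint64_t seen = 0;
        for (std::size_t i = 0; i < kBuckets; ++i) {
            seen += counts_[i];
            if (static_cast<double>(seen) >= want) {
                return i + 1 < kBuckets ? (std::uint64_t{1} << (i + 1)) : UINT64_MAX;
            }
        }
        return UINT64_MAX;
    }

    std::uint64_t total() const noexcept { return total_; }

private:
    // Bucket index is floor(log2(ns)); 0 ns and 1 ns both land in bucket 0.
    static std::size_t bucket_of(std::uint64_t ns) noexcept {
        std::size_t b = 0;
        while (ns >>= 1) ++b;
        return b;
    }

    std::array<std::uint64_t, kBuckets> counts_{};
    std::uint64_t total_ = 0;
};
```

Reading percentiles happens outside the measured path, for example from a monitoring thread after the session, so the act of reporting does not distort the numbers being reported.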

What Timeline Compression Actually Looks Like {#what-timeline-compression-actually-looks-like}

In one advisory engagement with a Chicago-based quantitative trading firm, the team had fifteen capable backend engineers, strong delivery discipline, and no HFT production experience. Their initial roadmap projected roughly eighteen months to a production-ready low-latency execution system.

The expensive path was visible from the roadmap structure. They planned to start with framework selection and generic architecture, then iterate based on production feedback. That is the self-funded education model.

We compressed that timeline to approximately seven months by front-loading failure mode mapping. Instead of discovering cache thrashing in production six months in, the team identified memory layout issues in the first month through profiling. Instead of rebuilding the order router abstraction after venue-specific quirks broke it, they designed venue-first from the start. Instead of discovering concurrency failure modes at the open, they simulated burst-load scenarios with the correct instrumentation already in place.
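A burst-load scenario does not need a full market replay to be informative. The sketch below is a deliberately simple backlog model, not the method used in the engagement: arrivals are given per millisecond, the system drains a fixed number of messages per millisecond, and `simulate_backlog` and `BurstResult` are hypothetical names. The service rate used in the note that follows is an assumed figure.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <vector>

struct BurstResult {
    std::uint64_t max_backlog;  // deepest queue observed, in messages
    double worst_wait_ms;       // time needed to drain that backlog
};

// Discrete-time model: each step is one millisecond. Messages arrive,
// then up to service_per_ms of them are processed.
inline BurstResult simulate_backlog(const std::vector<std::uint32_t>& arrivals_per_ms,
                                    std::uint32_t service_per_ms) {
    assert(service_per_ms > 0);
    std::uint64_t backlog = 0;
    std::uint64_t max_backlog = 0;
    for (std::uint32_t arrivals : arrivals_per_ms) {
        backlog += arrivals;
        backlog -= std::min<std::uint64_t>(backlog, service_per_ms);
        max_backlog = std::max(max_backlog, backlog);
    }
    return {max_backlog, static_cast<double>(max_backlog) / service_per_ms};
}
```

With an assumed capacity of 20 messages per millisecond, a steady 1,000 messages per second never queues. One second at 50,000 messages per second leaves 30,000 messages waiting and a worst-case wait of 1.5 seconds, which is the kind of result a steady-state load test never reveals.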

Eleven months of timeline compression on a $3.75 million annual engineering base represents roughly $3.44 million in timeline value before counting opportunity cost. Even if you recover only half of a twelve-month delay, six months of compression still removes about $1.875 million in avoidable engineering drag.
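The arithmetic behind these figures is simple enough to keep as a reusable check. The helper names below (`annual_burn_usd`, `delay_cost_usd`) are hypothetical, and the inputs are the article's own assumptions: fifteen engineers at a $250,000 fully loaded cost.

```cpp
#include <cassert>

// Annual loaded engineering cost for a team, in US dollars.
constexpr double annual_burn_usd(int engineers, double loaded_cost_usd) {
    return engineers * loaded_cost_usd;
}

// Loaded engineering cost of a delay (or value of a compression) of
// `months` months, given an annual burn.
constexpr double delay_cost_usd(double annual_burn, int months) {
    return annual_burn * months / 12.0;
}
```

For the numbers in this article, `annual_burn_usd(15, 250000.0)` is 3,750,000. Eleven months of that burn is 3,437,500, six months is 1,875,000, and one quarter is 937,500.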

That is before counting the harder costs: a slow first production release, lost market windows, or the reputational damage of failing to deliver edge on schedule.

The Counterarguments Worth Taking Seriously {#the-counterarguments-worth-taking-seriously}

The obvious objection is simple: why not just hire experienced HFT engineers instead of training backend engineers?

In theory, that is ideal. In practice, the talent pool is small, geographically concentrated, and heavily competed away by firms that can pay top-of-market compensation. If you could hire a full senior HFT team quickly, you would likely have done it already. The real operational question is what to do with the team you can actually build.

Training strong backend engineers with the right guidance is usually faster than waiting eighteen to twenty-four months for enough proven HFT hires to appear.

The second objection is hardware: just use FPGA acceleration to compensate for software limitations.

That gets the causality backward. FPGAs solve latency, not architecture ignorance. A badly designed system implemented in hardware is still a badly designed system, just with a more expensive debugging loop. Hardware acceleration makes sense when the software architecture is already correct and you are deciding where nanosecond-level gains justify complexity.

The third objection is that AI coding tools can teach the team. They can help, but only if the team already knows what to reject. Large language models are trained predominantly on general software patterns, not on the constraint hierarchy of HFT. Without domain knowledge, AI assistance accelerates the wrong patterns.

A Practical Diagnostic Framework for Engineering Leaders {#a-practical-diagnostic-framework-for-engineering-leaders}

Before funding a multi-quarter low-latency build, ask these questions:

- Can the team explain the latency difference between L1, L3, and main memory access and why it matters to the hot path?

- Are you measuring 99th and 99.9th percentile latency, or only averages?

- Do you have instrumentation that captures tail events without materially distorting the system?

- Have you mapped the protocol, rate-limit, and order-type constraints for every venue you plan to support?

- Have you simulated burst conditions such as the market open rather than only steady-state load?

- Can you trace every allocation, dispatch boundary, and risk-control check in the order execution path?

If several of these produce vague answers, the architecture risk is already present. The budget impact just has not been recognized yet.

Why This Gets Harder Every Year {#why-this-gets-harder-every-year}

The latency bar keeps rising. Major venues continue to invest in faster matching and transport layers, and newer exchange initiatives are explicitly targeting nanosecond-class infrastructure. That means self-funded learning curves age poorly. A team spending eighteen months discovering constraints competitors solved years ago falls further behind during the learning curve itself.

That sharpens the strategic choice. Either compress the learning timeline with correct sequencing and real domain guidance, or accept that latency-sensitive strategies are not where your firm should compete.

Capital Efficiency, Not Just Engineering Quality {#capital-efficiency-not-just-engineering-quality}

Strong engineers are expensive. Using them as a self-funded HFT education program is more expensive.

The firms that move faster usually solve the sequencing problem first. They teach memory behavior before framework elegance. Determinism before throughput averages. Venue reality before generic patterns. Concurrency under real load only after the foundations are solid.

If your roadmap still shows twelve-plus months before the first production-grade low-latency system ships, the learning tax is already on the budget. The issue is not whether the team will eventually learn. The issue is whether you can afford to let production be the teacher while capital, time, and market windows keep moving in one direction.

This article was originally shared as a LinkedIn post.

HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.

I help financial institutions architect high-frequency trading systems that are fast, stable, and profitable.

I have operated on both the Buy Side and Sell Side, spanning traditional asset classes and the fragmented, 24/7 world of Digital Assets.

I lead technical teams to optimize low-latency infrastructure and execution quality. I understand the friction between quantitative research and software engineering, and I know how to resolve it.

Core Competencies:

▬ Strategic Architecture: Aligning trading platforms with P&L objectives.

▬ Microstructure Analytics: Founder of VisualHFT; expert in L1/L2/LOB data visualization.

▬ System Governance: Establishing "Zero-Failover" protocols and compliant frameworks for regulated environments.

I am the author of the industry reference "C++ High Performance for Financial Systems".

Today, I advise leadership teams on how to turn their trading technology into a competitive advantage.

Key Expertise:

▬ Electronic Trading Architecture (Equities, FX, Derivatives, Crypto)

▬ Low Latency Strategy & C++ Optimization | .NET & C# ultra low latency environments.

▬ Execution Quality & Microstructure Analytics

If my profile fits what your team is working on, you can connect through the proper channel.