By Ariel Silahian | 20+ years building HFT infrastructure | Creator of VisualHFT

Table of Contents

- The $1.25M Planning Failure

- The Full Stack Is Not What You Are Profiling

- Amdahl’s Law Applied Correctly to Tail Latency

- The Four Layers You Must Measure as Separate Variables

- The Constraint-Layer Audit: Four Steps Before the Roadmap

- The Diagnostic Checklist: Bookmarked and Shared

- Closing Standard

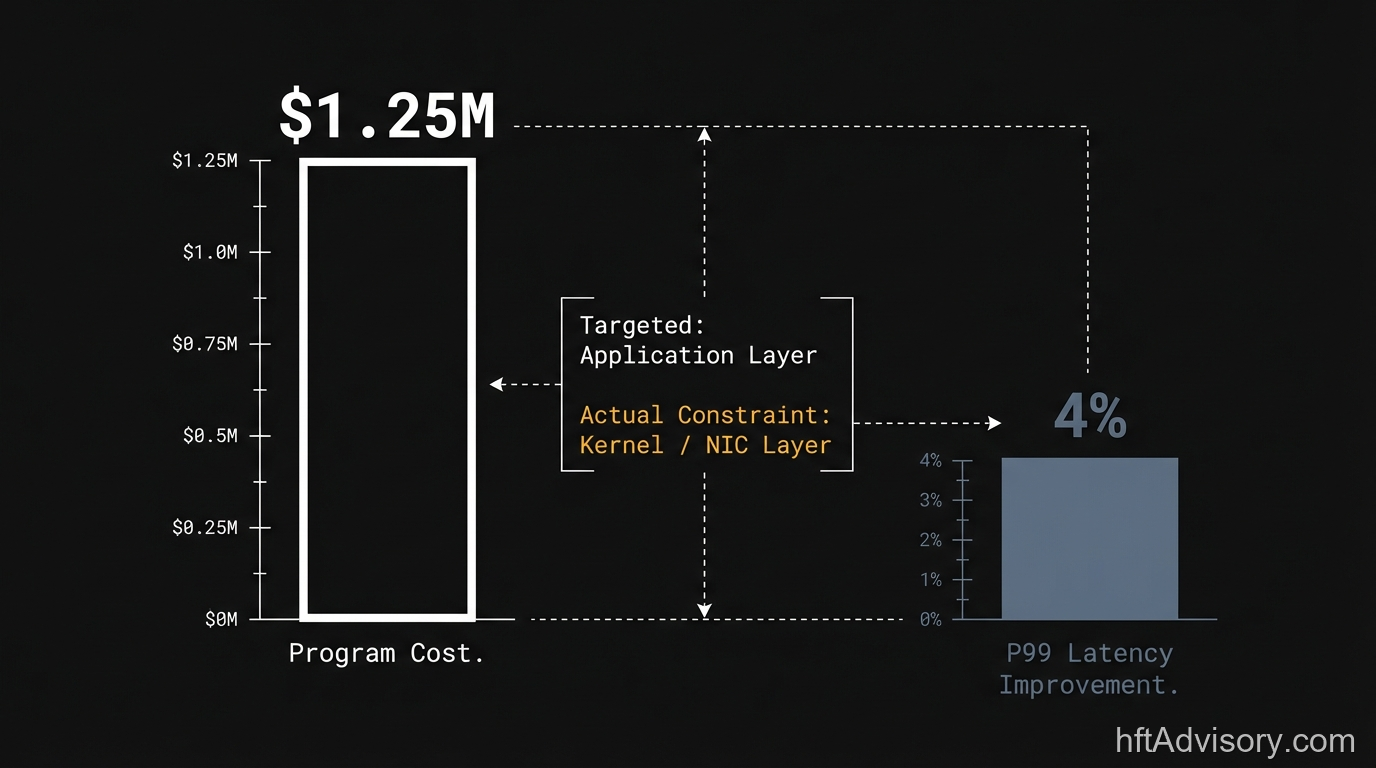

The $1.25M Planning Failure

$1.25M. Six months. A strong engineering team on a desk running $800M daily notional. P99 latency moved 4%.

That is not an engineering failure. I have seen enough of these programs to know the difference. The engineers on that engagement were competent. The C++ changes were defensible. The code review process was rigorous. None of that mattered, because the actual constraint sat two layers below the code, and it never appeared on the roadmap.

When a specialized team and I audited that optimization program, the finding was straightforward: the primary bottleneck was kernel bypass configuration and NIC interrupt coalescing settings. Neither had been profiled before the sprint plan was written. The application layer received six months of focused engineering resources. The kernel and NIC layers received none.

This pattern is not unusual. Across our engagement history, it is the dominant failure mode in fixed-budget HFT optimization programs. The firms that avoid it do one thing differently: they run a constraint-layer audit before the roadmap is written.

The firms that skip it confirm the bottleneck location after the first program fails to deliver.

The Full Stack Is Not What You Are Profiling

The measurement gap in most HFT environments is structural, not accidental.

Standard application-layer instrumentation captures latency from the point your code starts processing to the point your code finishes. Brett Harrison, formerly of Citadel and FTX US, documented what that framing misses: network I/O, hardware operations, and NIC transmission routinely account for 90% or more of total observed latency on the critical path. What your instrumentation shows as “application processing time” is the minority of what your counterparty’s clock is measuring.

This matters because the constraint you are targeting with your roadmap is the constraint your profiling tools can see. If your profiling stops at the application boundary, your roadmap will be limited to the application layer. That is not a tooling problem. It is a framing problem.

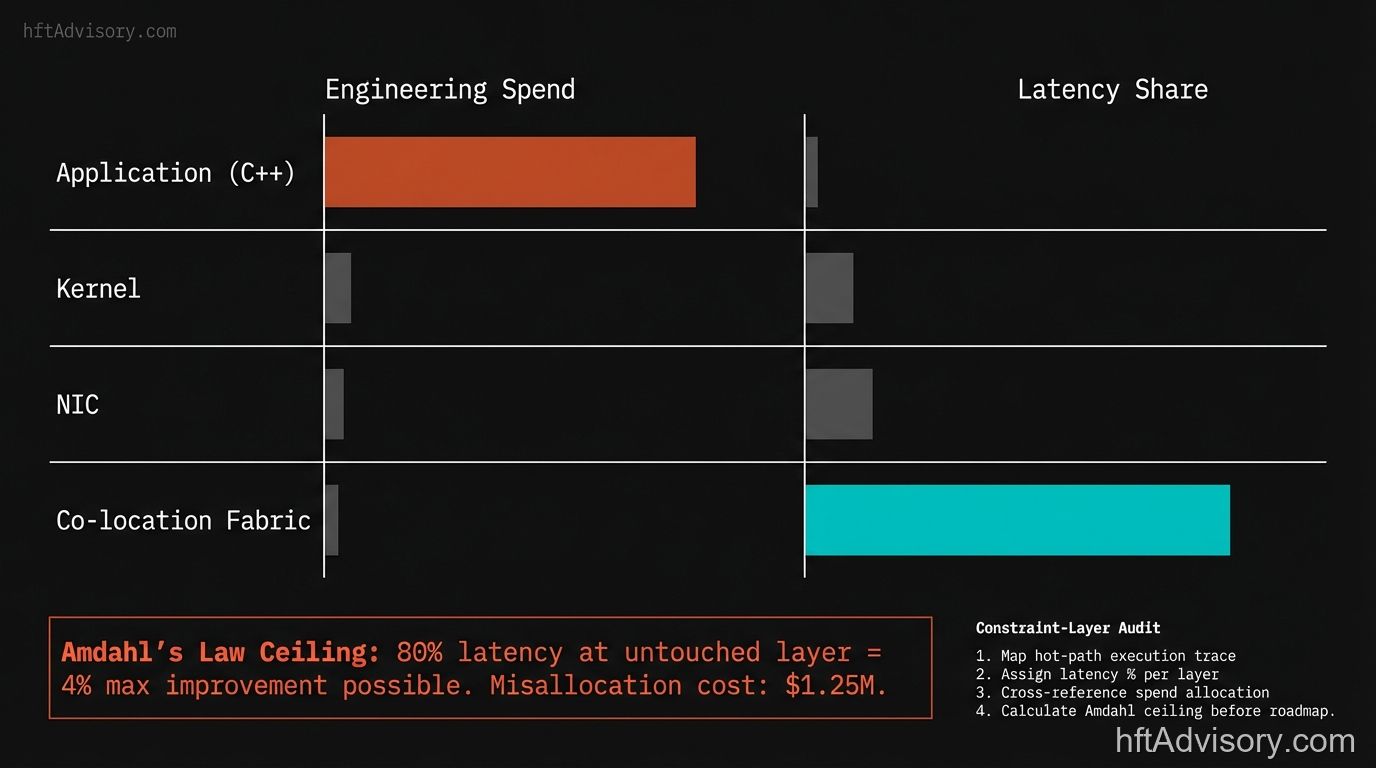

The full critical path in a production HFT environment spans four distinct layers, each of which is a separate variable:

Layer 1: Application. The C++ hot path, lock-free queues, serialization. Instrumented by default. The layer every optimization roadmap addresses first.

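Layer 2: Kernel. The Linux network stack adds 20 to 50 microseconds per packet under standard configuration. Kernel bypass via DPDK, RDMA, or OpenOnload reduces that to 1 to 5 microseconds. Taken at the extremes of those ranges, that is a 4x to 50x reduction. With a socket-compatible bypass such as OpenOnload it is largely a configuration change with no application code required; DPDK and RDMA require the application to use their APIs.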

Layer 3: NIC and Interrupt Coalescing. NIC interrupt coalescing and ring buffer sizes are independent configuration variables that are invisible to application-layer profiling. A misconfigured coalescing setting introduces jitter that looks like application instability. AMD’s Solarflare X4 series (2025) documented significant latency reductions versus prior-generation hardware from NIC-layer changes alone, with nine of the ten largest global stock exchanges running Solarflare infrastructure. Using low-level kernel bypass via ef_vi, tick-to-trade latency floors at 750 to 800 nanoseconds. That floor is set at this layer, not at the application layer.

Layer 4: Co-location Fabric. Physical distance is a discrete constraint variable. Light travels through fiber at roughly 5 nanoseconds per meter, so fifty meters of additional fiber introduces approximately 250 nanoseconds one way, or about 500 nanoseconds of round-trip latency. No software optimization touches it.

Each layer has a measurable latency budget. Each layer has a distinct optimization ceiling. The application layer is the easiest to instrument and the default target of every roadmap written without a prior constraint-layer audit. It is frequently not the binding constraint.
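A back-of-envelope check on the fabric layer can be sketched as follows. It assumes a fiber group index of about 1.47, a typical figure for single-mode fiber rather than a measured value for any specific cross-connect; the function name and constants are illustrative.

```python
# Rough propagation delay through optical fiber.
C_VACUUM_M_PER_S = 299_792_458  # speed of light in vacuum
FIBER_GROUP_INDEX = 1.47        # assumption: typical single-mode fiber


def fiber_delay_ns(length_m: float, round_trip: bool = False) -> float:
    """Return propagation delay in nanoseconds for a fiber run of length_m."""
    one_way_ns = length_m * FIBER_GROUP_INDEX / C_VACUUM_M_PER_S * 1e9
    return 2 * one_way_ns if round_trip else one_way_ns


if __name__ == "__main__":
    for meters in (10, 50, 500):
        one_way = fiber_delay_ns(meters)
        both = fiber_delay_ns(meters, round_trip=True)
        print(f"{meters:>4} m: {one_way:7.1f} ns one way, {both:7.1f} ns round trip")
```

Under these assumptions a 50 m run costs roughly 245 ns one way. The number is small, but it is a fixed physical term in the budget that no code change can recover.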

Amdahl’s Law Applied Correctly to Tail Latency

The original framing I used in the LinkedIn post required a correction, and I want to address it directly here because the corrected math is actually the stronger argument.

Amdahl’s Law states that the maximum improvement from optimizing a fraction of a system is bounded by the fraction you can actually change. If only 20% of your total latency lives in the layer your program is targeting, the theoretical ceiling on your optimization (assuming perfect execution, with that layer’s latency driven to zero) is a speedup of 1 / (1 – 0.20) = 1.25x. Two numbers follow from that, and they are easy to conflate: total system throughput can improve by at most 25%, while overall latency can fall by at most 20%, because the untouched 80% remains.

In the engagement I described, the program achieved 4% P99 improvement. With 20% of latency addressable, the Amdahl ceiling was a 20% latency reduction (25% in throughput terms). The team captured 4 points of a possible 20. That means even within the layer they did target, execution underperformed the mathematical ceiling by roughly 5x.

There are two failure modes stacked on top of each other: the program targeted the wrong layer (wrong ceiling), and within that wrong layer, it did not capture the ceiling that existed there.
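The ceiling arithmetic can be checked with a few lines of Python. This is a minimal sketch that keeps speedup and latency reduction as separate quantities; the function names are illustrative, and the 20% and 4% figures are the ones quoted in this section.

```python
def amdahl_speedup(fraction: float, local_speedup: float = float("inf")) -> float:
    """Overall speedup when `fraction` of total latency is made `local_speedup` times faster.

    The default (infinite local speedup) gives the theoretical ceiling.
    """
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    if local_speedup <= 0:
        raise ValueError("local_speedup must be positive")
    remaining = (1.0 - fraction) + fraction / local_speedup
    return float("inf") if remaining == 0 else 1.0 / remaining


def latency_reduction(speedup: float) -> float:
    """Fraction of the original latency removed by a given overall speedup."""
    return 1.0 - 1.0 / speedup


if __name__ == "__main__":
    ceiling = amdahl_speedup(0.20)          # layer holds 20% of total latency
    achieved = 1.0 / (1.0 - 0.04)           # a 4% latency reduction as a speedup
    print(f"ceiling speedup:        {ceiling:.3f}x")
    print(f"ceiling latency cut:    {latency_reduction(ceiling):.1%}")
    print(f"achieved speedup:       {achieved:.3f}x")
    print(f"share of ceiling used:  {0.04 / latency_reduction(ceiling):.0%}")
```

Passing a finite `local_speedup` shows how far a realistic improvement falls below the ceiling: making the 20% layer twice as fast yields only about 1.11x overall.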

Research by Delimitrou and Kozyrakis at Cornell and Stanford on Amdahl’s Law for tail latency reinforces why this compounds. For P99 specifically, Amdahl’s Law is more consequential than for average performance. Bottlenecks accumulate at the tail. A 5% improvement to a non-bottleneck layer produces effectively zero change in P99 because the tail is dominated by the binding constraint elsewhere in the stack.
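A toy example makes the tail effect concrete. The data below is synthetic and chosen only for illustration (a kernel path that stalls on 2% of messages); it is not a measurement from any engagement, and the nearest-rank percentile is one of several reasonable definitions.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile; pct is an integer such as 50 or 99."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def end_to_end(app_us, kernel_us):
    """Per-message total latency: sum of the two layers, sample by sample."""
    return [a + k for a, k in zip(app_us, kernel_us)]


N = 1000
# Synthetic assumption: the kernel path stalls for 500 us on 2% of messages.
kernel = [500.0 if i % 50 == 0 else 20.0 for i in range(N)]
kernel_fixed = [250.0 if i % 50 == 0 else 20.0 for i in range(N)]  # stall halved
app = [10.0] * N
app_tuned = [9.5] * N  # application layer made 5% faster

baseline = end_to_end(app, kernel)
tuned_app = end_to_end(app_tuned, kernel)
tuned_kernel = end_to_end(app, kernel_fixed)

for label, run in (("baseline", baseline),
                   ("app 5% faster", tuned_app),
                   ("kernel stall halved", tuned_kernel)):
    print(f"{label:>20}: P50={percentile(run, 50):6.1f} us  "
          f"P99={percentile(run, 99):6.1f} us")
```

In this toy data the 5% application gain is visible at P50 (30.0 to 29.5 us) but moves P99 by about 0.1% (510.0 to 509.5 us), while halving the kernel stall cuts P99 roughly in half.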

The diagnostic that follows from this: if your last optimization program delivered less than 30% of projected P99 improvement, the most likely explanation is not execution quality. It is that the ceiling you planned against was not the binding constraint.

The Four Layers You Must Measure as Separate Variables

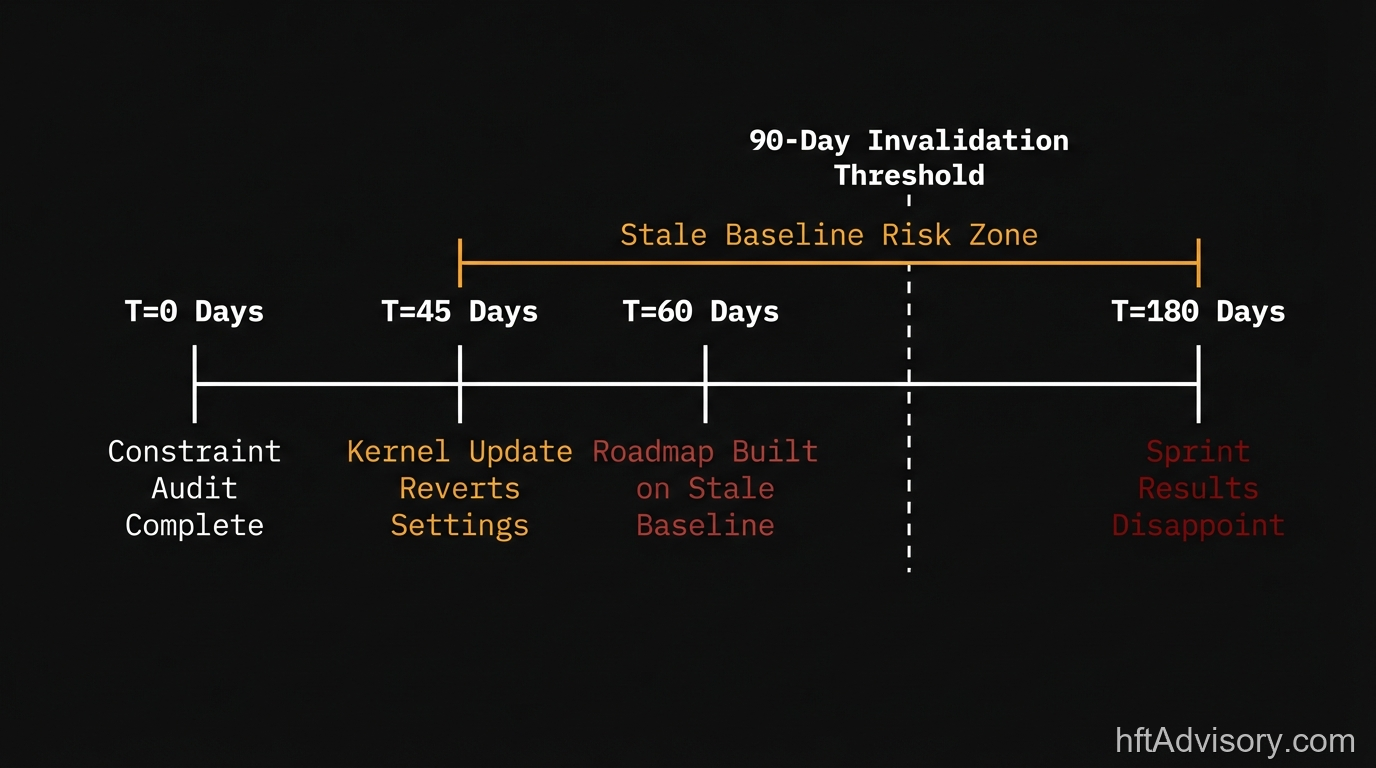

One operational reality worth naming explicitly: OS tuning parameters do not hold indefinitely. Kernel updates can silently revert tuning settings. A constraint map validated six months ago may not reflect the current state of the production stack. As a practical standard, if critical-path jitter has not been measured under production-representative load within the past 90 days, that measurement is stale. Any optimization roadmap built on measurements older than 90 days should be treated as a hypothesis, not a baseline.
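The 90-day rule is simple enough to automate. The sketch below assumes a hypothetical record of the last measurement date per layer; the layer names and dates are made up for illustration.

```python
from datetime import date, timedelta
from typing import Dict, List, Optional

MAX_AGE = timedelta(days=90)  # freshness window used in this article


def stale_layers(measured_on: Dict[str, Optional[date]], today: date) -> List[str]:
    """Return layers whose last measurement is missing or older than MAX_AGE."""
    return [
        layer
        for layer, when in measured_on.items()
        if when is None or today - when > MAX_AGE
    ]


# Hypothetical measurement log: None means the layer was never profiled.
last_measured = {
    "application": date(2026, 3, 1),
    "kernel": date(2025, 11, 15),
    "nic": None,
    "fabric": date(2025, 12, 29),  # exactly 90 days before the audit date
}

print(stale_layers(last_measured, today=date(2026, 3, 29)))
```

With these sample dates the kernel and NIC layers are flagged; the fabric measurement sits exactly on the 90-day boundary and still counts as current.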

The instrument-what-is-easy bias is not unique to HFT. Brendan Gregg’s USE Method (Utilization, Saturation, Errors) was designed specifically to counter it: check utilization, saturation, and errors for every resource, layer by layer, before drawing conclusions about the system. The methodology exists because the default behavior in infrastructure engineering is to optimize the layer that is easiest to see. That default behavior costs money at HFT scale.

There is also a sequencing trap that appears after kernel and NIC optimization is complete. FIX over TCP carries roughly 3x the round-trip budget of native binary protocols. After hardware and kernel work is done, teams frequently declare program completion and leave the protocol layer untouched. The protocol layer was not the binding constraint before the kernel work. After the kernel work, it often is. A constraint map is not static. It is valid for the state of the stack at the time of measurement.

AWS confirmed hardware-level packet timestamping at 64-bit nanosecond precision on its Nitro NIC hardware in June 2025. The significance: even cloud infrastructure providers now recognize that application-layer profiling is structurally insufficient for measuring actual network latency. The measurement gap I am describing is not a practitioner opinion. It is now reflected in vendor-level infrastructure design.

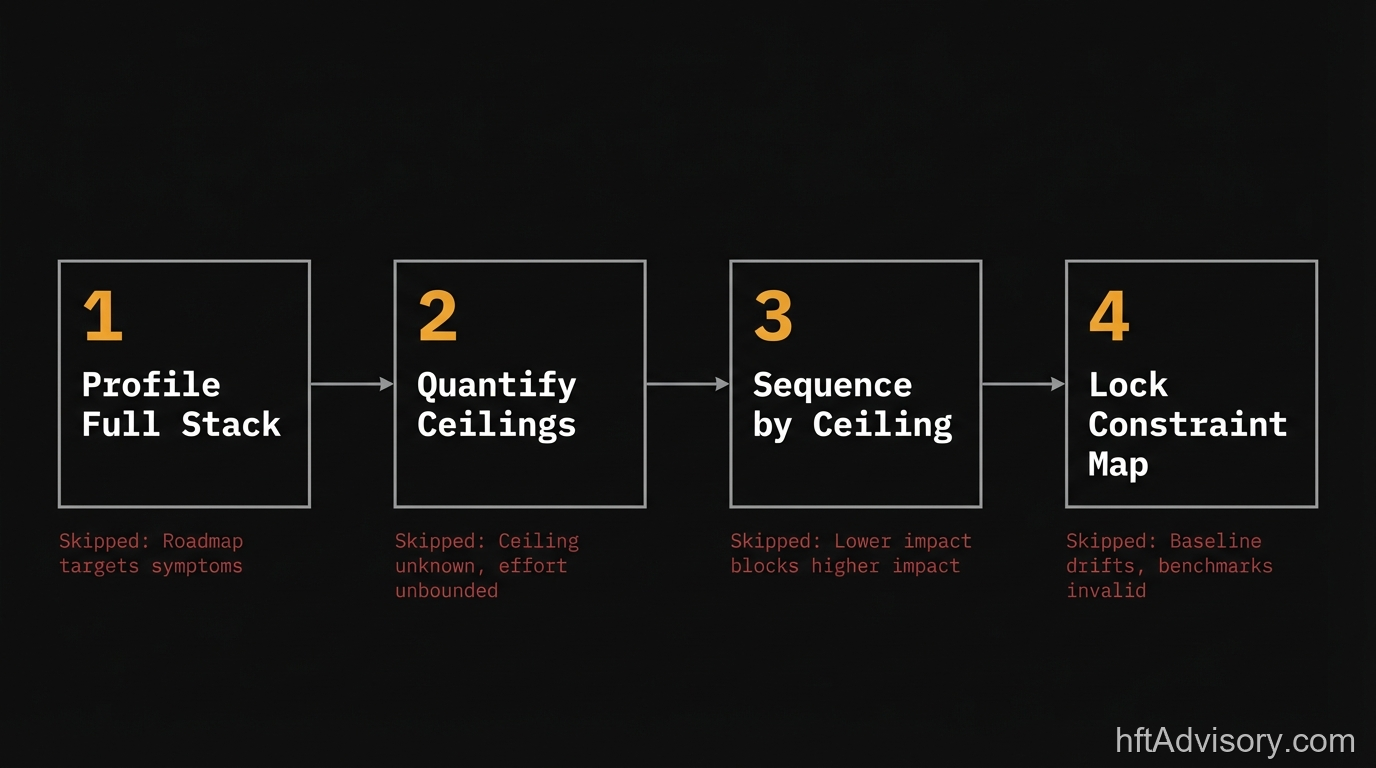

The Constraint-Layer Audit: Four Steps Before the Roadmap

The firms that avoid the $1.25M outcome run a four-step audit before the roadmap is drafted. The sequence is not novel. The underlying methodology draws on Goldratt’s Theory of Constraints (1984), which holds that every system has at least one binding constraint (in practice, one dominant constraint at any given time), and that optimizing anything other than that constraint produces no net improvement to system throughput. The five focusing steps Goldratt defined (Identify, Exploit, Subordinate, Elevate, Repeat) map directly to what a constraint-layer audit does for HFT infrastructure. The 40-year theoretical pedigree exists. The gap is in application, not in knowledge.

Step 1: Profile the Full Stack as Separate Variables

Measure application, kernel, NIC, and co-location fabric independently. Aggregate end-to-end latency measurement does not locate the constraint. It confirms one exists. Each layer needs its own measurement pass under production-representative load. If any layer has not been profiled under load in the past 90 days, treat it as unmeasured.
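One way to split an end-to-end number into separate variables is to take timestamps at layer boundaries and difference them. The sketch below assumes four tap points that share a synchronized clock domain (hardware stamps at the NIC on receive and transmit, software stamps at application entry and exit); the field names and sample values are hypothetical. On Linux, hardware stamps of this kind are typically obtained through the `SO_TIMESTAMPING` socket option, though the sketch does not depend on how they are collected.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Stamps:
    """Timestamps in nanoseconds for one tick-to-trade round (same clock domain)."""
    nic_rx_ns: int  # market data packet arrives at the NIC
    app_rx_ns: int  # application receives the packet
    app_tx_ns: int  # application hands the order to the send path
    nic_tx_ns: int  # order packet leaves the NIC


def segments_ns(s: Stamps) -> Dict[str, int]:
    """Split the wire-to-wire time into receive path, application, transmit path."""
    return {
        "rx_path": s.app_rx_ns - s.nic_rx_ns,
        "application": s.app_tx_ns - s.app_rx_ns,
        "tx_path": s.nic_tx_ns - s.app_tx_ns,
    }


def application_share(s: Stamps) -> float:
    """Fraction of wire-to-wire latency that application profiling can see."""
    seg = segments_ns(s)
    return seg["application"] / sum(seg.values())


# Hypothetical sample: 45 us wire to wire, 3 us of it inside the application.
sample = Stamps(nic_rx_ns=0, app_rx_ns=22_000, app_tx_ns=25_000, nic_tx_ns=45_000)
print(segments_ns(sample))
print(f"application share: {application_share(sample):.1%}")
```

Separating the kernel from the NIC inside `rx_path` and `tx_path` needs additional tap points, which is why each layer gets its own measurement pass rather than one aggregate number.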

Step 2: Quantify the Ceiling Per Layer

For each layer, calculate the maximum latency reduction achievable if that layer were fully optimized. If the kernel network path alone accounts for 60% of observed latency, write that number down: the kernel layer has an optimization ceiling of X microseconds, and every other layer has a mathematical limit set by Amdahl’s Law. This number must exist in writing before the roadmap is drafted. If it does not exist, the roadmap has an uncontrolled assumption at its foundation.
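A per-layer ceiling is the gap between what a layer costs today and the best it could plausibly cost. The sketch below assumes you already have a measured budget and an estimated floor for each layer; all figures are hypothetical and only loosely follow the ranges quoted earlier.

```python
from typing import Dict, Tuple


def layer_ceilings(
    budget_us: Dict[str, float], floor_us: Dict[str, float]
) -> Dict[str, Tuple[float, float]]:
    """For each layer return (ceiling in microseconds, ceiling as share of total latency)."""
    total = sum(budget_us.values())
    result = {}
    for layer, current in budget_us.items():
        saving = max(0.0, current - floor_us[layer])
        result[layer] = (saving, saving / total if total else 0.0)
    return result


# Hypothetical measured budget and best-case floor per layer, in microseconds.
budget = {"application": 4.0, "kernel": 30.0, "nic": 6.0, "fabric": 0.5}
floor = {"application": 3.0, "kernel": 3.0, "nic": 2.0, "fabric": 0.5}

ceilings = layer_ceilings(budget, floor)
for layer, (saving_us, share) in ceilings.items():
    print(f"{layer:>12}: ceiling {saving_us:5.1f} us ({share:.1%} of total)")
```

The output of a calculation like this, dated and written down, is the number Step 2 asks for.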

Step 3: Sequence by Ceiling, Not by Team Comfort

The layer with the highest optimization ceiling gets resourced first. This single resequencing has avoided $400K to $900K in engineering spend across the firms in our engagement history that adopted it. The reason team comfort tends to win over ceiling-based sequencing: application-layer work is easier to instrument, easier to explain to stakeholders, and easier to assign to existing team members. It is also frequently the wrong starting point.
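Sequencing by ceiling is a sort, plus a running total that shows how much of the achievable improvement each step captures. A minimal sketch with hypothetical ceilings in microseconds:

```python
from typing import Dict, List, Tuple


def sequence_by_ceiling(ceiling_us: Dict[str, float]) -> List[Tuple[str, float, float]]:
    """Order layers by ceiling, largest first.

    Each entry is (layer, ceiling in us, cumulative share of the total achievable saving).
    """
    total = sum(ceiling_us.values())
    ordered = sorted(ceiling_us.items(), key=lambda item: item[1], reverse=True)
    plan, running = [], 0.0
    for layer, ceiling in ordered:
        running += ceiling
        plan.append((layer, ceiling, running / total if total else 0.0))
    return plan


# Hypothetical per-layer ceilings from the previous audit step.
plan = sequence_by_ceiling(
    {"application": 1.0, "kernel": 27.0, "nic": 4.0, "protocol": 2.5}
)
for position, (layer, ceiling, cumulative) in enumerate(plan, start=1):
    print(f"{position}. {layer:<12} {ceiling:5.1f} us  cumulative {cumulative:.0%}")
```

In this example the first sprint target alone covers more than three quarters of the achievable saving, and the application layer comes last.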

Step 4: Lock the Constraint Map Before the Sprint Plan Is Finalized

Any sprint that targets a layer above the confirmed primary constraint is approved spend that cannot move the metric that matters. The constraint map is the gate. If the sprint plan is finalized before the constraint map exists, every sprint is an uncontrolled experiment.
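The gate itself can be expressed as a small check run against the sprint plan. This sketch assumes the four layers are ordered top down as in this article and that a missing constraint map blocks everything; the sprint names are invented for illustration.

```python
from typing import List, Optional, Tuple

# Layers ordered from the top of the stack to the bottom.
STACK_TOP_DOWN = ["application", "kernel", "nic", "fabric"]


def blocked_sprints(
    sprints: List[Tuple[str, str]], primary_constraint: Optional[str]
) -> List[str]:
    """Return sprint names that should not be approved.

    A sprint is blocked if no constraint map exists (primary_constraint is None)
    or if it targets a layer above the confirmed primary constraint.
    """
    if primary_constraint is None:
        return [name for name, _ in sprints]
    limit = STACK_TOP_DOWN.index(primary_constraint)
    return [
        name for name, layer in sprints if STACK_TOP_DOWN.index(layer) < limit
    ]


# Hypothetical sprint plan: (sprint name, targeted layer).
sprint_plan = [
    ("lock-free queue rewrite", "application"),
    ("kernel bypass rollout", "kernel"),
    ("coalescing retune", "nic"),
]

print(blocked_sprints(sprint_plan, primary_constraint="kernel"))
print(blocked_sprints(sprint_plan, primary_constraint=None))
```

With the kernel confirmed as the primary constraint, only the application-layer sprint is held back; with no constraint map at all, nothing passes.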

The Diagnostic Checklist: Bookmarked and Shared

This is the pre-roadmap audit checklist the team applies before an optimization program is scoped. It is structured as a binary pass/fail gate: each item either exists or it does not.

Constraint-Layer Pre-Roadmap Audit Checklist

Application Layer

- Hot-path profiling completed under production-representative load within 90 days

- P50, P99, P999 latency measured independently (not aggregated with network)

- GC pause frequency and duration logged (if applicable to stack)

- Lock contention and queue depth measured as separate variables

Kernel Layer

- Kernel bypass configuration (DPDK, RDMA, OpenOnload) profiled and latency delta measured

- IRQ affinity and NUMA topology validated against current CPU/NIC layout

- OS tuning parameters verified as persistent (not reverted by recent kernel update)

- Kernel bypass vs. standard stack latency delta quantified in microseconds (target: documented as 1-5us vs. 20-50us)

NIC Layer

- Interrupt coalescing settings measured as independent variable

- Ring buffer sizes validated under peak load conditions

- NIC-level hardware timestamping enabled and producing data

- NIC firmware version validated (documented regressions exist across generations)

Co-location Fabric

- Physical distance to matching engine measured in meters and microseconds

- Cross-connect latency measured independently from application latency

- Fiber path validated (dark fiber vs. shared infrastructure distinction documented)

Protocol Layer

- FIX vs. binary protocol round-trip budget quantified for current message types

- Protocol layer profiled as a post-kernel-optimization constraint (not pre)

Constraint Map Validation

- Each layer has a documented optimization ceiling (in microseconds and percentage of total latency)

- Ceilings ordered by magnitude (highest ceiling is the first sprint target)

- All measurements dated within 90 days

- Constraint map reviewed and signed off before sprint plan is written

Scoring: If any of the four ceiling fields (kernel, NIC, fabric, protocol) is undocumented, the roadmap does not have a constraint map. It has an assumption.
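The scoring rule is binary, so it reduces to a check for missing fields. A minimal sketch, with a hypothetical record of documented ceilings in microseconds (`None` meaning not documented):

```python
from typing import Dict, List, Optional

REQUIRED_CEILINGS = ("kernel", "nic", "fabric", "protocol")


def missing_ceilings(ceilings_us: Dict[str, Optional[float]]) -> List[str]:
    """Return the required layers that have no documented ceiling."""
    return [layer for layer in REQUIRED_CEILINGS if ceilings_us.get(layer) is None]


def has_constraint_map(ceilings_us: Dict[str, Optional[float]]) -> bool:
    """Pass only if every required ceiling field is documented."""
    return not missing_ceilings(ceilings_us)


# Hypothetical audit record: the protocol ceiling was never written down.
documented = {"kernel": 27.0, "nic": 4.0, "fabric": 0.0, "protocol": None}

print(has_constraint_map(documented))
print(missing_ceilings(documented))
```

A documented ceiling of zero still counts as documented: the gate checks that the number exists, not that it is large.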

Closing Standard

The standard for a defensible optimization roadmap is a dated constraint map with a documented latency ceiling for every layer in the hot path, produced before the sprint plan is written.

If your last optimization program delivered less than 30% of projected P99 improvement and your pre-program documentation does not include a per-layer ceiling calculation, the roadmap was not built on a constraint map. It was built on an assumption about which layer mattered.

The audit takes time. It costs less than confirming the wrong layer six months later.

Originally shared as a LinkedIn post on March 29, 2026.

Ariel Silahian is the creator of VisualHFT and the author of “Building High-Frequency Trading Systems.” He has spent 20+ years building and auditing HFT infrastructure at institutional scale.
