Table of Contents

- Introduction: The Language Decision That Defines Your P&L

- The Real Problem Is Not Speed — It Is Determinism

- Deterministic Destruction: Eliminating Hidden Pauses from Your Hot Path

- Cache-Friendly Data Layout: The Physics-Level Advantage C++ Gives You

- Predictable Tail Latency: What P99.9 Actually Measures

- Where C++ Sits on the Latency Spectrum: Software to Hardware

- The Rust Question: Why C++ Still Wins on the Critical Path in 2026

- Practical Framework: Critical Path Language Decision Checklist

- Conclusion

Introduction: The Language Decision That Defines Your P&L {#introduction}

I have built trading infrastructure in both C++ and C#. VisualHFT — my open-source real-time market analytics platform — runs on C#/.NET, and I made that choice deliberately. For tooling, developer velocity, and rapid iteration on analytics and visualization, managed languages are the correct engineering decision.

But on the latency-critical path — the order entry pipeline, the matching logic, the market data handler that feeds your strategy — C++ remains a structural requirement. After 20+ years building and advising on production HFT systems at institutional desks, I have seen the same pattern repeat: teams that treat language selection as a “preference” on the critical path discover, in production, that their architecture has a ceiling they cannot optimize past.

The question every CTO running a trading desk at scale needs to answer: “which language guarantees deterministic behavior when every microsecond compounds into P&L?”

That distinction — between average performance and worst-case guarantees — is where the entire conversation about C++ in trading systems begins.

The Real Problem Is Not Speed — It Is Determinism {#determinism}

Most discussions about C++ in trading start with speed. They should start with determinism.

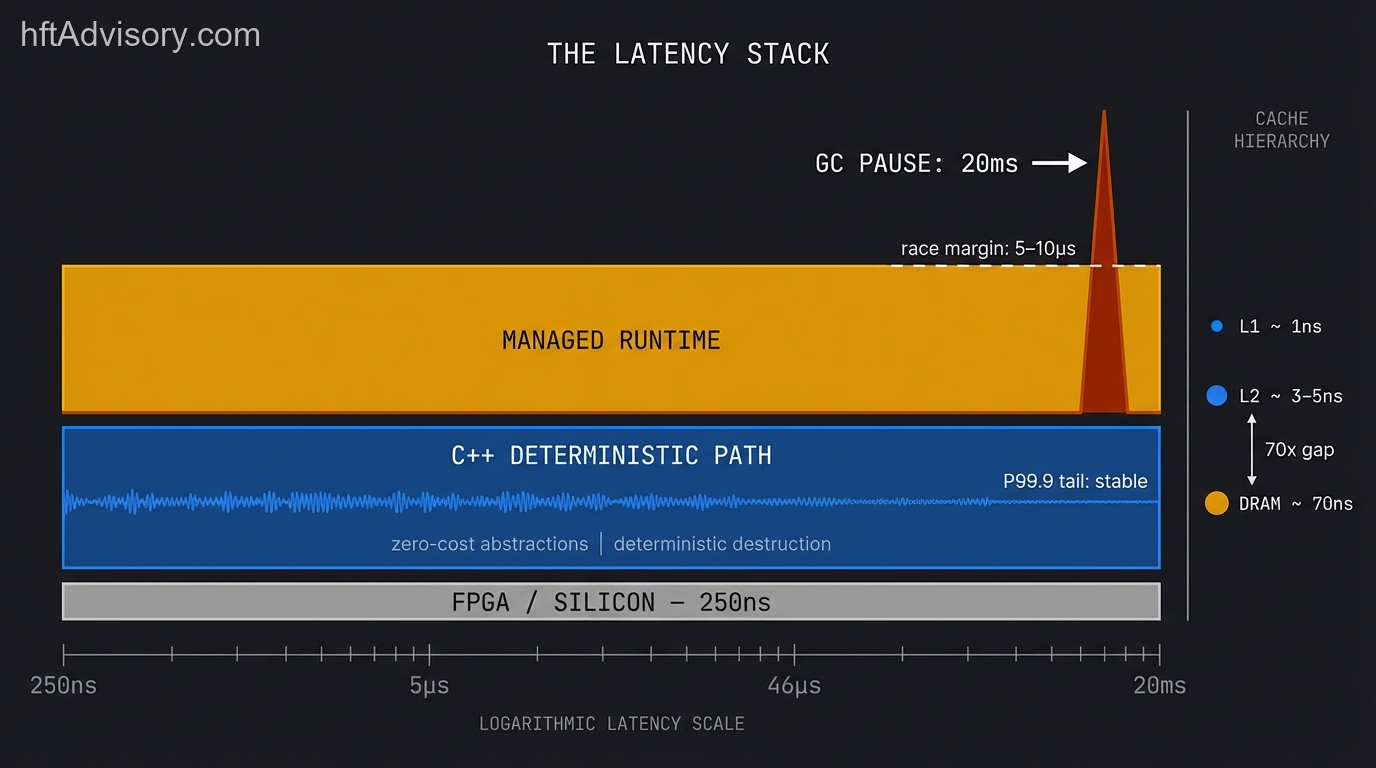

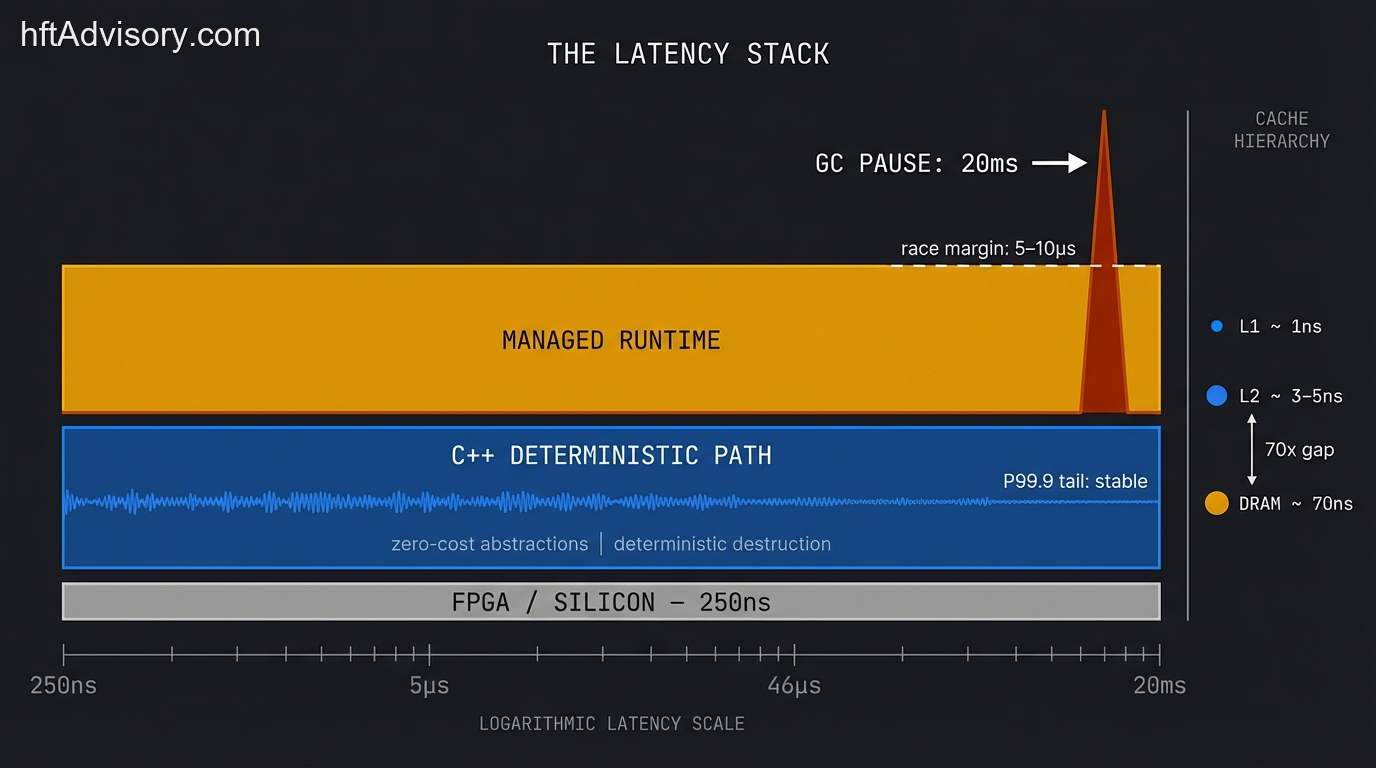

BIS researchers Aquilina, Budish, and O’Neill published their landmark study in the Quarterly Journal of Economics (Volume 137, Issue 1, February 2022), measuring HFT latency-arbitrage races across FTSE 100 stocks on the London Stock Exchange. Their findings quantified what practitioners already knew: the modal race margin is 5-10 microseconds, with a median of approximately 46 microseconds. Across roughly 2.2 billion exchange messages captured over 43 trading days, latency-arbitrage races accounted for roughly 20% of total trading volume. The global value of these races: approximately $5 billion per year in equity markets alone.

Those numbers reframe the language question entirely. When your competitive margin is measured in single-digit microseconds, the question is not whether your system is fast enough on average. The question is: what is your worst-case latency?

That is where managed languages break. They deliver competitive median latency, then inject non-deterministic pauses under load that destroy your tail. Java’s G1 collector (G1GC) produces worst-case pauses of 50-200 milliseconds on production heap sizes. Even ZGC, designed explicitly for low-latency workloads, shows observed worst-case pauses around 50 microseconds, which is still 5-10x the modal race margin that determines whether you capture or forfeit an arbitrage opportunity.

A single 20-millisecond G1GC pause at a desk trading $500 million in daily notional forfeits every race in that window. Not some races. Every race. That is not a performance optimization discussion. That is a P&L structural risk.
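To make the scale concrete, here is the arithmetic as a compile-time sketch. It uses only figures already quoted in this article (a 20-millisecond pause, a 5-10 microsecond modal margin, a 46-microsecond median). The helper `margins_per_pause` and the constant names are hypothetical, introduced purely for illustration.

```cpp
#include <cstdint>

// Hypothetical helper: how many race margins of a given length fit,
// back to back, inside a single pause. All values are in microseconds.
constexpr std::int64_t margins_per_pause(std::int64_t pause_us,
                                         std::int64_t margin_us) {
    return pause_us / margin_us;  // integer division, rounds down
}

// Figures quoted in the article.
constexpr std::int64_t kPauseUs         = 20'000;  // one 20 ms G1GC pause
constexpr std::int64_t kModalMarginLoUs = 5;       // modal race margin, low end
constexpr std::int64_t kModalMarginHiUs = 10;      // modal race margin, high end
constexpr std::int64_t kMedianMarginUs  = 46;      // median race margin

// Checked by the compiler, so the numbers cannot drift from the code.
static_assert(margins_per_pause(kPauseUs, kModalMarginHiUs) == 2'000);
static_assert(margins_per_pause(kPauseUs, kModalMarginLoUs) == 4'000);
static_assert(margins_per_pause(kPauseUs, kMedianMarginUs) == 434);
```

One pause is 2,000 to 4,000 modal race margins long, or roughly 434 median ones. This says nothing about how many races actually occur in the window; it only shows that any race that does occur is decided long before the paused thread wakes up.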

Deterministic Destruction: Eliminating Hidden Pauses from Your Hot Path {#deterministic-destruction}

The first architectural reason C++ wins on the critical path is deterministic object lifetime management.

In C++, object lifetimes are explicit. When a scope ends, the objects it owns are destroyed and their memory is released deterministically, on the owning thread, at a predictable point in your execution. There is no garbage collector lurking in your P99.9 latency histogram waiting to fire at the worst possible moment.
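A minimal sketch of what "deterministic" means here. `TracedOrder` and `run_scope` are hypothetical names, and the logging vector exists only to make the ordering visible; this illustrates when cleanup happens, not how a hot path should allocate.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <vector>

// Hypothetical type that records when it is constructed and destroyed.
struct TracedOrder {
    std::vector<std::string>& log;
    explicit TracedOrder(std::vector<std::string>& l) : log(l) { log.push_back("ctor"); }
    ~TracedOrder() { log.push_back("dtor"); }
};

// Returns the sequence of lifetime events for one pass through the scope.
inline std::vector<std::string> run_scope() {
    std::vector<std::string> log;
    {
        TracedOrder on_stack{log};                          // automatic storage
        auto on_heap = std::make_unique<TracedOrder>(log);  // heap, owned by this scope
        log.push_back("work");
    }  // both destructors run here, on this thread, in reverse order of construction
    assert(log.back() == "dtor");  // cleanup has already happened, not "eventually"
    log.push_back("after scope");
    return log;
}
```

The returned sequence is `ctor, ctor, work, dtor, dtor, after scope`. The point is that the cleanup cost sits at a known line in your code, so you can measure it and, if needed, move it off the hot path.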

This distinction shows up in production constantly. The first sign of GC trouble in a managed trading system is a pattern I have diagnosed repeatedly at institutional desks: inexplicable tail latency spikes that vanish on restart and return in production two hours later. The development team profiles the system, finds nothing wrong in their code, and the problem persists because the root cause lives in the runtime’s memory management decisions, completely outside their control.

Modern C++ takes this further with arena allocators and polymorphic memory resources (std::pmr). Arena allocation provides constant-time, alignment-safe memory operations with zero system heap calls. When the arena resets, all allocations clear at once — no destructor chains, no fragmentation, no unpredictable cleanup cycles. Freelist reuse patterns, proven across production HFT systems, track unused objects for near-zero-cost recycling.
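As a sketch of the arena idea using the standard `std::pmr` facilities: the class `ScratchArena`, the function `process_message`, and the 4096-byte buffer size are assumptions made for illustration, not taken from any production system. The upstream resource is `std::pmr::null_memory_resource()`, so the arena can never fall back to the system heap; if the buffer is exhausted, allocation throws `std::bad_alloc` instead.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <memory_resource>
#include <vector>

// Hypothetical per-message scratch arena backed by a fixed, preallocated buffer.
class ScratchArena {
public:
    ScratchArena()
        : resource_(buffer_.data(), buffer_.size(),
                    std::pmr::null_memory_resource()) {}  // no heap fallback

    std::pmr::memory_resource* resource() { return &resource_; }

    // Rewinds the arena to the start of the buffer in one step.
    // Call only after every container using the arena has been destroyed.
    void reset() { resource_.release(); }

private:
    alignas(std::max_align_t) std::array<std::byte, 4096> buffer_{};
    std::pmr::monotonic_buffer_resource resource_;  // declared after buffer_ on purpose
};

// Handles one message: builds a temporary vector of price levels in the arena.
inline std::size_t process_message(ScratchArena& arena, int levels) {
    assert(levels >= 0);
    std::pmr::vector<int> prices{arena.resource()};
    prices.reserve(static_cast<std::size_t>(levels));  // one bump-pointer allocation
    for (int i = 0; i < levels; ++i) prices.push_back(100 + i);
    return prices.size();
}   // the vector's deallocate call is a no-op on a monotonic resource
```

A typical loop would call `process_message(arena, n)` and then `arena.reset()` once per message. The design choice worth noticing is the null upstream resource: an undersized arena fails loudly in testing instead of silently reaching for `malloc` in production.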

The result: your hot path’s memory behavior becomes provably bounded. You can characterize the worst case, measure it, and guarantee it will not exceed your latency budget. With a garbage-collected runtime, you are always one collection cycle away from blowing that budget in ways you cannot predict or prevent.
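The freelist recycling mentioned above can be sketched as a fixed-capacity pool. `Order` and `OrderPool` are hypothetical names and the fields are illustrative. All storage is reserved up front, so the worst case for both operations is a handful of index updates.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Hypothetical order record; the fields are illustrative only.
struct Order {
    long price = 0;
    long quantity = 0;
};

// Fixed-capacity pool: released slots are threaded onto an index-based
// free list, so acquire and release are O(1) and never touch the heap.
template <std::size_t Capacity>
class OrderPool {
public:
    OrderPool() {
        // Initially every slot is free: 0 -> 1 -> ... -> Capacity (sentinel).
        for (std::size_t i = 0; i < Capacity; ++i) next_[i] = i + 1;
    }

    // Returns nullptr when the pool is exhausted.
    Order* acquire() {
        if (head_ == Capacity) return nullptr;
        const std::size_t slot = head_;
        head_ = next_[slot];
        slots_[slot] = Order{};  // hand out a clean record
        return &slots_[slot];
    }

    // Precondition: `order` came from acquire() on this pool and is currently in use.
    void release(Order* order) {
        const auto slot = static_cast<std::size_t>(order - slots_.data());
        assert(slot < Capacity);
        next_[slot] = head_;  // push onto the free list (LIFO)
        head_ = slot;
    }

private:
    std::array<Order, Capacity> slots_{};
    std::array<std::size_t, Capacity> next_{};
    std::size_t head_ = 0;  // first free slot; Capacity means "none free"
};
```

The LIFO order is deliberate in this sketch: the most recently released slot is the one most likely to still be in cache when it is handed out again. Exhaustion returns `nullptr`, which forces the caller to decide what a full pool means instead of hiding it behind an allocation.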

Cache-Friendly Data Layout: The Physics-Level Advantage C++ Gives You {#cache-layout}

The second reason is hardware-level memory layout control.

Modern CPU cache hierarchies create a latency landscape that dominates trading system performance. L1 cache hits resolve in approximately 0.7-2 nanoseconds depending on architecture. L2 cache hits cost roughly 3-5 nanoseconds. L3 hits run 10-17 nanoseconds. And DRAM access — a cache miss that hits main memory — costs 60-100 nanoseconds, with 70 nanoseconds being a representative figure for current DDR5 configurations.

Taking 70 nanoseconds as the DRAM figure, that is a 35-100x gap between L1 and DRAM, on a path where every nanosecond compounds across millions of operations per second.

C++ gives you direct control over how your data maps to these cache lines. Contiguous arrays ensure sequential access patterns that the CPU prefetcher can predict. Struct packing eliminates padding bytes that waste cache line capacity. Cache line alignment prevents false sharing between threads. Struct-of-arrays layouts enable SIMD vectorization that processes multiple data elements per clock cycle.
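The four techniques in that paragraph look roughly like this in code. All type and function names are hypothetical, and the 64-byte cache line is an assumption that holds on most current x86-64 parts but should be checked for your target.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Assumption: 64-byte cache lines.
inline constexpr std::size_t kCacheLine = 64;

// Struct packing: member order decides how much padding the compiler inserts.
struct LooseLevel {   // small fields interleaved with large ones
    char side;
    std::int64_t price;
    char flag;
    std::int32_t quantity;
};
struct TightLevel {   // same fields, largest first
    std::int64_t price;
    std::int32_t quantity;
    char side;
    char flag;
};
// Typically 24 vs 16 bytes on x86-64; the exact sizes are ABI-dependent.
static_assert(sizeof(TightLevel) <= sizeof(LooseLevel));

// Struct-of-arrays: each field is contiguous, so a price scan touches only prices.
struct BookSoA {
    std::vector<std::int64_t> price;
    std::vector<std::int64_t> quantity;
    std::vector<std::uint64_t> order_id;
};

// Cache line alignment: each counter owns a full line, so two threads
// updating neighbouring counters do not false-share.
struct alignas(kCacheLine) PaddedCounter {
    std::atomic<std::uint64_t> value{0};
};
static_assert(sizeof(PaddedCounter) == kCacheLine);
static_assert(alignof(PaddedCounter) == kCacheLine);

// Sequential scan over one contiguous array: predictable for the prefetcher
// and a natural candidate for compiler auto-vectorization.
// Precondition: the book has at least one level.
inline std::int64_t best_price(const BookSoA& book) {
    assert(!book.price.empty());
    std::int64_t best = book.price.front();
    for (std::int64_t p : book.price) {
        if (p > best) best = p;
    }
    return best;
}
```

The `static_assert` lines are the useful habit here: they turn layout assumptions into build failures, so a refactor that quietly grows a hot struct past a cache line is caught before it reaches a latency histogram.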

You write the memory layout to match your hot path’s access pattern, and the hardware rewards you with L1-resident data on every critical operation.

Managed runtimes abstract all of this away. The runtime decides where your objects live in memory. The garbage collector moves them during compaction. Under sustained load, heap fragmentation scatters your hot data across cache lines, and your carefully profiled median latency degrades as L1 hit rates collapse.

In my experience advising trading desks, cache optimization alone (restructuring data layouts to maximize L1 residency on the critical path) has delivered 3-5x latency improvements without changing a single line of trading logic. That is a pure physics-level advantage that C++ makes accessible and managed languages make impossible.

Predictable Tail Latency: What P99.9 Actually Measures {#tail-latency}

The third reason is tail latency control — and this is where the production reality diverges most sharply from benchmark marketing.

Average latency is a marketing number. In production trading, the metric that determines competitive viability is P99.9: the latency that 99.9% of operations stay under, which means one operation in every thousand is slower still. GC events, JIT recompilation, runtime instrumentation, and thread contention on shared runtime resources all concentrate in that tail. In a race with a median margin of 46 microseconds and a modal margin of 5-10 microseconds, your worst case is what loses trades.
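For readers who have only ever looked at averages, here is one way to compute the number. This is a sketch using the nearest-rank definition of a percentile; the function name `latency_at_per_mille` is hypothetical, and the quantile is passed in parts per thousand (500 for P50, 999 for P99.9) to keep the rank arithmetic in exact integers.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Nearest-rank quantile: the smallest sample such that at least
// per_mille/1000 of all samples are less than or equal to it.
// Takes the samples by value because std::nth_element reorders them.
// Preconditions: samples is non-empty and 1 <= per_mille <= 1000.
inline std::int64_t latency_at_per_mille(std::vector<std::int64_t> samples,
                                         std::size_t per_mille) {
    assert(!samples.empty());
    assert(per_mille >= 1 && per_mille <= 1000);
    const std::size_t n = samples.size();
    const std::size_t rank = (n * per_mille + 999) / 1000;  // ceil(n * q), 1-based
    const auto nth = samples.begin() + static_cast<std::ptrdiff_t>(rank - 1);
    std::nth_element(samples.begin(), nth, samples.end());  // O(n) on average
    return *nth;
}
```

Feed it a synthetic run of 10,000 samples where 9,980 take 5 microseconds and 20 take 20,000 microseconds: `latency_at_per_mille(samples, 500)` is 5, while `latency_at_per_mille(samples, 999)` is 20,000. The median looks perfect and the tail is four thousand times worse, from only 0.2% of operations.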

C++ has no JIT compiler injecting recompilation pauses during execution. No garbage collector competing for CPU cycles on your critical thread. No runtime sharing your core with background housekeeping. The tail is yours to control.

This is not academic. When you profile a Java-based trading system’s latency distribution, you see a tight cluster of median measurements — often competitive with C++ — and then a long tail extending orders of magnitude beyond the median. That tail is where the GC, the JIT, and the runtime’s internal bookkeeping live. In a C++ system, the tail distribution tracks much more closely to the median because there are no runtime-injected discontinuities.

For desks operating in markets where the Aquilina et al. research shows race margins of 5-10 microseconds, a tail event that adds even 50 microseconds — let alone the 20+ milliseconds a G1GC major collection can impose — means forfeiting every arbitrage opportunity during that window.

Where C++ Sits on the Latency Spectrum: Software to Hardware {#latency-spectrum}

To frame C++’s position accurately, consider the full latency spectrum for order execution:

FPGA implementations push the tick-to-trade critical path to approximately 100-350 nanoseconds using raw signal triggers. Top firms achieve sub-microsecond wire-to-wire latency with FPGA acceleration, with internal logic executing under 800 nanoseconds. This is the hardware floor — maximum performance, minimum flexibility.

Optimized C++ operates in the 1-10 microsecond range for tick-to-trade on the critical path. This is the practical performance limit for software-only systems and the sweet spot where most institutional desks operate. You get the determinism and performance of a language with no runtime overhead while retaining the flexibility of a general-purpose programming language.

Managed languages (Java/C#) deliver median latencies that can approach C++ performance — often in the 5-20 microsecond range for well-tuned systems. But the tail extends to millisecond territory, and that tail is structurally unavoidable in any garbage-collected runtime.

C++ is the closest practical language to bare metal. For desks where FPGA development costs and inflexibility are not justified — and that includes the majority of institutional trading operations — C++ provides the best achievable latency with the lowest worst-case variance.

The Rust Question: Why C++ Still Wins on the Critical Path in 2026 {#rust-question}

No serious discussion of C++ in trading systems in 2026 can ignore Rust. Rust offers comparable raw performance, memory safety without garbage collection, and a modern type system. Recent benchmarks show Rust-based order routing implementations achieving approximately 98.7% of equivalent C++ latency performance. Firms including JPMorgan have begun adopting Rust for trading infrastructure components.

So why does C++ still own the critical path?

Three reasons:

Ecosystem maturity. Twenty years of HFT-specific libraries, tooling, and optimization patterns exist in C++. Boost, ZeroMQ, custom allocator frameworks, FPGA integration toolchains — the ecosystem for sub-microsecond trading is built in C++. Rust’s HFT ecosystem is growing but remains years behind in depth and battle-tested production mileage.

Team expertise. The engineers who have spent a decade tuning cache line alignment and lock-free data structures on production trading systems learned those skills in C++. The mental models, the debugging instincts, the pattern recognition for latency anomalies — these are C++ patterns. Retraining that expertise has a real cost measured in months of reduced productivity on latency-critical systems.

Incremental adoption reality. No institutional desk with a functioning C++ stack is going to rewrite their critical path in Rust. The practical adoption pattern is Rust for new non-critical-path components — risk systems, analytics, tooling — while the order execution pipeline stays in C++. This is rational engineering, not language tribalism.

The correct mental model: C++ where determinism is non-negotiable, and the best available language everywhere else. Today, that second category increasingly includes Rust. Tomorrow, it may include Rust on more of the critical path — but only after the ecosystem, tooling, and team expertise catch up.

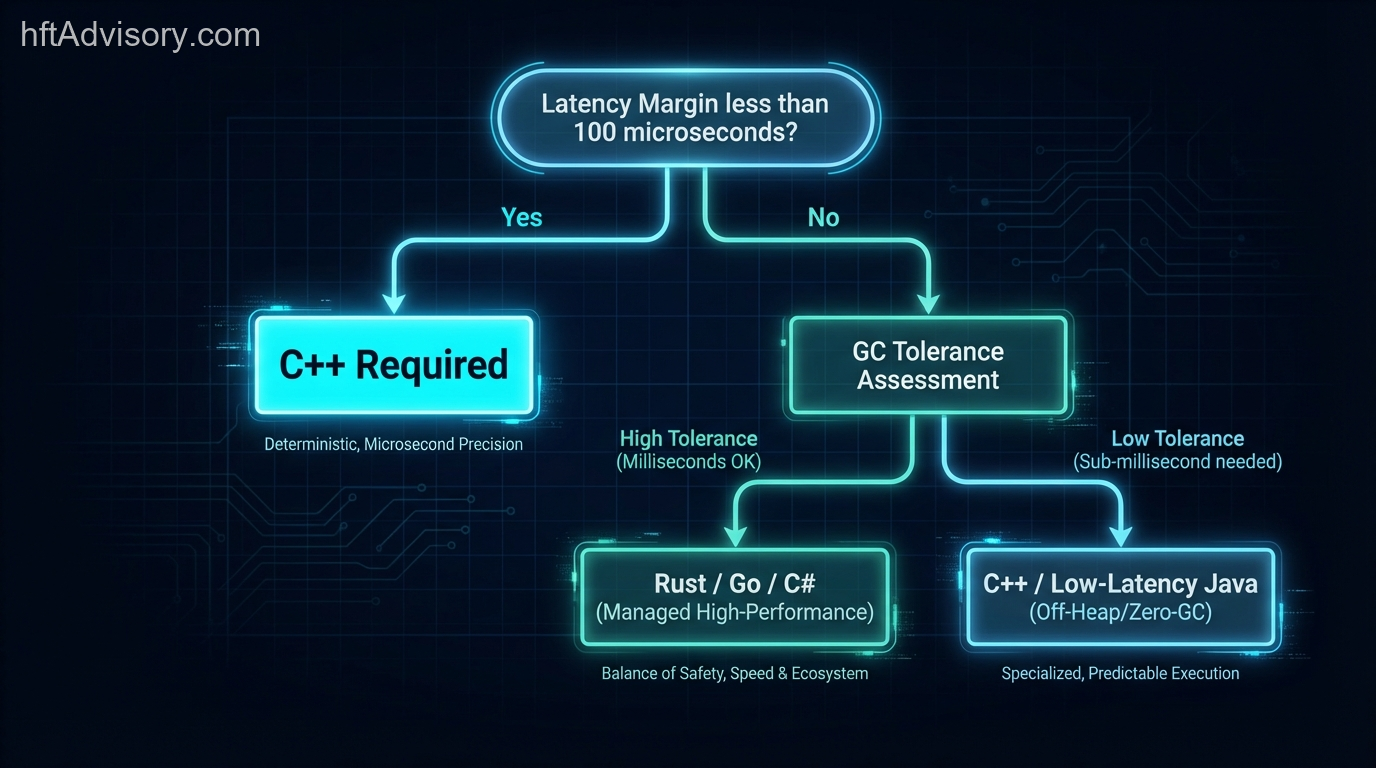

Practical Framework: Critical Path Language Decision Checklist {#practical-framework}

Use this diagnostic to evaluate whether your trading system’s critical path requires C++ or can tolerate a managed runtime:

Latency Budget Assessment:

- Is your competitive latency margin under 100 microseconds? → C++ required

- Does your strategy depend on P99.9 tail latency, not just median? → C++ required

- Are you competing in markets where Aquilina et al. race margins apply? → C++ required

Determinism Requirements:

- Can your system tolerate 20ms+ GC pauses at any point during trading hours? → If no, C++ required

- Does your risk model account for runtime-injected latency variance? → If no, audit your tail

- Are you measuring P99.9 or P50? → If P50 only, you are masking your real latency profile

Architecture Boundary Assessment:

- Have you explicitly defined where the critical path begins and ends?

- Is the critical path isolated from non-deterministic components?

- Can non-critical-path components (analytics, risk, reporting) use managed languages without contaminating the hot path?

Cost-Benefit Calibration:

- What is the daily P&L at risk from tail latency events?

- What is the fully loaded cost of C++ engineering talent vs. managed-language teams?

- Does the latency-sensitive revenue justify the C++ investment?

The answer: C++ where determinism is non-negotiable, managed languages everywhere else. The engineering discipline is in drawing that boundary correctly and defending it against organizational pressure to consolidate stacks.
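The first two groups of the checklist reduce to a simple predicate. The sketch below encodes only the items that end in "C++ required"; the struct, its field names, and the function `cpp_required` are hypothetical. The audit-style questions (risk model, P50 versus P99.9 measurement, boundary definition, cost-benefit) are deliberately left out because they call for judgment, not a boolean.

```cpp
// Hypothetical inputs mirroring the "Latency Budget" and "Determinism" items.
struct CriticalPathProfile {
    double competitive_margin_us;    // competitive latency margin, in microseconds
    bool strategy_depends_on_p999;   // strategy is sensitive to P99.9, not just median
    bool competes_in_latency_races;  // markets where the Aquilina et al. margins apply
    bool tolerates_20ms_gc_pauses;   // can absorb 20 ms+ pauses during trading hours
};

// True if any single checklist item marks the critical path as C++ territory.
constexpr bool cpp_required(const CriticalPathProfile& p) {
    return p.competitive_margin_us < 100.0
        || p.strategy_depends_on_p999
        || p.competes_in_latency_races
        || !p.tolerates_20ms_gc_pauses;
}

// A reporting pipeline with a 250 us margin and no tail sensitivity passes.
static_assert(!cpp_required(CriticalPathProfile{250.0, false, false, true}));
// The same pipeline fails as soon as it cannot absorb a 20 ms pause.
static_assert(cpp_required(CriticalPathProfile{250.0, false, false, false}));
```

Note that the conditions are joined with OR: one "yes" is enough. That matches how the checklist reads, and it is the reason the boundary discussion matters more than the language debate.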

Conclusion {#conclusion}

The critical path in a trading system is defined by physics — cache hierarchies, memory access patterns, CPU pipeline behavior. C++ respects those physics by giving you deterministic control over object lifetimes, memory layout, and execution predictability. Managed languages abstract those physics away, and the abstraction cost shows up exactly where you cannot afford it: in the tail of your latency distribution, during the races that determine your P&L.

The standard is deterministic, bounded worst-case latency on every operation in the order execution pipeline. If your architecture cannot guarantee that — if a runtime-injected pause can blow your latency budget at an unpredictable moment — it may be time to audit where your critical path boundary actually lies and whether your language choices respect it.

This article was originally shared as a LinkedIn post.


HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.


I am the author of the industry reference "C++ High Performance for Financial Systems".
