Table of Contents

- The Counterintuitive Inversion: When a 50.8% Reject Rate Is Your Best Defense

- The Single-Metric Trap: Why Execution Leaks Survive Standard TCA

- Case Study 1: The 50.8% Reject Rate That Was Protecting the Strategy

- Case Study 2: The 23.4 Percentage Point Fill Rate Gap

- Fix Sequence Matters: Data Feed First, Toxicity Gating Second, Order Sizing Third

- What a Deterministic Diagnostic Engine Actually Does Differently

- What to Look for in Your Own Execution Logs

- Conclusion

The Counterintuitive Inversion: When a 50.8% Reject Rate Is Your Best Defense

A desk drops its log files. The first number that surfaces: 50.8% reject ratio. Every instinct says that number is the problem. Fix the rejects, improve the strategy, move on.

That instinct is wrong.

What the log files actually show, once you cross-reference four signals simultaneously, is a strategy that has been absorbing $182K in measured implementation shortfall while providing liquidity to informed flow it did not know was informed. The 50.8% rejects are not failure. They are the only thing standing between the current shortfall and a larger one.

This is what market microstructure diagnostics surfaces that standard post-trade TCA does not. Not a single metric viewed in isolation, but the interaction between fill distance from top-of-book, VPIN at adverse fills versus favorable fills, P95 slippage distribution, and total implementation shortfall. Each metric, viewed alone, tells an incomplete story. Together they point to a single root cause: a stale pricing feed.

I have spent over 20 years building and auditing electronic trading infrastructure across institutions with significant AUM. The diagnostic engine described in these case studies has been hand-written against real order books since 2023. This is not theoretical.

The Single-Metric Trap: Why Execution Leaks Survive Standard TCA

Coalition Greenwich published data in 2024 showing that roughly 90% of buy-side desks run post-trade TCA. Zero commercial TCA vendors surface VPIN or order book toxicity in real time. That gap is not a product roadmap item someone missed. It is the structural reason execution leaks persist despite widespread TCA adoption.

Post-trade TCA tells you what happened. It reports fills, compares to benchmarks, flags outliers. The problem is that execution quality problems at the microstructure level (stale feed displacement, intraday liquidity regime shifts, toxicity clustering at adverse fills) do not announce themselves as single-metric anomalies. They look like noise in any one dimension and only become legible when multiple signals are read simultaneously against real order book state.

The single-metric trap works like this: a desk sees a high reject rate, investigates reject logic, tightens parameters, and achieves lower rejects at the cost of worse fills on the orders that now execute. Or it sees poor fill rates in the afternoon, assumes low liquidity, and routes differently, distributing the problem without identifying it. The metric moved. The leak did not.

Three regulatory mandates are active right now: MiFID II, FINRA Rule 6151 (live since June 2024 and enforced in the 2025 cycle), and SEC Rule 605 (amended March 2024, with a compliance deadline in the Q4 2025/Q1 2026 window). All three require demonstrable, auditable execution quality monitoring. A post-trade TCA report satisfies the letter of that requirement. A diagnostic engine that cross-references live signals satisfies the operational intent. The difference matters when regulators ask not just what you measured but what you could have known in time to act.

Post-trade TCA tells you what happened. A deterministic microstructure engine tells you what is happening, and what to do about it before the next 84 orders go out.

Case Study 1: The 50.8% Reject Rate That Was Protecting the Strategy

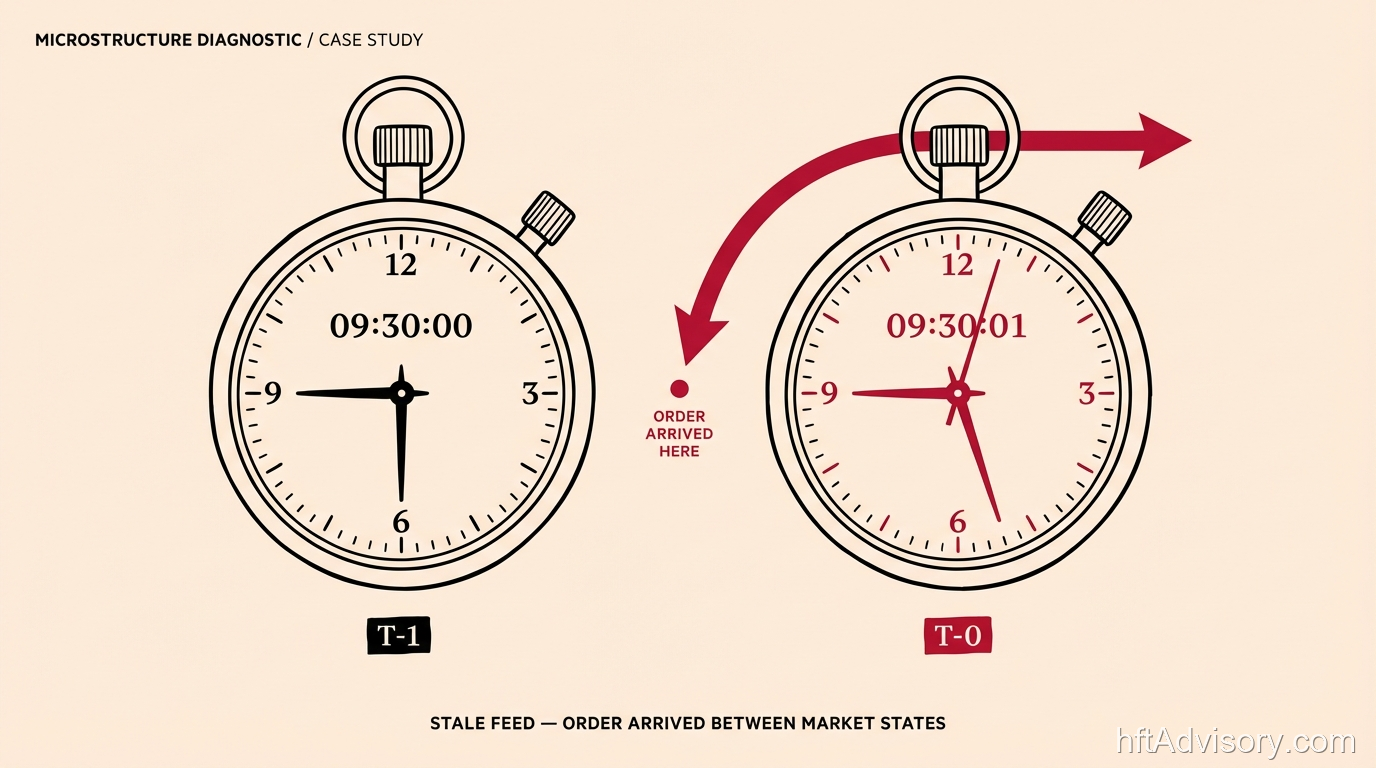

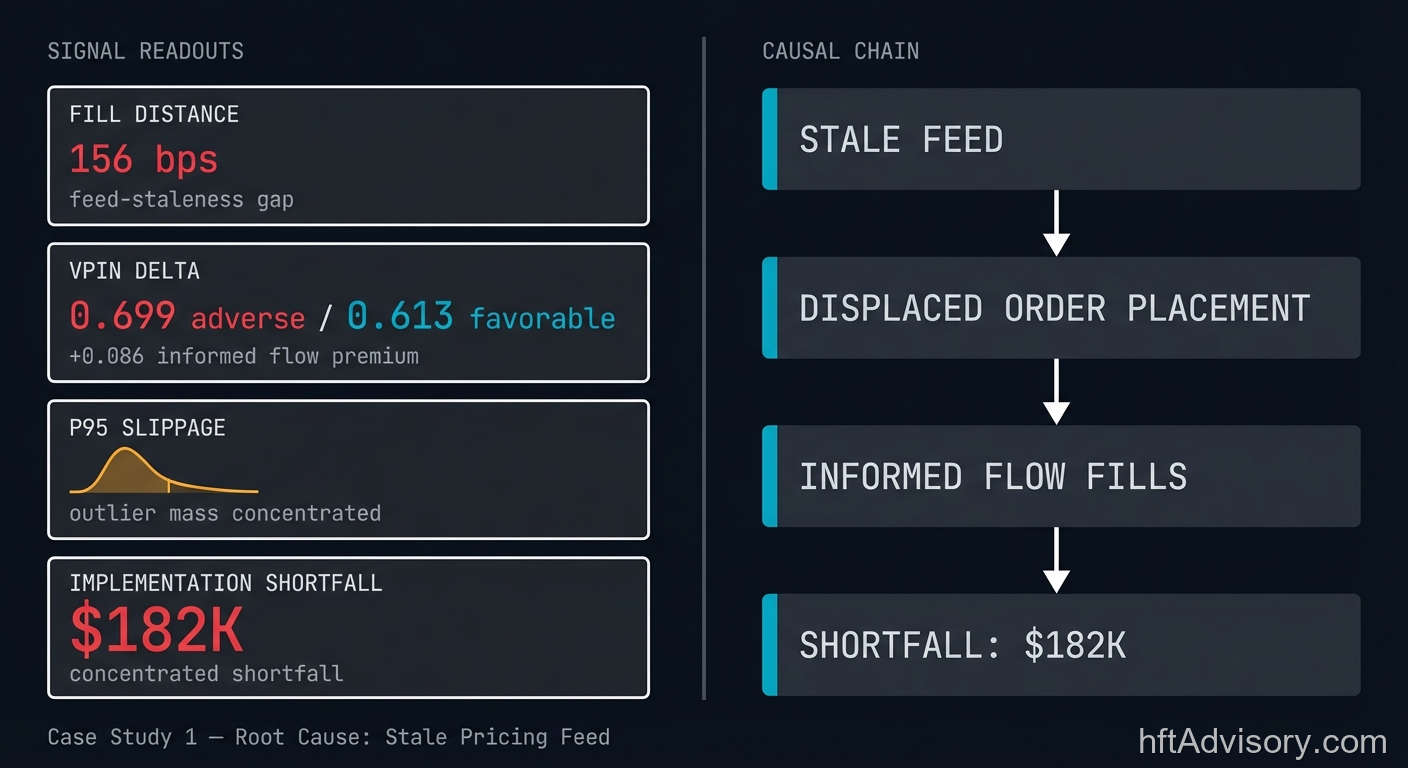

The log file comes in. The diagnostic engine cross-references four signals.

Signal one: Orders landing 156 basis points from top-of-book. The strategy was designed to submit tighter than this. The 156 bps gap is not the strategy’s intended placement; it is the distance between where the strategy believed the market was and where the market actually was when the orders arrived. That distance is the feed-staleness gap.

Signal two: VPIN at adverse fills of 0.699 versus VPIN at favorable fills of 0.613, a differential of 0.086. VPIN (Volume-Synchronized Probability of Informed Trading, introduced by Easley, Lopez de Prado, and O’Hara in their 2011/2012 research) estimates the share of informed order flow from the buy/sell volume imbalance in recent volume buckets. A differential this large between adverse and favorable fill windows suggests the strategy is consistently executing in higher-toxicity regimes. Note that VPIN values are instrument-specific and should be read relative to the instrument’s historical baseline. The 0.699 figure sits at the practitioner threshold where sustained elevated readings signal meaningfully elevated adverse selection risk. The lower 0.613 reading on favorable fills supports the view that the pattern is not random.
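For readers who have not computed VPIN before, the following is a minimal sketch of the bucket arithmetic, not the engine's implementation. It assumes trades arrive already classified as buys (+1) or sells (-1); the original VPIN research uses bulk volume classification instead, which is omitted here. The function names, `bucket_volume`, and `window` are illustrative choices.

```python
def volume_imbalances(trades, bucket_volume):
    """Split (volume, side) trades into equal-volume buckets.

    Returns |buy volume - sell volume| for each completed bucket.
    A trade that straddles a bucket boundary is split across buckets.
    A trailing, incomplete bucket is dropped.
    """
    imbalances = []
    buy = sell = 0.0
    for volume, side in trades:
        remaining = float(volume)
        while remaining > 0:
            room = bucket_volume - (buy + sell)
            take = min(remaining, room)
            if side > 0:
                buy += take
            else:
                sell += take
            remaining -= take
            # Small tolerance guards against floating-point drift.
            if buy + sell >= bucket_volume - 1e-9:
                imbalances.append(abs(buy - sell))
                buy = sell = 0.0
    return imbalances


def vpin(trades, bucket_volume, window):
    """Rolling VPIN: mean bucket imbalance over the last `window` buckets,
    normalised by bucket volume, so every value lies in [0, 1]."""
    imbalances = volume_imbalances(trades, bucket_volume)
    return [
        sum(imbalances[i - window:i]) / (window * bucket_volume)
        for i in range(window, len(imbalances) + 1)
    ]


if __name__ == "__main__":
    sample = [(60, 1), (40, -1), (100, 1), (50, -1), (50, 1)]
    print(vpin(sample, bucket_volume=100, window=2))
```

To reproduce the comparison in Signal two, the VPIN value in force at each fill timestamp would be recorded and then averaged separately for adverse and favorable fills.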

Signal three: P95 slippage at 9,206 basis points. This figure requires instrument-class context. In the asset class represented by these logs, this P95 magnitude is consistent with the outlier tail of execution in elevated-toxicity windows. It is not a typical outcome; it is the severe end of the distribution, concentrated in windows where the strategy was most exposed. The shortfall is not spread uniformly across execution. It is concentrated.
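As a rough illustration of how a P95 slippage figure can be derived from fills, the sketch below computes signed slippage in basis points against arrival price and takes a nearest-rank percentile. The nearest-rank method and the function names are assumptions; the source does not say which percentile convention the engine uses.

```python
import math


def slippage_bps(side, arrival_price, fill_price):
    """Signed slippage in basis points.

    side is +1 for a buy and -1 for a sell. A positive result means the
    fill was worse than the arrival price.
    """
    return side * (fill_price - arrival_price) / arrival_price * 1e4


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile for an integer pct in 1..100."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


if __name__ == "__main__":
    fills = [(1, 100.0, 100.5), (-1, 100.0, 99.5), (1, 50.0, 50.0)]
    slips = [slippage_bps(*f) for f in fills]
    print(percentile_nearest_rank(slips, 95))
```

Grouping the same slippage values by time window before taking the percentile shows whether the tail is concentrated, which is the point the signal makes.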

Signal four: Measured implementation shortfall of $182K. This is measured shortfall on this order set, calculated from the fill versus arrival price comparison. It is not a projection.
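The fill-versus-arrival comparison can be sketched as follows. The dictionary keys are hypothetical field names, and the sketch covers only executed quantity; it ignores fees and the opportunity cost of unfilled shares, which a fuller implementation shortfall calculation would include.

```python
def implementation_shortfall(fills):
    """Sum of side * (fill price - arrival price) * quantity over all fills.

    side is +1 for buys and -1 for sells, so paying up on a buy and
    selling down on a sell both count as positive shortfall.
    """
    return sum(
        f["side"] * (f["fill_price"] - f["arrival_price"]) * f["qty"]
        for f in fills
    )


if __name__ == "__main__":
    fills = [
        {"side": 1, "arrival_price": 10.00, "fill_price": 10.05, "qty": 1000},
        {"side": -1, "arrival_price": 20.00, "fill_price": 19.90, "qty": 500},
    ]
    print(round(implementation_shortfall(fills), 2))
```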

No single metric explains the $182K. The 156 bps from top-of-book alone could be a liquidity condition, an aggressive strategy, or a routing issue. The VPIN differential alone could be instrument-regime noise. The P95 slippage alone could be a one-session outlier. Together, they close the diagnostic loop: orders are arriving where the market was, not where it is. Fills that execute catch high-VPIN windows because those are the windows where the market is still available at the stale price. The strategy is providing liquidity to informed flow without knowing it.

The rejects? On this order set, they are the orders where the market moved further away before they arrived. The directional estimate is that if those orders had filled, the $182K would have been higher. They are functioning as unintentional circuit breakers.

The repair sequence that follows is specific: fix the data feed first. Reject rates decline as orders are submitted at current rather than stale prices. There is no longer a gap between where the order is placed and where the market is. Then gate for toxicity. Then address order sizing. In that order, for reasons covered in the next section.

Case Study 2: The 23.4 Percentage Point Fill Rate Gap

A second log file. Different diagnostic signature.

The finding: A 23.4 percentage point fill-rate gap across the trading day. Best execution hour: 37.7% fill rate. Worst execution hour: 14.3%. On 84 orders, the modeled recovery from moving flow out of the worst execution window is approximately $302K. This is a modeled recovery based on historical fill rates on this specific order set, not a guaranteed forward projection. The per-order figure of roughly $3,595 in modeled execution timing inefficiency sits within the empirically validated range for intraday execution timing misalignment.
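The arithmetic behind these figures is simple enough to check by hand. The sketch below computes an hourly fill rate and the best-to-worst gap from a list of `(hour, was_filled)` records, then reproduces the quoted numbers. The record layout and function names are assumptions for illustration.

```python
from collections import defaultdict


def fill_rate_by_hour(orders):
    """orders: iterable of (hour, was_filled). Returns {hour: fill rate in %}."""
    sent = defaultdict(int)
    filled = defaultdict(int)
    for hour, was_filled in orders:
        sent[hour] += 1
        filled[hour] += int(was_filled)
    return {hour: 100.0 * filled[hour] / sent[hour] for hour in sent}


def fill_rate_gap_pp(rates):
    """Gap between best and worst fill rate, in percentage points."""
    return max(rates.values()) - min(rates.values())


# Figures quoted in the case study.
quoted_gap_pp = 37.7 - 14.3            # best hour minus worst hour
per_order_usd = 302_000 / 84           # modeled recovery per order

if __name__ == "__main__":
    print(round(quoted_gap_pp, 1), round(per_order_usd))
```

The quoted gap works out to 23.4 percentage points and the per-order figure to roughly $3,595, matching the numbers above.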

Why this gap exists: Intraday fill rates are not uniform, and the pattern is not random. Market microstructure research has documented a U-shaped intraday volume and spread pattern across equities, ETFs, futures, and crypto markets consistently from 2000 through 2025. Execution cost at the open is measurably higher than at midday benchmarks. The 23.4 pp gap on this order set is structurally consistent with that literature. The worst execution window is not a bad day. It is the predictable dead window.

Beyond the timing gap, the engine identified a 37 percentage point fill-rate gap between small and large orders in this order set. Two repair paths:

Path one: Split parent orders. Reducing the visible size of large orders changes their position in the adverse selection calculus of counterparties. A large order advertising size invites specific counterparty behavior. Splitting reduces that signal.

Path two: Move limit prices on the large band. Adjusting aggression level on large orders trades execution cost per fill against fill rate.

Which path is correct depends on the instrument, the venue, and the strategy’s alpha decay profile. The diagnostic engine surfaces both. The desk decides.

Three execution fixes identified on this dataset in under a minute of engine processing time. That timing is case-specific and reflects the computational efficiency of a deterministic engine against a defined log file. The $302K modeled recovery is directional. The structural finding is durable: this desk was systematically abandoning value in a predictable dead window.

Fix Sequence Matters: Data Feed First, Toxicity Gating Second, Order Sizing Third

The three fixes identified across these two case studies are individually tractable. Applied in the wrong sequence, they interact in ways that preserve the leak.

Feed latency must come first. VPIN is computed from market flow data. If the data feed is stale, the VPIN signal is stale. Gating for toxicity on a corrupted signal does not protect you from adverse selection; it introduces false confidence that your screen is calibrated when it is not. Fix the feed. Then compute VPIN against current data. Then gate.

Toxicity gating must come before order resizing. If you resize orders before gating for toxicity, you are redistributing adverse selection, not eliminating it. Smaller orders in high-toxicity windows still absorb informed flow. The total adverse selection exposure across the order set does not decline until you stop sending orders into toxic windows. Resize after the gating logic is in place.

Why the sequence is not self-evident from standard TCA. Post-trade TCA aggregates outcomes. A TCA report will show that fill rates improved after resizing. It will not show that the improvement came from redistributed exposure rather than reduced adverse selection, because it cannot cross-reference the VPIN signal at execution time against the feed-staleness proxy at submission time.

Treat the infection before the symptom. Suppressing the fever without identifying the pathogen source produces apparent improvement that leaves the underlying condition intact. The dependency chain between these three fixes is one of the primary reasons execution teams get suboptimal results from individually correct interventions.
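The dependency chain can be expressed as an ordered set of checks. This is a sketch under stated assumptions, not the engine's logic: the `MarketSnapshot` fields and all three thresholds are hypothetical values chosen only to make the ordering visible.

```python
from dataclasses import dataclass

MAX_QUOTE_AGE_MS = 50.0   # hypothetical staleness limit
VPIN_GATE = 0.65          # hypothetical toxicity gate
MAX_CHILD_QTY = 500       # hypothetical child-order size cap


@dataclass
class MarketSnapshot:
    quote_age_ms: float   # time since the last feed update
    vpin: float           # VPIN computed from that same feed


def plan_order(snapshot, parent_qty):
    """Return (action, reason, child_quantities), applying fixes in sequence."""
    # 1. Feed first: a stale feed makes the VPIN reading itself untrustworthy.
    if snapshot.quote_age_ms > MAX_QUOTE_AGE_MS:
        return ("hold", "feed stale", [])
    # 2. Toxicity second: only meaningful once the feed is known to be current.
    if snapshot.vpin >= VPIN_GATE:
        return ("hold", "toxicity gate", [])
    # 3. Sizing last: split the parent only for orders that pass both checks.
    children = [MAX_CHILD_QTY] * (parent_qty // MAX_CHILD_QTY)
    if parent_qty % MAX_CHILD_QTY:
        children.append(parent_qty % MAX_CHILD_QTY)
    return ("send", "ok", children)


if __name__ == "__main__":
    print(plan_order(MarketSnapshot(quote_age_ms=5.0, vpin=0.40), 1200))
```

Note that when the feed is stale, the function never consults `vpin` at all. That is the point of the ordering: the toxicity reading is not used until the data it depends on is known to be current.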

What a Deterministic Diagnostic Engine Actually Does Differently

The execution diagnostics space has seen significant interest in applying large language models. The appeal is understandable. The problem is structural.

Order book data is not language. The time series of financial instruments are dynamic, stochastic systems that do not map to the statistical structures LLMs are trained on. An LLM given execution logs can produce plausible-sounding diagnostics. It cannot guarantee that the same inputs produce the same outputs on a second pass. It cannot produce a fill-level audit trail that a regulator can inspect.

A deterministic engine: same inputs, same outputs, every time. The diagnostic logic is explicit and auditable. The cross-referencing rules between VPIN, fill distance, slippage distribution, and implementation shortfall are defined in code, not inferred from training weights. When the engine identifies a stale feed condition, the reasoning path from signal to conclusion is traceable.
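To make "explicit and auditable" concrete, here is a toy rule evaluator that records every comparison it makes and fingerprints the resulting report. The thresholds and the rule that all four signals must breach together are hypothetical simplifications, not the engine's actual cross-referencing rules.

```python
import hashlib
import json

# Hypothetical thresholds, for illustration only.
THRESHOLDS = {
    "fill_distance_bps": 100.0,
    "vpin_adverse_minus_favorable": 0.05,
    "p95_slippage_bps": 500.0,
    "shortfall_usd": 50_000.0,
}


def diagnose(signals):
    """Evaluate each signal against its threshold and keep the full trace.

    Returns (report, digest). The digest is a SHA-256 of the serialised
    report, so identical inputs always yield an identical fingerprint.
    """
    trace = []
    for name in sorted(THRESHOLDS):
        trace.append({
            "signal": name,
            "value": signals[name],
            "threshold": THRESHOLDS[name],
            "breached": signals[name] > THRESHOLDS[name],
        })
    all_breached = all(step["breached"] for step in trace)
    report = {
        "finding": "stale_feed_suspected" if all_breached else "inconclusive",
        "trace": trace,
    }
    payload = json.dumps(report, sort_keys=True).encode()
    return report, hashlib.sha256(payload).hexdigest()


if __name__ == "__main__":
    case_study_1 = {
        "fill_distance_bps": 156.0,
        "vpin_adverse_minus_favorable": 0.699 - 0.613,
        "p95_slippage_bps": 9206.0,
        "shortfall_usd": 182_000.0,
    }
    report, digest = diagnose(case_study_1)
    print(report["finding"], digest[:12])
```

Running `diagnose` twice on the same signals returns the same digest, and the trace lists each value, threshold, and comparison result, which is the kind of reasoning path an auditor can inspect.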

“No LLMs. No prompts. Hand-written against real order books since 2023.” That is an architectural requirement for execution diagnostics that can be audited, not a marketing distinction.

The regulatory dimension is concrete. MiFID II, FINRA Rule 6151, and the amended SEC Rule 605 all require firms to demonstrate and document the basis for execution quality decisions. A deterministic engine produces auditable outputs. An LLM produces probability distributions. When a regulator asks for the basis of your execution decisions, the difference is not subtle.

The competitive gap is equally concrete. 90% of buy-side desks run post-trade TCA. Zero commercial TCA vendors surface VPIN or order book toxicity against live fills in real time. The diagnostic gap these case studies illustrate is the standard condition at desks running everything available in the commercial market.

What to Look for in Your Own Execution Logs

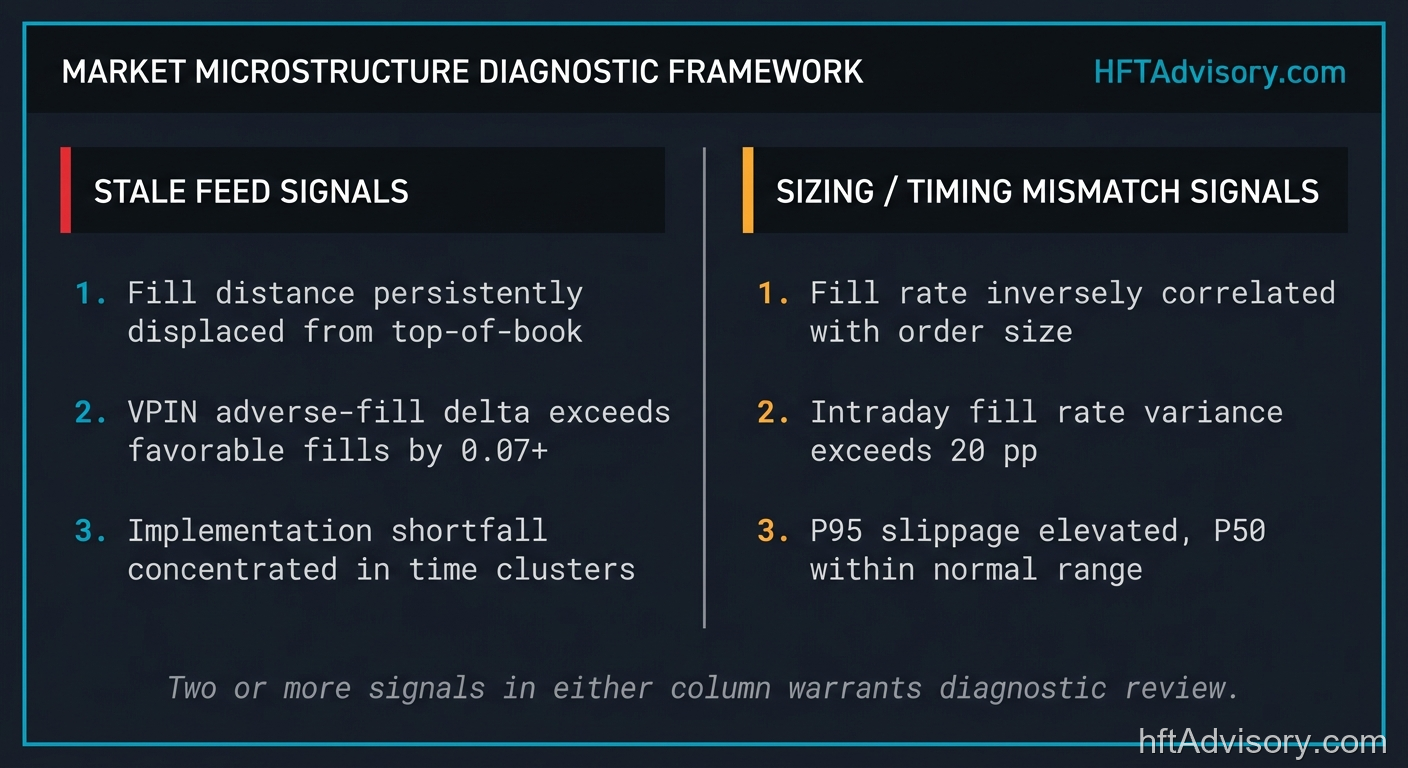

Three Signals That Suggest Stale Feed, Not Strategy Failure

1. Consistent distance from top-of-book across sessions. If orders are landing systematically away from your intended placement and the gap is stable across days and sessions (not correlated with instrument volatility spikes), the offset is more likely a feed-staleness artifact than a strategy calibration issue. A volatile instrument shows variable distance. A stale feed shows a stable, directional offset.

2. VPIN differential between adverse and favorable fills. Pull your fills for a 30-day window. Compute VPIN at each fill timestamp. If VPIN at adverse fills is consistently higher than VPIN at favorable fills by a meaningful margin, you are filling systematically in high-toxicity windows. That is a stale feed signature, not a strategy signature.

3. Directional reject concentration. If your rejects concentrate in windows where the market moved against your order direction after submission, those orders are arriving at stale prices in momentum conditions. A reject rate that is directionally correlated with short-term market movement is a feed diagnostic, not an execution logic diagnostic.
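Signals 2 and 3 above can be checked with a few lines over your own logs. The sketch assumes fills tagged as adverse or favorable with a VPIN value already attached, and rejects tagged with the signed price move after submission; those record layouts and function names are hypothetical.

```python
from statistics import mean


def vpin_differential(fills):
    """fills: iterable of (vpin_at_fill, is_adverse).

    Returns mean VPIN at adverse fills minus mean VPIN at favorable fills,
    or None if either group is empty.
    """
    fills = list(fills)
    adverse = [v for v, is_adverse in fills if is_adverse]
    favorable = [v for v, is_adverse in fills if not is_adverse]
    if not adverse or not favorable:
        return None
    return mean(adverse) - mean(favorable)


def directional_reject_share(rejects):
    """rejects: iterable of (side, price_move_after_submit).

    side is +1 for buys and -1 for sells. A move counts as 'away' when
    price rose after a buy or fell after a sell. Returns the share of
    rejects where the market moved away, or None if there are no rejects.
    """
    rejects = list(rejects)
    if not rejects:
        return None
    away = sum(1 for side, move in rejects if side * move > 0)
    return away / len(rejects)


if __name__ == "__main__":
    print(vpin_differential([(0.699, True), (0.613, False)]))
    print(directional_reject_share([(1, 0.2), (1, -0.1), (-1, -0.3)]))
```

What counts as a "meaningful margin" for the differential, or a high directional share, depends on the instrument's own baseline and is left to the desk.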

Three Signals That Suggest Order Sizing/Timing Mismatch

1. Fill rate divergence between size bands greater than 20 percentage points. A 37 pp gap between small and large order fill rates on the same instrument in the same execution window is not a market liquidity problem. It is a sizing and visibility problem.

2. Intraday fill rate variation greater than 15 percentage points. A 10 pp variation across execution windows is within normal range for most instruments. A 15 pp or greater variation, consistent across sessions, identifies a structural dead window. The $302K modeled recovery in Case Study 2 came from a 23.4 pp gap. That is not noise. That is a schedule.

3. P95 slippage outlier concentration in specific time windows. If your worst-percentile slippage outcomes cluster in a specific time window rather than distributing across the trading day, you are systematically participating in the worst windows. P95 slippage that is window-specific points to a timing adjustment, not a strategy adjustment.
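The two numeric thresholds in this list (20 pp between size bands, 15 pp across the day) translate directly into a check. The sketch below uses those thresholds as stated; the fill-rate levels for the size bands in the example are hypothetical, since only the 37 pp gap is given in the case study.

```python
SIZE_BAND_GAP_PP = 20.0   # threshold from signal 1
INTRADAY_GAP_PP = 15.0    # threshold from signal 2


def gap_pp(rates):
    """Best minus worst fill rate, in percentage points."""
    return max(rates.values()) - min(rates.values())


def flag_sizing_timing(size_band_rates, hourly_rates):
    """Return the list of sizing/timing flags raised by the two gaps."""
    flags = []
    if gap_pp(size_band_rates) > SIZE_BAND_GAP_PP:
        flags.append("size_band_gap")
    if gap_pp(hourly_rates) >= INTRADAY_GAP_PP:
        flags.append("intraday_dead_window")
    return flags


if __name__ == "__main__":
    # Hypothetical band levels that produce a 37 pp gap.
    size_bands = {"small": 50.0, "large": 13.0}
    # Best and worst hours from Case Study 2.
    hours = {"best": 37.7, "worst": 14.3}
    print(flag_sizing_timing(size_bands, hours))
```

A desk would feed in fill rates computed per size band and per execution window over several sessions, since the text stresses that the gap must be consistent across sessions to count as structural.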

Conclusion

The standard for execution quality monitoring at a desk running significant order flow is real-time cross-referencing of feed state, toxicity signals, and fill outcomes against order book conditions at execution time. Post-trade TCA satisfies regulatory reporting requirements. It does not satisfy that operational standard.

If your current execution monitoring cannot tell you, at fill time, whether your pricing feed was current and whether you were filling into elevated adverse selection risk, your post-trade attribution is measuring the damage after it has occurred. Two case studies. One stale feed. $182K in measured shortfall on the first. $302K in modeled recovery on the second. The patterns that produced those numbers are not unusual. The infrastructure to identify them in time to act is.

The diagnostic methodology is described in detail at hftadvisory.com/microstructure-diagnostics.

Originally shared as LinkedIn posts on April 6-7, 2026.

HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.
