Table of Contents

- What VPIN Actually Measures — And What the Academic Debate Gets Wrong for Practitioners

- The LOB Imbalance Signal That Your TWAP Stack Is Ignoring

- Three Incidents That Show What Happens When You Cannot See the Book in Real Time

- The Monitoring Stack That Actually Surfaces Informed Flow Before the Fill

- Why Open-Source Wins the Auditable Analytics Argument

- Practical Framework: What a Real-Time Microstructure Monitoring Stack Must Include

Introduction

A desk running $500M in daily equities flow asked me to look at their execution quality problem. Their TWAP was tuned. Their algo logic was sound. Their fills, on paper, were within tolerance. But their P&L was bleeding during specific volatility windows — and nobody could explain where the leakage was coming from.

I pulled up their monitoring stack. Fill rate, order count, average slippage. All the standard metrics. What they did not have — what the vast majority of desks I have audited in 20+ years of production HFT infrastructure do not have — was any visibility into the composition of the order flow they were trading against. They were monitoring execution. They were not monitoring the market.

The signal that explains that P&L bleed is not in the fill. It is in the order book, in the volume clock, in the proportion of trade-initiated volume that comes from informed participants rather than noise traders. That signal has a name: VPIN. Volume-Synchronized Probability of Informed Trading. And watching it move in real time — not reconstructed post-close, not estimated end-of-day — changes what a desk can act on.


This article is for CTOs and Heads of Electronic Trading who are asking the right question: why does our execution quality degrade in specific market conditions, and is there a structural way to see it coming? The answer requires understanding what VPIN measures, where the academic debate sits (and why most of the debate is irrelevant to the practitioner), what the order book’s imbalance signal tells you before TWAP adjusts, and what three documented market incidents reveal about the cost of monitoring blind spots. Then I will show you what a monitoring stack that actually surfaces this looks like — and why an open-source, auditable implementation changes the calculus against a $30,000/seat terminal.

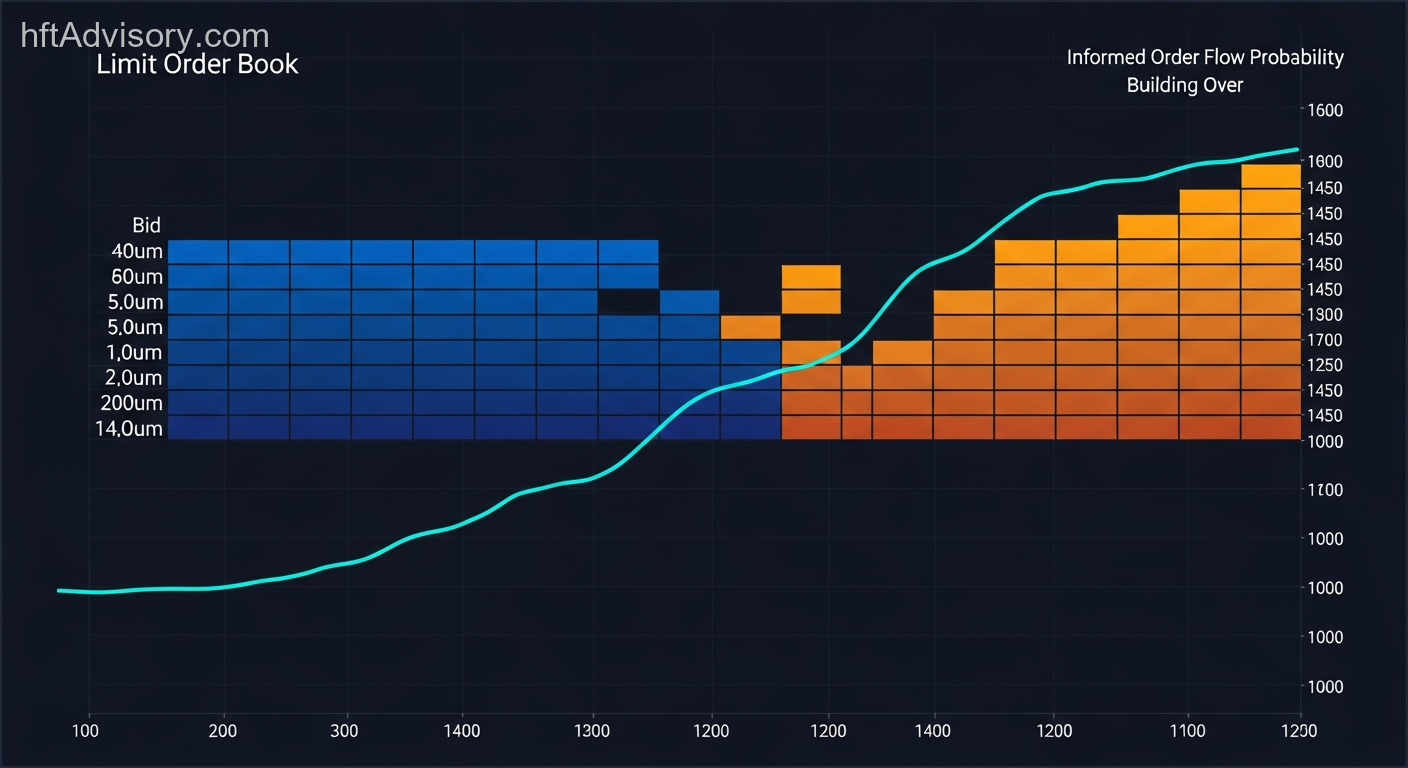

VisualHFT running against a live feed. VPIN updates in real time, not on end-of-day batch cycles. Order toxicity building, bar by bar, before the price print reflects it.

What VPIN Actually Measures — And What the Academic Debate Gets Wrong for Practitioners {#what-vpin-actually-measures}

Easley, López de Prado, and O’Hara published the foundational VPIN paper in 2012 as “Flow Toxicity and Liquidity in a High Frequency World” in the Review of Financial Studies (Vol. 25, No. 5, pp. 1457–1493). The core insight: if you group trades into equal-volume buckets (a volume clock rather than a time clock) and classify the volume in each bucket as buy-initiated or sell-initiated, you get a real-time, continuously updating measure of the proportion of informed order flow in the market. When informed participants concentrate their activity, because they know something the market makers do not, VPIN rises.
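For reference, the estimator defined in that paper can be written compactly. Every bucket holds the same volume V. The volume in bucket τ is split into a buy-classified part V^B_τ and a sell-classified part V^S_τ, and VPIN averages the absolute imbalance over a rolling window of the last n buckets:

$$
\mathrm{VPIN} = \frac{\sum_{\tau=1}^{n} \left| V_\tau^{S} - V_\tau^{B} \right|}{n V},
\qquad V_\tau^{B} + V_\tau^{S} = V
$$

Because the imbalance in any single bucket is at most V, the ratio runs from 0 (perfectly balanced flow) to 1 (every bucket entirely one-sided). The bucket size V and the window length n are calibration choices.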

The practical interpretation of sustained elevated readings is that you are looking at a statistically elevated proportion of informed order flow. (In our implementation, the 90th–95th percentile of the VPIN distribution for the liquid contracts we monitor sits around 0.85.) Market makers facing that composition widen spreads or pull depth entirely, and execution quality for everyone else degrades simultaneously.

Now for the debate, because it is real and you should understand it. Andersen and Bondarenko published a direct critique in 2014 — “VPIN and the Flash Crash” in the Journal of Financial Markets (Vol. 17). Their finding: VPIN peaked after the Flash Crash, not before it. Once trading intensity was controlled for, they found no incremental predictive power for future volatility. This is a legitimate empirical finding on a specific event.

Easley et al. responded — conceding timing nuances on the Flash Crash specifically, but maintaining that sustained elevated VPIN reflects adverse selection conditions for market makers as a structural matter, regardless of whether it leads or lags any specific discrete event.

Here is what the practitioner takeaway should be from that debate: the question of whether VPIN is a leading indicator of volatility or a coincident measure of informed flow concentration is a research question. The operational question is different. At sustained elevated levels, VPIN reflects that the order flow environment has shifted toward informed participants. Whether that shift leads the price print by 30 seconds or moves with it, your TWAP is still being filled into a changed composition. The value is having the number live so you can act — not reconstructing it post-close when the fill is already done.

Low, Li, and Marsh (2018, Journal of Risk) applied BV-VPIN to international equity markets and confirmed that elevated BV-VPIN foreshadows elevated volatility across markets. That is a different empirical question from the Flash Crash timing debate, and it reinforces the practitioner utility of monitoring the metric in real time.

One technical note worth flagging: Andersen and Bondarenko showed that BV-VPIN (bulk-volume classification) and TR-VPIN (trade-rule classification) can behave in opposite directions in specific conditions. Most production implementations use BV-VPIN for reasons of computational tractability. Our implementation uses BV-VPIN, and the threshold parameters we reference (the 0.7 and 0.85 levels) are operationally derived from that distribution — they are practitioner-calibrated parameters, not canonical published thresholds. Any implementation needs to calibrate these against the specific instruments and market conditions in scope.
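Here is a minimal Python sketch of BV-VPIN. It assumes trades have already been grouped into equal-volume buckets and that each bucket is classified from its own price change. The original paper classifies time bars inside each bucket; this sketch collapses that step for brevity. The function names `bulk_classify` and `rolling_vpin` are hypothetical and do not mirror VisualHFT's C# code. Bulk classification assigns each bucket a buy share of Z(ΔP/σ), where Z is the standard normal CDF and σ is the standard deviation of price changes.

```python
import math
from statistics import pstdev


def normal_cdf(x: float) -> float:
    # Standard normal CDF expressed through the error function.
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bulk_classify(price_changes: list[float], volume: float) -> list[tuple[float, float]]:
    # Bulk volume classification: the buy share of each bucket is Z(dP / sigma),
    # where sigma is the standard deviation of the bucket price changes.
    # Returns one (buy_volume, sell_volume) pair per bucket.
    sigma = pstdev(price_changes)
    splits = []
    for dp in price_changes:
        buy_share = normal_cdf(dp / sigma) if sigma > 0 else 0.5
        splits.append((volume * buy_share, volume * (1.0 - buy_share)))
    return splits


def rolling_vpin(splits: list[tuple[float, float]], window: int) -> list[float]:
    # VPIN for each full window: sum of |V_sell - V_buy| over the last `window`
    # buckets, divided by the total volume in those buckets (n * V when every
    # bucket holds V).
    values = []
    for end in range(window, len(splits) + 1):
        chunk = splits[end - window:end]
        imbalance = sum(abs(sell - buy) for buy, sell in chunk)
        total = sum(buy + sell for buy, sell in chunk)
        values.append(imbalance / total)
    return values


splits = bulk_classify([0.02, -0.01, 0.05, 0.04, -0.03, 0.06], volume=10_000)
print(rolling_vpin(splits, window=3))
```

One caveat about this design: computing σ over the whole input, as the sketch does, uses information from later buckets. A live implementation would estimate σ from a trailing sample only.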

The LOB Imbalance Signal That Your TWAP Stack Is Ignoring {#the-lob-imbalance-signal}

Cont, Stoikov, and Talreja published “A Stochastic Model for Order Book Dynamics” in Operations Research (Vol. 58, No. 3, pp. 549–563) in 2010. The follow-on work — Cont, Kukanov, and Stoikov’s “The Price Impact of Order Book Events” — established a near-linear relationship between order flow imbalance and short-horizon price changes, with the slope inversely proportional to market depth.

The implication is structural: order book imbalance is a documented short-horizon price predictor. Informed participants are repositioning based on signals that show up in the book’s composition before they show up in the price print.

Here is the monitoring gap. Most execution stacks track top-of-book: the best bid, the best ask, the spread. Some desks extend to a few levels. Oxford research on multi-level order flow imbalance (Xu, Gould, and Howison, “Multi-Level Order-Flow Imbalance in a Limit Order Book”) extended the analysis to 10+ depth levels and found that incorporating deeper levels significantly improves short-horizon price prediction compared with top-of-book measurement alone. The book is communicating information across its depth. Monitoring only the top is reading the first sentence of a paragraph.

When your TWAP algorithm is adjusting its pace, it is responding to price. It is not responding to the composition of what is on the other side of the trade. By the time the TWAP adjusts, the informed participants who repositioned into that imbalance have already filled. Your execution is catching the aftermath, not the signal.

The operational threshold we use in our monitoring, sustained VPIN above 0.7 for 8 or more consecutive volume bars, is a practitioner-derived parameter, not a published academic standard. The underlying logic, however, rests on the adverse-selection argument of Easley et al. and is reinforced by Cont et al.’s imbalance–price relationship: when informed flow concentration stays elevated, executing into that order flow carries a structural adverse selection cost. The parameter is calibrated to the specific instruments we monitor; any deployment needs to validate it against its own distribution.
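The sustained-reading rule is simple to express in code. Below is a minimal streaming sketch in Python. It assumes one VPIN value arrives per completed volume bar. The class name is hypothetical, and the defaults (0.7, 8 bars) are the practitioner parameters quoted above, not universal constants.

```python
class SustainedVpinDetector:
    """Flags when VPIN has stayed above a threshold for a minimum run of volume bars."""

    def __init__(self, threshold: float = 0.7, min_bars: int = 8) -> None:
        self.threshold = threshold
        self.min_bars = min_bars
        self.run = 0  # consecutive bars above threshold so far

    def update(self, vpin: float) -> bool:
        # A reading above threshold extends the run; any reading at or below it resets it.
        self.run = self.run + 1 if vpin > self.threshold else 0
        return self.run >= self.min_bars


det = SustainedVpinDetector()
flags = [det.update(v) for v in [0.72] * 8 + [0.65]]
# The first seven flags are False, the eighth is True, and the 0.65 reading resets to False.
```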

What changes the picture is monitoring both signals simultaneously — VPIN for order flow composition, multi-level LOB imbalance for structural depth — in real time, updating against the live feed.

Three Incidents That Show What Happens When You Cannot See the Book in Real Time {#three-incidents}

The 2010 Flash Crash

On May 6, 2010, the Dow Jones Industrial Average dropped 998.5 points, approximately 9%, in 36 minutes. Buy-side liquidity in the E-Mini S&P 500 fell from $6.0 billion to $2.7 billion. The CFTC’s 2015 complaint against Navinder Singh Sarao alleged that his layering algorithm had at times placed spoof orders representing 20–29% of the E-Mini’s visible sell-side depth that day. The order book composition was structurally distorted for hours before the crash.

Here is what the forensic read reveals for practitioners: the LOB was not showing depth that reflected genuine liquidity intention. The composition of the book — the balance between resting orders with genuine fill intent and ephemeral orders placed to manipulate visible depth — was deteriorating over a multi-hour window. Desks monitoring at fill-rate granularity had no way to see that deterioration. It was not visible in the price until the cascade began.

Knight Capital, August 1, 2012

Knight Capital’s SMARS (Smart Market Access Routing System) sent approximately 4 million erroneous orders in 45 minutes, producing a $460 million loss. The SEC subsequently charged Knight with inadequate pre-submission controls.

The monitoring failure here was distinct from the Flash Crash but equally structural: no execution monitoring system halted the order bleed as it was occurring. The P&L impact accumulated in real time while the order flow continued uninterrupted. The pattern of order-level activity — what the microstructure of those 45 minutes actually looked like from an order flow composition standpoint — was not being surfaced anywhere that a Head of Desk could act on it. The loss was visible only in the position ledger, not in the execution stream.

Sarao Spoofing, 2009–2015

Sarao’s manipulation spanned 400+ trading days. The total artificial sell-side pressure has been estimated at $170–200 million. It took years of post-trade forensic reconstruction because no desk was monitoring real-time book composition. The $38 million in sanctions came after the damage was done, reconstructed from data that was always there — just never rendered live.

The pattern across all three events is the same: the signal was in the order book. The infrastructure gap was real-time rendering of what that book was actually showing about the composition of the flow.

The Monitoring Stack That Actually Surfaces Informed Flow Before the Fill {#the-monitoring-stack}

CME Group’s 2025 liquidity framework — “Reassessing Liquidity: Beyond Order Book Depth” — explicitly argues that order book depth alone is insufficient for execution risk assessment. When the exchange itself is publishing documentation arguing for multi-metric dashboards, the standard has shifted.

Coalition Greenwich’s 2024 TCA research found that nearly 90% of buy-side desks conduct transaction cost analysis, and the dominant model remains post-trade. No standard commercial TCA tool surfaces VPIN or LOB toxicity in real time. That gap spans offerings from S&P Global, Tradeweb, ACA Group, Talos, and Quod Financial.

Bloomberg Terminal pricing is above $30,000 per seat per year (single-seat pricing moved to $31,980 post-2025). Beyond the cost, Bloomberg does not publish its microstructure analytic computation methodology. When VPIN spikes on a Bloomberg terminal (to the extent that metric is available at all), you cannot trace what it is actually computing.

Research-grade open-source VPIN tools exist — VPIN_HFT and jheusser/vpin are examples. They are not production-ready. They lack exchange connectors, real-time feed integration, and the rendering layer that makes the metric actionable at the desk level.

FINRA Rule 6151, enacted in June 2024, increases order routing disclosure requirements — raising the regulatory burden on execution quality documentation. Desks that cannot demonstrate real-time monitoring of execution quality conditions are increasingly exposed on compliance as well as P&L dimensions.

A February 2025 arXiv paper on TWAP execution found that TWAP orders are being systematically front-run by informed RL-based market makers that detect predictable order-splitting patterns. This is not a theoretical vulnerability. Predictable execution mechanics are being exploited by participants who can see the pattern. The defense is monitoring the market condition you are executing into, not optimizing the algorithm in isolation.

Why Open-Source Wins the Auditable Analytics Argument {#why-open-source-wins}

VisualHFT — which I built after 20+ years in production HFT infrastructure specifically because I kept encountering this monitoring gap — is the only production-grade open-source tool that surfaces VPIN, multi-level LOB imbalance, Market Resilience, OTT Ratio, and TTO Ratio simultaneously in real time against live exchange feeds.

The current state: 1,100+ GitHub stars, 215+ forks, 500+ commits, Apache-2.0 license, C#/.NET 8.0. Plugin architecture with 8 exchange connectors.

The cost comparison is real but secondary: VisualHFT costs $0, against Bloomberg’s $31,980 per seat per year, and that arithmetic is straightforward. The more important argument is auditability.

Bloomberg does not publish its microstructure analytic computation methodology. When a metric moves on a terminal, the computation is opaque. For an institution that needs to demonstrate to regulators, auditors, or its own risk committee exactly what a metric is computing and why a threshold triggered an action, opacity is a liability.

VisualHFT is 500+ commits of readable, forkable, auditable code. When VPIN spikes, you trace exactly what it is computing — the bucket size, the volume clock calibration, the classification logic. You can extend it. You can validate it against your own instruments. You can modify the threshold parameters and document why.

The auditability argument is becoming a compliance argument. As FINRA Rule 6151 disclosure requirements tighten and execution quality documentation demands increase, the ability to explain your monitoring stack — not just assert that it exists — is a competitive and regulatory differentiator.

Practical Framework: What a Real-Time Microstructure Monitoring Stack Must Include {#practical-framework}

After 20+ years in production trading infrastructure, and having audited monitoring stacks across institutional and prop environments, here is the minimum architecture for a desk that needs to see informed order flow before it becomes a fill quality problem.

1. Order Flow Composition Layer (VPIN)

- Volume-Synchronized Probability of Informed Trading, updating in real time against the live feed — not reconstructed end-of-day

- Threshold calibration specific to the instruments in scope (the 90th–95th percentile for your distribution)

- Sustained-reading detection: a single elevated bar is noise; 8+ consecutive bars above a calibrated threshold is a signal worth acting on (operationally derived parameter — validate against your own data)

- Feeds into execution algorithm parameter adjustment: when VPIN is sustained-elevated, TWAP pacing and aggressiveness should reflect the composition change
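Calibrating the threshold per instrument, as the list recommends, can be as simple as taking a percentile of that instrument's own VPIN history. The sketch below is a minimal Python version built on the standard library's `statistics.quantiles`. The function names are hypothetical. In practice you would apply `calibrate_threshold` separately to each instrument's history rather than pooling instruments.

```python
from statistics import quantiles


def calibrate_threshold(vpin_history: list[float], percentile: int = 90) -> float:
    # quantiles(n=100) returns the 99 cut points for percentiles 1..99;
    # "inclusive" treats the history as the full population of observed readings.
    if not 1 <= percentile <= 99:
        raise ValueError("percentile must be between 1 and 99")
    cuts = quantiles(vpin_history, n=100, method="inclusive")
    return cuts[percentile - 1]


def calibrate_band(vpin_history: list[float]) -> tuple[float, float]:
    # The 90th-95th percentile band discussed in the text.
    return calibrate_threshold(vpin_history, 90), calibrate_threshold(vpin_history, 95)
```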

2. Multi-Level LOB Imbalance (10+ Depth Levels)

- Top-of-book imbalance is insufficient — Cont et al.’s framework and the Oxford multi-level imbalance research establish this clearly

- Monitor bid/ask volume imbalance across 10+ depth levels on both sides

- Track depth weighted by level to separate ephemeral top-of-book signals from structural repositioning at depth

- Surface the imbalance directionally: consistent directional pressure building across levels is a different signal than a single-level spike
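The minimal Python sketch below covers the three ideas in that list: per-level imbalance across the book, a depth-weighted aggregate, and a directional-consistency score. The exponential level weighting and its `decay` parameter are assumptions chosen for illustration, since the list does not prescribe a weighting scheme. The function names are hypothetical.

```python
import math


def level_imbalances(bid_sizes: list[float], ask_sizes: list[float]) -> list[float]:
    # (bid - ask) / (bid + ask) at each depth level; level 0 is top of book.
    return [(b - a) / (b + a) if b + a > 0 else 0.0 for b, a in zip(bid_sizes, ask_sizes)]


def weighted_imbalance(bid_sizes: list[float], ask_sizes: list[float], decay: float = 0.3) -> float:
    # Aggregate imbalance with weights exp(-decay * level): deeper levels count less
    # but still contribute. decay=0 weights every level equally.
    num = den = 0.0
    for level, (b, a) in enumerate(zip(bid_sizes, ask_sizes)):
        w = math.exp(-decay * level)
        num += w * (b - a)
        den += w * (b + a)
    return num / den if den > 0 else 0.0


def directional_consistency(bid_sizes: list[float], ask_sizes: list[float], decay: float = 0.3) -> float:
    # Share of levels whose imbalance has the same sign as the weighted aggregate.
    # Near 1.0 means pressure is building across the book, not at a single level.
    overall = weighted_imbalance(bid_sizes, ask_sizes, decay)
    levels = level_imbalances(bid_sizes, ask_sizes)
    if overall == 0 or not levels:
        return 0.0
    agree = sum(1 for x in levels if x * overall > 0)
    return agree / len(levels)
```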

3. Market Resilience Indicator

- How quickly does the book recover depth after an aggressive order? Recovery speed is a measure of genuine liquidity vs. synthetic depth

- Fast recovery = deep genuine interest; slow recovery = thin book with resting orders that step back when tested

- Deteriorating resilience under sustained VPIN elevation is a compounding risk signal
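One way to turn the resilience idea into a number is to measure how long visible depth takes to climb back to a fraction of its pre-trade level after an aggressive order. The sketch below is a simplified illustration under that assumption. It is not VisualHFT's Market Resilience formula. The 90% recovery fraction and the function name are hypothetical.

```python
from typing import Optional


def recovery_time(depth_samples: list[tuple[float, float]], shock_time: float,
                  recovery_frac: float = 0.9) -> Optional[float]:
    """Seconds until depth regains `recovery_frac` of its last pre-shock level.

    depth_samples: (timestamp_seconds, visible_depth) pairs sorted by time.
    Returns None if there is no pre-shock baseline or depth never recovers
    within the sample.
    """
    before = [d for t, d in depth_samples if t < shock_time]
    if not before:
        return None
    target = recovery_frac * before[-1]
    for t, d in depth_samples:
        if t >= shock_time and d >= target:
            return t - shock_time
    return None
```

Tracking the median of these recovery times over recent shocks, and watching whether it lengthens while VPIN stays elevated, gives the compounding-risk view described in the last bullet.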

4. Execution Timing Metrics (OTT and TTO Ratios)

- Order-to-Trade (OTT) Ratio: the ratio of orders submitted to trades executed. An elevated OTT ratio is a signature of order book manipulation: layering and spoofing create order flow that never fills

- Trade-to-Order (TTO) Ratio: the reciprocal of OTT, i.e., trades executed per order submitted, which reads directly as execution efficiency

- Sustained abnormal OTT in the instruments you are executing into is a manipulation-risk signal, not a statistical curiosity
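Here is a minimal Python sketch of both ratios over a counting window, plus a simple abnormality check against the instrument's own recent history. The text leaves open what counts as an order (new orders only, or also modifications and cancels). The 3x multiplier and the function names are hypothetical.

```python
from statistics import median


def ott_ratio(orders_submitted: int, trades_executed: int) -> float:
    # Orders per trade; infinite when orders were sent but nothing traded.
    if trades_executed == 0:
        return float("inf") if orders_submitted > 0 else 0.0
    return orders_submitted / trades_executed


def tto_ratio(orders_submitted: int, trades_executed: int) -> float:
    # Trades per order, the reciprocal of OTT (0.0 when no orders were submitted).
    return trades_executed / orders_submitted if orders_submitted > 0 else 0.0


def ott_is_abnormal(current_ott: float, recent_otts: list[float], multiplier: float = 3.0) -> bool:
    # Flag the current window when OTT exceeds a multiple of its recent median.
    return current_ott > multiplier * median(recent_otts)
```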

5. Real-Time Rendering — Not Post-Trade Reconstruction

- The entire value of these metrics is temporal. Post-trade TCA tells you what happened. Real-time rendering tells you what is happening.

- The stack must update on the volume clock, not on a time clock, to remain synchronized with the VPIN computation

- Dashboard rendering must be at a granularity a Head of Desk can act on: bar-by-bar, not session-summary
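Updating on the volume clock means emitting a bar each time a fixed quantity has traded, and splitting any trade that straddles a bucket boundary. Below is a minimal Python sketch. It assumes integer trade sizes and one (price, size) tuple per trade. The function name is hypothetical.

```python
from typing import Iterable, Iterator


def volume_bars(trades: Iterable[tuple[float, int]], bucket_volume: int) -> Iterator[float]:
    """Yield the closing price of each completed equal-volume bucket.

    A trade larger than the space left in the current bucket is split across
    buckets, so every emitted bucket holds exactly `bucket_volume` units.
    The trailing partial bucket is not emitted.
    """
    if bucket_volume <= 0:
        raise ValueError("bucket_volume must be positive")
    filled = 0
    for price, size in trades:
        remaining = size
        while remaining > 0:
            take = min(remaining, bucket_volume - filled)
            filled += take
            remaining -= take
            if filled == bucket_volume:
                yield price
                filled = 0
```

Differencing consecutive closing prices gives the per-bucket price changes that bulk volume classification consumes.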

6. Auditability

- Every metric’s computation must be traceable to its source logic

- Threshold parameters must be documented with their calibration rationale

- The monitoring stack itself must be auditable — not because regulators are watching today, but because they will be
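One lightweight way to make threshold parameters auditable is to store them, together with their rationale, in an immutable record and log a content hash with every alert. A reviewer can then tie an action to the exact parameters in force. Below is a minimal Python sketch. The field names and the example instrument and bucket volume are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ThresholdConfig:
    instrument: str
    vpin_threshold: float
    min_consecutive_bars: int
    bucket_volume: int
    rationale: str  # free-text calibration note, e.g. percentile and sample period

    def fingerprint(self) -> str:
        # Deterministic SHA-256 over the sorted JSON form; any parameter change alters it.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


cfg = ThresholdConfig("HYPOTHETICAL_CONTRACT", 0.7, 8, 5_000,
                      "90th percentile of trailing VPIN history")
print(cfg.fingerprint())
```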

Conclusion

The standard for execution quality monitoring has moved. CME Group’s own 2025 framework says that order book depth alone is insufficient for execution risk assessment. That is the exchange making the case for multi-metric dashboards. If your monitoring stack is still aggregating fills and reporting average slippage, you are measuring the outcome of informed flow, not its presence.

The 2010 Flash Crash, Knight Capital’s 2012 loss, and Sarao’s six-year manipulation all share the same structural gap: the signal was in the book. Real-time visibility into that signal — order flow composition, multi-level imbalance, market resilience — existed in the data. The infrastructure to render it actionable did not.

The diagnostic question is specific: when VPIN holds above a calibrated threshold for 8+ consecutive volume bars — and if your monitoring stack does not have a VPIN layer, that threshold does not exist — what does your current stack tell you about the order flow composition you are executing into before it becomes a fill quality problem?

If the answer is that your TCA runs post-trade, the gap is not theoretical.

Stack diagnostics at this level — VPIN threshold calibration, multi-level imbalance computation, real-time resilience rendering — are the scope of HFT consulting architecture reviews, not implementation sprints.

Originally shared as a LinkedIn post by Ariel Silahian.

Ariel Silahian has 20+ years of experience in production HFT infrastructure. VisualHFT is open source — Apache-2.0. GitHub repository: github.com/visualhft/VisualHFT


HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.

I help financial institutions architect high-frequency trading systems that are fast, stable, and profitable.

>> Learn more about what I do:

https://hftAdvisory.com

