A $2M infrastructure upgrade produced no measurable latency improvement. The hardware was not the problem. A sorted array in the order book was.

Table of Contents

- The $2M Lesson: Hardware Waits on Algorithms

- Island ECN and the 1996 Proof of Concept

- The Data Structure That Decides Everything

- Modern Evidence: LMAX, Tokyo Stock Exchange, and NSE India

- Five Questions Before Your Next Hardware Budget

- Conclusion: The Standard Has Been Set

The $2M Lesson: Hardware Waits on Algorithms {#the-2m-lesson}

$2M co-location upgrade. FPGA deployment. New server racks. Microsecond-class NICs. Board-approved. The latency profile didn’t move.

My team profiled the stack. The bottleneck was not the switch, the NIC, or the fiber run. It was a sorted array in the order book.

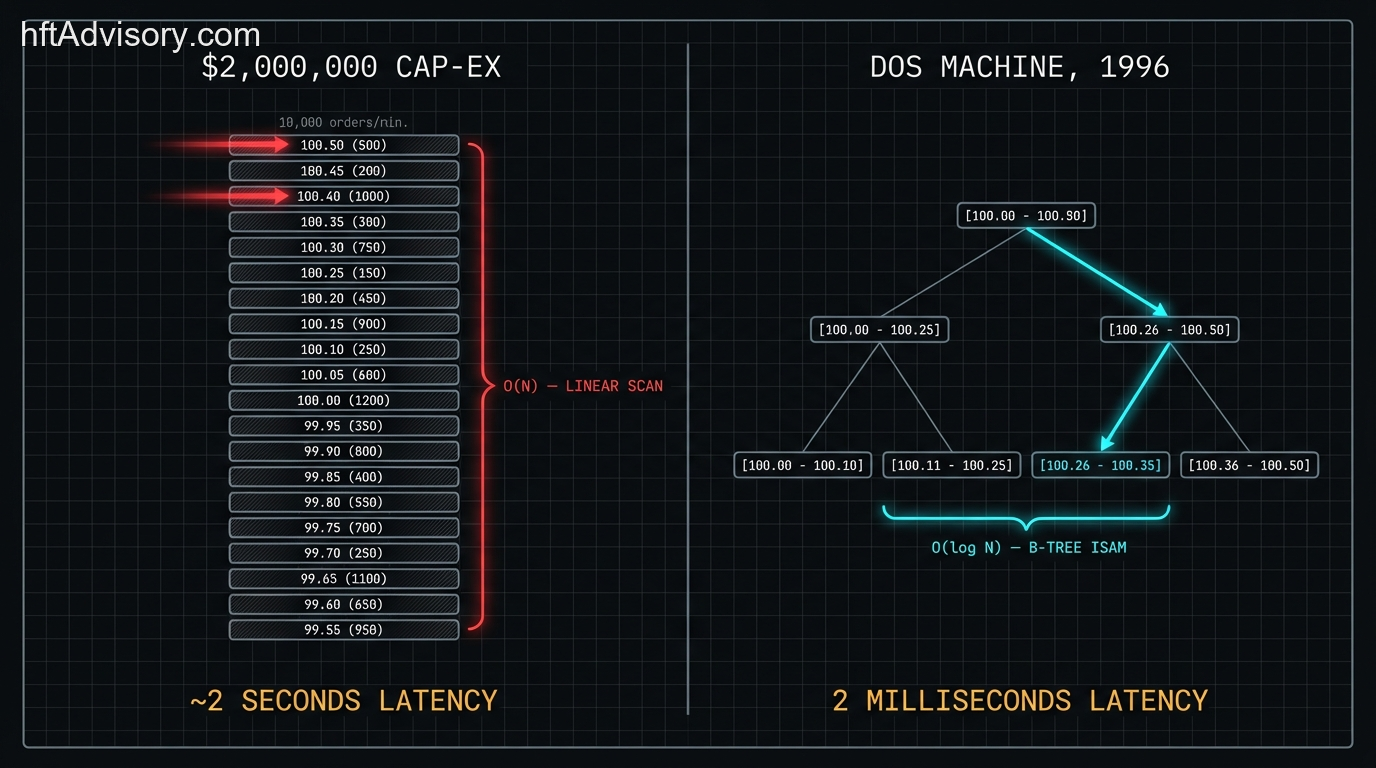

Specifically: inserts at non-best price levels were O(N). Every new order not at the top of the book triggered a linear scan to find its position. At thousands of orders per minute — the kind of volume ECNs were handling by the late 1990s — that scan compounds into a wall. The hardware was waiting on the algorithm.

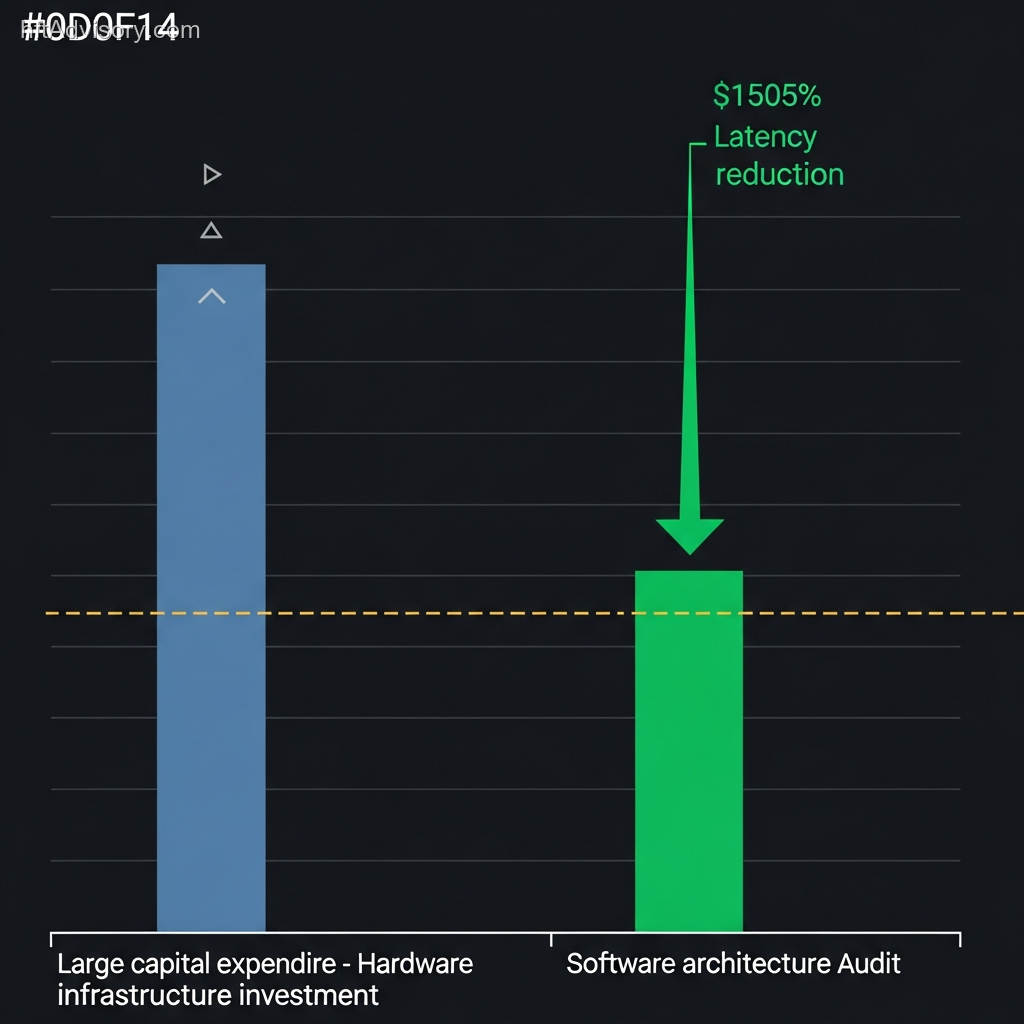

This is a story that repeats across the industry with enough regularity that it has become a diagnostic category in my practice. A firm identifies a latency problem. Leadership approves a hardware refresh. The refresh ships. The latency profile barely moves. Then someone finally profiles the software layer and finds the answer that was always there.

The pattern has a name: premature hardware investment. And it is expensive not just in the capital allocated to the wrong layer, but in the opportunity cost of the architectural debt that remains untouched underneath.

What makes this particularly striking is that the correct architectural answer has been publicly documented since 1996. The proof of concept ran on a DOS machine.

Island ECN and the 1996 Proof of Concept {#island-ecn-1996}

Josh Levine built the Island matching engine in FoxPro for MS-DOS. Co-founded with Jeff Citron at Datek Online, the system ran on a single machine at 50 Broad Street in Manhattan. Single-threaded. Event-driven by deliberate design. No context switches. No lock contention.

The order book used in-memory B-tree indexing via an ISAM storage engine. Zero disk access during matching. Every price level accessed in O(log N) time. Levine’s optimization for new-order entry latency: reuse recently freed order records for new orders — a detail he called “hugely important” in his own documentation of the system.

The result was an end-to-end latency of 2 milliseconds when Instinet, the dominant venue, was delivering 2 seconds. A three-orders-of-magnitude improvement. Not from superior hardware — from superior architecture on commodity hardware.

By 2001, Island was clearing 350 million shares per day. The FoxPro stack had evolved well beyond its original form by that point, but the design principles Levine established in 1996 remained the foundation throughout that growth.

Levine later articulated his own design philosophy in writing: “it is usually the architecture and algorithms that matter more then raw platform speed.” [sic]

That sentence does more work than most engineering teams give it credit for. It was written by the person who demonstrated it with a measurable, reproducible result in a production environment — not in a benchmark, not in a paper, in a live market that was clearing hundreds of millions of shares daily.

The lesson has been available for thirty years. Most firms have not absorbed it.

The Data Structure That Decides Everything {#data-structure}

The order book data structure is the single most consequential architectural decision in a matching engine. Everything downstream — hardware provisioning, co-location spend, FPGA investment — inherits its performance ceiling from this decision.

The diagram above shows what a sorted array insert looks like at non-best prices versus a hash map insert at the same price level. The sorted array requires a linear scan from the front of the list to the insertion point. At low order volumes, this is invisible. At thousands of orders per minute, it becomes a bottleneck that no hardware upgrade can solve because the bottleneck is in the algorithm’s time complexity, not in the clock speed executing it.

Production matching engines use a combination of three structures. The distinction between implementations is the difference between O(N) and O(1) on the operations that run most frequently:

Price-level index (BST / Red-Black Tree): A balanced binary search tree maintains a sorted list of active price levels. Adding a new price level costs O(log M) where M is the number of distinct price levels — typically a small number relative to total order volume. Best bid and best offer retrieval runs in O(1) by caching the tree’s minimum and maximum nodes.

Per-price queue (doubly-linked list): Orders at each price level sit in a doubly-linked list, ordered by arrival time for FIFO price-time priority. Adding an order at an existing price level costs O(1). Cancellation of any specific order costs O(1) because the node can be spliced out directly.

Hash maps (dual keyed): Two hash maps run in parallel. The first keys on Order ID — enabling O(1) cancel and execute operations regardless of where in the book the order sits. The second keys on price — enabling O(1) access to any specific price level node for insert operations on existing levels. This is the structure that eliminates the linear scan entirely.

The result: Add at a new price level costs O(log M). Add at an existing price level costs O(1). Cancel costs O(1). Execute costs O(1). GetBestBid/Offer costs O(1). The only non-constant operation is new price level creation — and in most markets, the number of distinct price levels is bounded and small relative to order volume.

Compare this to the sorted array implementation. Add at best price: O(1) amortized. Add at non-best price: O(N) — a linear scan through every existing order to find the insertion point. As order book depth increases and order flow concentrates away from best price, this cost compounds. The hardware cannot accelerate it because the cost grows with N, not with clock speed.

This is not an academic distinction. CME Globex processes up to 20 million orders on peak days. Production engines at that scale maintain hash maps from price to price level node to guarantee O(1) on the most frequent read operation. The architecture is not optional at those volumes — it is the only architecture that survives contact with production order flow.

Leading tier-1 matching engines on FPGA-accelerated paths achieve sub-5-microsecond per-order processing on price-level hash maps. FPGA-based systems achieve approximately 480 nanoseconds average latency. Research on FPGA-based matching architectures (IEEE Xplore, 2024) is direct on the boundary condition: hardware optimization delivers real benefits, but introduces cost and complexity and offers little value when software efficiency is the true performance bottleneck.

The sorted array is the software inefficiency that renders hardware investment inert.

Modern Evidence: LMAX, Tokyo Stock Exchange, and NSE India {#modern-evidence}

The Island ECN result from 1996 might be dismissed as historical artifact — a different era, different volumes, different market structure. The modern evidence removes that exit.

LMAX Exchange: 6 million transactions per second on one thread

LMAX built a retail financial exchange processing 6 million orders per second on a single JVM thread running on commodity hardware — a 3GHz dual-socket quad-core Nehalem Dell server with 32GB RAM. The engineering team explicitly rejected multi-threaded approaches after profiling showed that processors spent more time managing queues than executing matching logic. Lock contention was the bottleneck. The fix was architectural: the Disruptor pattern, a lock-free ring buffer that eliminated contention entirely.

Six million transactions per second. One thread. Commodity hardware. The architecture was the product.

Tokyo Stock Exchange arrowhead: 200x latency improvement through in-memory design

TSE’s arrowhead system, deployed in January 2010, reduced matching engine latency from approximately 1 second to 5 milliseconds. A 200x improvement. The architectural decision: all transactions processed on in-memory, triply-redundant servers. Zero disk access during matching. The arrowhead4.0 platform (2024) continues this architectural progression.

The improvement came from applying the same principle Levine documented in 1996 — keep the hot path in memory, eliminate I/O from the matching loop — at exchange scale with modern hardware. The hardware matters. The architecture determines whether the hardware can express its capability.

NSE India: targeting nanosecond-class response from April 2026

NSE India announced a move from 100-microsecond-class response times to nanosecond-class, effective April 11, 2026. The target: 100 million transactions per second, from a baseline of approximately 5–6 million. A 1,000x speed increase in throughput. NSE’s framing of the upgrade is explicit: the core gain is the architecture upgrade, not hardware alone.

This is the current frontier. And the pattern is identical to what Levine proved in 1996, what LMAX proved on commodity hardware, what TSE proved at exchange scale. Architecture sets the ceiling. Hardware operates within it.

The share of trades modified within 1 millisecond rose from 11% in 2019 to 17% in 2024 on DAX futures, according to research published in the Quarterly Journal of Economics. The marginal cost of each nanosecond reduction is increasing. At that cost curve, spending capital on hardware before the software architecture is validated is not a conservative investment — it is a high-probability write-off.

Five Questions Before Your Next Hardware Budget {#five-questions}

The diagnostic framework that prevents the $2M mistake is not complicated. It requires answering five questions about the current order book implementation before any hardware procurement is approved.

1. What is the time complexity of a non-best-price insert?

If the answer is O(N) — or if no one in the room knows the answer — the hardware budget is premature. The order book data structure needs to be profiled first. A sorted array insert at non-best price scales linearly with order book depth. At production volumes, this is a hard ceiling on throughput that hardware cannot lift.

2. Has the price-level access layer been profiled in production conditions?

Benchmark environments rarely reproduce the order book depth and order distribution that production markets generate. The linear scan on a sorted array may be invisible in testing at 100 orders and decisive at 10,000. Production profiling — under realistic order flow, realistic book depth, realistic price distribution across the book — is the only valid evidence for where the bottleneck sits.

3. Is there a hash map keyed on price for O(1) price-level access?

Production-grade matching engines maintain dual hash maps: one keyed on Order ID for cancel and execute operations, one keyed on price for insert operations at existing price levels. If the architecture lacks these structures, the matching loop is performing unnecessary work on every insert. No NIC upgrade addresses that cost.

4. Is there any disk I/O in the matching hot path?

Island ECN in 1996 ran zero disk access during matching. TSE’s arrowhead in 2010 ran zero disk access during matching. LMAX runs zero disk access during matching. If there is any disk I/O in the hot path — persistence, logging, audit trail generation — that I/O belongs outside the matching loop, not inside it. The hardware budget for storage is a separate decision from the matching engine architecture.

5. Is there lock contention in the order processing path?

LMAX’s profiling showed that at sufficient throughput, processors spent more time managing locks than executing logic. The fix was a lock-free ring buffer, not faster processors. If the current architecture uses locks in the order processing path, threading model analysis belongs before hardware scaling analysis. Adding cores to a lock-contended system often worsens throughput by increasing the cost of lock arbitration.

These five questions are a pre-flight checklist, not a full architectural audit. The purpose is to establish whether the software architecture is the binding constraint before capital is allocated to hardware. In my experience advising trading desks, the answer to at least one of these questions is disqualifying often enough that the checklist pays for itself in the first conversation.

Conclusion: The Standard Has Been Set {#conclusion}

Island ECN proved the architectural ceiling on a DOS machine in 1996. LMAX confirmed it on commodity hardware processing 6 million orders per second on one thread. TSE’s arrowhead achieved a 200x latency improvement through in-memory architecture. NSE India is targeting a 1,000x throughput increase driven by architecture, not hardware alone.

The data structure is the bottleneck. It almost always was.

The standard for production matching engine architecture is O(1) price-level access via price-level hash map, zero disk I/O in the matching hot path, and lock-free order processing on the critical path. If your current architecture cannot demonstrate those properties against production order flow, a hardware budget will not move the latency profile. An architectural audit will.

If your current implementation cannot answer those five questions against production order flow, the next hardware budget is premature. If you want to run those questions against your actual stack, that is the conversation that belongs before the procurement approval, not after.

This article was originally shared as a LinkedIn post. View the original post

Never Miss an Update

Get notified when we publish new analysis on HFT, market microstructure, and electronic trading infrastructure. No spam.

Subscribe by EmailHFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.

I help financial institutions architect high-frequency trading systems that are fast, stable, and profitable.

I have operated on both the Buy Side and Sell Side, spanning traditional asset classes and the fragmented, 24/7 world of Digital Assets.

I lead technical teams to optimize low-latency infrastructure and execution quality. I understand the friction between quantitative research and software engineering, and I know how to resolve it.

Core Competencies:

▬ Strategic Architecture: Aligning trading platforms with P&L objectives.

▬ Microstructure Analytics: Founder of VisualHFT; expert in L1/L2/LOB data visualization.

▬ System Governance: Establishing "Zero-Failover" protocols and compliant frameworks for regulated environments.

I am the author of the industry reference "C++ High Performance for Financial Systems".

Today, I advise leadership teams on how to turn their trading technology into a competitive advantage.

Key Expertise:

▬ Electronic Trading Architecture (Equities, FX, Derivatives, Crypto)

▬ Low Latency Strategy & C++ Optimization | .NET & C# ultra low latency environments.

▬ Execution Quality & Microstructure Analytics

If my profile fits what your team is working on, you can connect through the proper channel.