Table of Contents

- What April 11 Actually Changes

- Layer 1 — Processor: CPU vs. FPGA and the Jitter You Never Measured

- Layer 2 — Kernel: The Bypass That Breaks Under Load

- Layer 3 — Clock: PTP on Paper vs. PTP Under Stress

- Layer 4 — OS: The Layer Where Confident Teams Get Caught

- Layer 5 — Protocol: The Last Place You Look, the First Place I Find the Leak

- The Economics: What Infrastructure Tier Divergence Costs on $500M Daily Flow

- April 11 Readiness Checklist: Five Questions for Your Stack

- The Standard That Matters

What April 11 Actually Changes

NSE’s CEO Ashishkumar Chauhan announced on February 21, 2026 that the exchange goes live with its next-generation matching engine on April 11, 2026. The headline numbers: the current engine runs at approximately 100 microseconds and 5–6 million transactions per second, and the stated capacity target for the new engine approaches 100 million TPS. Barring schedule changes, April 11 is NSE’s publicly committed go-live date.

I have been watching exchange benchmarks for over 20 years — CME’s Globex migrations, Nasdaq’s INET transition, the London Stock Exchange’s Millennium rollout. The pattern is consistent: when an exchange moves to a new latency class, fill quality diverges along infrastructure tier lines within weeks. The firms positioned at the new tier capture the improvement. The firms still running the prior-generation stack experience relative degradation even without changing anything on their end. The exchange became faster; they did not.

The Nasdaq INET migration between 2002 and 2005 is the documented analog. Firms at the prior infrastructure tier experienced fill quality degradation in the early INET period relative to firms that had pre-positioned their connectivity and execution stack for the new architecture. The exchange upgrade itself creates the divergence.


NSE’s April 11 upgrade positions the exchange within the latency class of global top-tier venues. The co-location expansion from approximately 2,000 to 4,500 racks — confirmed by Data Center Dynamics and Business Standard reporting — signals that NSE is building the physical infrastructure to support that latency class at institutional scale. Trading Technologies has been empaneled as an NSE connectivity vendor for 2026, which tells you the global connectivity ecosystem is already aligning to the new tier.

The question every CTO at a connected firm should be asking right now is not whether NSE will deliver on the upgrade. The question is whether your stack can operate inside the latency budget that upgrade creates. A faster exchange does not make you faster. Five specific layers in your architecture determine whether you capture the upgrade or observe it from the wrong side of the fill log.

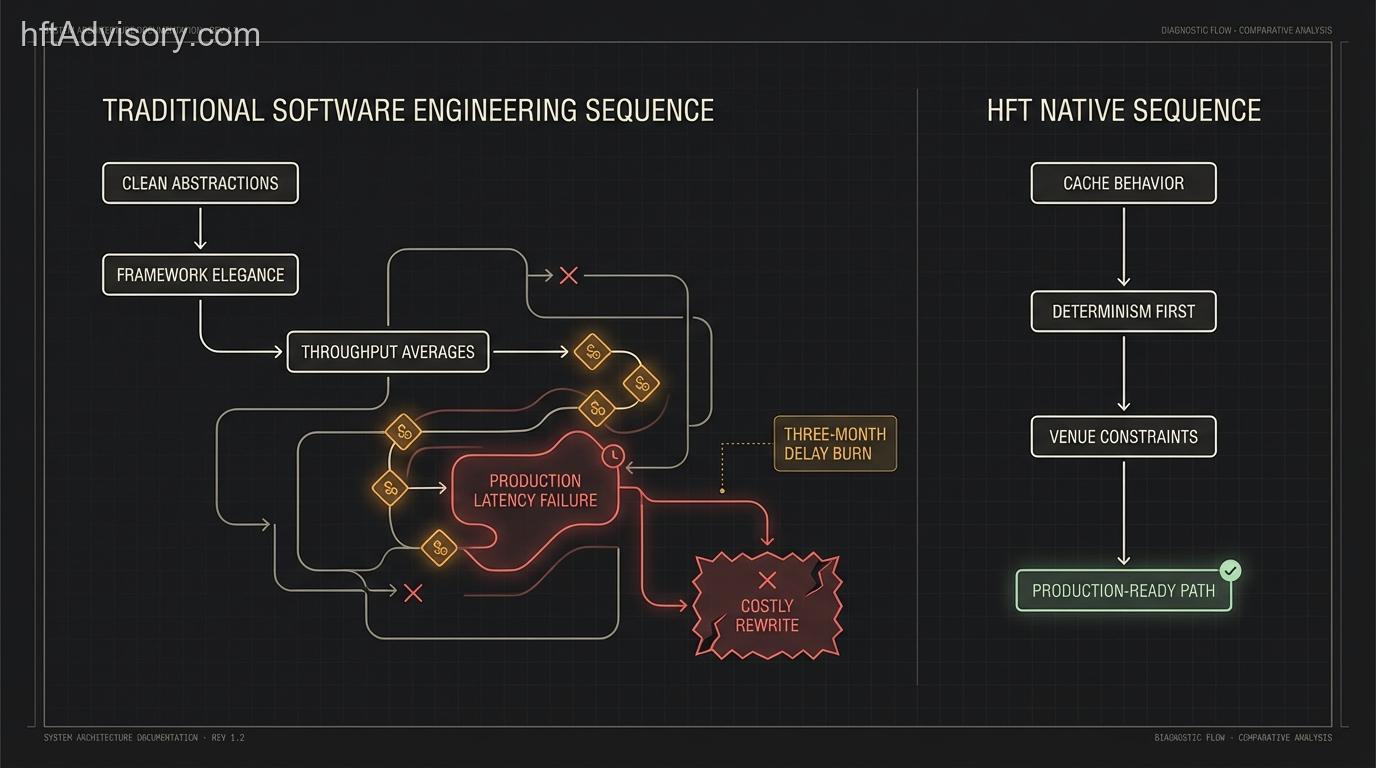

The two stack classes mapped against the five critical layers. Use this as a diagnostic baseline — not a prescription.

Layer 1 — Processor: CPU vs. FPGA and the Jitter You Never Measured

I have seen two firms swap CPU for FPGA and nothing else — same strategy, same venue, same market flow — and that single substitution was the difference between being in the trade and reading about it afterward. No algorithm change. No co-location move. One hardware substitution at the critical path.

The performance differential at this layer is not theoretical. CPU-based systems with kernel bypass run a minimum of 2–3 microseconds tick-to-trade. FPGA-based systems operate in the single-to-double-digit nanosecond range. That is a 100x to 1,000x latency advantage depending on the workload. The AMD Alveo UL3524, benchmarked under STAC-T0 conditions, achieved 13.9 nanoseconds actionable latency with 200 picosecond jitter, a result reported as up to 49 percent faster than the prior benchmark of 24.2 nanoseconds. These are not vendor slides; this is published STAC-T0 data.

The harder question is not “should we move to FPGA?” It is whether your current critical path jitter has been costing you fills you never measured. Jitter on a CPU-based system is not a constant tax; it is an intermittent one. The fills you win confirm the system works. The fills you lose to jitter are invisible because you have no timestamp on the decision you made 800 nanoseconds before the market moved.

One practical constraint worth naming: FPGA development requires Verilog expertise that is genuinely rare and expensive. Deployment cycles are long. The architectures that resolve this most effectively are hybrid FPGA-plus-CPU designs — FPGA on the critical path for market data parsing and order generation, CPU handling everything else. The full FPGA-everywhere approach is achievable but requires the build time and talent pipeline to match.

If your processor layer is still CPU-only, the first diagnostic question is not “can we afford FPGA?” It is: what does your critical path jitter look like under production load, and what did it cost in fills last quarter that were attributed to other causes?
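One way to make that diagnostic concrete is to summarize tick-to-trade samples by their tail rather than their mean. The following is a minimal sketch, assuming you already capture per-order latencies in nanoseconds. The function names, the sample values, and the choice of "p99.9 minus median" as the jitter figure are illustrative assumptions, not a standard definition.

```python
def nearest_rank(sorted_ns, per_mille):
    """Nearest-rank quantile of an ascending list; per_mille is 0..1000."""
    if not sorted_ns:
        raise ValueError("no samples")
    rank = max(1, (per_mille * len(sorted_ns) + 999) // 1000)
    return sorted_ns[rank - 1]


def jitter_profile(latencies_ns):
    """Summarize latency samples; 'jitter' here is p99.9 minus the median."""
    s = sorted(latencies_ns)
    p50 = nearest_rank(s, 500)
    p999 = nearest_rank(s, 999)
    return {
        "p50": p50,
        "p99": nearest_rank(s, 990),
        "p99.9": p999,
        "max": s[-1],
        "jitter": p999 - p50,
    }


# Made-up samples: a steady 2,500 ns path with a handful of intermittent stalls.
samples = [2500] * 995 + [3300] * 4 + [12000]
profile = jitter_profile(samples)
print(profile)
```

In this invented data set the median and p99 are both 2,500 ns, so a dashboard that stops at p99 shows a flat line. The stalls only appear at p99.9 and max, which is where an intermittent tax lives.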

Layer 2 — Kernel: The Bypass That Breaks Under Load

I learned this one the hard way, and I have watched other firms learn it the same way. A kernel-bypassed feed handler that fails over to standard networking under load does not degrade gracefully. It becomes the dominant jitter source overnight, and the degradation is not visible in steady-state monitoring because it only manifests under the microburst conditions that correlate with the most active trading periods — which is exactly when it costs the most.

The Linux kernel networking stack has a measurable ceiling: it cannot reliably handle traffic rates above approximately 1 million packets per second under microburst conditions. Kernel bypass eliminates 20–50 microseconds of kernel overhead per packet. That overhead is not evenly distributed across time — it spikes under load. A feed handler that bypasses the kernel in steady state but falls back to the kernel stack under peak conditions creates a latency profile that looks acceptable in testing and fails in production at the worst moments.
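A per-second packet counter will not show this, because a microburst can exceed the ceiling for a fraction of a millisecond while the average stays low. Below is a rough sketch of a sliding-window peak-rate check over captured packet timestamps. The 100 microsecond window, the function names, and the trace itself are assumptions chosen for illustration.

```python
from collections import deque


def peak_rate_pps(timestamps_ns, window_ns=100_000):
    """Highest packet rate seen in any sliding window, scaled to packets/second."""
    window = deque()
    peak = 0
    for ts in sorted(timestamps_ns):
        window.append(ts)
        # Drop packets that fall outside the window ending at ts.
        while ts - window[0] >= window_ns:
            window.popleft()
        peak = max(peak, len(window))
    return peak * 1_000_000_000 // window_ns


def average_rate_pps(timestamps_ns, span_ns):
    """Plain average rate over the whole capture span."""
    return len(timestamps_ns) * 1_000_000_000 // span_ns


# Made-up 1 ms trace: one packet every 10 us, plus a 300-packet burst at 200 ns spacing.
baseline = list(range(0, 1_000_000, 10_000))
burst = [500_001 + 200 * k for k in range(300)]
trace = baseline + burst

print(average_rate_pps(trace, 1_000_000))  # whole-trace average
print(peak_rate_pps(trace))                # peak over 100 us windows
```

On this synthetic trace the average works out to 400,000 packets per second, comfortably under the roughly 1 million figure above, while the windowed peak is 3,100,000. A test harness that only replays the average never exercises the fallback path.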

Most desks I have worked with assumed this was a solved layer once kernel bypass was deployed. The ones that tested failover behavior under production-representative load — genuine microburst stress, not synthetic steady-state tests — discovered that their bypass implementation had edge cases they had never exercised. Some found race conditions in their failover logic. Some found that their monitoring did not distinguish between bypass and non-bypass mode at runtime.

The diagnostic question at this layer is not “do we have kernel bypass?” It is: what is the failover behavior under load, and has that been confirmed under conditions that represent actual market open volatility? If the answer to the second part is “we have not tested that specifically,” there is a layer two risk that will not appear in normal testing coverage.

Layer 3 — Clock: PTP on Paper vs. PTP Under Stress

I have seen a desk with correct PTP configuration on paper fail live because the grandmaster was never stress-tested during market open volatility. Everything looked correct in the documentation. The IEEE 1588v2 configuration was textbook. The grandmaster was GPS-linked. The switches were IEEE-1588 compliant with hardware timestamping. On a quiet afternoon, accuracy was well within the sub-100 nanosecond requirement.

At market open, with the full burst load hitting the network fabric, the grandmaster could not maintain stability. The jitter on the timing signal climbed outside the sub-microsecond bound that the trading system depended on. The result was timestamping inconsistencies that contaminated the sequencing logic in the feed handler. Not catastrophic — subtle. Subtle timing errors in a feed handler are the hardest class of problem to diagnose because the symptom is execution quality degradation that looks like market microstructure noise.

The requirements for sub-100 nanosecond PTP accuracy are not optional at the nanosecond tier: GPS-linked grandmaster, IEEE-1588 compliant switches operating in transparent or boundary clock mode, hardware timestamping at the slave. Software-only PTP cannot reliably achieve sub-microsecond accuracy under load. This is not a configuration question; it is a hardware procurement question.

The diagnostic question here is not whether PTP is deployed. It is whether you have proven it holds under your worst-case load condition — specifically, burst load at market open on a high-volatility day. If PTP validation testing was done at steady-state, the test did not cover the scenario where timing errors actually matter.
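A simple way to frame that validation is to split clock-offset samples by load phase and compare the worst case in each phase against the bound. This is a sketch only: it assumes you can export offset-from-grandmaster samples in nanoseconds from your PTP tooling and label them by phase. The phase labels, sample values, and helper names are hypothetical, and the 100 ns bound is taken from the requirement discussed above.

```python
def worst_offset_by_phase(samples):
    """samples: iterable of (phase, offset_ns). Returns {phase: max |offset_ns|}."""
    worst = {}
    for phase, offset_ns in samples:
        worst[phase] = max(worst.get(phase, 0), abs(offset_ns))
    return worst


def holds_under_load(samples, bound_ns=100):
    """True per phase only if every sample in that phase stayed within the bound."""
    return {
        phase: worst <= bound_ns
        for phase, worst in worst_offset_by_phase(samples).items()
    }


# Made-up offsets: a quiet afternoon versus the opening burst.
samples = [
    ("quiet", 12), ("quiet", -8), ("quiet", 20), ("quiet", -15),
    ("open_burst", 35), ("open_burst", -90), ("open_burst", 240), ("open_burst", -410),
]

print(worst_offset_by_phase(samples))
print(holds_under_load(samples))
```

The point of keeping the phases separate is that a blended average over both would look acceptable, which is the "correct on paper" failure described above.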

Layer 4 — OS: The Layer Where Confident Teams Get Caught

Source: Rigtorp Low Latency Guide

In every infrastructure review I have conducted, the OS layer is where the most technically capable teams get caught. Not because they are unaware of OS tuning — most senior infrastructure engineers know the techniques. Because they are confident the layer is handled, and confidence at layer four is where latency budget disappears.

The numbers from Erik Rigtorp’s low-latency guide are worth having in front of you: an isolated CPU core configured with isolcpus, nohz_full, and rcu_nocbs produces a maximum observed jitter of 18 microseconds. A non-isolated core on the same hardware produces 9.5–17 milliseconds of jitter — a 500x to 1,000x differential. Timer interrupts: 3–4 per 30 seconds on an isolated core versus 31,204 on a non-isolated core. Same hardware. Different OS configuration.
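The timer-interrupt comparison is straightforward to reproduce on your own host by diffing the `LOC` (local timer interrupts) row of `/proc/interrupts` over an interval. The sketch below assumes the usual Linux layout of that file, with one counter column per CPU. The snapshot values are invented to echo the figures quoted above, and `measure_live` is a hypothetical helper that is defined but not called here.

```python
import time


def local_timer_counts(proc_interrupts_text):
    """Return the per-CPU 'LOC' counters from the text of /proc/interrupts."""
    for line in proc_interrupts_text.splitlines():
        fields = line.split()
        if fields and fields[0] == "LOC:":
            # Numeric fields are per-CPU counts; the trailing words are the description.
            return [int(f) for f in fields[1:] if f.isdigit()]
    raise ValueError("no LOC line found")


def timer_ticks_delta(before_text, after_text):
    """Per-CPU timer interrupts that fired between two snapshots."""
    before = local_timer_counts(before_text)
    after = local_timer_counts(after_text)
    return [a - b for a, b in zip(after, before)]


def measure_live(seconds=30):
    """Hypothetical helper: snapshot the running Linux host twice and diff."""
    with open("/proc/interrupts") as fh:
        before = fh.read()
    time.sleep(seconds)
    with open("/proc/interrupts") as fh:
        after = fh.read()
    return timer_ticks_delta(before, after)


# Made-up snapshots: CPU0 is a housekeeping core, CPU1 is isolated.
before = "           CPU0       CPU1\n LOC:    1000000        500   Local timer interrupts\n"
after = "           CPU0       CPU1\n LOC:    1031204        504   Local timer interrupts\n"
print(timer_ticks_delta(before, after))
```

If the column for a core you believe is isolated moves like the housekeeping core, the isolation is not in effect, whatever the runbook says.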

I have seen a fully capable FPGA deployment run at microsecond-class latency because the host OS was never tuned. The FPGA was doing its job. The OS on the host feeding the FPGA was generating interrupt storms that contaminated the critical path. The hardware was right. The configuration was wrong. Six weeks of FPGA deployment work was being taxed by an untuned kernel.

The relevant OS tuning techniques — isolcpus, nohz_full, IRQ affinity pinning, NUMA balancing disable, swap disabled, Transparent Huge Pages disabled — are documented in the Red Hat Real-Time kernel documentation and the Rigtorp guide. The gap I consistently find is not that teams have never heard of these. It is that someone applied them during the initial deployment, on a different kernel version, and nobody validated they were still active after the last OS update.

When was the last time OS tuning parameters were validated on the running system — not in the runbook, but confirmed active via direct inspection?
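Direct inspection can be as small as reading a few kernel interfaces and comparing them with what the runbook claims. The following is a minimal sketch, assuming a Linux host where `/proc/cmdline`, `/sys/kernel/mm/transparent_hugepage/enabled`, `/proc/swaps`, and `/proc/sys/kernel/numa_balancing` are present; some of these depend on kernel configuration. The function names are illustrative, and IRQ affinity is deliberately left out because it needs per-IRQ inspection.

```python
def parse_cmdline(cmdline_text):
    """Split a kernel command line into {key: value, or True for bare flags}."""
    params = {}
    for token in cmdline_text.split():
        key, sep, value = token.partition("=")
        params[key] = value if sep else True
    return params


def thp_mode(thp_text):
    """The active THP mode is the bracketed word, e.g. 'always madvise [never]'."""
    start, end = thp_text.index("["), thp_text.index("]")
    return thp_text[start + 1:end]


def swap_active(proc_swaps_text):
    """/proc/swaps has one header line; any further line is an active swap area."""
    lines = [ln for ln in proc_swaps_text.splitlines() if ln.strip()]
    return len(lines) > 1


def audit(cmdline_text, thp_text, swaps_text, numa_balancing_text):
    """Report which of the tuning items are actually in effect."""
    params = parse_cmdline(cmdline_text)
    return {
        "isolcpus": "isolcpus" in params,
        "nohz_full": "nohz_full" in params,
        "rcu_nocbs": "rcu_nocbs" in params,
        "thp_disabled": thp_mode(thp_text) == "never",
        "swap_disabled": not swap_active(swaps_text),
        "numa_balancing_disabled": numa_balancing_text.strip() == "0",
    }


def audit_running_system():
    """Read the live values; call this on the trading host itself."""
    def read(path):
        with open(path) as fh:
            return fh.read()
    return audit(
        read("/proc/cmdline"),
        read("/sys/kernel/mm/transparent_hugepage/enabled"),
        read("/proc/swaps"),
        read("/proc/sys/kernel/numa_balancing"),
    )


# Made-up example of drift: nohz_full was lost and THP went back to 'always'.
drifted = audit(
    "BOOT_IMAGE=/vmlinuz root=/dev/sda1 isolcpus=2-7 rcu_nocbs=2-7",
    "[always] madvise never\n",
    "Filename\tType\tSize\tUsed\tPriority\n",
    "0\n",
)
print(drifted)
```

Running `audit_running_system()` after every kernel or OS update, and alerting on any `False`, is one way to turn a runbook claim into a checked fact.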

Layer 5 — Protocol: The Last Place You Look, the First Place I Find the Leak

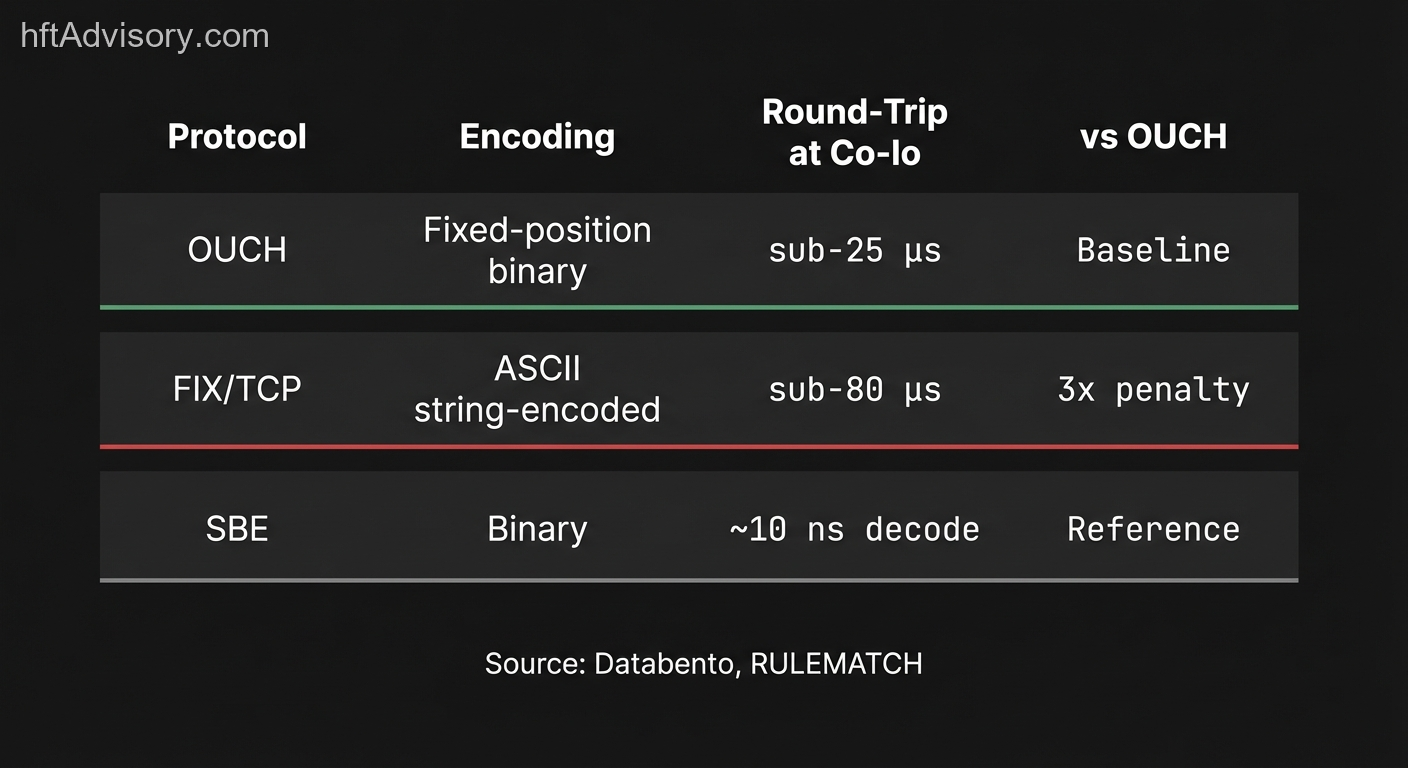

Source: Databento, RULEMATCH

Two desks I reviewed last year had FPGA-based exchange connectivity at layer one. They had kernel bypass at layer two. Their clocks were correctly configured. Their OS was tuned. And they were still running FIX over TCP for order entry.

The protocol layer was consuming more round-trip budget than the entire exchange matching engine. They had invested significantly in the bottom four layers and left the top layer untouched because “FIX works” — and because changing the order entry protocol means re-certifying with the exchange and updating the risk system integration. Organizational friction, not technical friction.

The performance differential is concrete: OUCH protocol at co-location achieves sub-25 microsecond round-trip. FIX at the same venue runs sub-80 microseconds — a 3x latency penalty from protocol selection alone. The reason is structural: OUCH fields are fixed-position native binary numbers. FIX is ASCII string-encoded with variable field lengths that require string tokenization at both encode and decode. Simple Binary Encoding processes typical messages in tens of nanoseconds. FIX ASCII string tokenization cannot match that throughput class regardless of how well the rest of the stack performs.
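The structural difference is easier to see side by side. The sketch below encodes the same order twice: once as a fixed-layout binary record, once as FIX-style tag=value text. The binary layout is hypothetical and is not the real OUCH message format. The FIX side is body fields only (tags 55, 54, 38, 44), with the header, BodyLength, and CheckSum omitted. Python is used to show the shape of the work, not the timings.

```python
import struct

# Hypothetical fixed layout (NOT the OUCH spec): 1-byte type, 8-byte symbol,
# 1-byte side, 4-byte quantity, 8-byte price in units of 1e-4. Big-endian, no padding.
ORDER = struct.Struct(">c8scIq")
SOH = "\x01"  # FIX field delimiter


def encode_binary(symbol, side, qty, price_e4):
    return ORDER.pack(b"O", symbol.encode().ljust(8), side.encode(), qty, price_e4)


def decode_binary(buf):
    # Every field sits at a known offset, so one unpack call recovers all of them.
    _type, symbol, side, qty, price_e4 = ORDER.unpack(buf)
    return symbol.rstrip().decode(), side.decode(), qty, price_e4


def encode_fix_body(symbol, side, qty, price_e4):
    fix_side = "1" if side == "B" else "2"
    price = f"{price_e4 // 10000}.{price_e4 % 10000:04d}"
    return SOH.join([f"55={symbol}", f"54={fix_side}", f"38={qty}", f"44={price}"]) + SOH


def decode_fix_body(text):
    # Variable-length fields: split on the delimiter, then parse each number from text.
    fields = dict(pair.split("=", 1) for pair in text.split(SOH) if pair)
    whole, _, frac = fields["44"].partition(".")
    price_e4 = int(whole) * 10000 + int(frac.ljust(4, "0")[:4])
    side = "B" if fields["54"] == "1" else "S"
    return fields["55"], side, int(fields["38"]), price_e4


order = ("INFY", "B", 100, 15_000_000)  # made-up order: 100 shares at 1500.0000
binary_msg = encode_binary(*order)
fix_msg = encode_fix_body(*order)
print(len(binary_msg), decode_binary(binary_msg))
print(len(fix_msg), decode_fix_body(fix_msg))
```

Both paths round-trip to the same order. The difference is that the binary decoder never searches for a delimiter or converts digits from text, which is the work the FIX path has to do on every message.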

The protocol layer is the last place most teams audit and the first place I find the budget leak when reviewing a co-location stack. If the round-trip budget at the new NSE tier targets sub-100 microseconds and the protocol alone consumes 80 microseconds of that budget, every other optimization operates in the remaining 20. The question is whether 20 microseconds of headroom is enough to be competitive, and what the cost of the recertification work is relative to the fill quality improvement. I have found that the teams most resistant to examining this layer are those where FIX is deeply embedded in the risk system. The protocol change is real work. But “real work” is a different calculation when the alternative is leaving 3x latency on the table at a venue operating at nanosecond class.

The Economics: What Infrastructure Tier Divergence Costs on $500M Daily Flow

The practitioner observation I have carried across every co-location upgrade is that the fill quality gap between infrastructure tiers runs 2 to 4 basis points per trade. To be direct: this is first-person observation, not a figure from academic literature.

What is published: BIS Working Paper 1115 (2023) measured HFT latency arbitrage in dark pools at 1.33 to 2.36 basis points on large institutional flows. The 2–4 basis points I have observed in co-location upgrade scenarios is consistent with the upper bound of that range and extends above it — which makes sense given that co-location tier divergence is a more direct infrastructure comparison than the cross-venue latency arbitrage the BIS paper measured.

At 2 basis points per trade on $500M daily flow, execution quality degradation adds up to approximately $25M annually: invisible on any single ticket, measurable in aggregate across a full year of flow. The calculation: 0.0002 × $500,000,000 × 252 trading days = $25,200,000. This is the annual cost of being at the wrong infrastructure tier, expressed as foregone fill quality rather than explicit fees.
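To rerun that arithmetic against your own flow, a small helper is enough. This is a sketch with an assumed function name. It takes the gap in basis points and defaults to 252 trading days, and it keeps the multiplication in integers before dividing so the result is exact.

```python
def annual_fill_cost(daily_flow_usd, gap_bps, trading_days=252):
    """Annual cost of a fill-quality gap; 1 basis point = 1/10,000 of notional."""
    return daily_flow_usd * gap_bps * trading_days / 10_000


low = annual_fill_cost(500_000_000, 2)   # lower end of the observed 2 to 4 bps range
high = annual_fill_cost(500_000_000, 4)  # upper end of the same range
print(f"${low:,.0f} to ${high:,.0f} per year")
```

At the 2 basis point end this reproduces the $25,200,000 figure above; at 4 basis points the same flow gives $50,400,000.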

The April 11 upgrade creates a bifurcation point comparable to the Nasdaq INET migration, where firms at the prior infrastructure tier experienced observable fill degradation in the early INET period relative to early adopters. The gap is not sudden; it accumulates invisibly across thousands of executions. On any single day it looks like normal variance. Across a quarter, it is measurable in the P&L attribution that nobody wants to write up.

April 11 Readiness Checklist: Five Questions for Your Stack

One question per layer. Not a configuration manual — a readiness audit framework. Walk through it with your infrastructure lead before April 11.

Layer 1 — Processor: Has your critical path jitter been measured under production-representative load in the last 90 days? Not synthetic test load, but actual market-open burst conditions. Jitter profiles change with software updates, co-location rack changes, and exchange feed format modifications. If the last measurement was at deployment, it is not a current reading.

Layer 2 — Kernel: What is your failover behavior when the kernel bypass path is unavailable, and has that been tested under microburst load, meaning the spike profile at market open on a high-volatility day? If your monitoring does not distinguish between bypass and non-bypass mode at runtime, you cannot confirm which path you are on during peak periods.

Layer 3 — Clock: Has your PTP grandmaster been stress-tested under peak traffic load? Not configured and validated at rest, but tested under the burst conditions that represent worst-case market open. If the timing validation was done at steady-state, the test did not cover the scenario where timing errors actually matter.

Layer 4 — OS: When were your OS tuning parameters last validated on the running system? Not reviewed in a runbook, but confirmed active via direct inspection. OS updates, kernel patches, and configuration drift can silently undo tuning that was correctly applied at deployment. This is the layer I most consistently find diverged from its documented state.

Layer 5 — Protocol: What is your current order entry protocol at NSE co-location, and what is the measured round-trip latency at that layer? If you are running FIX over TCP and have not measured it against OUCH in the last 12 months, you are operating with an unquantified protocol tax. The recertification work to move to OUCH is a known cost. The fill quality delta over a year of $500M daily flow is also now a known cost.

The Standard That Matters

NSE’s April 11 upgrade moves the exchange within the latency class of global top-tier venues. The standard at that tier is end-to-end stack validation across all five layers — processor, kernel, clock, OS, and protocol — under production-representative load conditions, not test conditions.

If your architecture cannot guarantee nanosecond-class jitter on the critical path, sub-microsecond timing accuracy under burst load, and protocol round-trips inside 25 microseconds at co-location, you are not operating at the tier the exchange is now capable of supporting. The exchange going faster does not move your stack. It moves the standard.

What layer is your current architecture optimized for — and which layer has not been validated since deployment?

Originally shared as a LinkedIn post on February 26, 2026.

Related Infrastructure Deep-Dives

The NSE upgrade touches every layer of the trading stack. These articles provide detailed technical guidance for each area:

- Understanding Trading Latencies — how to measure, benchmark, and optimize latency across your full execution path.

- Best Practices for HFT Low-Latency Software — kernel bypass, memory management, and OS tuning techniques for production systems.

- HFT System Architecture Design (Part II) — component-level design patterns for building high-frequency trading infrastructure.

- Why You Need an FX Aggregator — aggregation layer architecture for multi-venue execution quality optimization.

- The Top 17 Trading Metrics — measuring execution quality, fill rates, and system performance in production.

HFT Systems Architect & Consultant | 20+ years architecting high-frequency trading systems. Author of "Trading Systems Performance Unleashed" (Packt, 2024). Creator of VisualHFT.

I help financial institutions architect high-frequency trading systems that are fast, stable, and profitable.
